Statistics

for

geoscientists

Pieter

Vermeesch

Department

of

Earth

Sciences

University

College

London

p.vermeesch@ucl.ac.uk

______________

According to the Oxford dictionary of English, the definition of ‘statistics’ is:

The practice or science of collecting and analysing numerical data in large quantities, especially for the purpose of inferring proportions in a whole from those in a representative sample.

The words ‘inferring’ and ‘sample’ are written in bold face because they are really central to the practice and purpose of Science in general, and Geology as a whole. For example:

Thus, pretty much everything that we do as Earth Scientists involves statistics in one way or another. We need statistics to plot, summarise, average, interpolate and extrapolate data; to quantify the precision of quantitative inferences; to fit analytical models to our measurements; to classify data and to extract patterns from it.

The field of statistics is as vast as it is deep and it is simply impossible to cover all its aspects in a single text. These notes are meant as a basic statistical ‘survival guide’ for geoscientists, which may be used as a starting point for further study. There are dozens of statistics textbooks that could be used for this purpose. Two books that I have consulted whilst writing these notes are “Mathematical Statistics and Data Analysis” by John A. Rice (Duxbury Press), which provides an in-depth introduction to general statistics; and “Statistics and Data Analysis in Geology” by John C. Davis (Wiley), which presents an excellent and very detailed introduction to geoscience-specific statistics.

Important though a basic understanding of statistics may be, it is only when this understanding is combined with some programming skills that it becomes truly useful. These notes take a hands-on approach, using a popular statistical programming language called R. To make life easier and lower the barrier to using the statistical methods described herein, a large number of datasets and predefined R functions have been bundled in an accompanying R-package called geostats. To demonstrate the versatility of R, all the 189 figures in these notes were made with R, without involving any other graphics software. Most of these figures can be reproduced using the geostats package.

These notes begin by introducing the basic principles of general statistics, before moving on to ‘geological’ data. Their main purpose is to instill a critical attitude in the student, and to raise an awareness of the many pitfalls of blindly applying statistical ‘black boxes’ to geological problems. For example, Chapters 2 and 3 introduce basic notions of exploratory data analysis using plots and summary statistics. Right from the start they will show that geological data, such as sedimentary clast sizes or reservoir porosity measurements, cannot readily be analysed using these techniques without some simple pre-treatment.

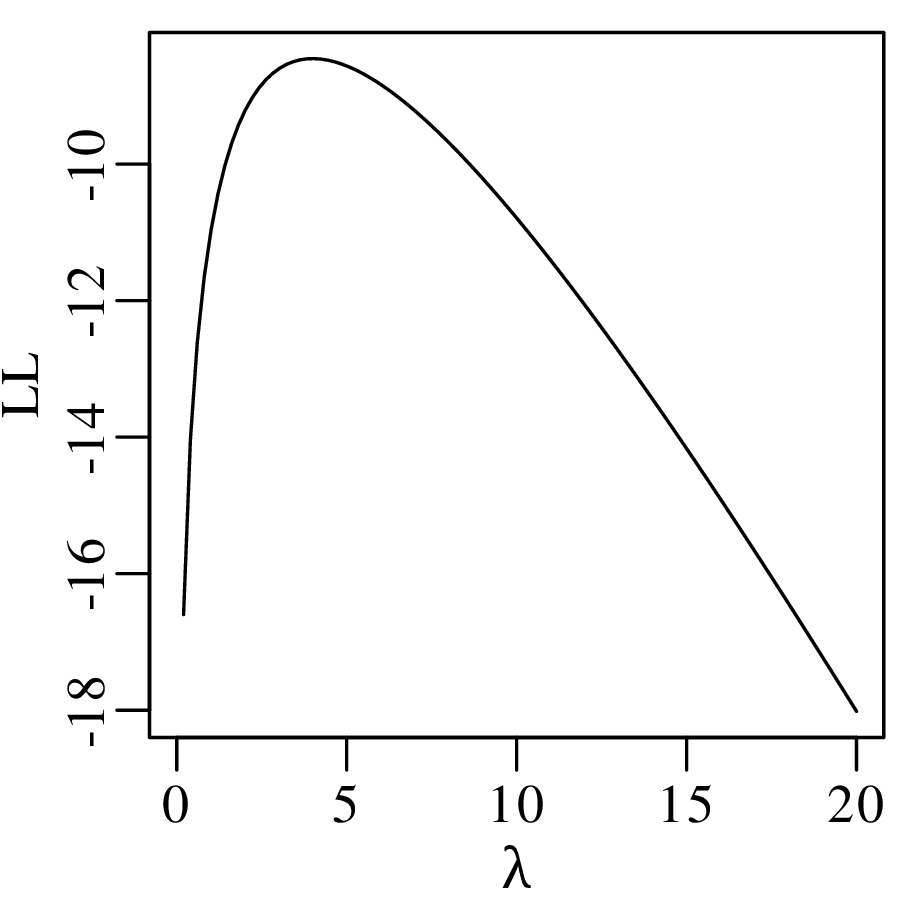

Chapters 4, 5 and 6 introduce the basic principles of probability theory, and uses these to derive two classical examples of discrete probability distributions, namely the binomial and Poisson distributions. These two distributions are used as a vehicle to introduce the important concepts of parameter estimation, hypothesis tests and confidence intervals. Here the text goes into considerably more detail than it does elsewhere. In a departure from many other introductory texts, Section 5.1 briefly introduces the method of maximum likelihood. This topic is revisited again in Sections 6.2, 7.4, 8.4 and 10.3. Even though few geoscientists may use maximum likelihood theory themselves, it is nevertheless useful that they are aware of its main principles, because these principles underlie many common statistical tools.

Chapter 7 discusses the normal or Gaussian distribution. It begins with an empirical demonstration of the central limit theorem in one and two dimensions. This demonstration serves as an explanation of “what is so normal about the Gaussian distribution?”. This is an important question because much of the remainder of the notes (including Chapters 11, 14 and 15) point out that many geological datasets are not normal. Ignoring this non-normality can lead to biased and sometimes nonsensical results.

Scientists use measurements to make quantitative inferences about the world. Random sampling fluctuations can have a significant effect on these inferences, and it is important that these are evaluated before drawing any scientific conclusions. In fact, it may be argued that estimating the precision of a quantitative inference is as important as the inference itself. Chapter 8 uses basic calculus to derive some generic error propagation formulas. However, these formulas are useful regardless of whether one is able to derive them or not. The error propagation chapter points out a strong dependency of statistical precision on sample size.

This sample size dependency is further explored in Chapter 9, which introduces a number of statistical tests to compare one dataset with a theoretical distribution, or to compare two datasets with each other. Useful though these tests are in principle, in practice their outcome should be treated with caution. Here we revisit an important caveat that is also discussed in Section 5.5, namely that the power of statistical tests to detect even the tiniest violation of a null hypothesis, monotonically increases with sample size. This is the reason why statistical hypothesis tests have come under increasing scrutiny in recent years. Chapter 9 advocates that, rather than testing whether a hypothesis is true or false, it is much more productive to quantify ‘how false’ said hypothesis is.

Chapter 10 introduces linear regression using the method of least squares. But whereas most introductory statistics texts only use least squares, these notes also demonstrate the mathematical equivalence of least squares with the method of maximum likelihood using normal residuals. The advantage of this approach is that it facilitates the derivation of confidence intervals and prediction intervals for linear fits, using the error propagation methods of Chapter 8. The method of maximum likelihood also provides a natural route towards more advanced weighted regression algorithms that account for correlated uncertainties in both variables (Section 10.5).

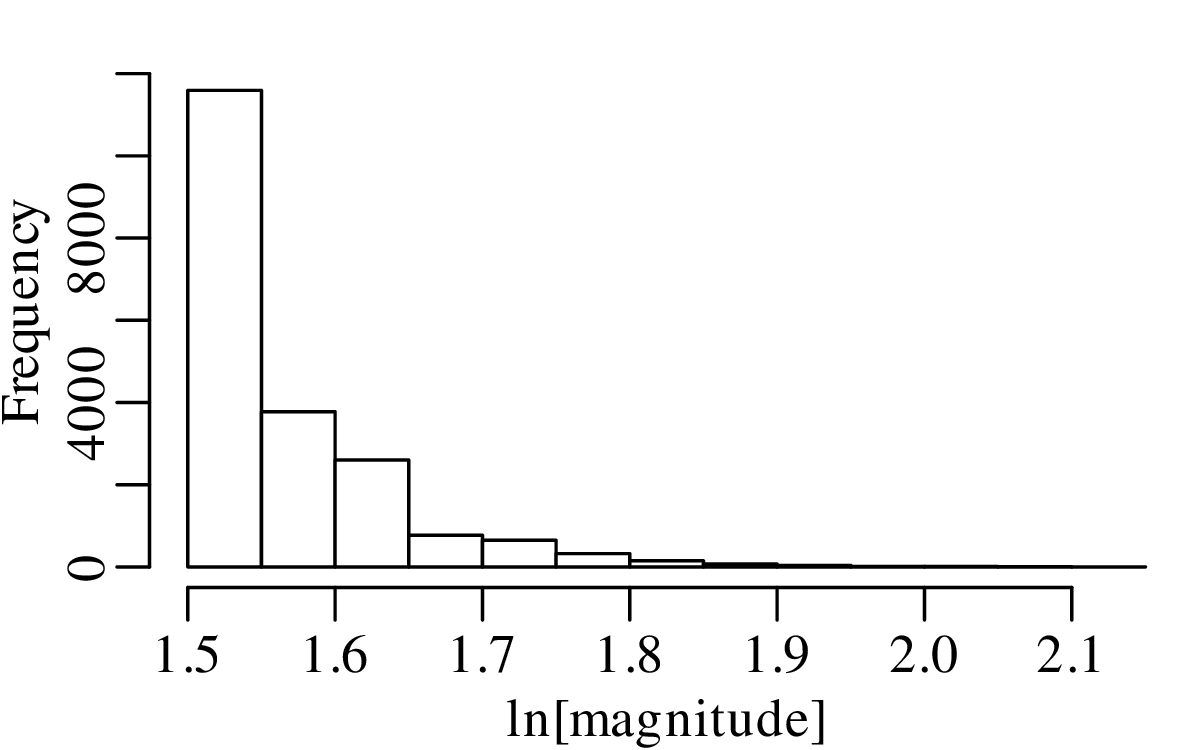

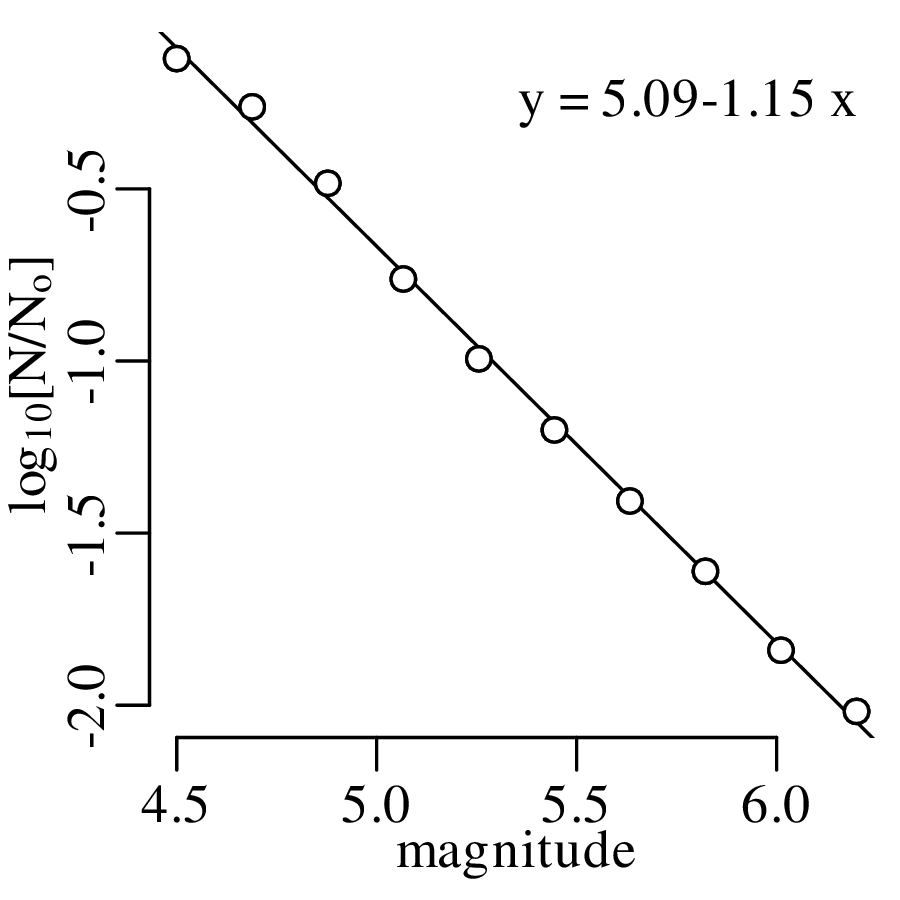

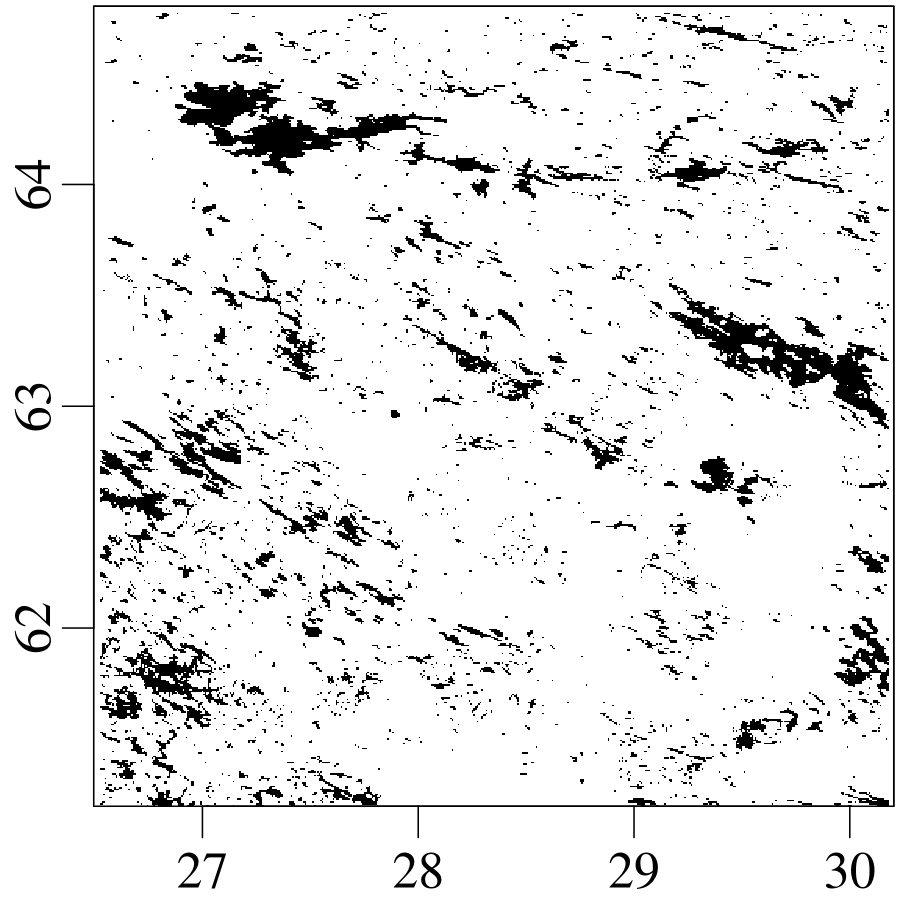

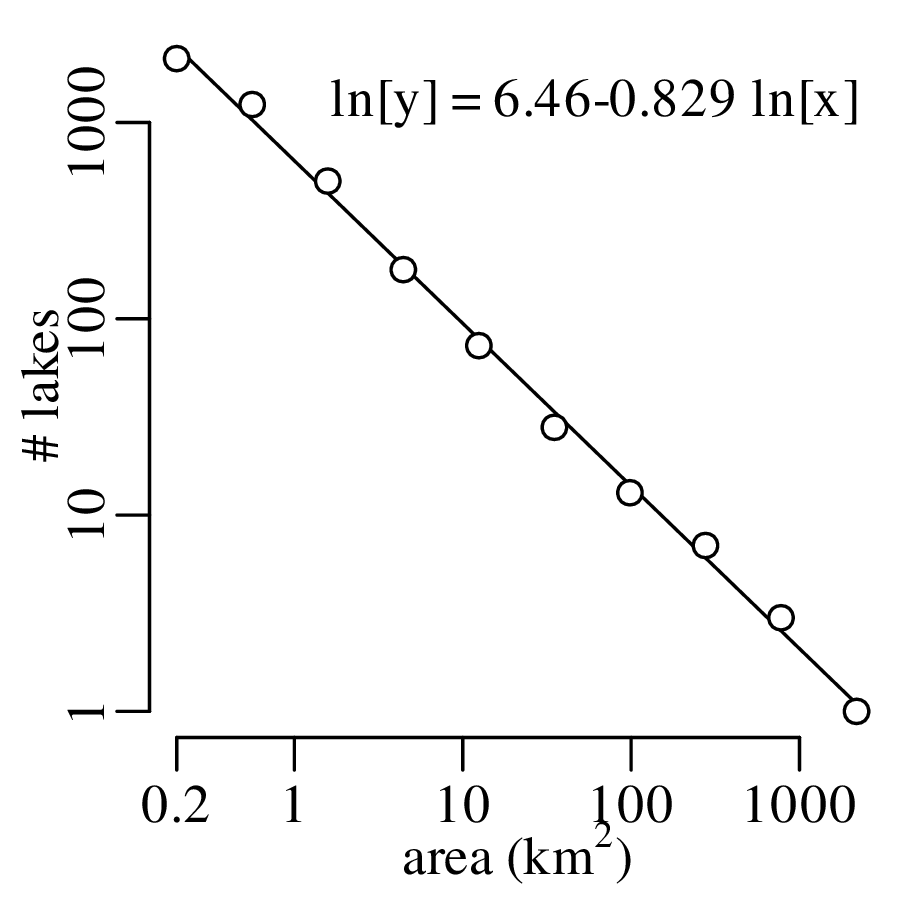

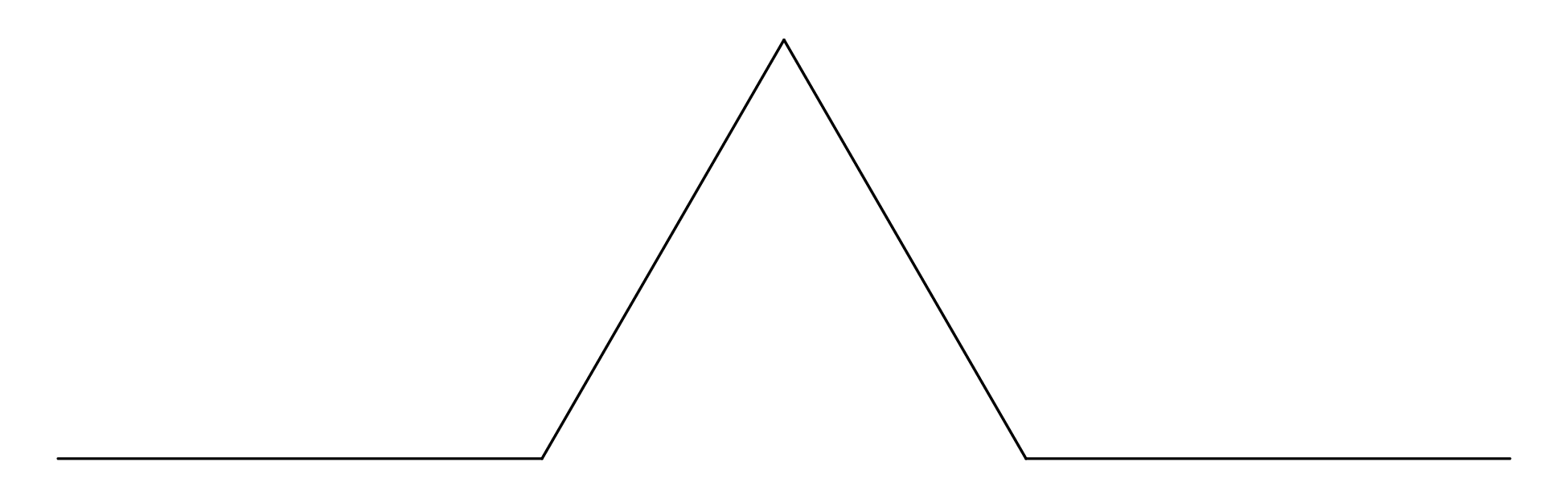

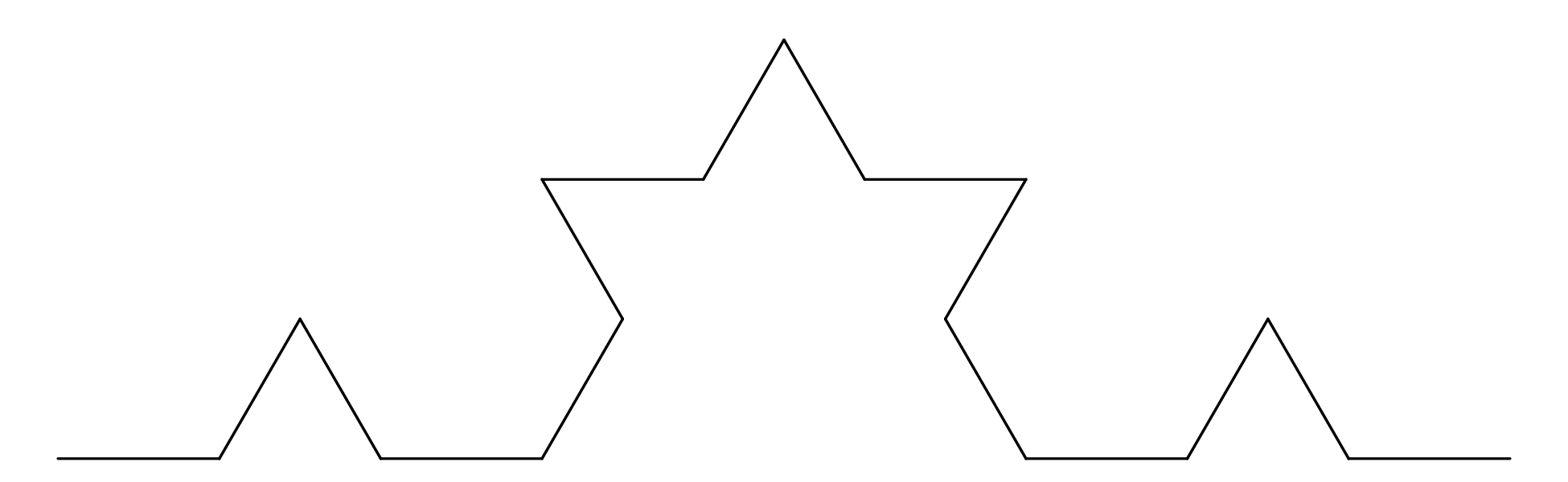

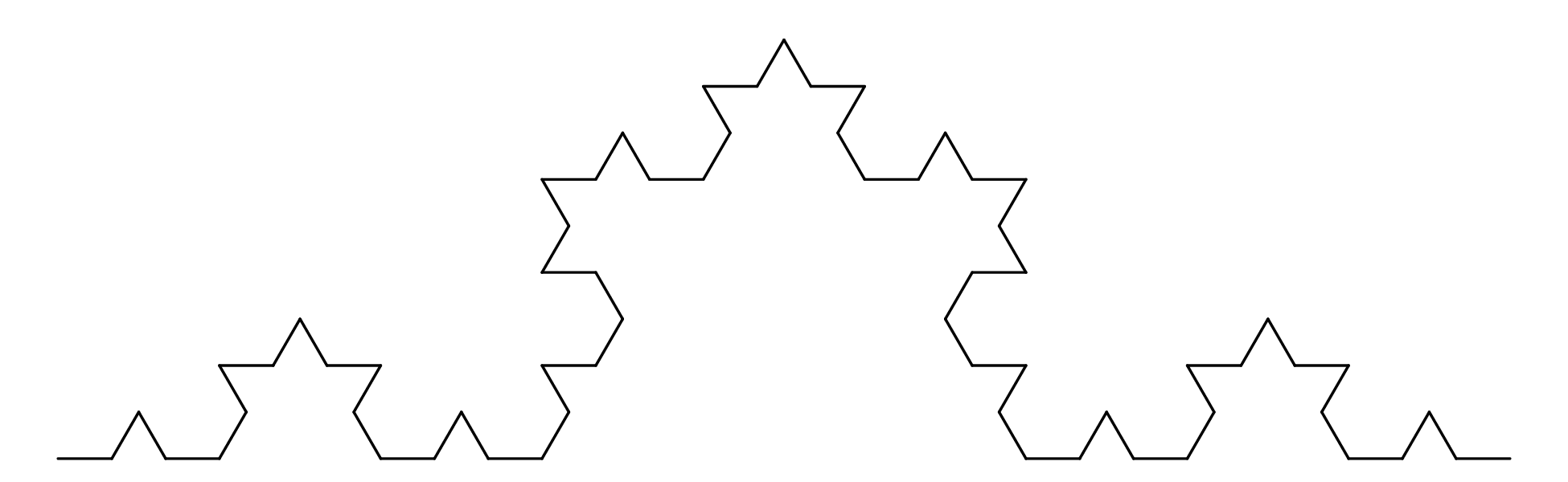

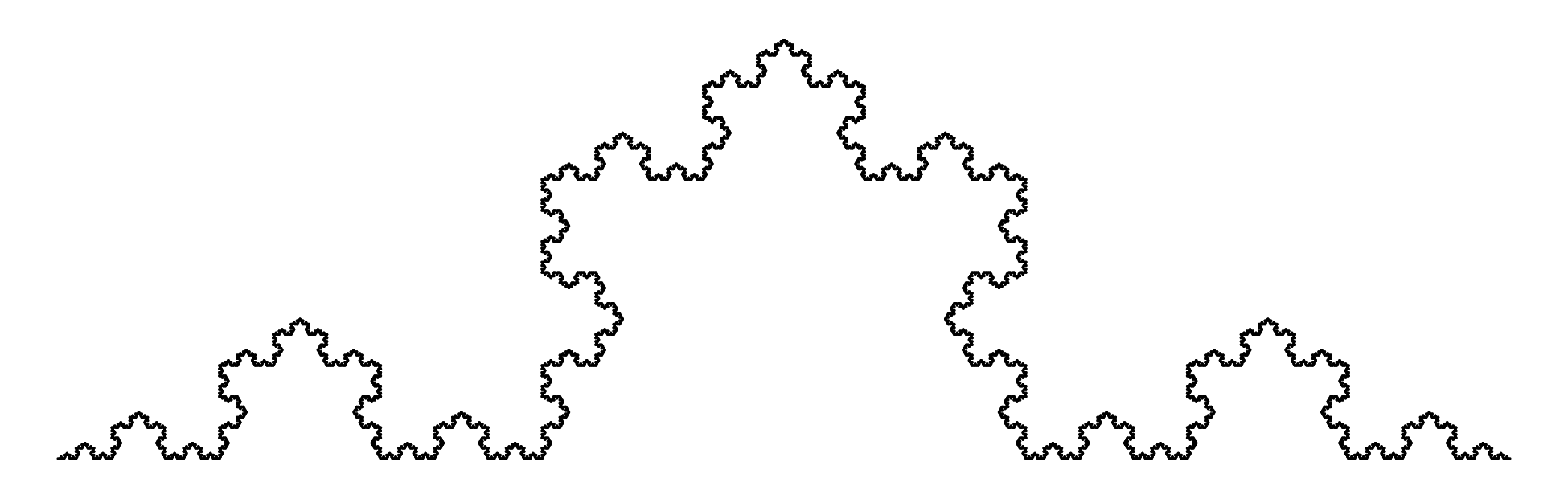

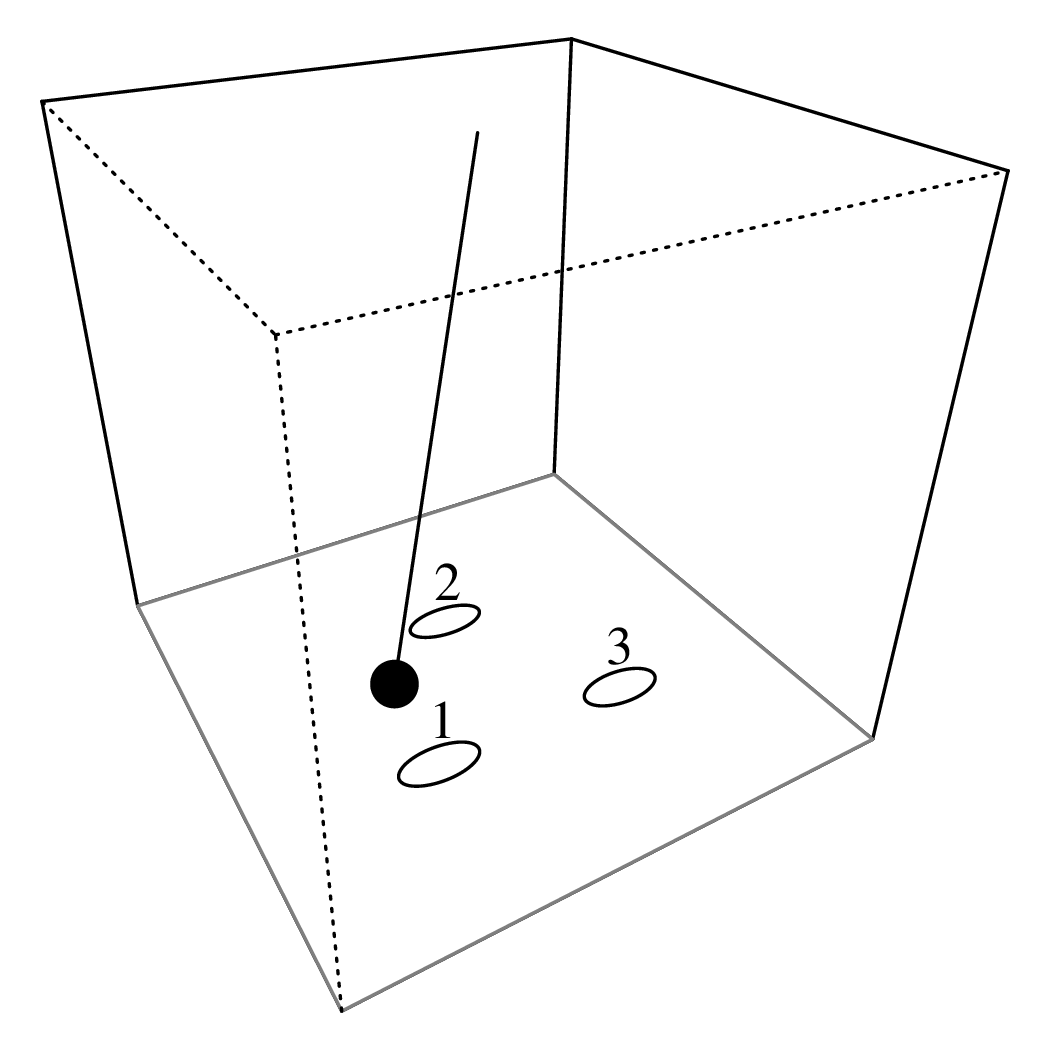

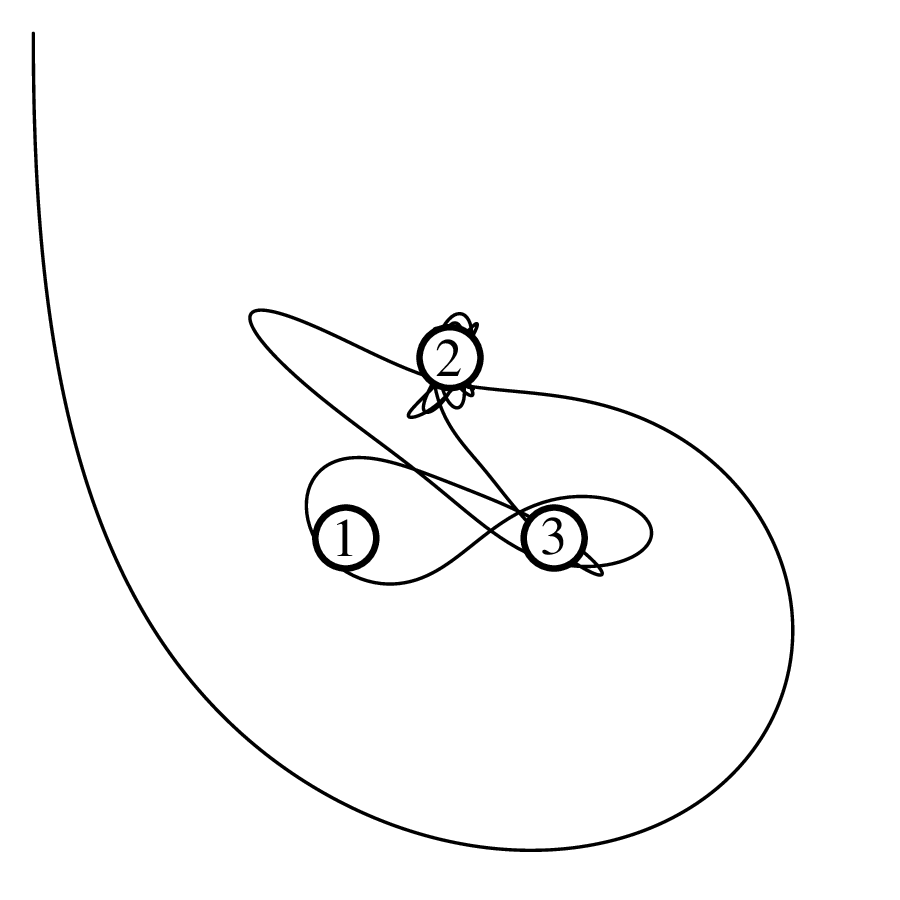

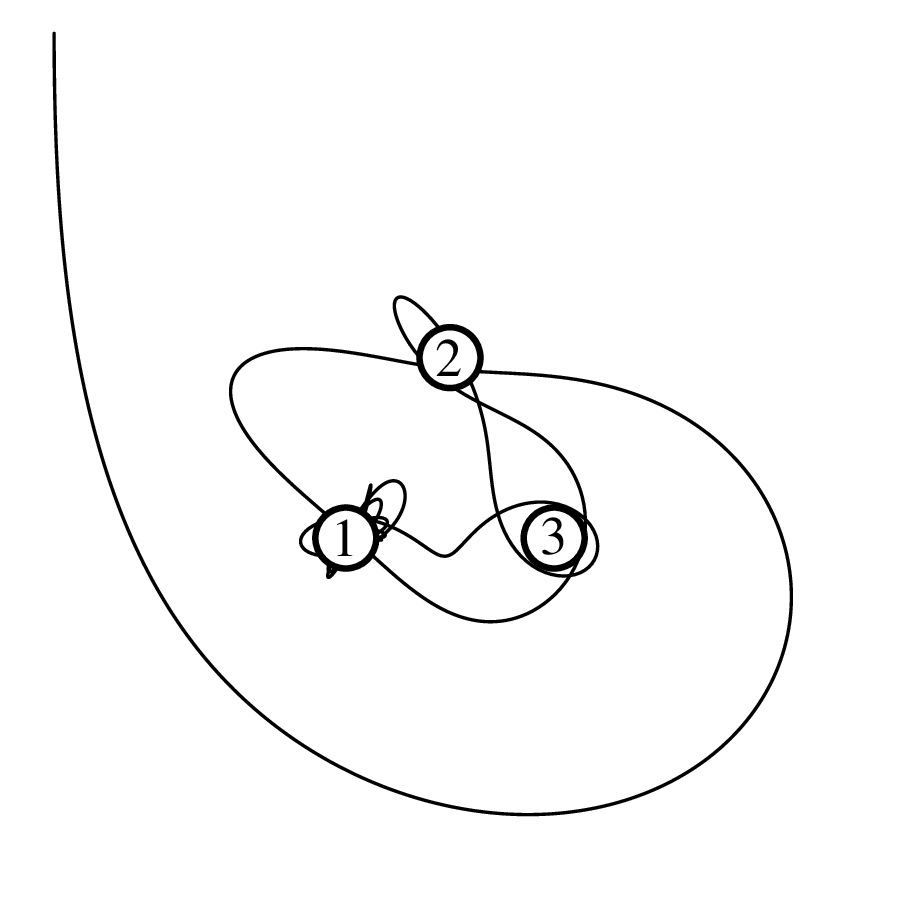

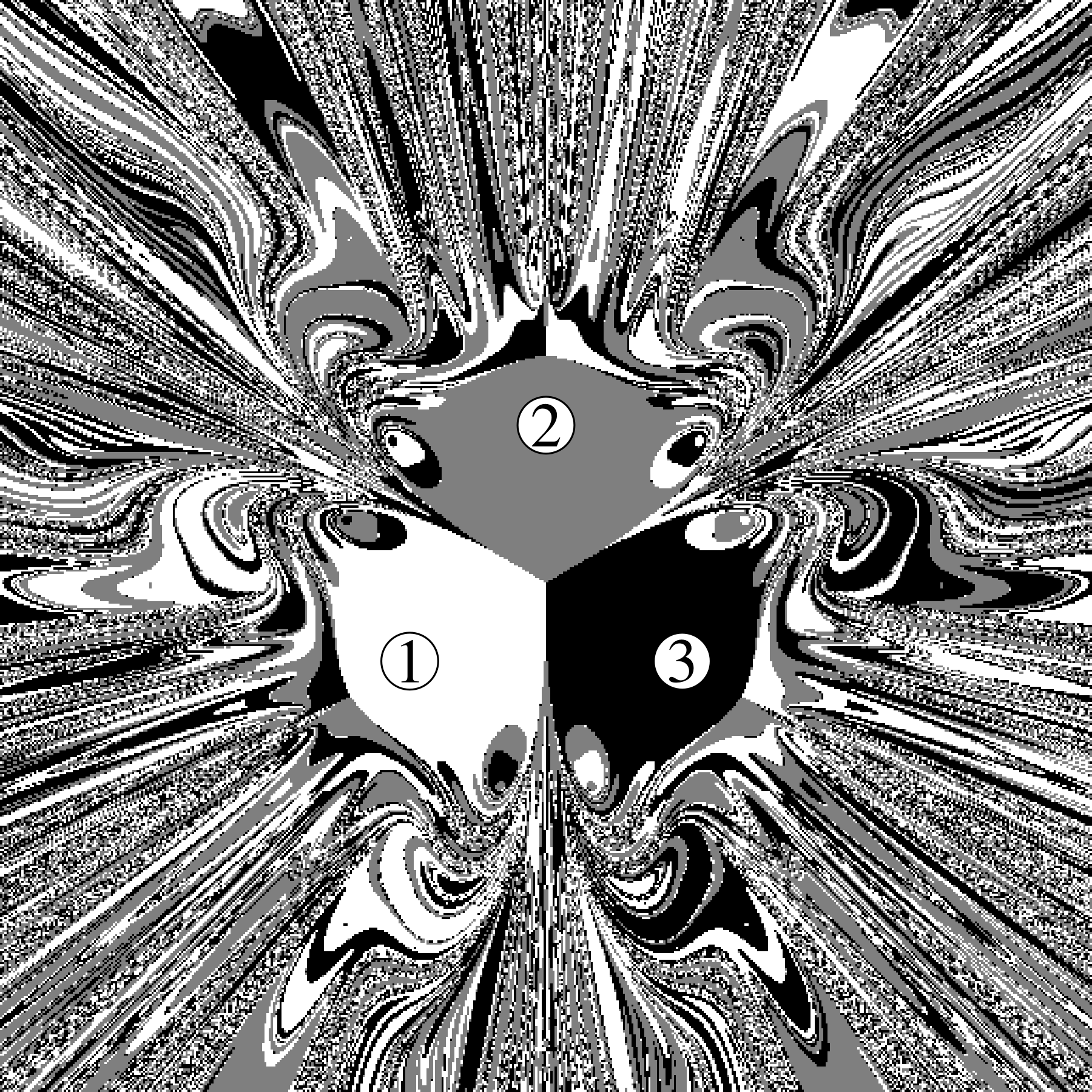

Chapter 11 covers the subjects of fractals and chaos. These topics may seem a bit esoteric at first glance, but do play a key role in the geosciences. We will see that the scale invariance of many geological processes gives rise to data that cannot be captured by conventional probability distributions. Earthquake magnitudes, fracture networks and the grain size distribution of glacial till are just three examples of quantities that follow power law distributions, which do not have a well defined mean. In the briefest of introductions to the fascinating world of deterministic chaos, Section 11.4 uses a simple magnetic pendulum example to introduce the ‘butterfly effect’, which describes the phenomenon whereby even simple systems of nonlinear equations can give rise to unpredictable and highly complex behaviour.

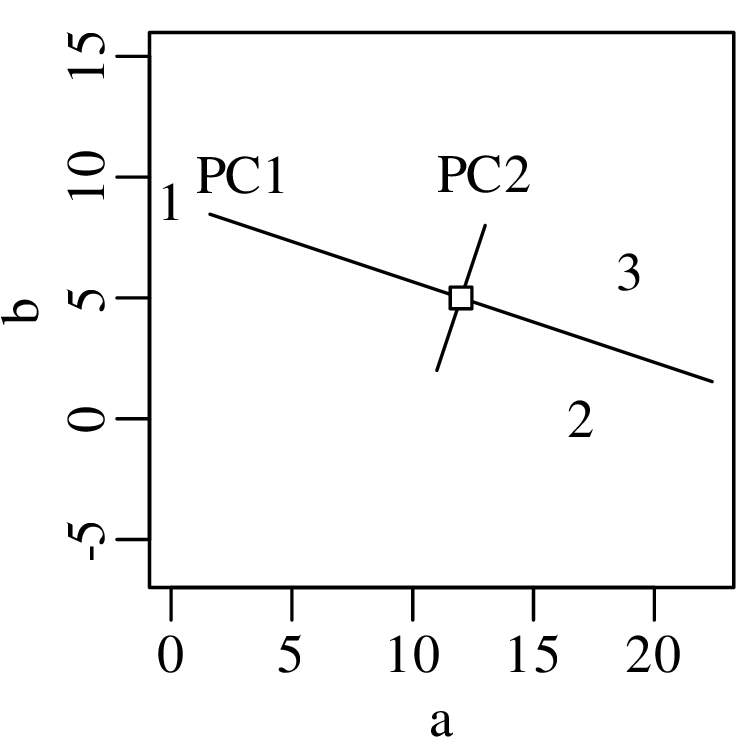

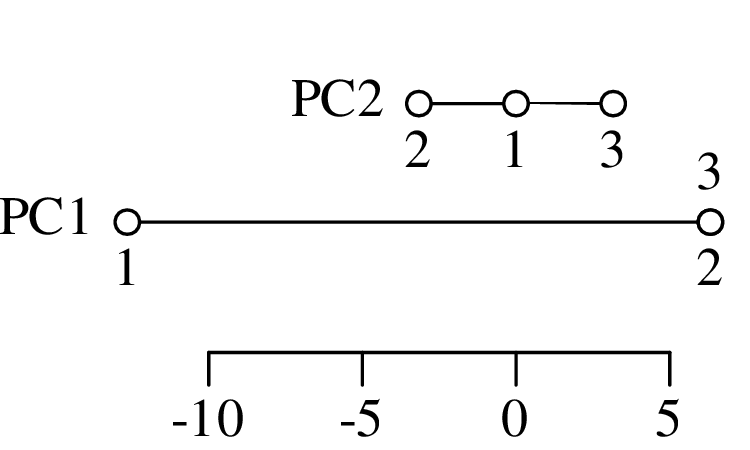

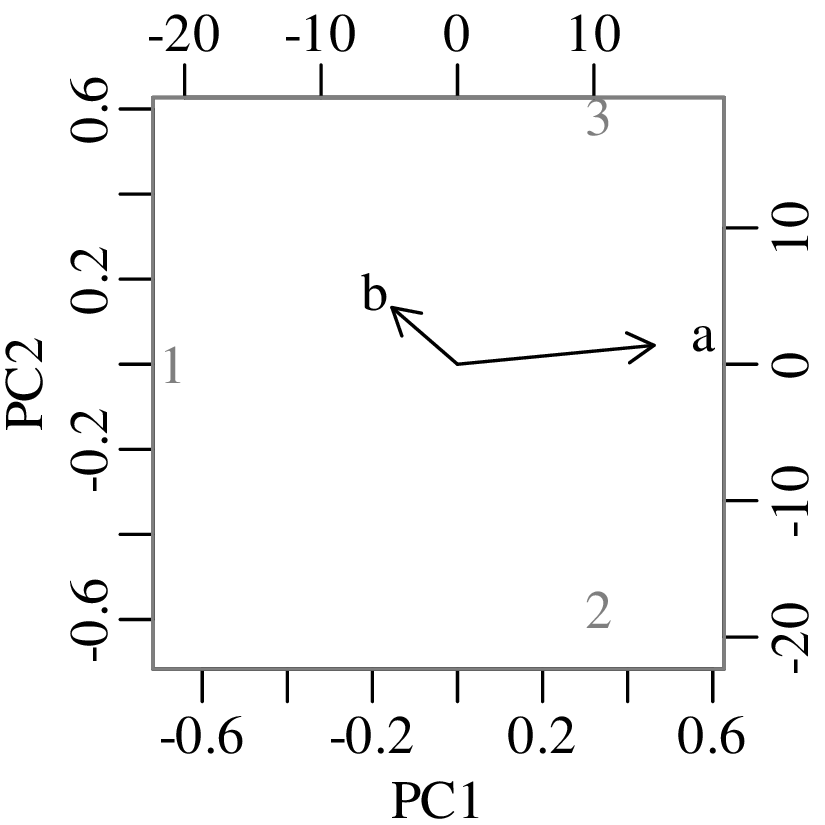

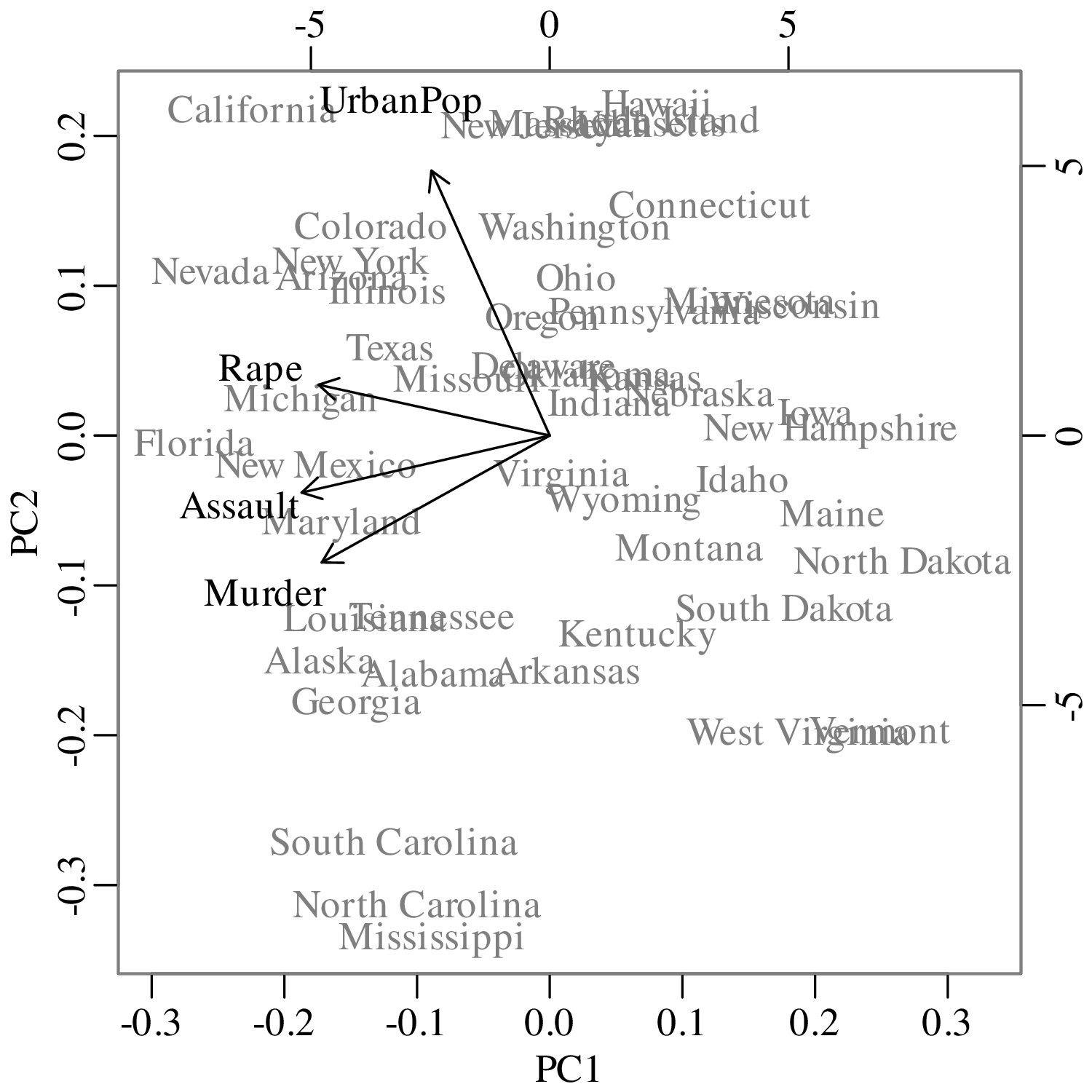

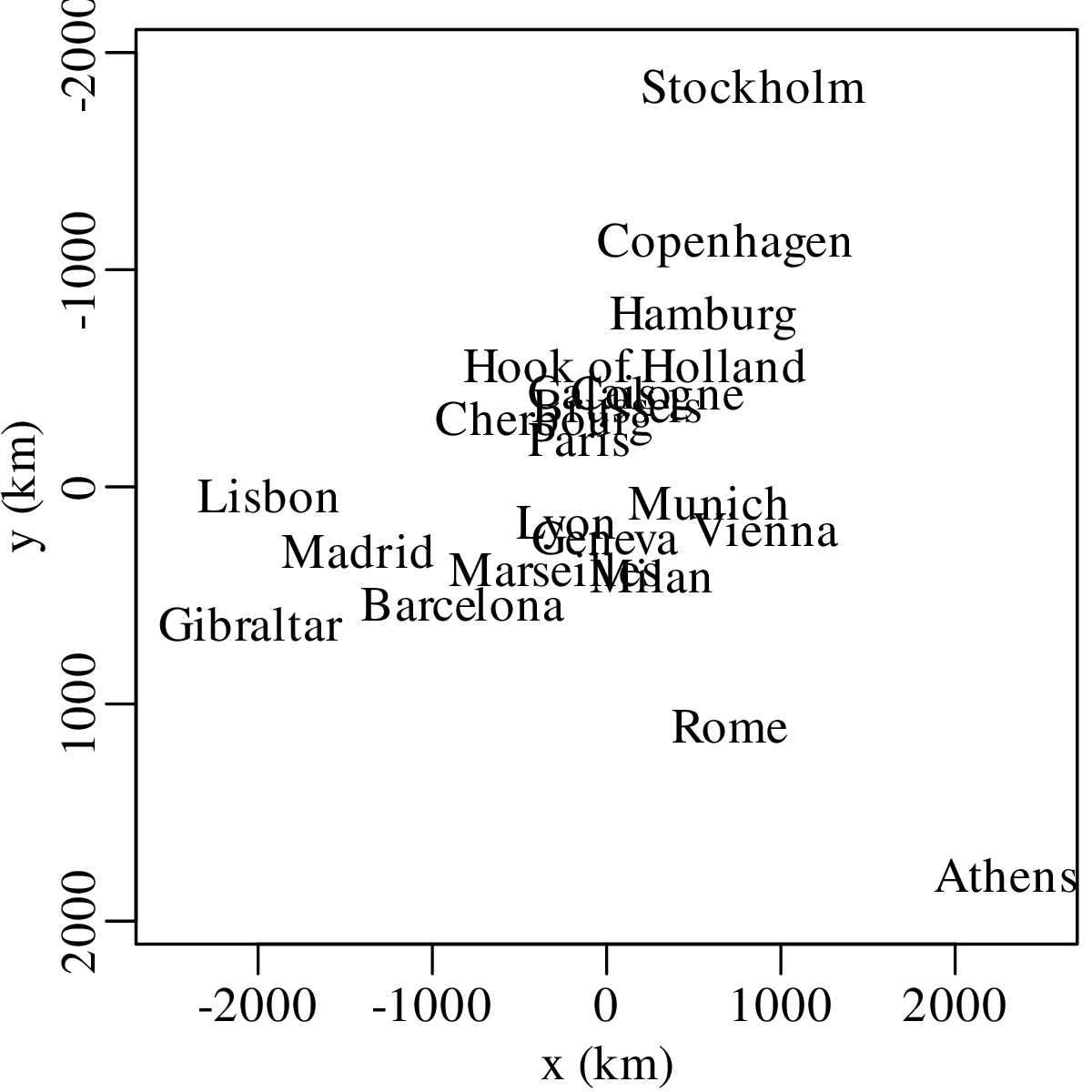

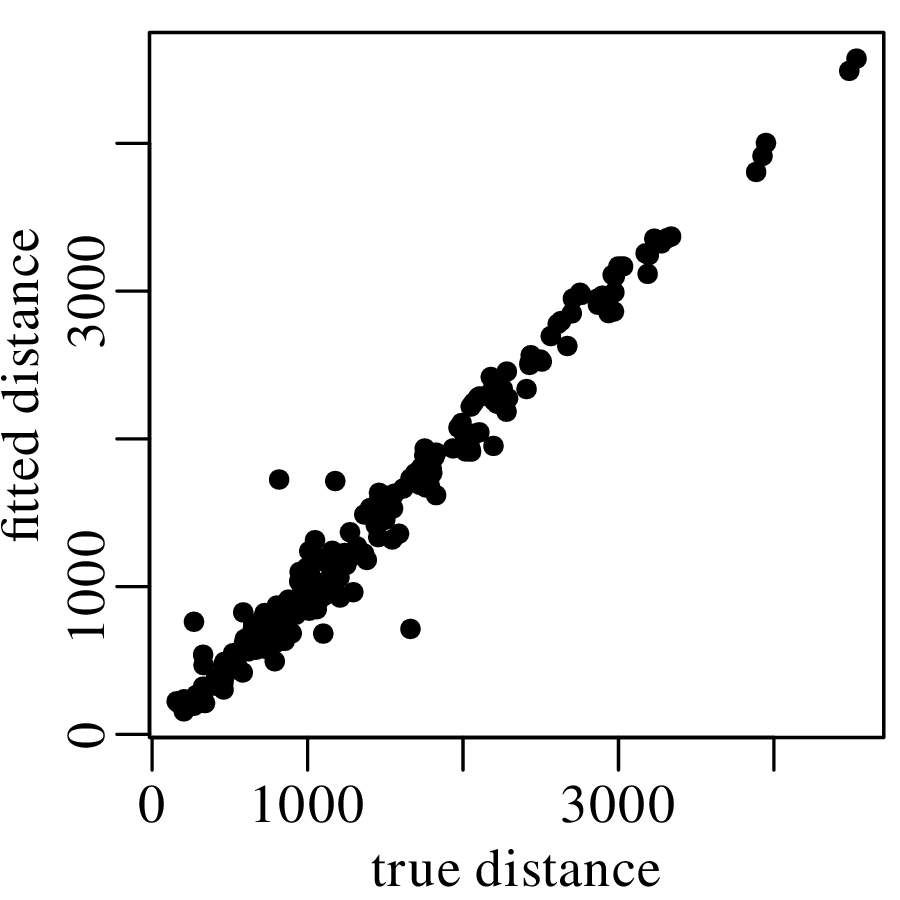

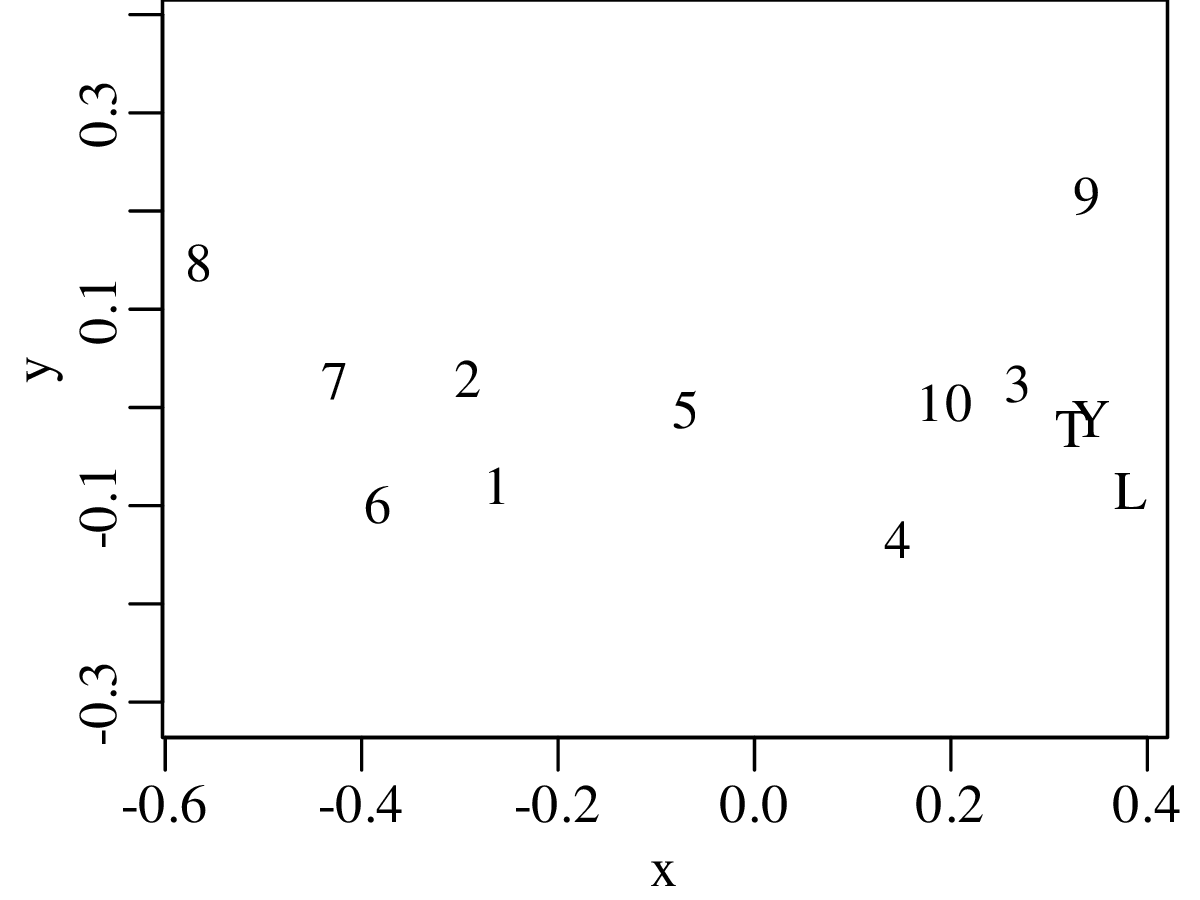

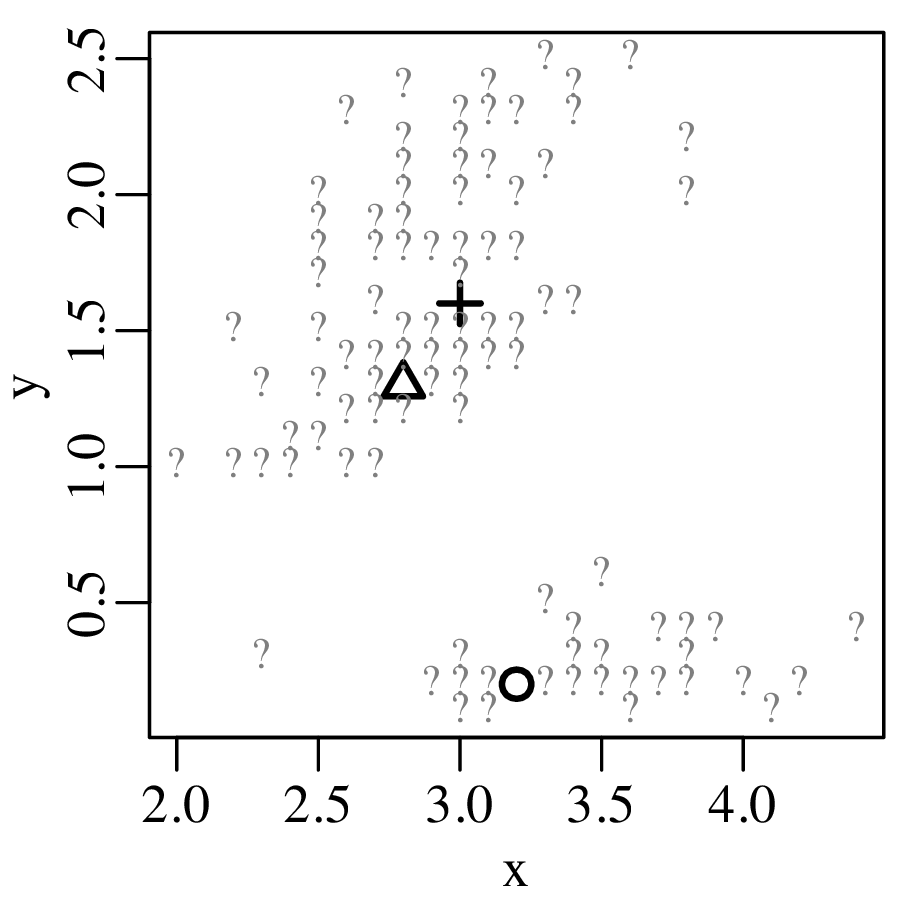

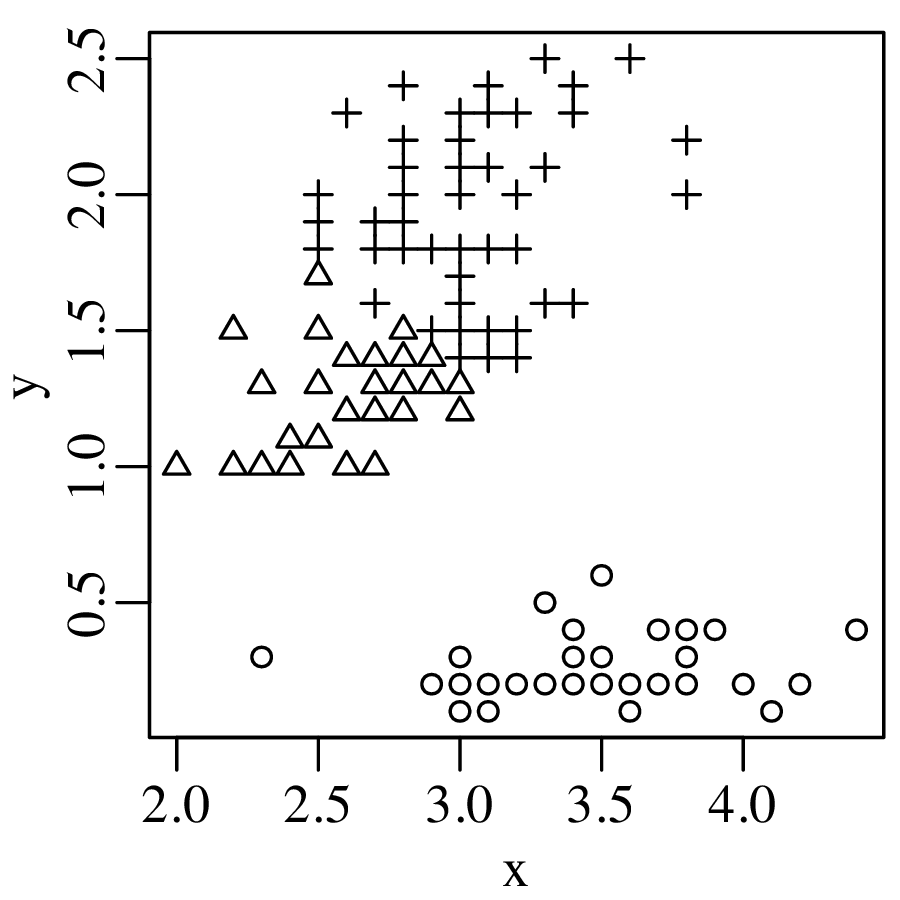

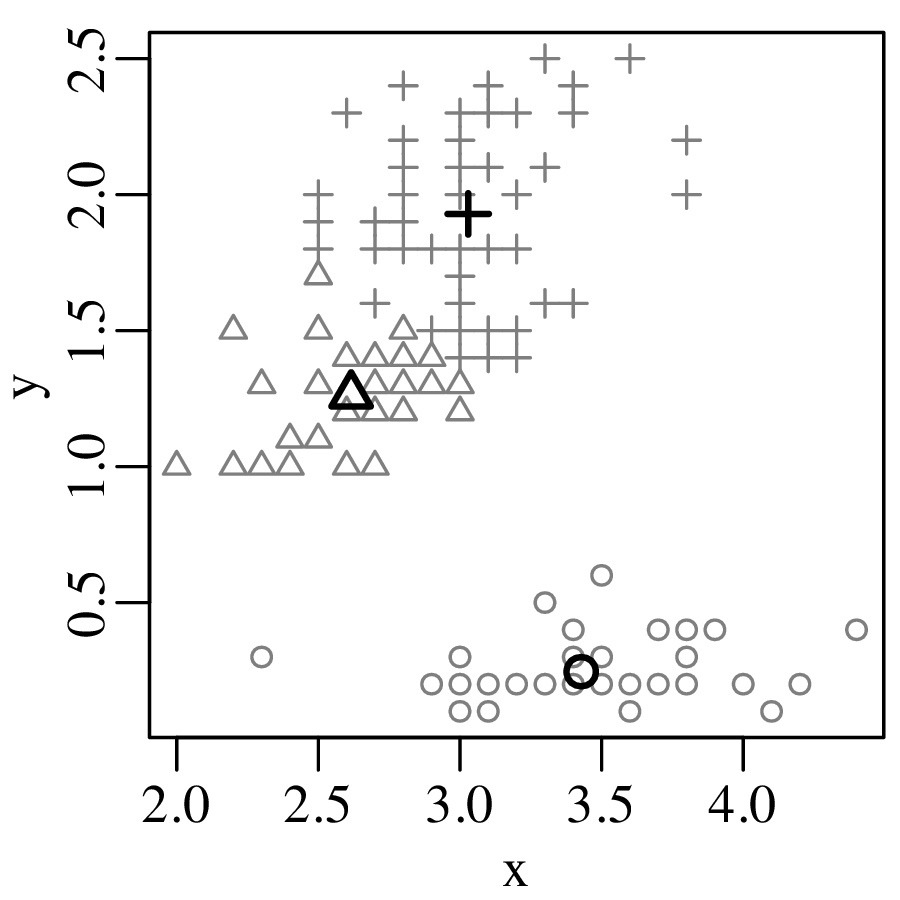

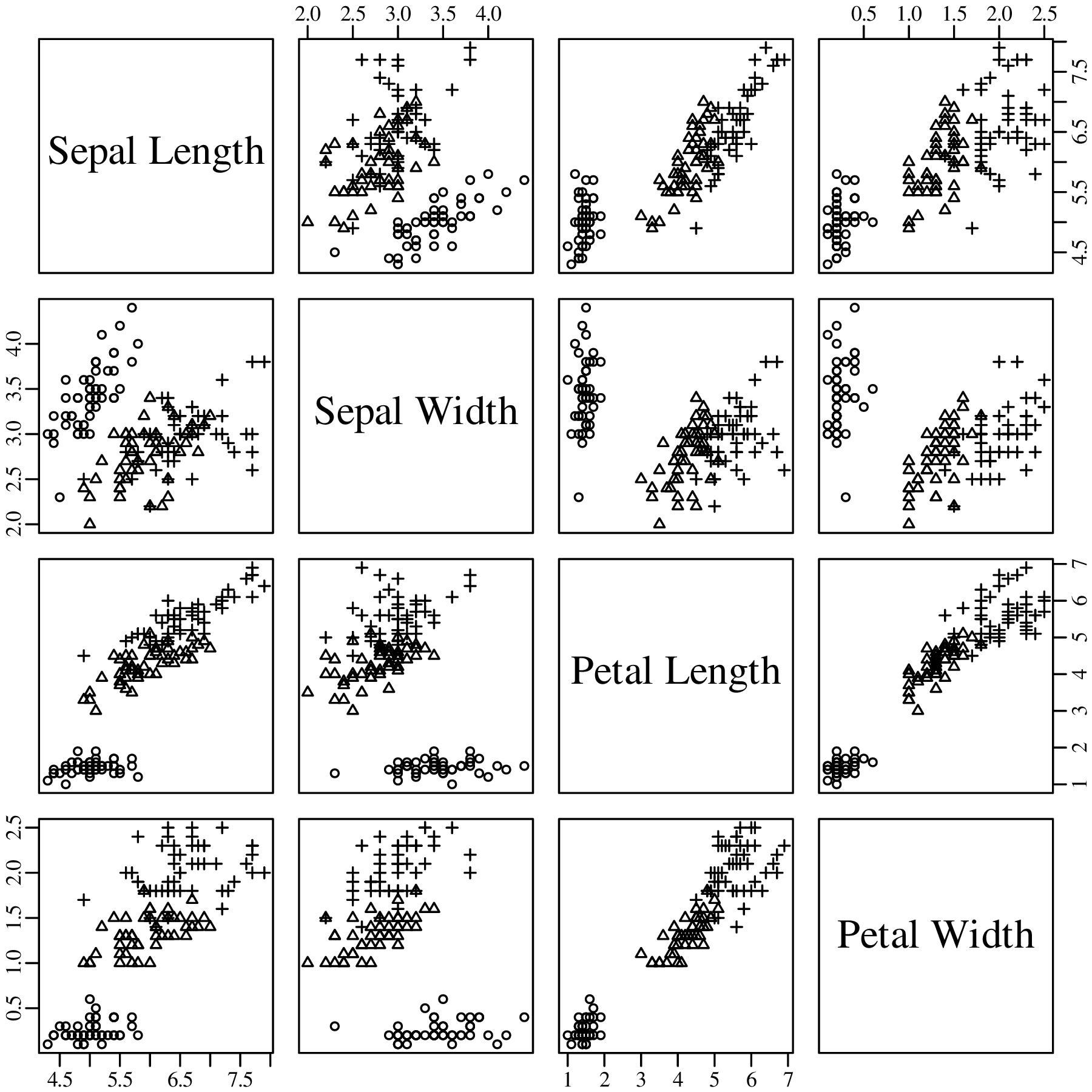

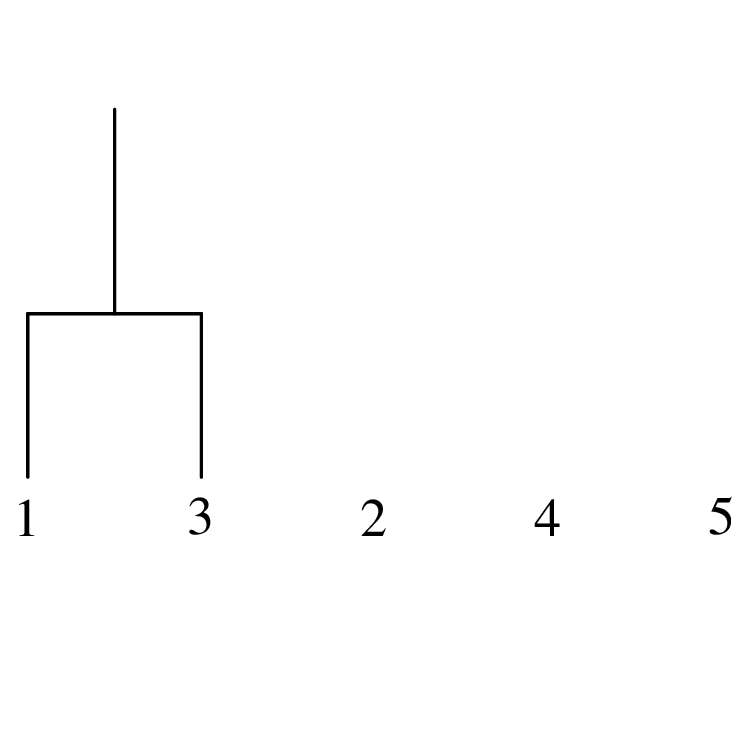

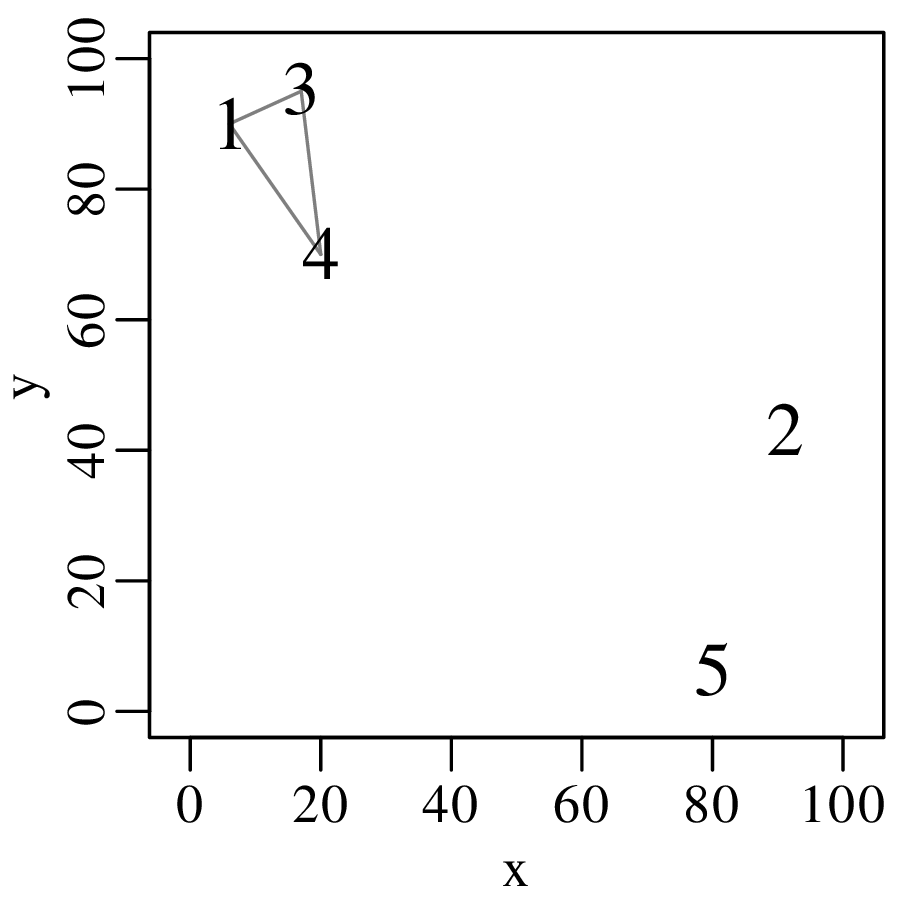

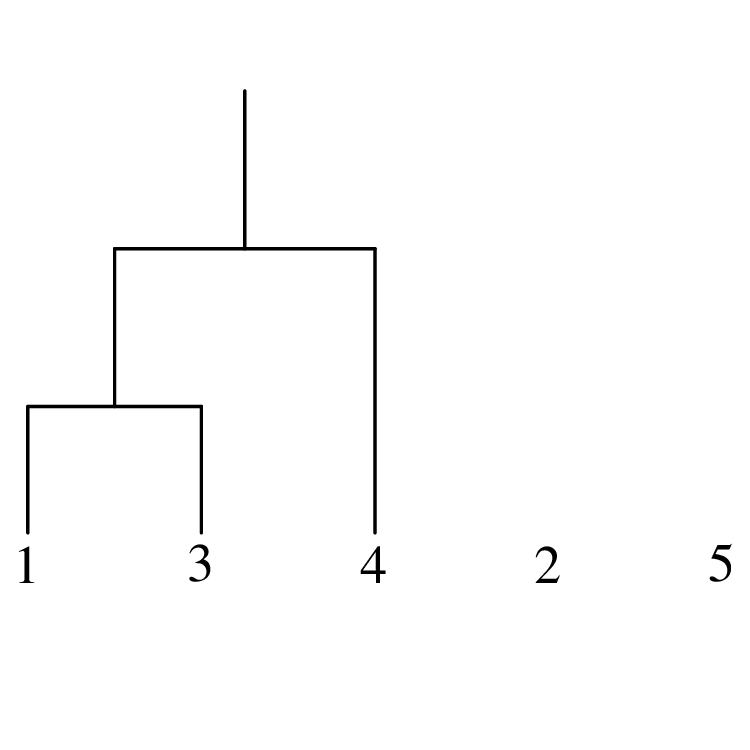

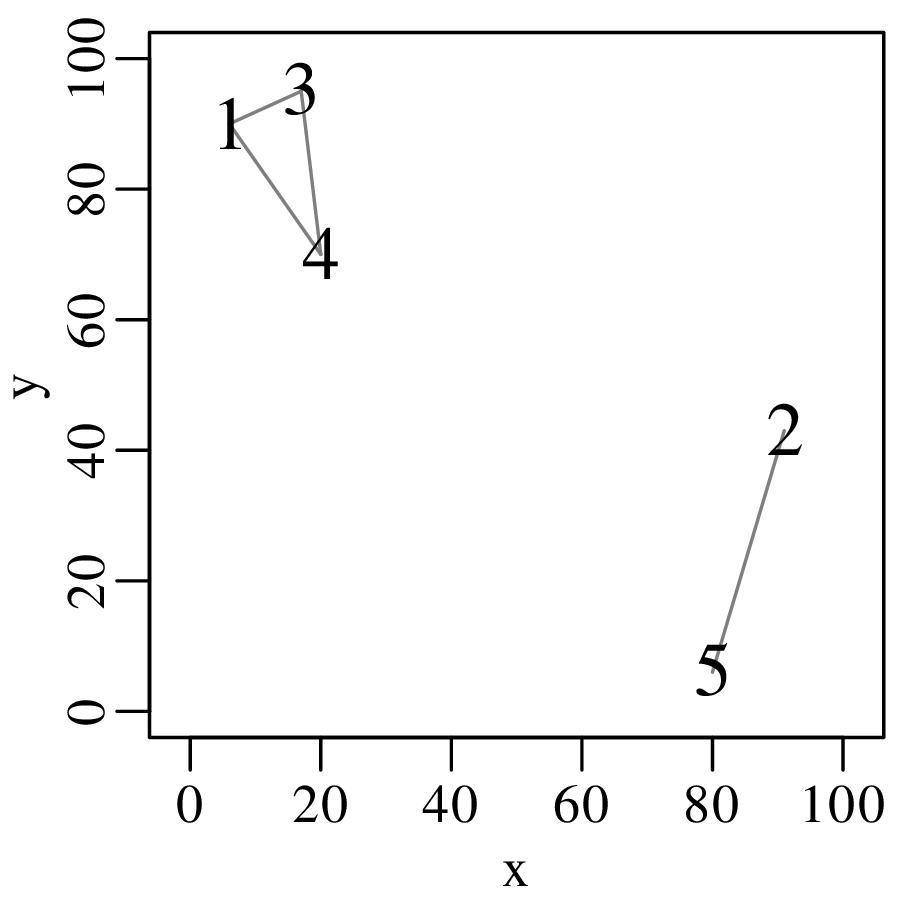

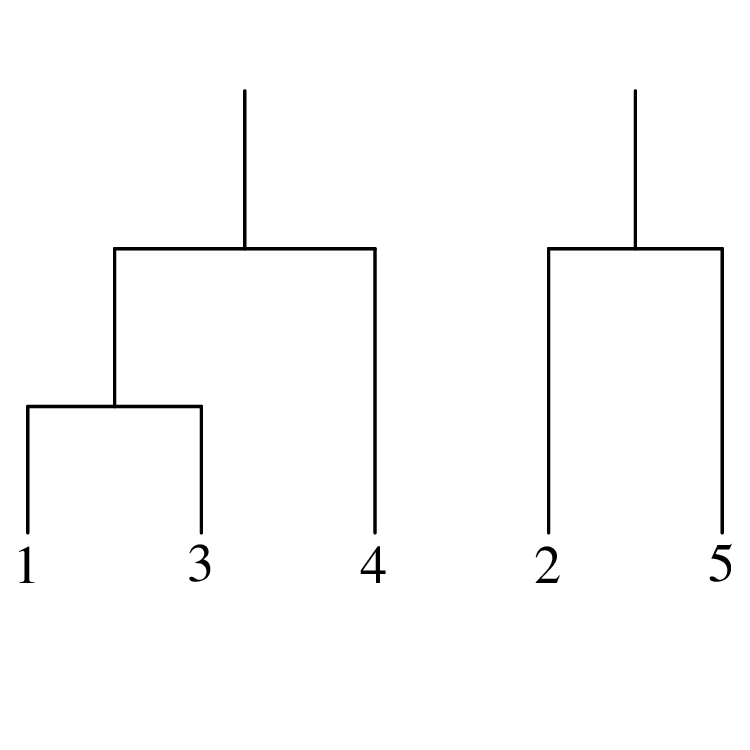

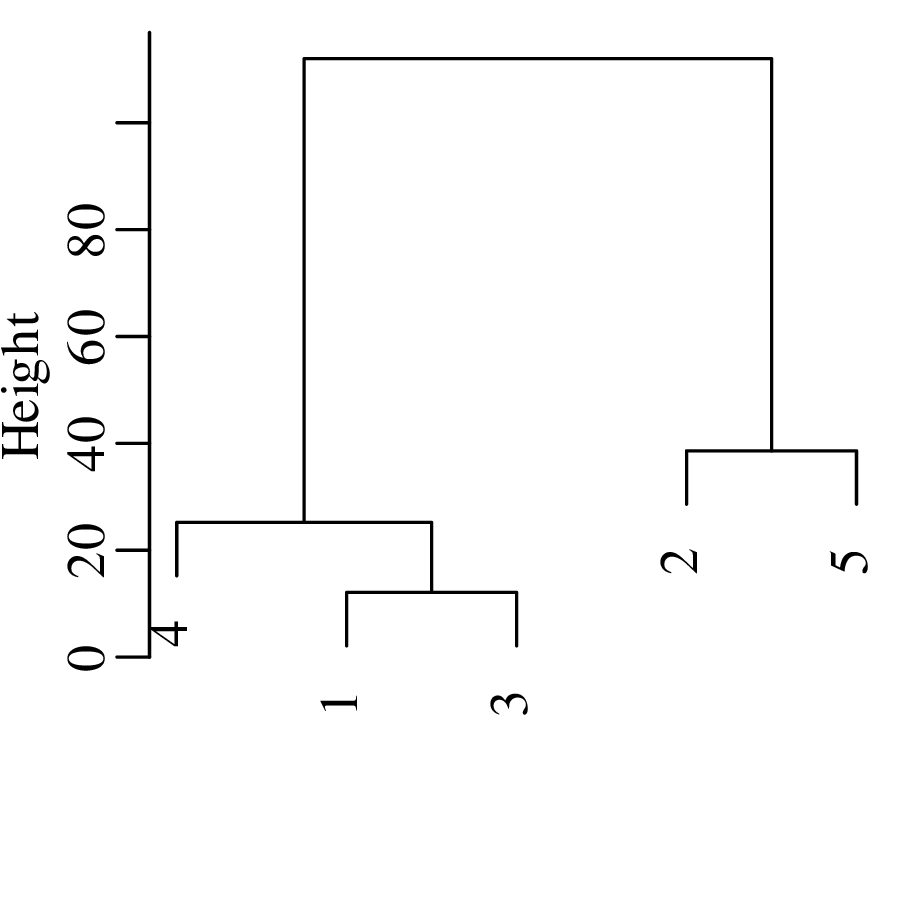

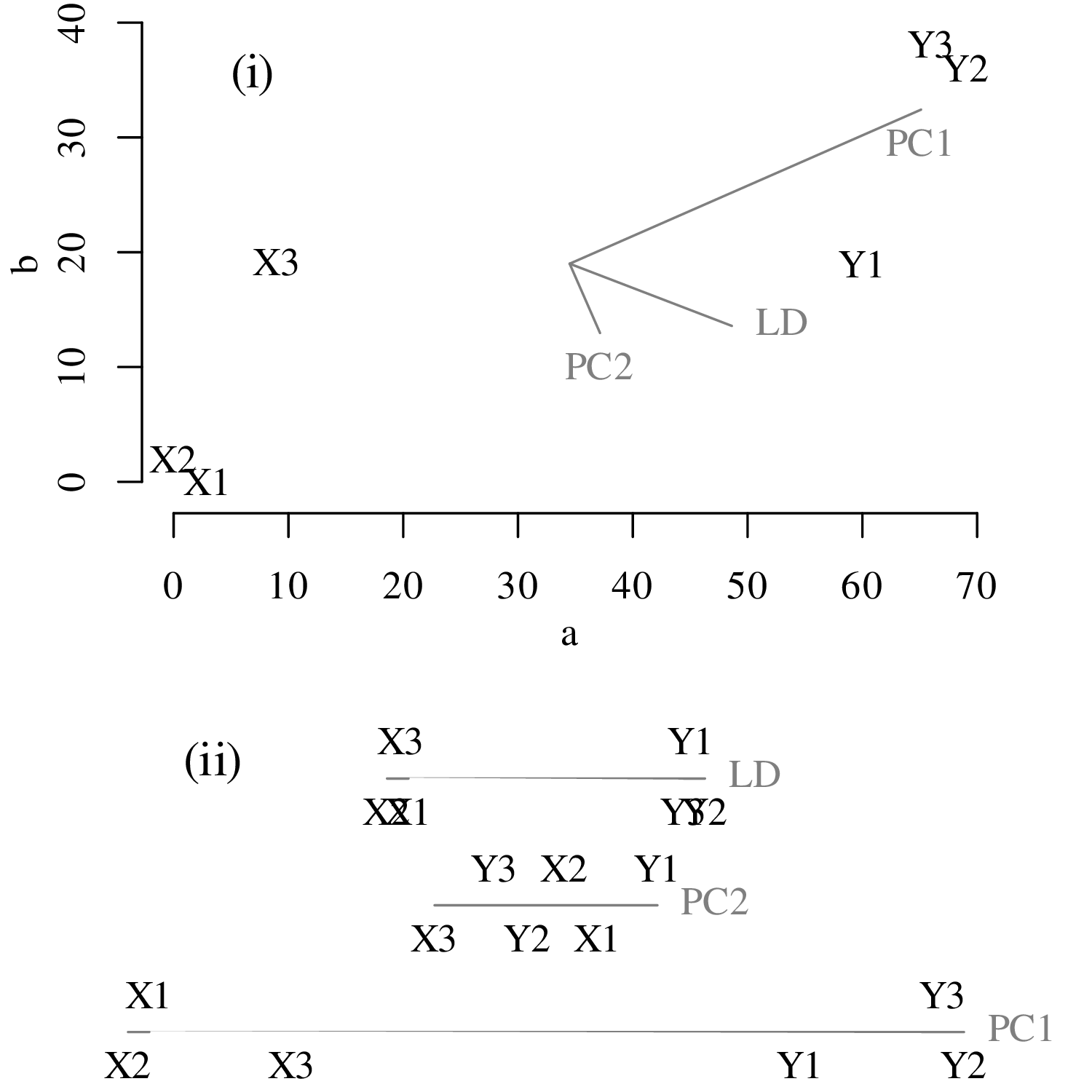

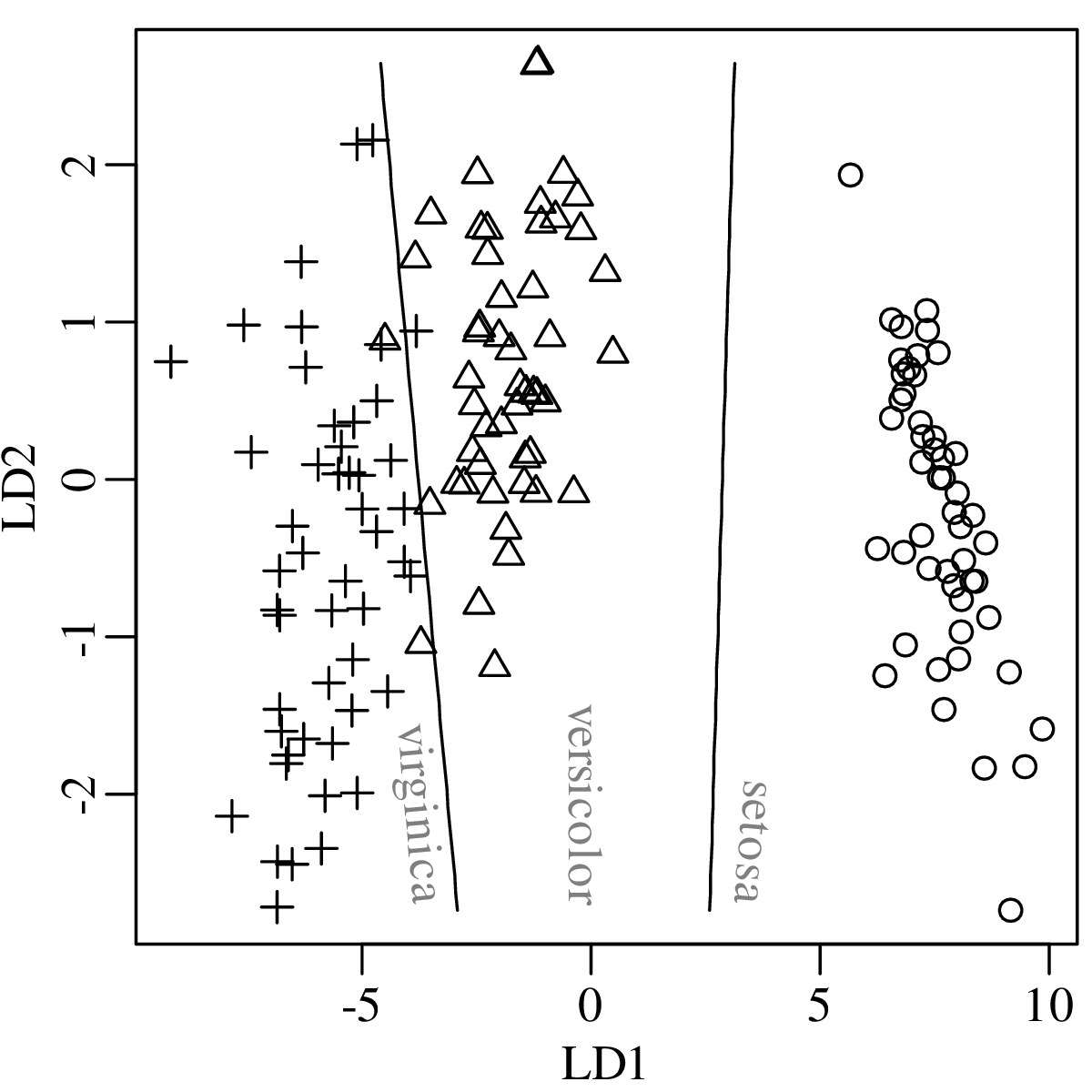

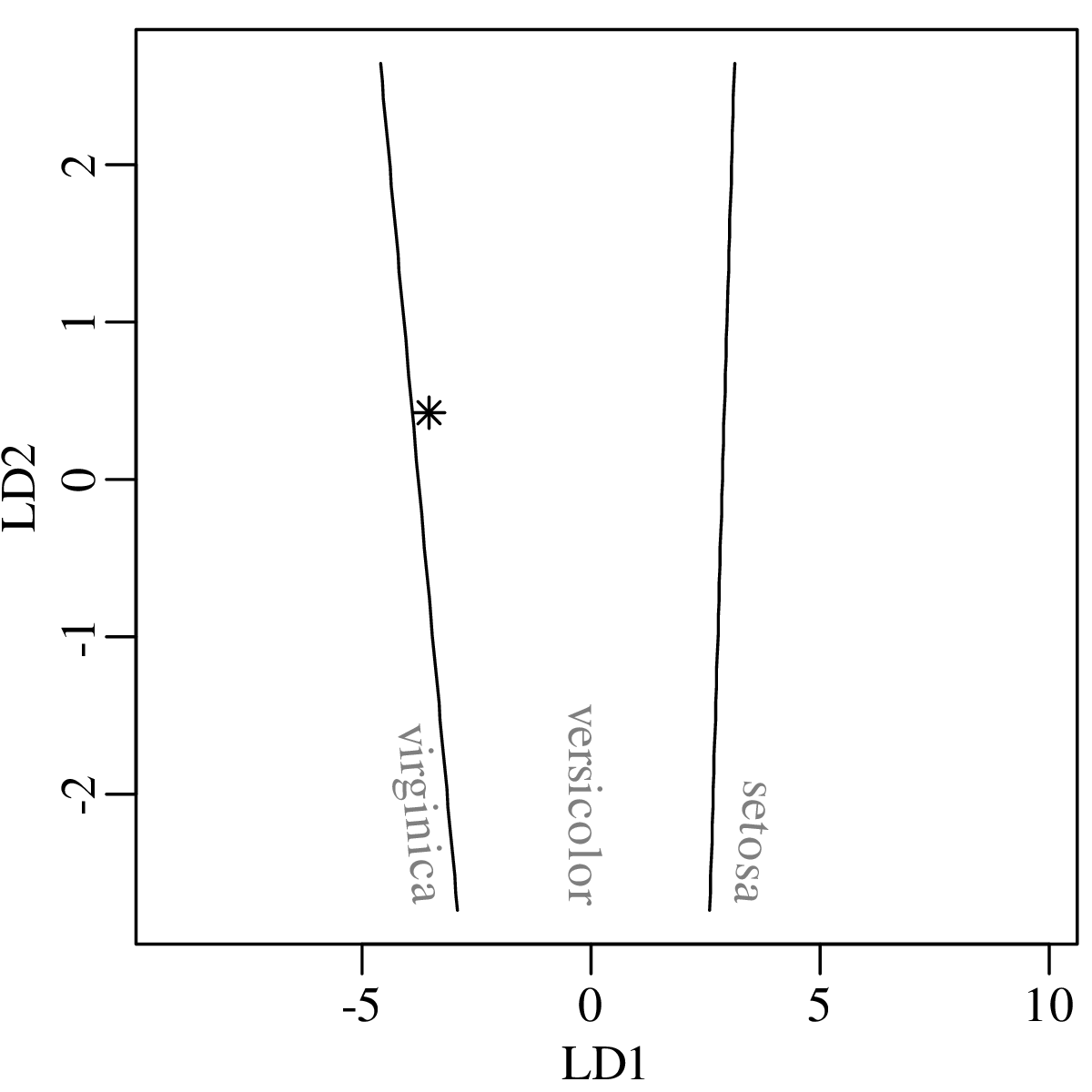

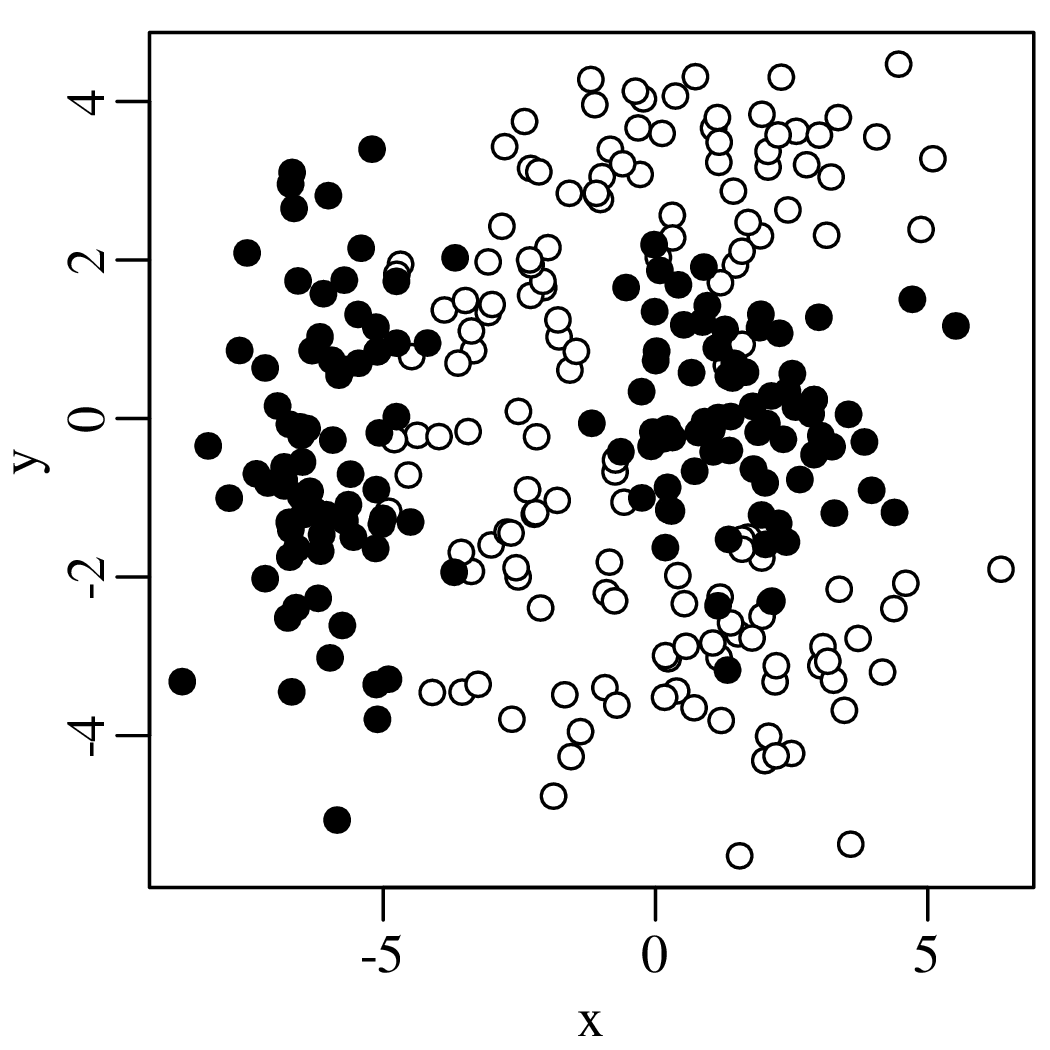

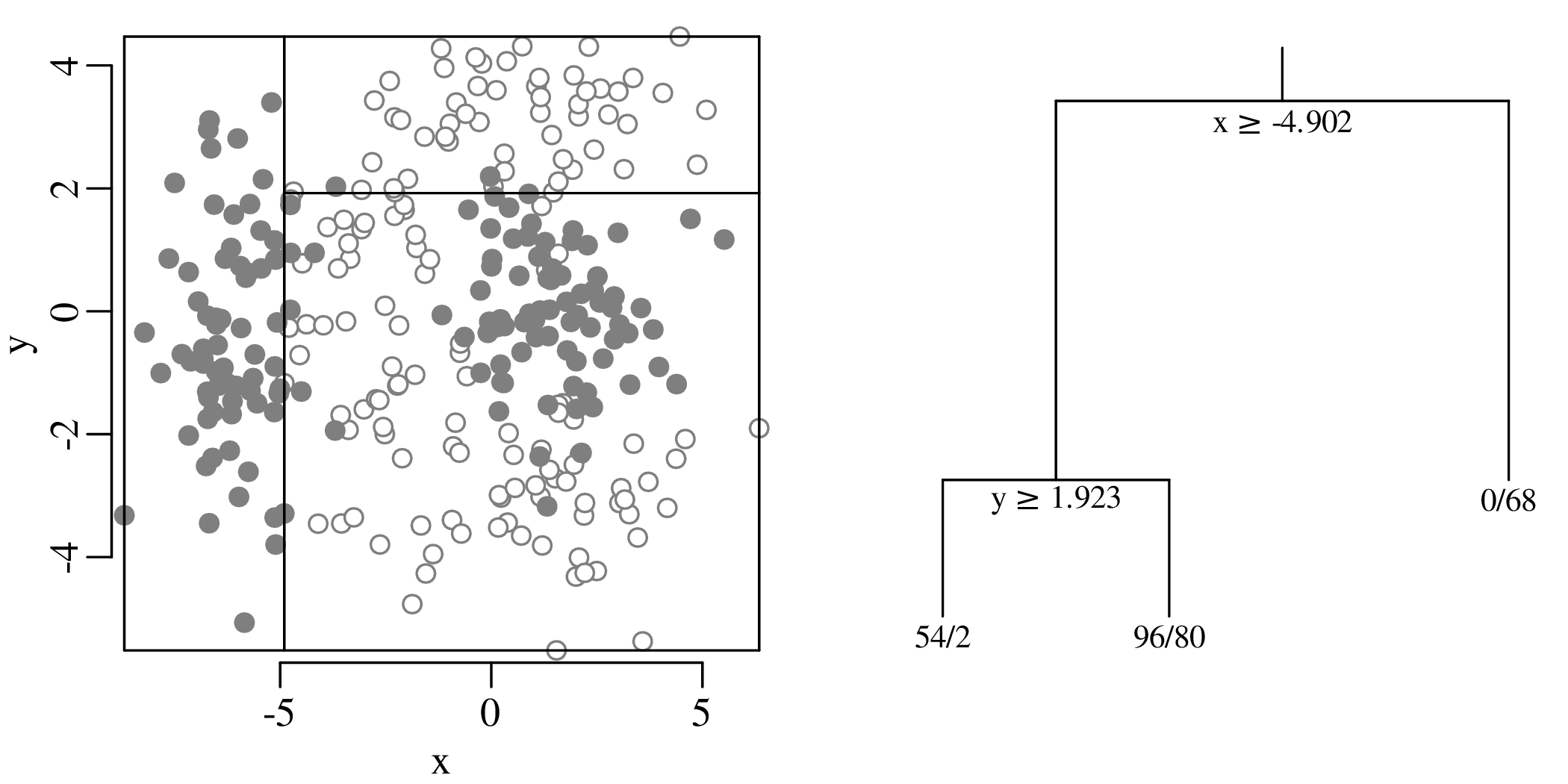

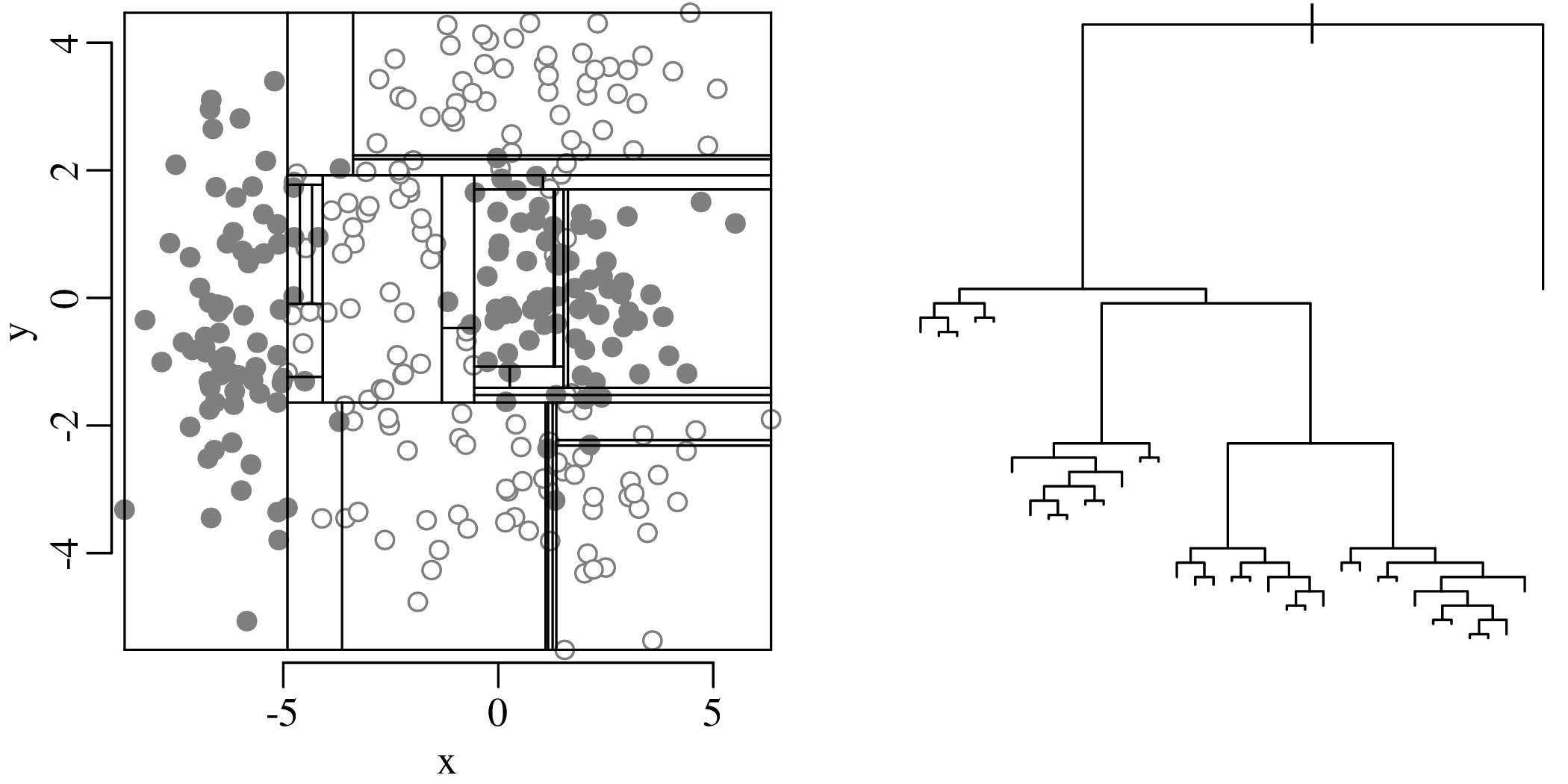

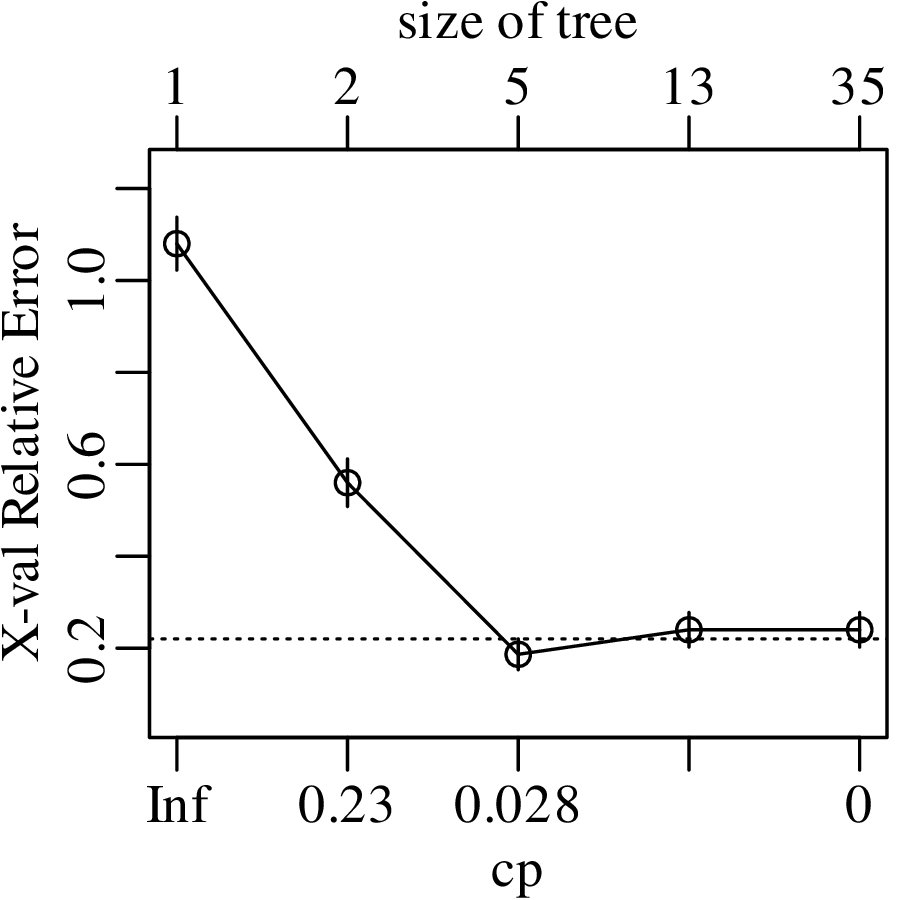

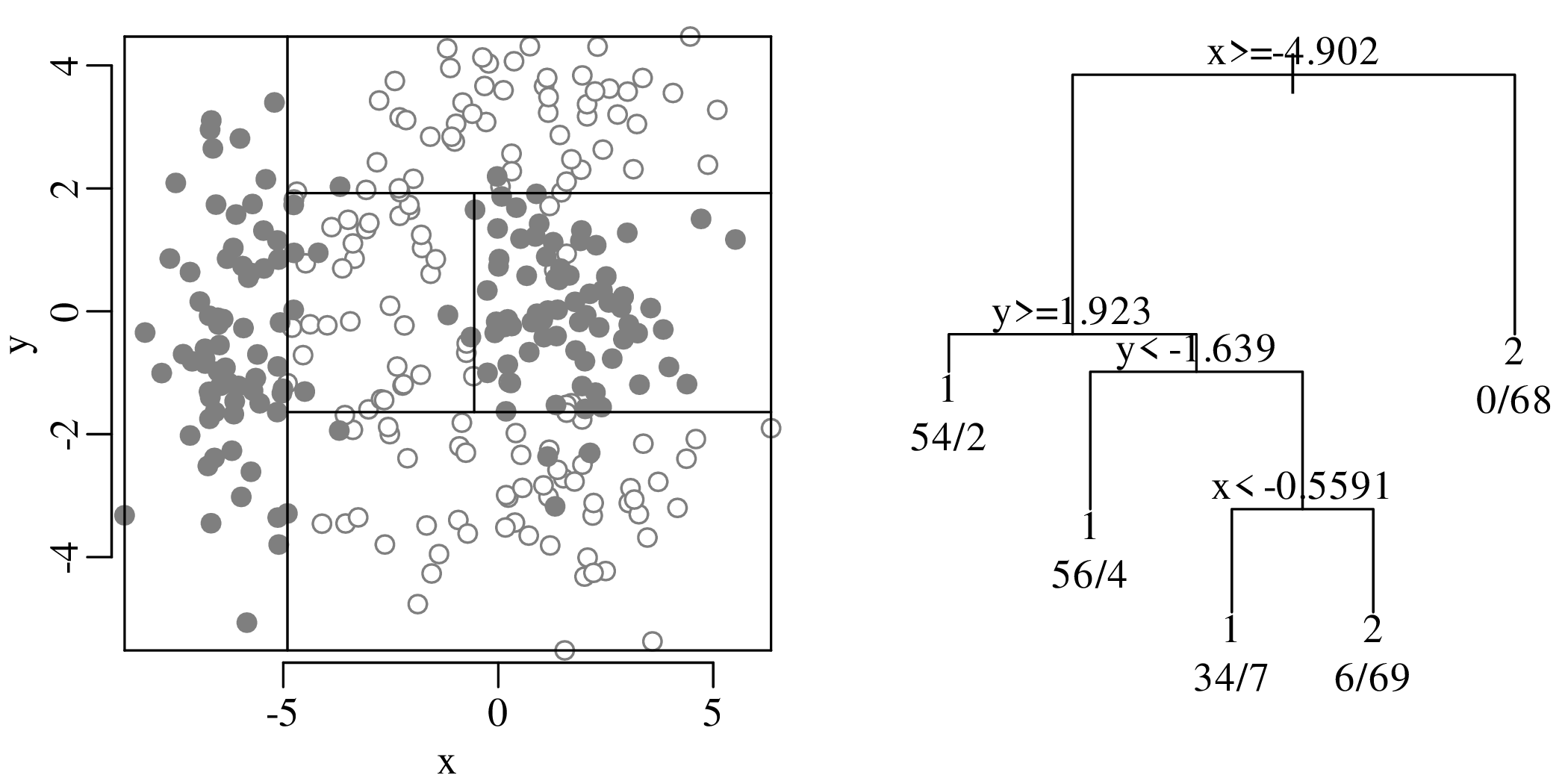

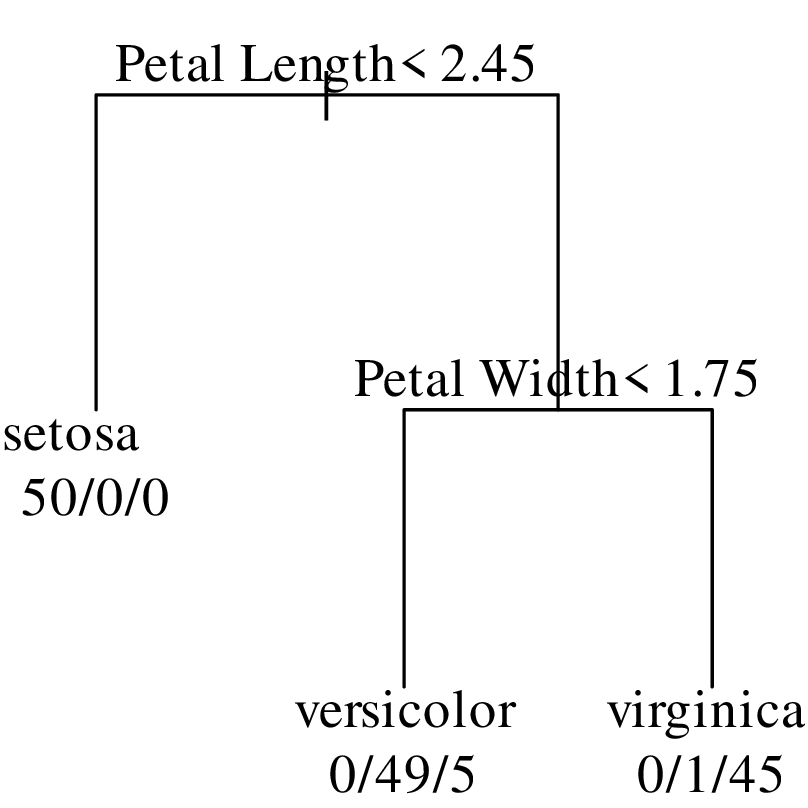

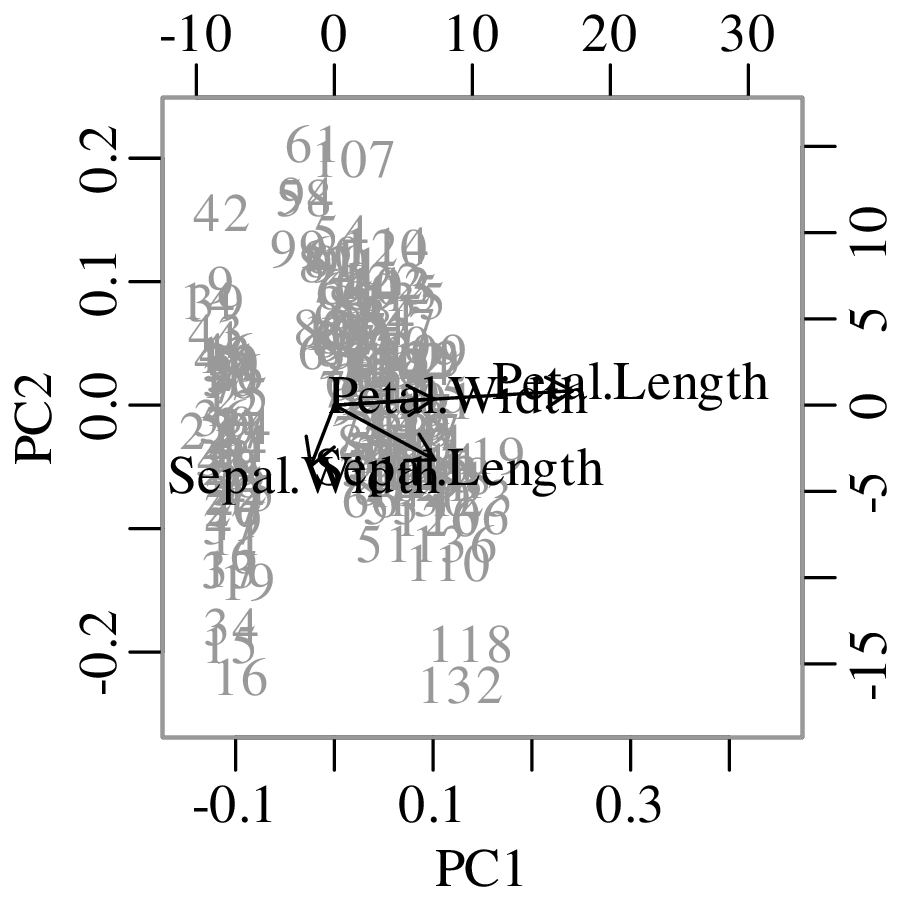

Chapters 12 and 13 introduce some simple algorithms for unsupervised and supervised machine learning, respectively. As the name suggests, unsupervised learning helps scientists visualise and analyse large datasets without requiring any prior knowledge of their underlying structure. It includes multivariate ordination techniques such as Principal Component Analysis (PCA, Section 12.1) and Multidimensional Scaling (MDS, Section 12.2); as well as the k-means (Section 12.3) and hierarchical (Section 12.4) clustering algorithms. Supervised learning algorithms such as discriminant analysis (Section 13.1) and decision trees (Section 13.2) define decision rules to identify pre-defined groups in some training data. These rules can then be used to assign a dataset of new samples to one of these same groups.

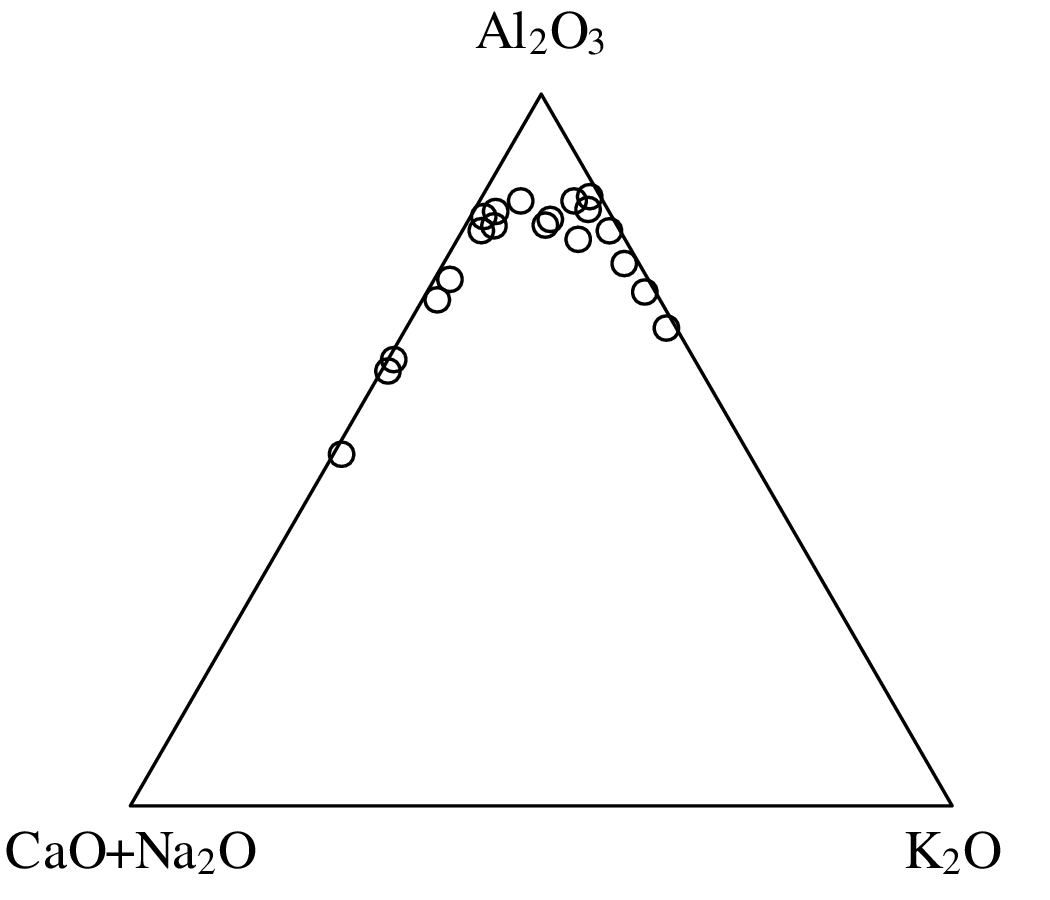

Chapter 14 discusses compositional data, which are one of the most common data types in the Earth Sciences. Chemical compositions and the relative abundances of minerals, isotopes and animal species are all strictly positive quantities that can be renormalised to a constant sum. They cannot be safely analysed by statistical methods that assume normal distributions. Even the simplest statistical operations such as the calculation of an arithmetic mean or a standard deviation can go horribly wrong when applied to compositional data. Fortunately, the compositional data conundrum can be solved using a simple data transformation using logratios (Section 14.2). Following logratio transformation, compositional datasets can be safely averaged or analysed by more sophisticated statistical methods.

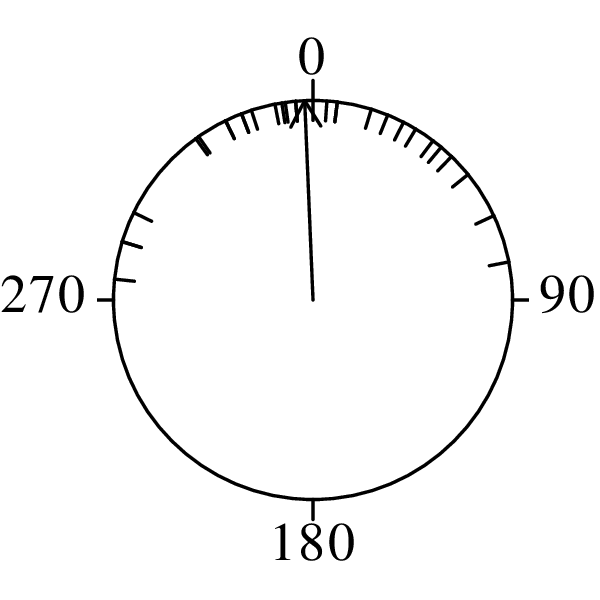

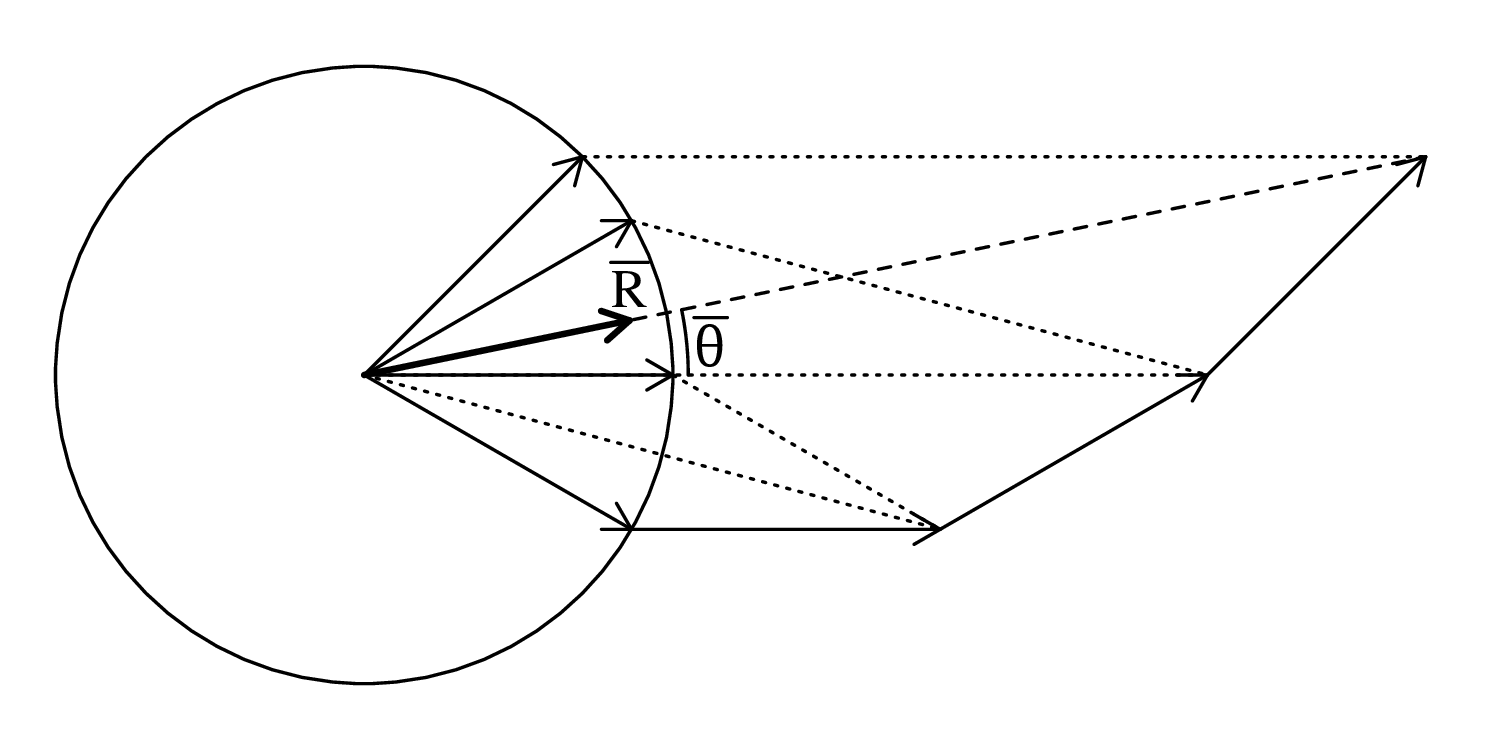

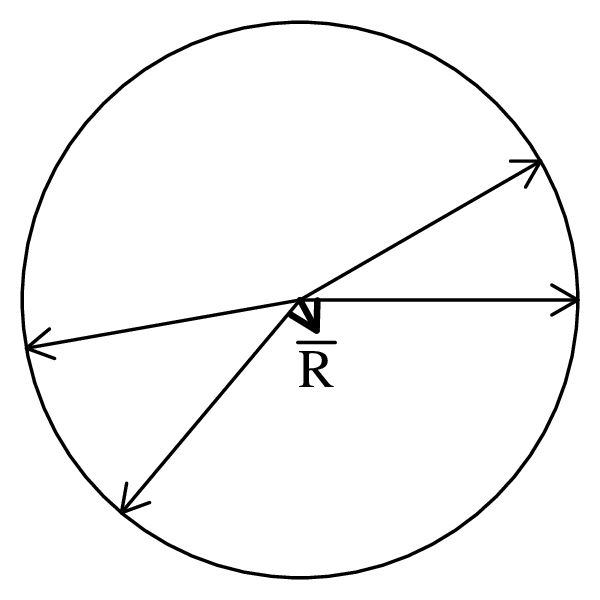

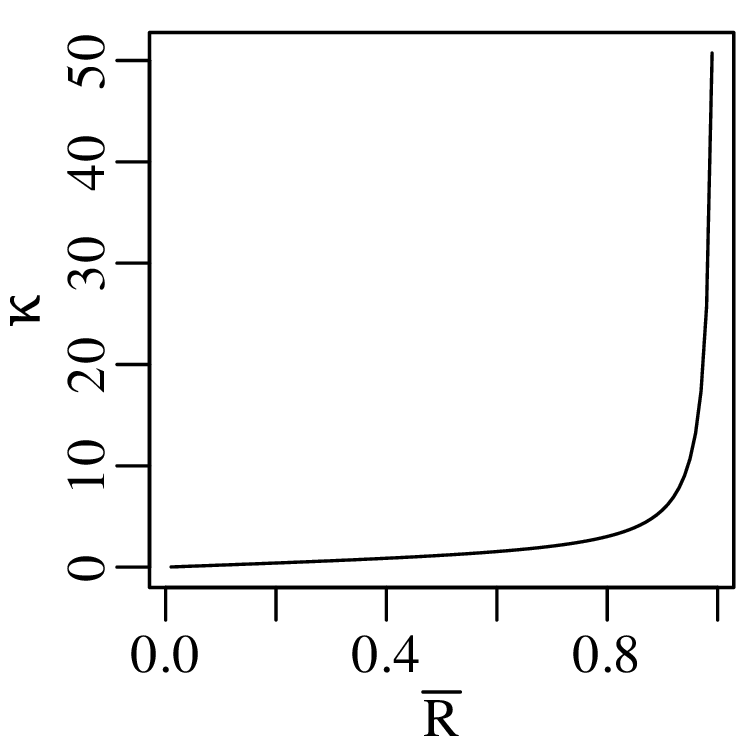

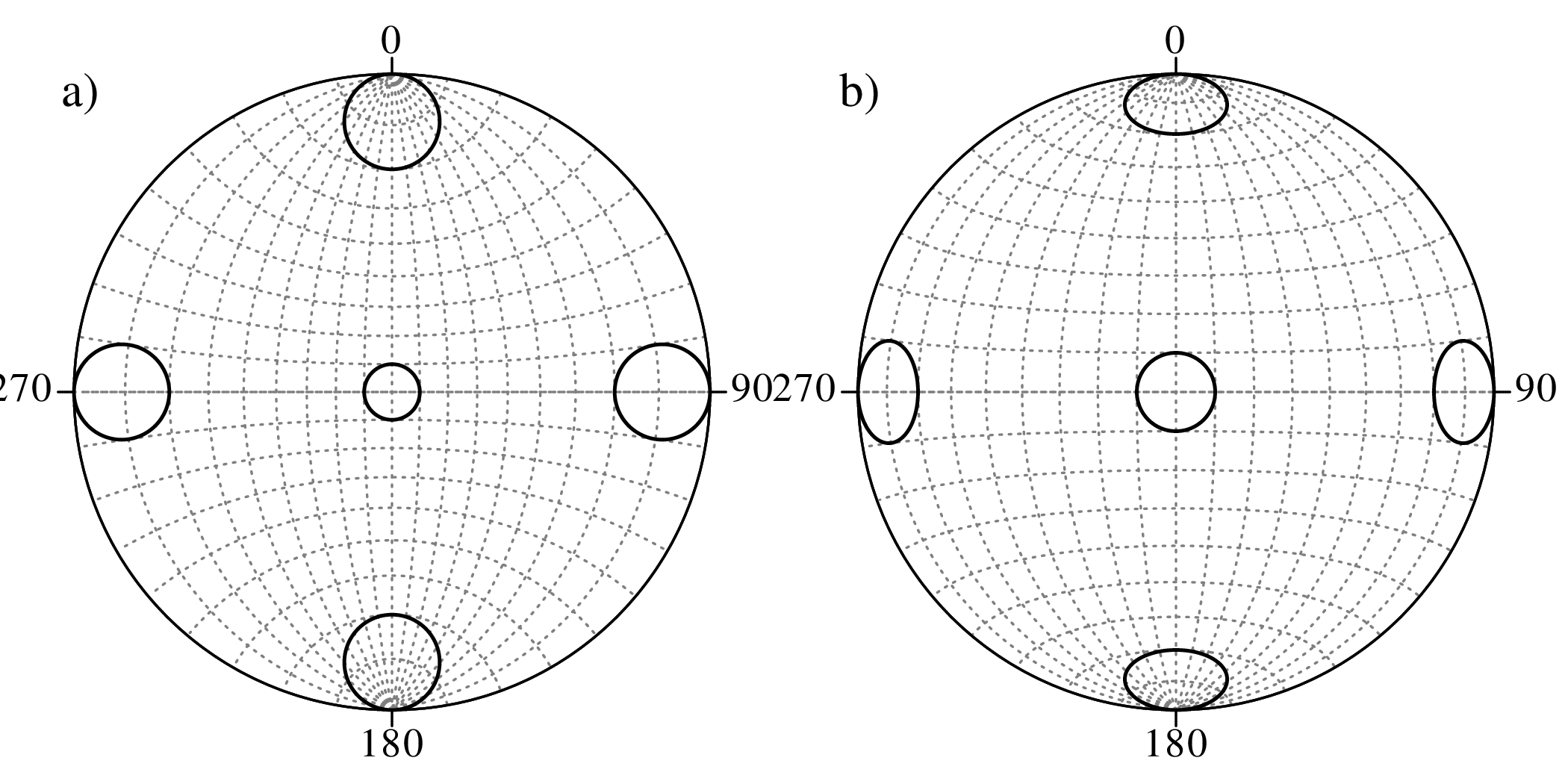

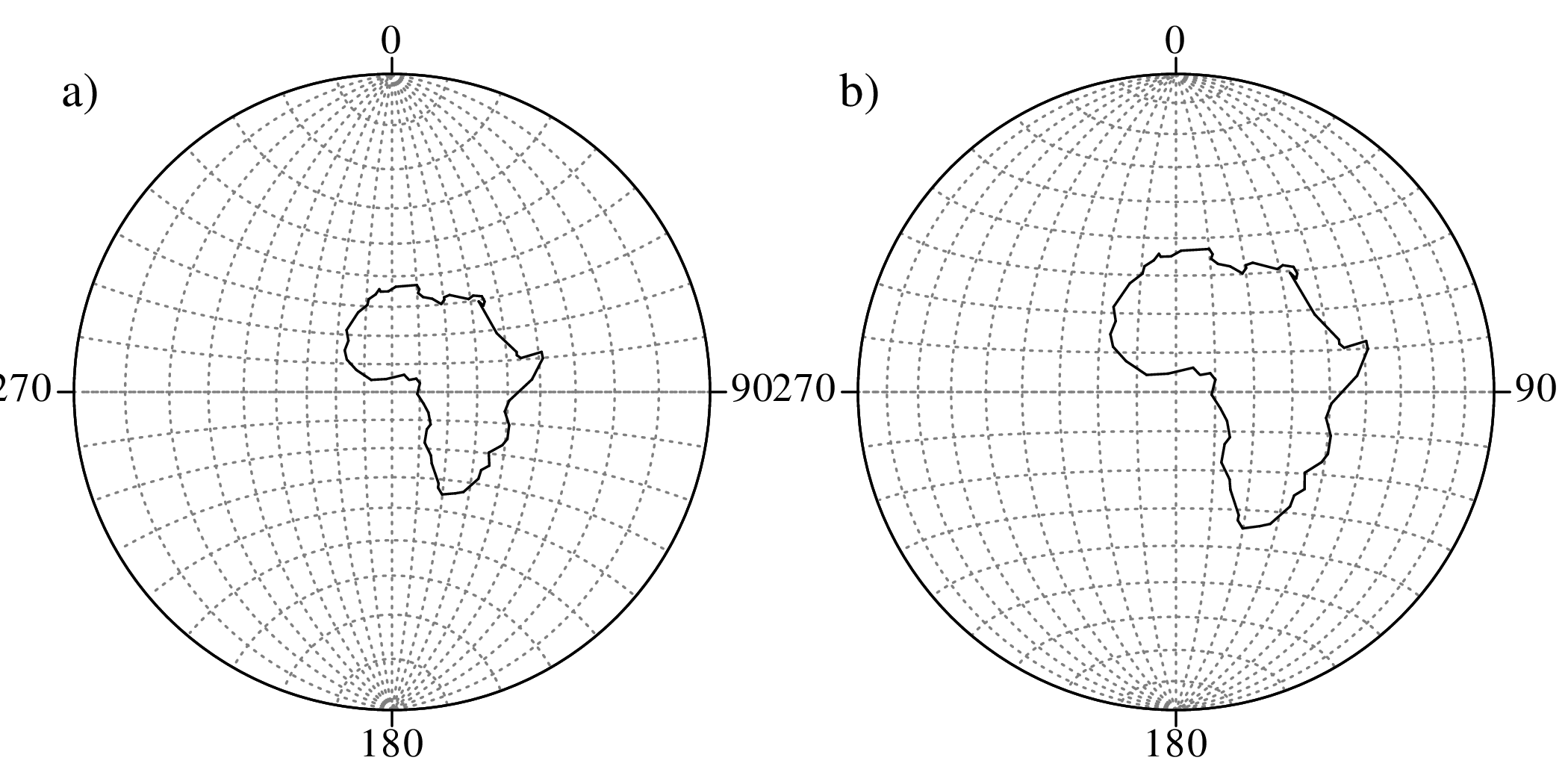

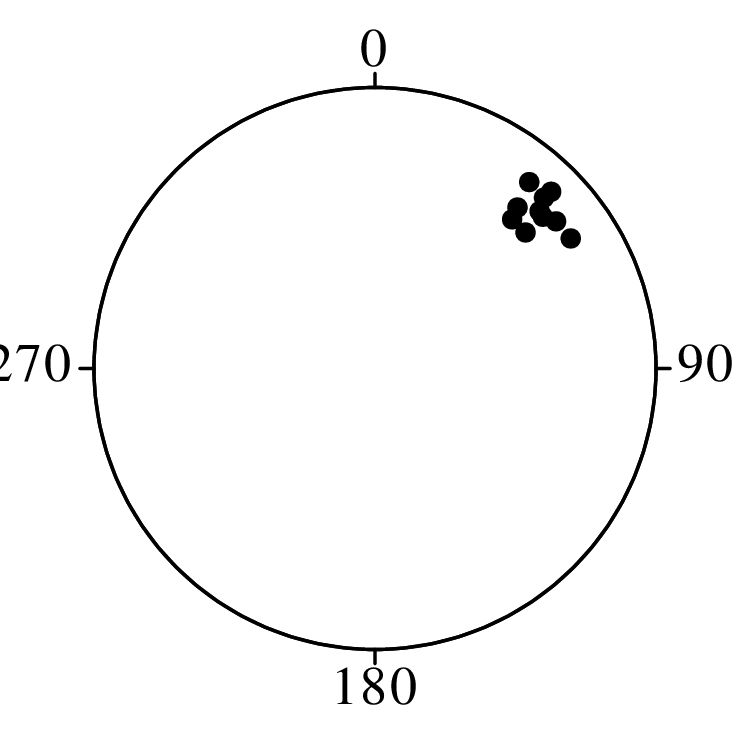

Directional data such as compass bearings and geographical coordinates are another type of measurement that is probably more common in the Earth Sciences than in any other discipline. Chapter 15 shows that, like the compositional data of Chapter 14, also directional data cannot be safely analysed with ‘normal statistics’. For example, the arithmetic mean of two angles 1∘ and 359∘ is 180∘, which is clearly a nonsensical result. Chapter 15 introduces the vector sum as a better way to average directional data, both in one dimension (i.e., a circle, Sections 15.1–15.2) and two dimensions (i.e., a sphere, Sections 15.3–15.4).

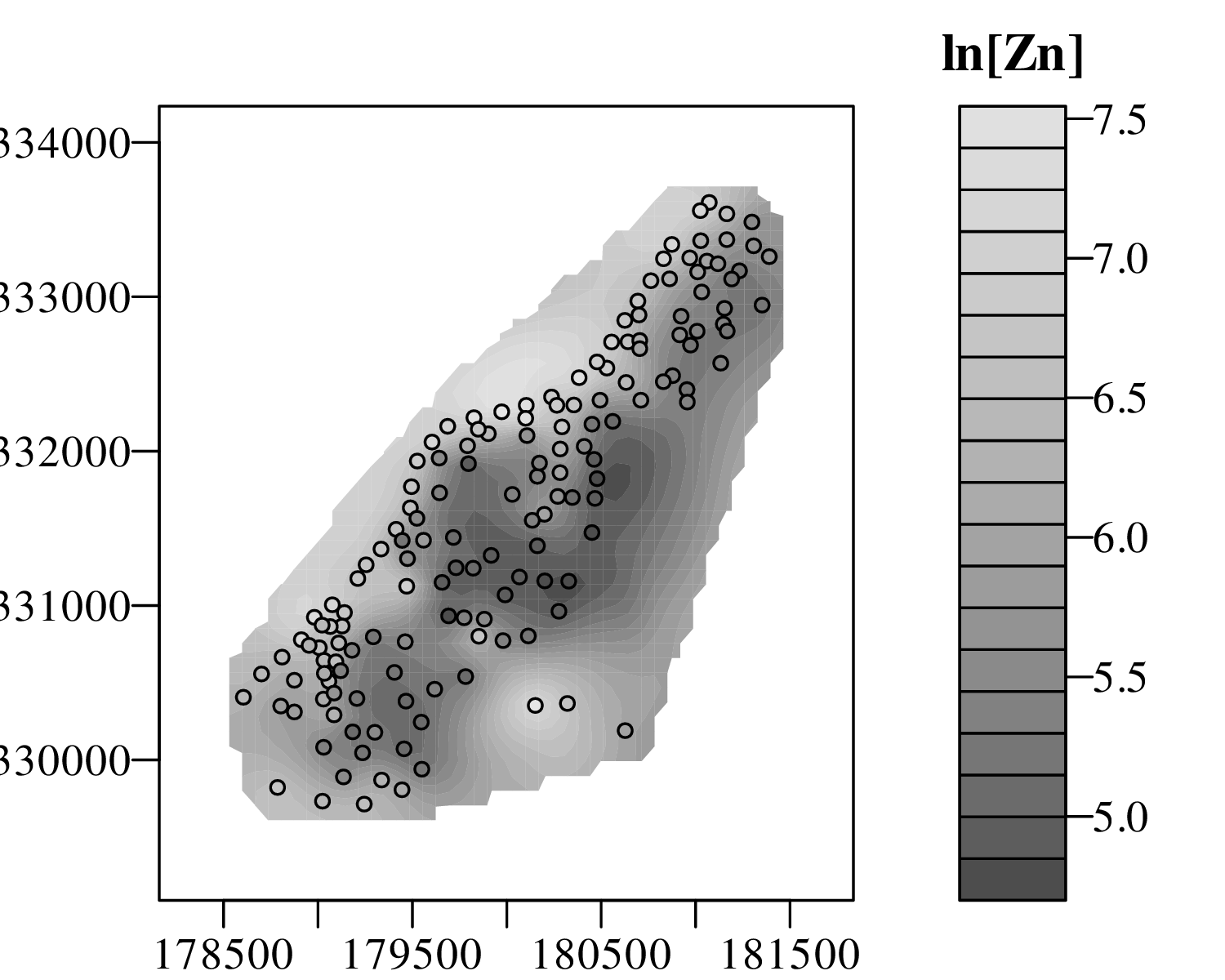

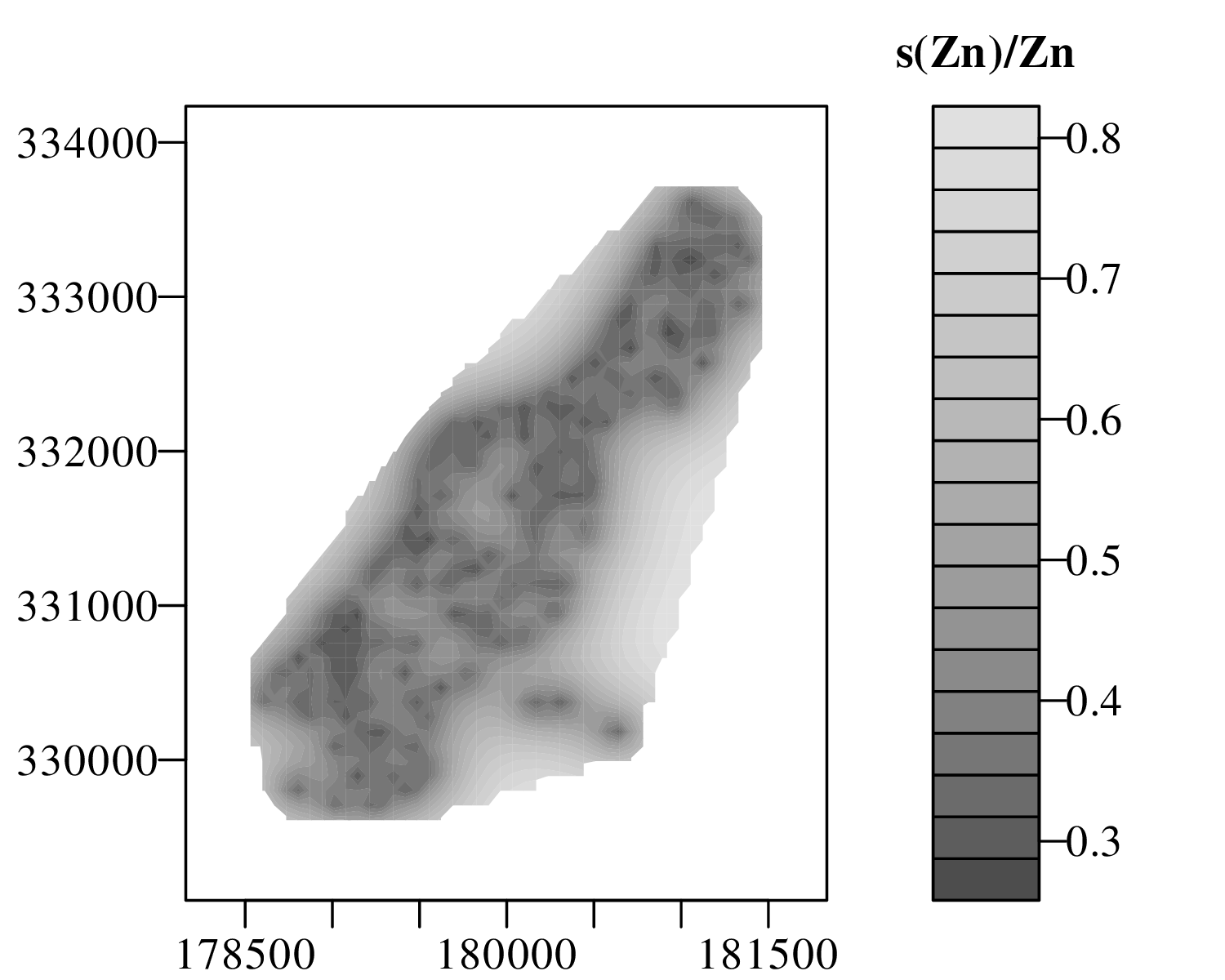

The spatial dimension is crucially important for the Earth Sciences. Geostatistics is a subfield of statistics that specifically deals with this dimension. Chapter 16 provides a basic introduction to kriging interpolation, which is a widely used method to create topographical and compositional maps from a set of sparsely distributed measurements. This chapter concludes the theoretical half of these notes. The remainder of the text (Chapters 17–19) is dedicated to practical exercises.

Chapter 17 provides an extensive introduction to the R programming language. It comprises 16 sections, one for each preceding chapter, and reproduces most of the examples that were covered in the first half of the text. Fortunately, several statistical procedures that are quite complicated to do manually, turn out to be really simple in R. For example, the binomial hypothesis test of Section 5.2 involves six different manual steps, or one simple R command called binom.test. And the Poisson test of Section 6.3 is implemented in an equally simple function called poisson.test.

Despite this simplicity, learning R does present the reader with a steep learning curve. The only way to climb this curve is to practice. One way to do so is to solve the exercises in Chapter 18. Like the R tutorials of Chapter 17, these exercises are also grouped in 16 sections, one for each of the 16 theory chapters. Most of the exercises can be solved with R, using the functions provided in Chapter 17. Fully worked solutions are provided in Chapter 19, which again comprises 16 sections.

I am grateful to my students Peter Elston, Veerle Troelstra and Shiqi Zheng for pointing out mistakes and inconsistencies in earlier versions of these notes. I would also like to thank Jianmei Wang for thoroughly checking Chapters 1–6. The source code of the geostats package and of these notes are provided on GitHub at https://github.com/pvermees/geostats/. Should you find any remaining mistakes in the text or bugs in the software, then you can correct them there and issue a pull request. Or alternatively, you can simply drop me an email. Thanks in advance for your help!

Pieter Vermeesch

University College London

November 2025

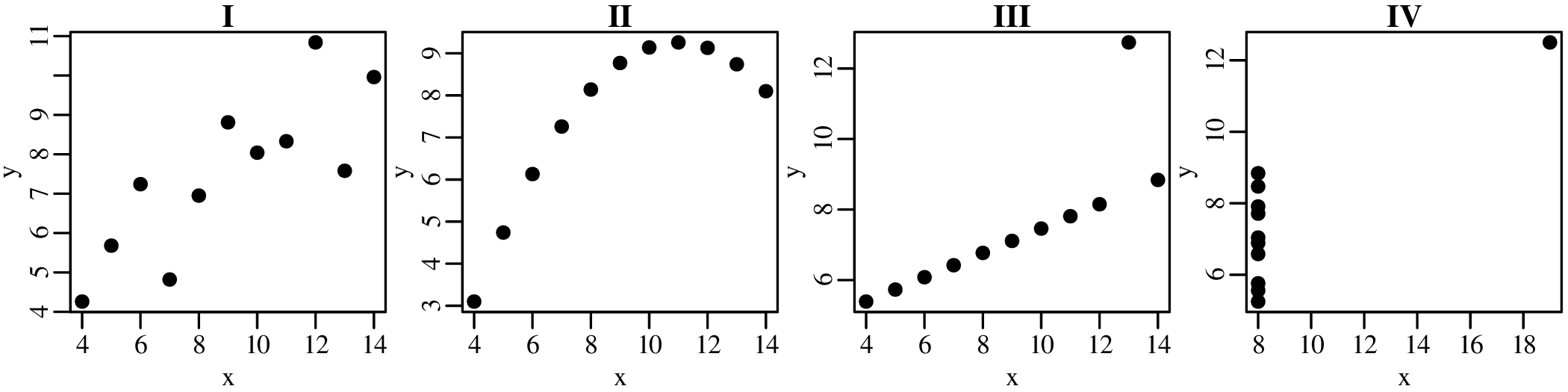

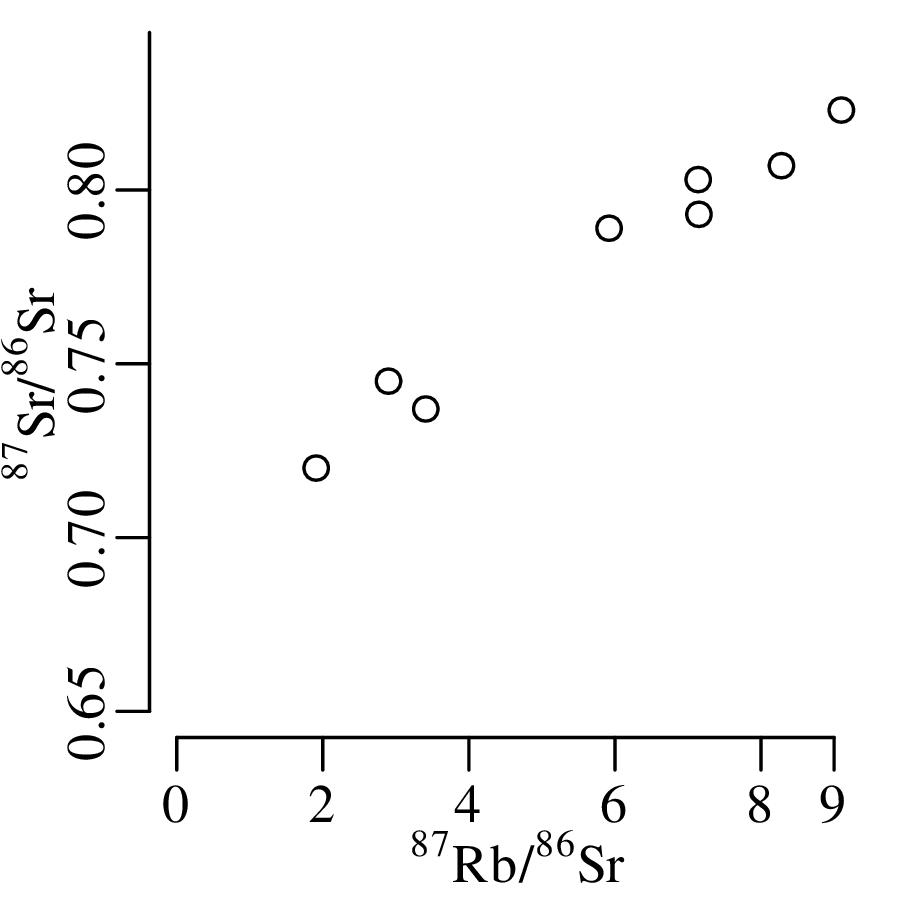

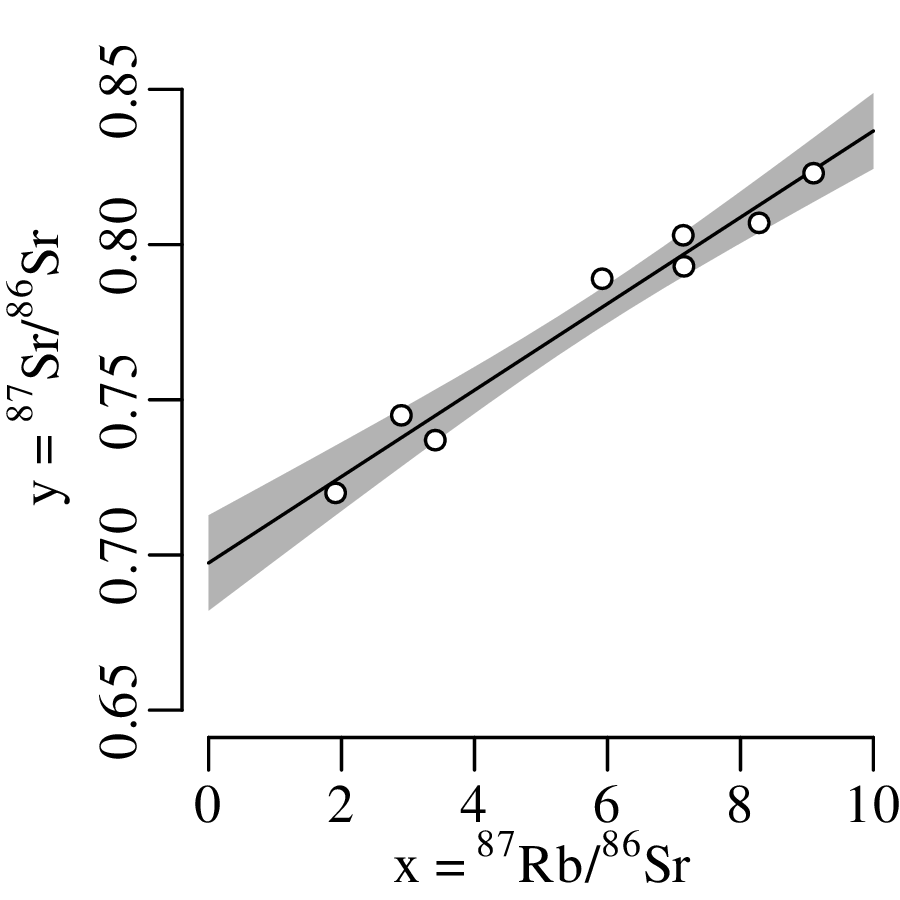

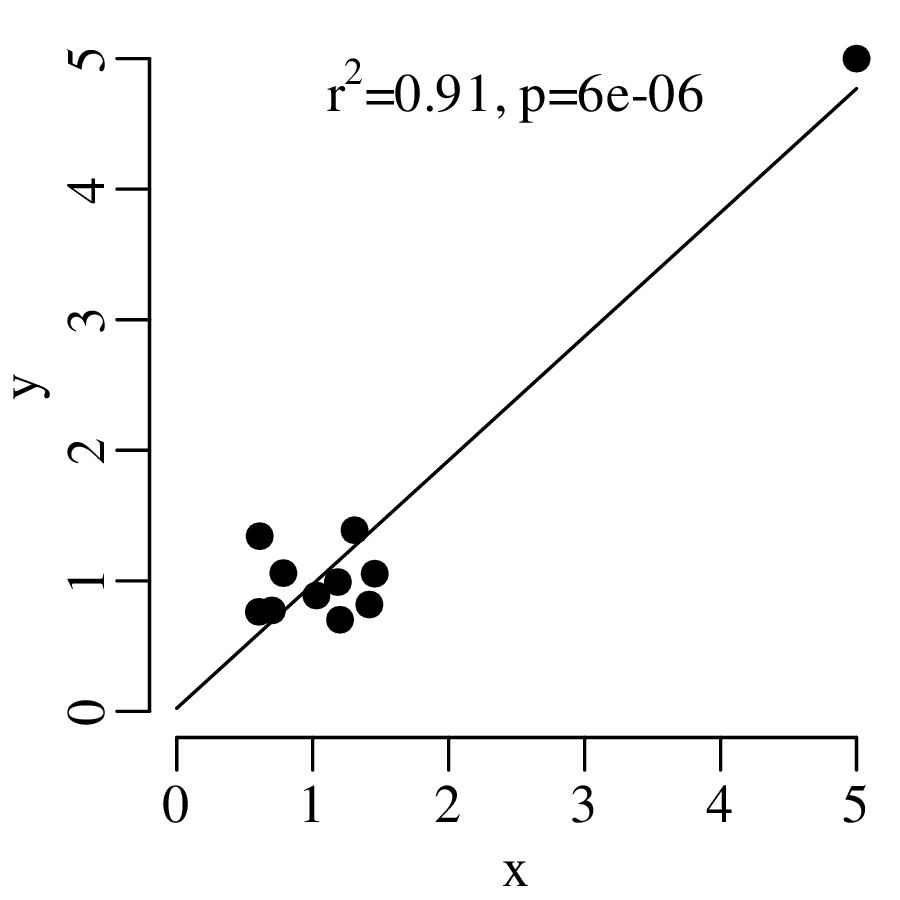

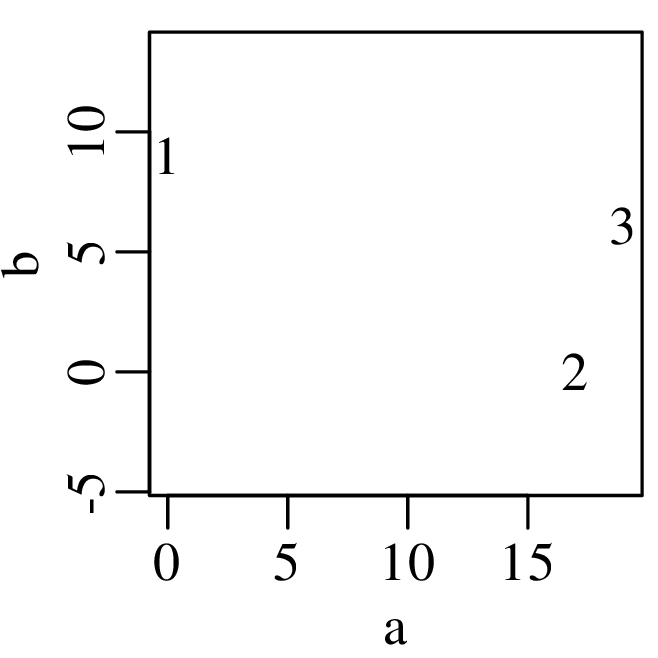

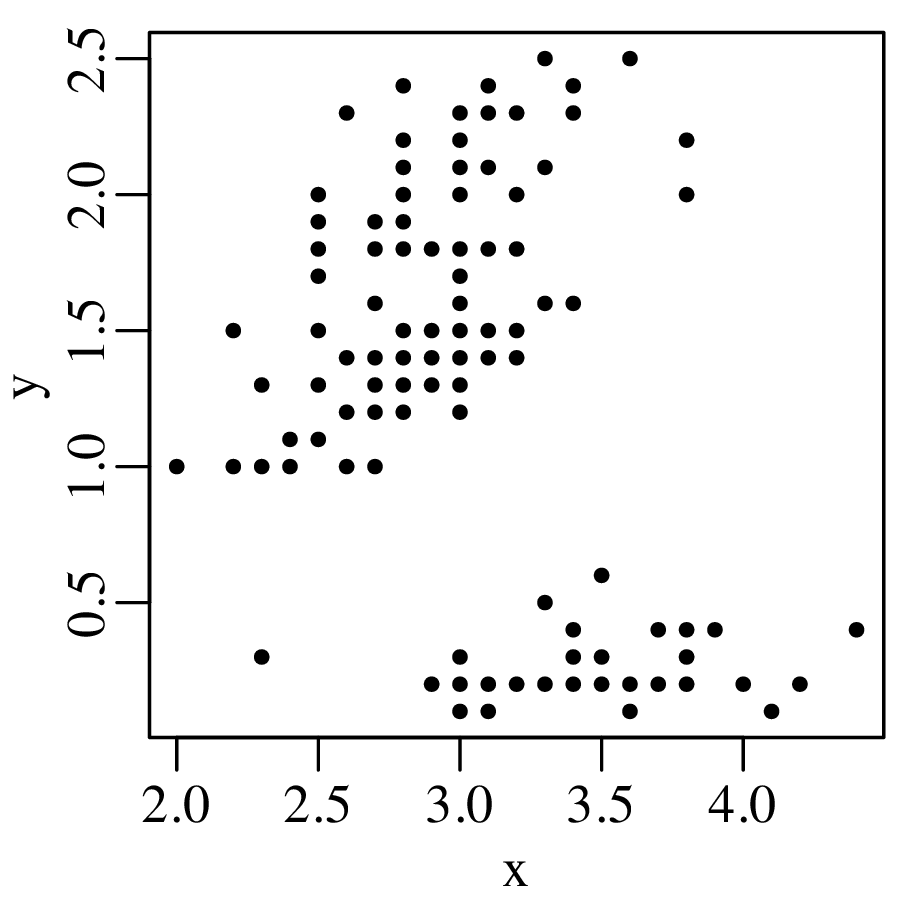

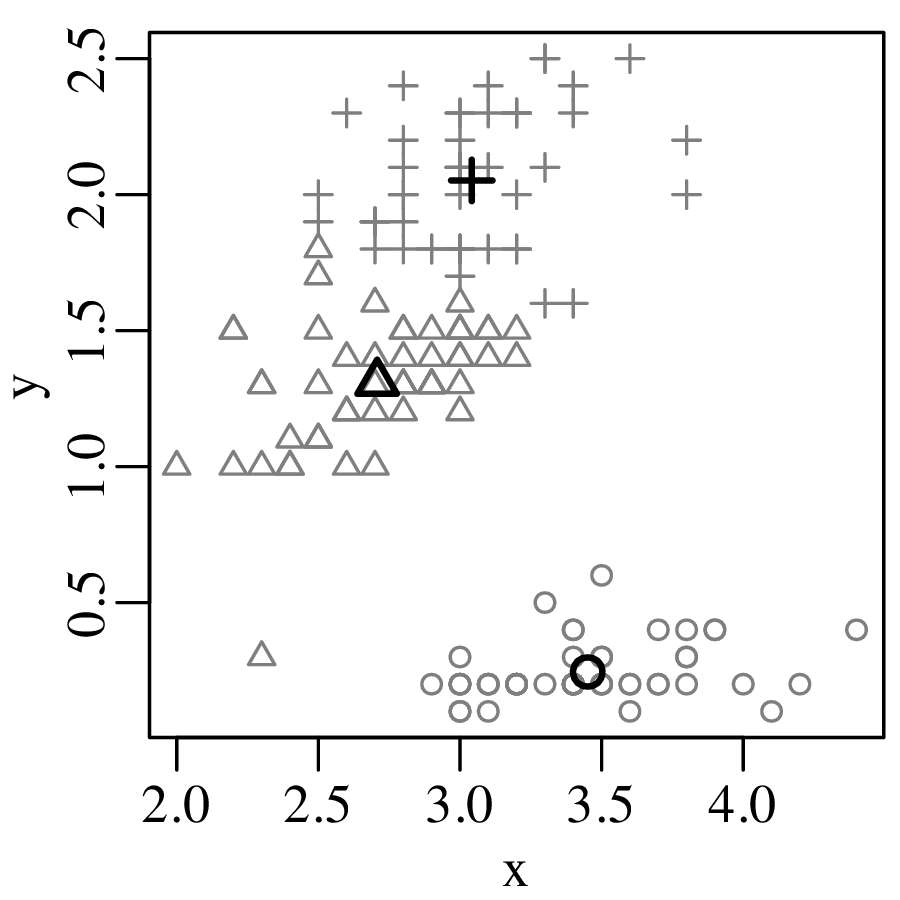

A picture says more than a thousand words and nowhere is this more true than in statistics. So before we explore the more quantitative aspects of data analysis, it is useful to visualise the data. Consider, for example, the following four bivariate datasets (Anscombe’s quartet1 ):

| I | II | III | IV | ||||

| x | y | x | y | x | y | x | y |

| 10.0 | 8.04 | 10.0 | 9.14 | 10.0 | 7.46 | 8.0 | 6.58 |

| 8.0 | 6.95 | 8.0 | 8.14 | 8.0 | 6.77 | 8.0 | 5.76 |

| 13.0 | 7.58 | 13.0 | 8.74 | 13.0 | 12.74 | 8.0 | 7.71 |

| 9.0 | 8.81 | 9.0 | 8.77 | 9.0 | 7.11 | 8.0 | 8.84 |

| 11.0 | 8.33 | 11.0 | 9.26 | 11.0 | 7.81 | 8.0 | 8.47 |

| 14.0 | 9.96 | 14.0 | 8.10 | 14.0 | 8.84 | 8.0 | 7.04 |

| 6.0 | 7.24 | 6.0 | 6.13 | 6.0 | 6.08 | 8.0 | 5.25 |

| 4.0 | 4.26 | 4.0 | 3.10 | 4.0 | 5.39 | 19.0 | 12.50 |

| 12.0 | 10.84 | 12.0 | 9.13 | 12.0 | 8.15 | 8.0 | 5.56 |

| 7.0 | 4.82 | 7.0 | 7.26 | 7.0 | 6.42 | 8.0 | 7.91 |

| 5.0 | 5.68 | 5.0 | 4.74 | 5.0 | 5.73 | 8.0 | 6.89 |

For all four datasets (I – IV):

the mean (see Chapter 3) of x is 9

the variance (see Chapter 3) of x is 11

the mean of y is 7.50

the variance of y is 4.125

the correlation coefficient (see Chapter 10) between x and y is 0.816

the best fit line (see Chapter 10) is given by y = 3.00 + 0.500x

So at the summary level, it would appear that all four datasets are identical. However, when we visualise the data as bivariate scatter plots, they turn out to be very different:

Consider the following data table, which reports six different properties of twenty fictitious river catchments:

| # | lithology | age | springs | pH | Ca/Mg | vegetation |

| 1 | basalt | Cz | 0 | 6.2 | 0.35 | 5.8 |

| 2 | granite | Mz | 1 | 4.4 | 11.00 | 28 |

| 3 | basalt | Mz | 0 | 5.6 | 6.00 | 12 |

| 4 | sandstone | Cz | 6 | 5.2 | 1.80 | 27 |

| 5 | shale | pC | 3 | 4.5 | 2.30 | 40 |

| 6 | basalt | Mz | 1 | 5.4 | 0.59 | 12 |

| 7 | shale | Pz | 2 | 4.8 | 8.40 | 3.8 |

| 8 | basalt | Pz | 0 | 5.9 | 2.90 | 6.3 |

| 9 | shale | Mz | 1 | 3.8 | 5.90 | 17 |

| 10 | shale | Mz | 2 | 4.0 | 2.10 | 16 |

| 11 | sandstone | Pz | 4 | 5.0 | 1.20 | 95 |

| 12 | shale | pC | 2 | 4.1 | 2.10 | 94 |

| 13 | sandstone | Mz | 4 | 5.2 | 1.10 | 92 |

| 14 | basalt | Cz | 1 | 5.5 | 1.60 | 88 |

| 15 | sandstone | Pz | 4 | 5.3 | 0.90 | 88 |

| 16 | granite | Pz | 0 | 4.6 | 1.70 | 70 |

| 17 | basalt | Mz | 0 | 5.7 | 3.40 | 92 |

| 18 | shale | Pz | 1 | 4.6 | 0.53 | 72 |

| 19 | granite | Mz | 0 | 4.6 | 2.20 | 74 |

| 20 | basalt | Cz | 1 | 5.6 | 7.70 | 84 |

The first three columns contain discrete data values and can be visualised as bar charts or histograms.

Discrete (categorical, ordinal and count) data naturally fall into bins and the most obvious way to visualise them is as bar charts (Figure 2.2). Likewise, continuous (Cartesian, Jeffreys, proportions) data can also be collected into bins and plotted as histograms (Figure 2.3). However, this binning exercise poses two practical problems.

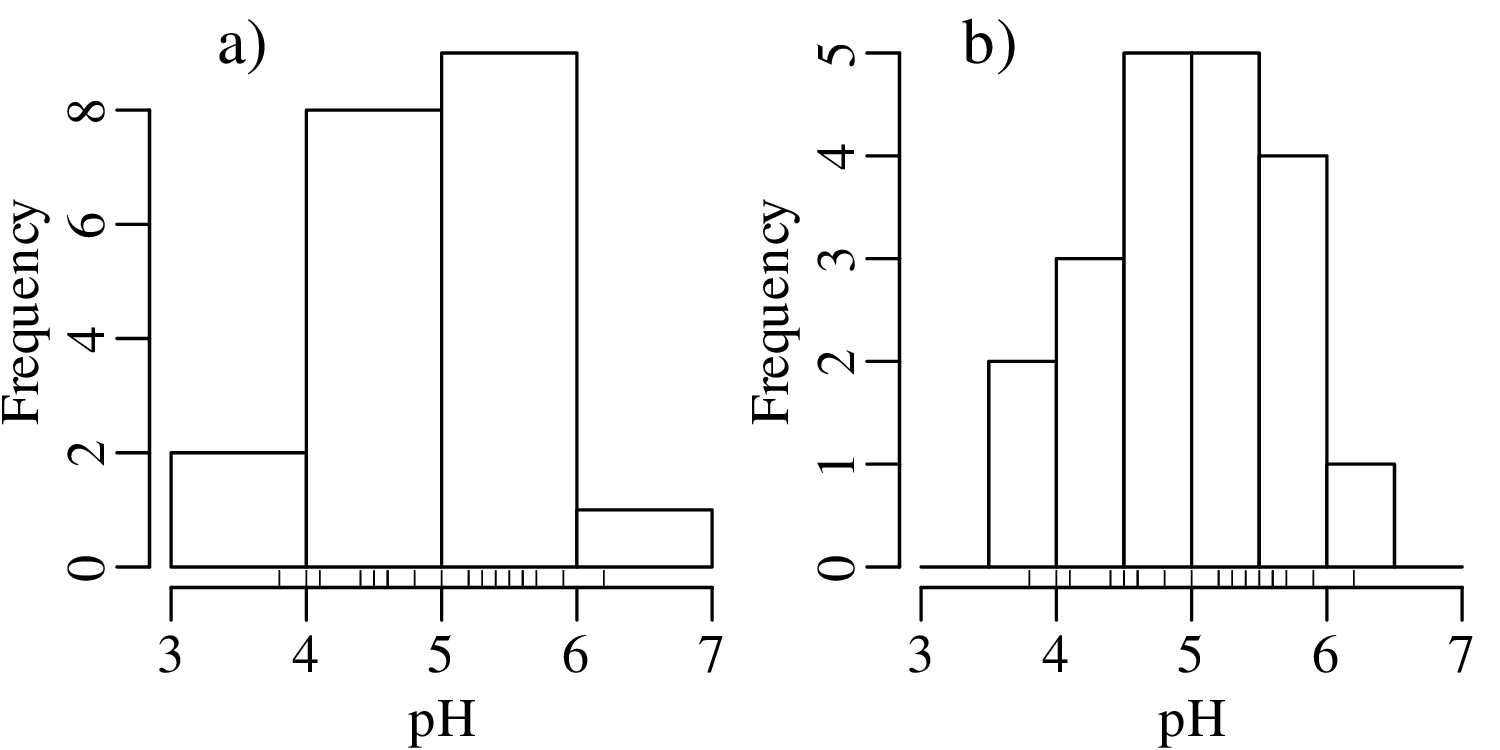

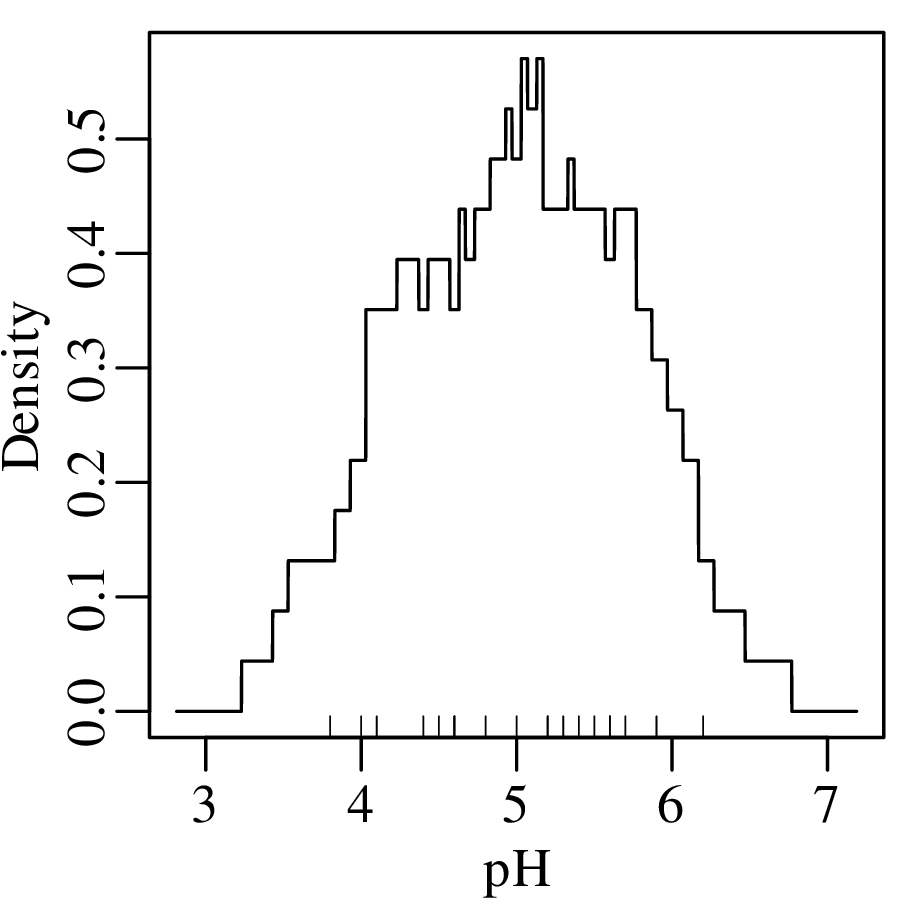

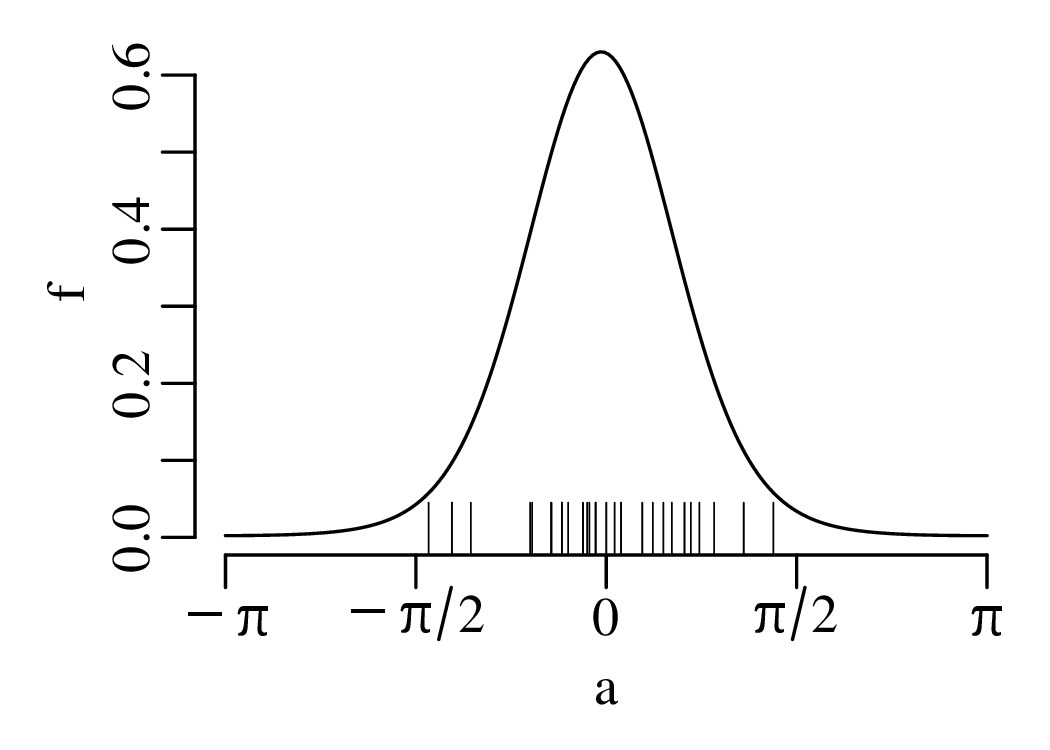

The number of bins strongly affects the appearance of a histogram. Figure 2.4 shows the pH data on two histograms with the individual measurements marked as vertical ticks underneath. This is also known as a rug plot, and allows us to better assess the effect of bin width on the appearance of histograms:

A number of rules of thumb are available to choose the optimal number of bins. For example, Excel uses a simple square root rule:

| (2.1) |

where n is the number of observations (i.e. n = 20 for the pH example) and ⌈∗⌉ means the ‘ceiling’ of ∗, i.e. the value rounded up to the next integer. R uses Sturges’ Rule:

| (2.2) |

however no rule of thumb is optimal in all situations.

Even when the number of bins has been fixed, just shifting them slightly to the left or to the right can have a significant effect on the appearance of the histogram. For example:

There is no optimal solution to the bin placement problem. This reflects a limitation of histograms as a way to present continuous data.

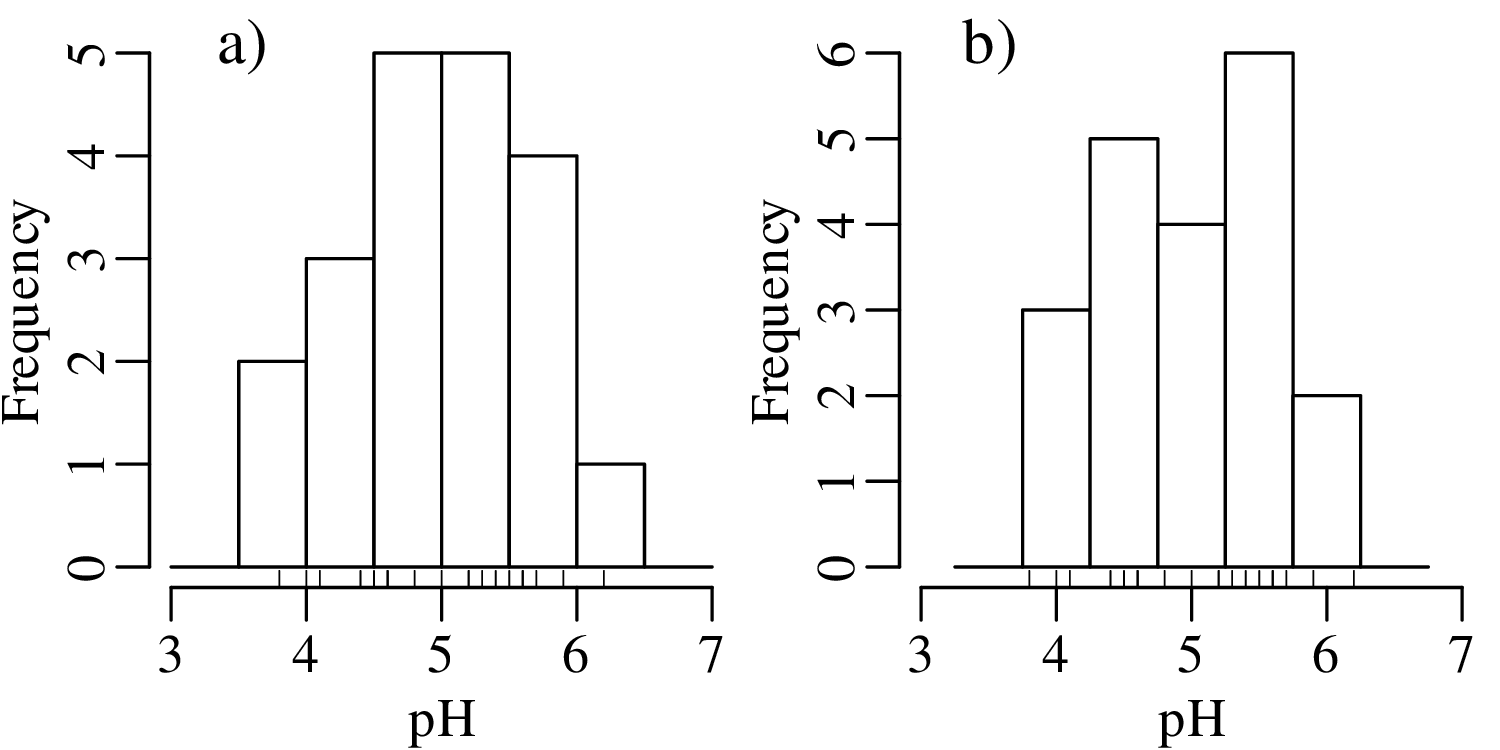

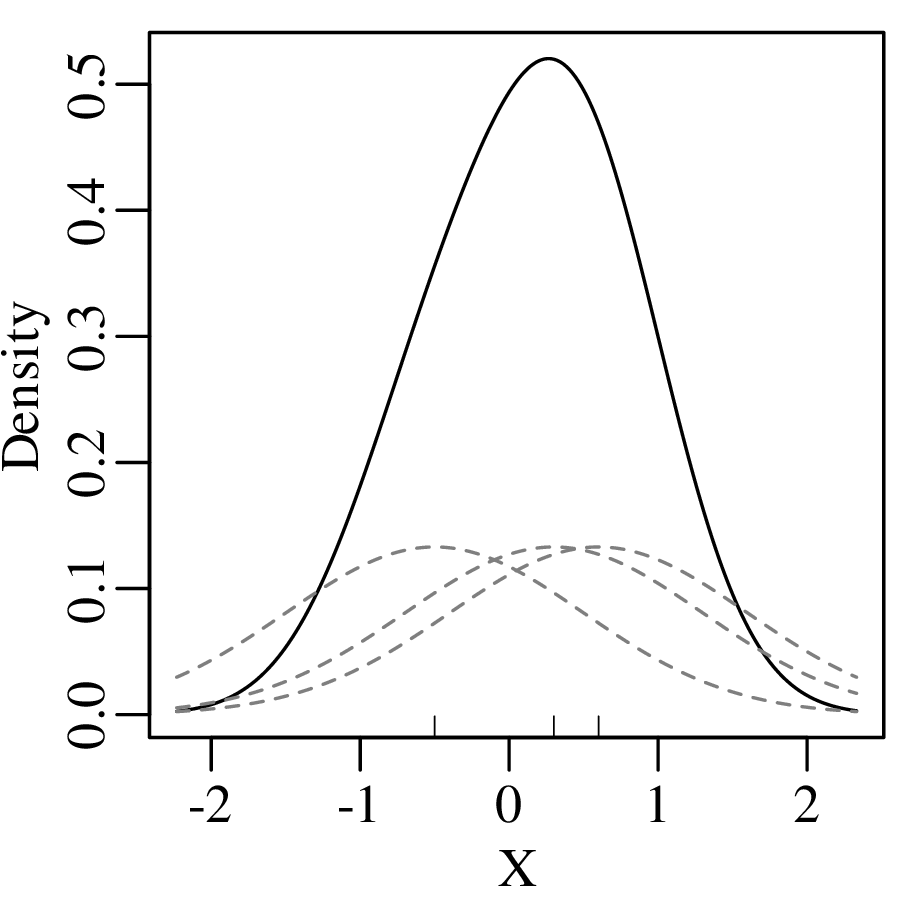

To overcome the bin placement problem, let us explore a variant of the ordinary histogram that is constructed as follows:

Normalising the area under the resulting curve produces a so-called Kernel Density Estimate (KDE). The mathematical definition of this function is:

| (2.3) |

where xi is the ith measurement (out of n), h is the ‘bandwidth’ of the kernel density estimator (h = 1 in Figure 2.6), and K(u) is the ‘kernel’ function. For the rectangular kernel:

| (2.4) |

where ‘|∗|’ stands for “the absolute value of ∗”. Applying this method to the pH data:

Instead of a rectangular kernel, we could also use triangles to construct the KDE curve, or any other (symmetric) function. One popular choice is the Gaussian function:

| (2.5) |

which produces a continuous KDE function:

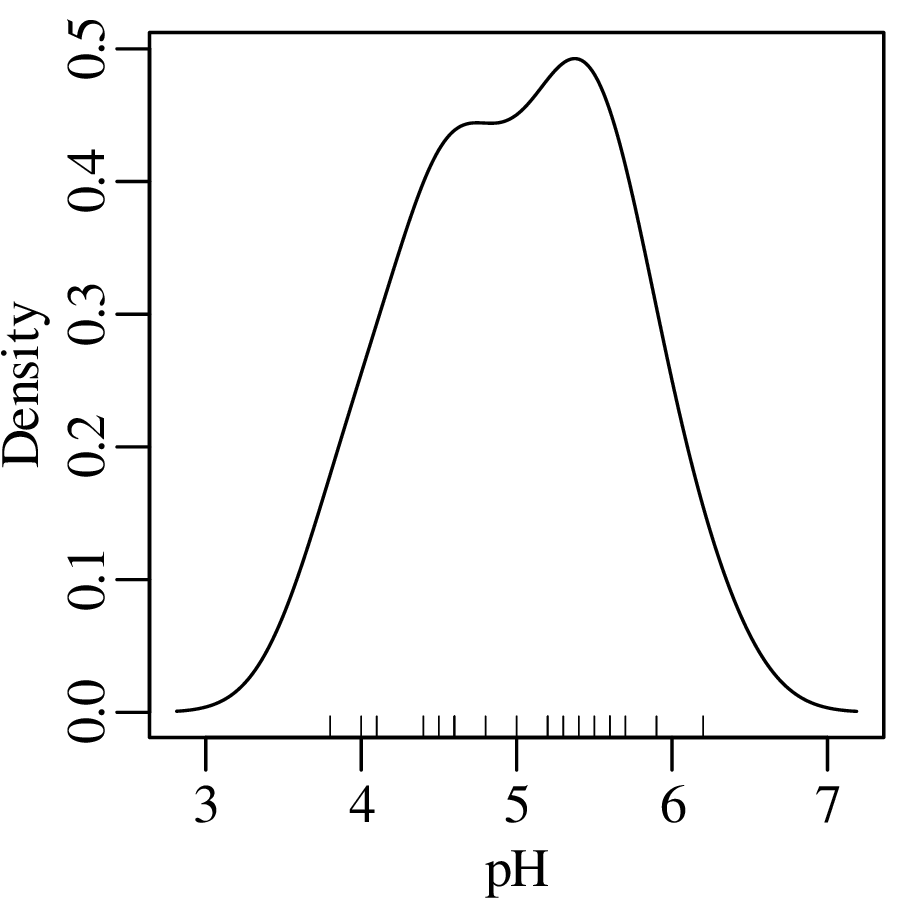

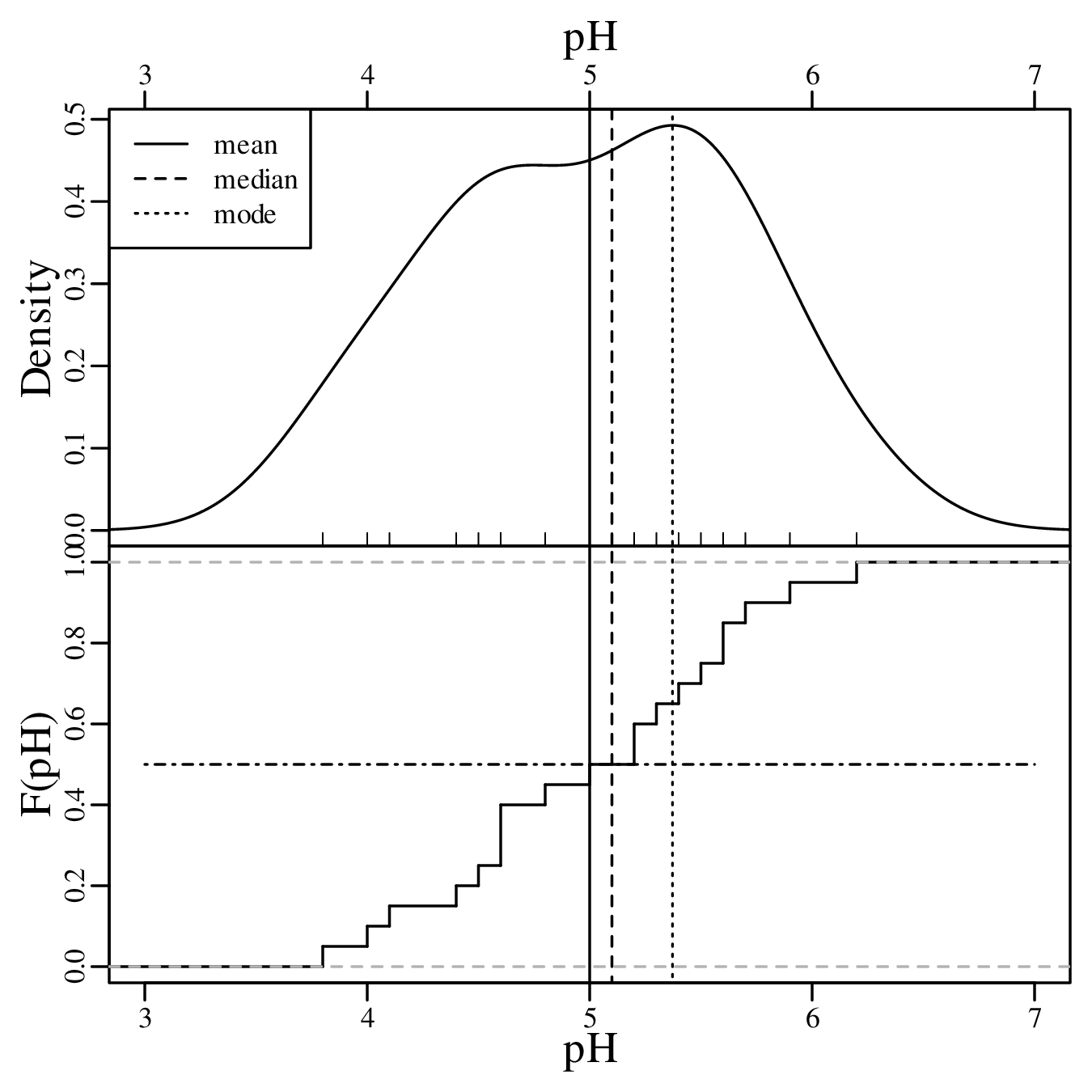

Using the Gaussian kernel to plot the pH data:

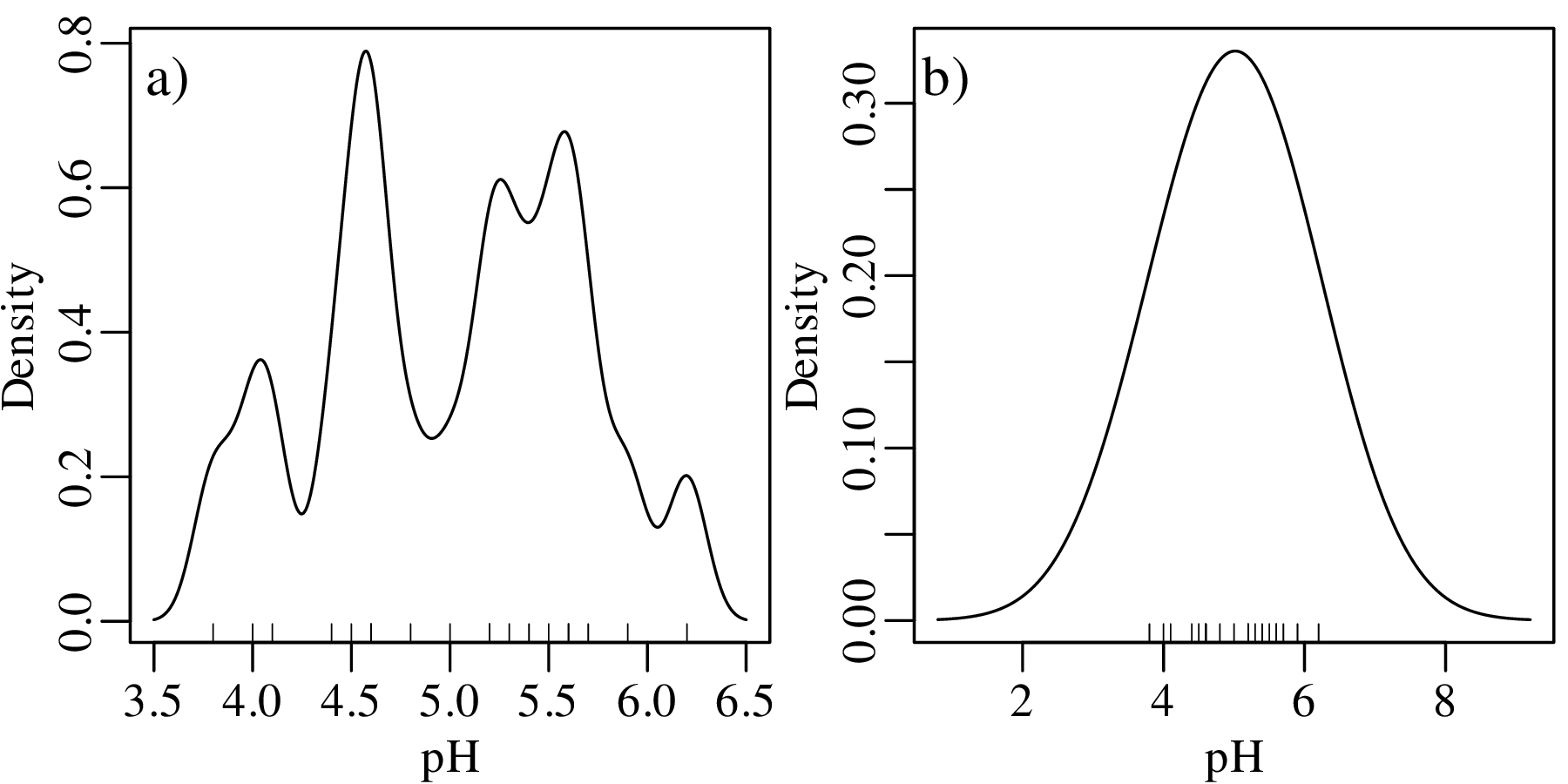

Although kernel density estimation solves the bin placement problem, it is not entirely free of design decisions. The bandwidth h of a KDE fulfils a similar role as the bin width of a histogram. Changes in h affect the smoothness of the KDE curve:

Bandwidth selection is a similar problem to bin width selection. A deeper discussion of this problem falls outside the scope of text. Suffice it to say that most statistical software (including R) use equivalent rules of thumb to Sturges’ Rule to set the bandwidth. But these values can be easily overruled by the user.

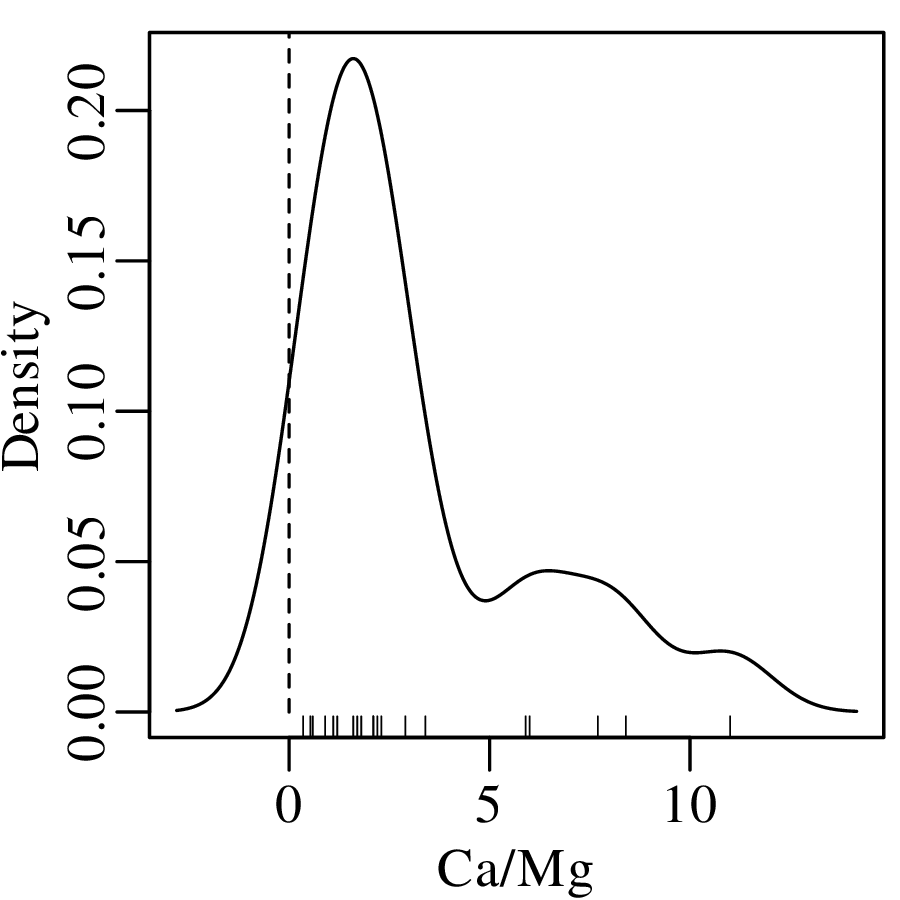

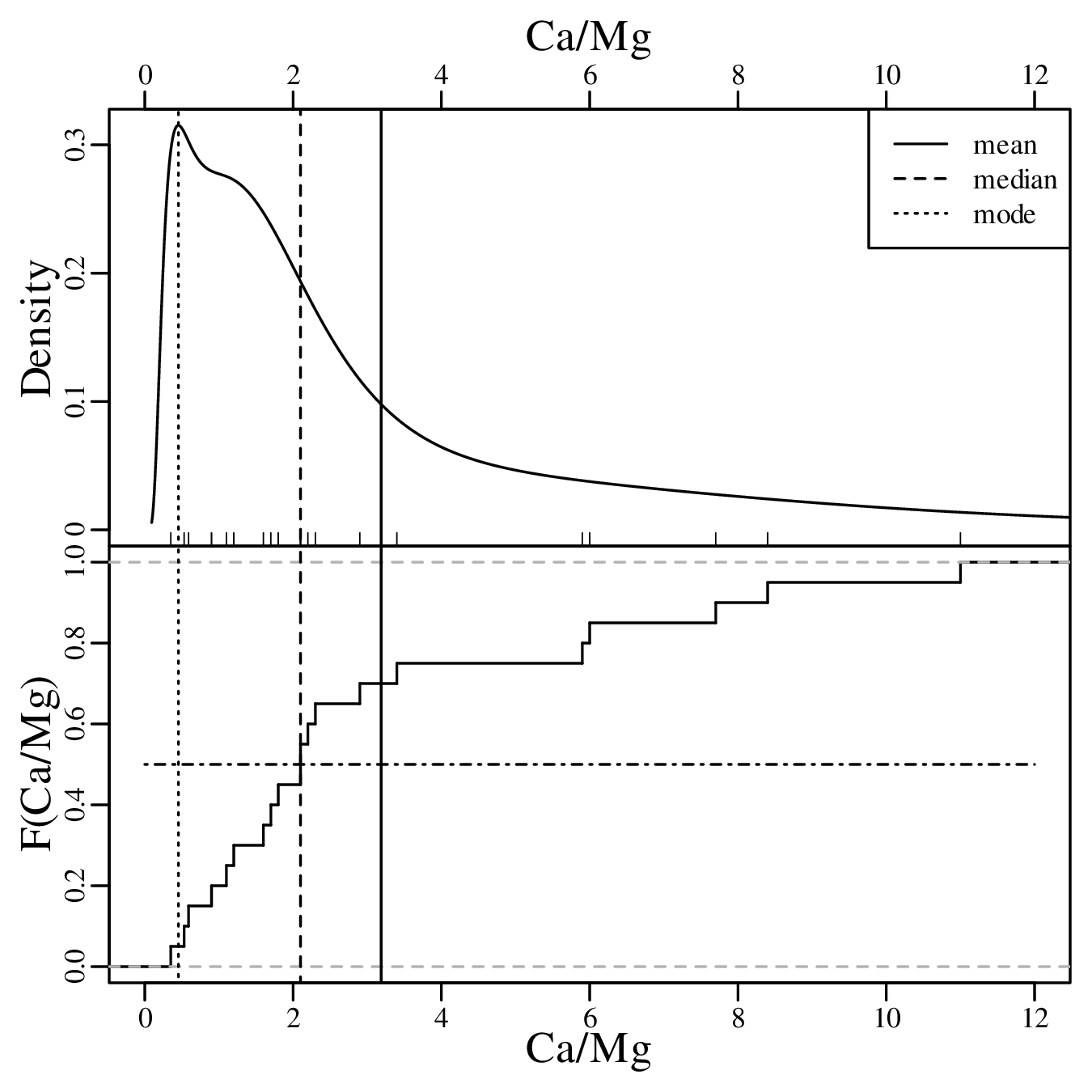

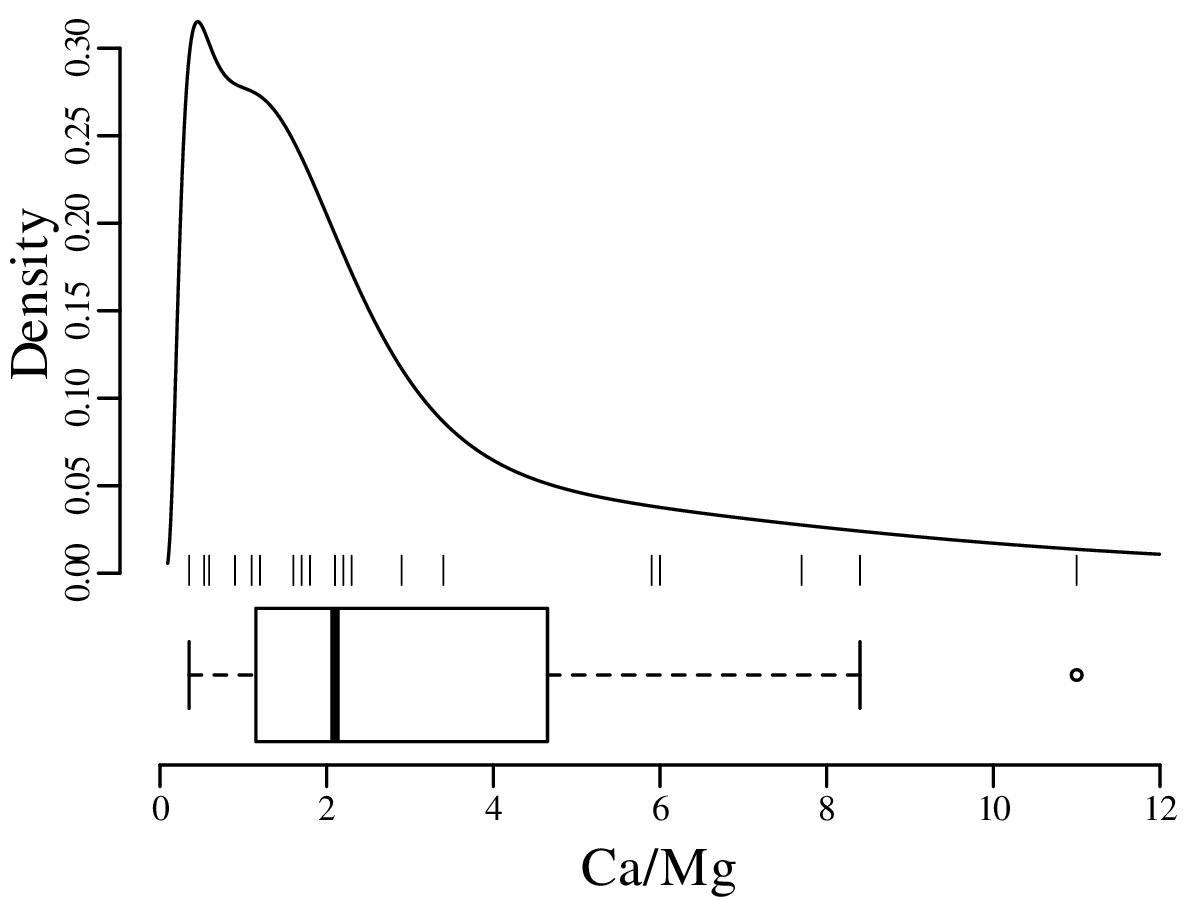

As discussed in Section 2.1, Jeffreys quantities such as the Ca/Mg ratios of Figure 2.3.b exist within an infinite half space between 0 and +∞. However, when we visualise them as a KDE, the left tail of this curve may extend into negative data space, implying that there is a finite chance of observing negative Ca/Mg ratios:

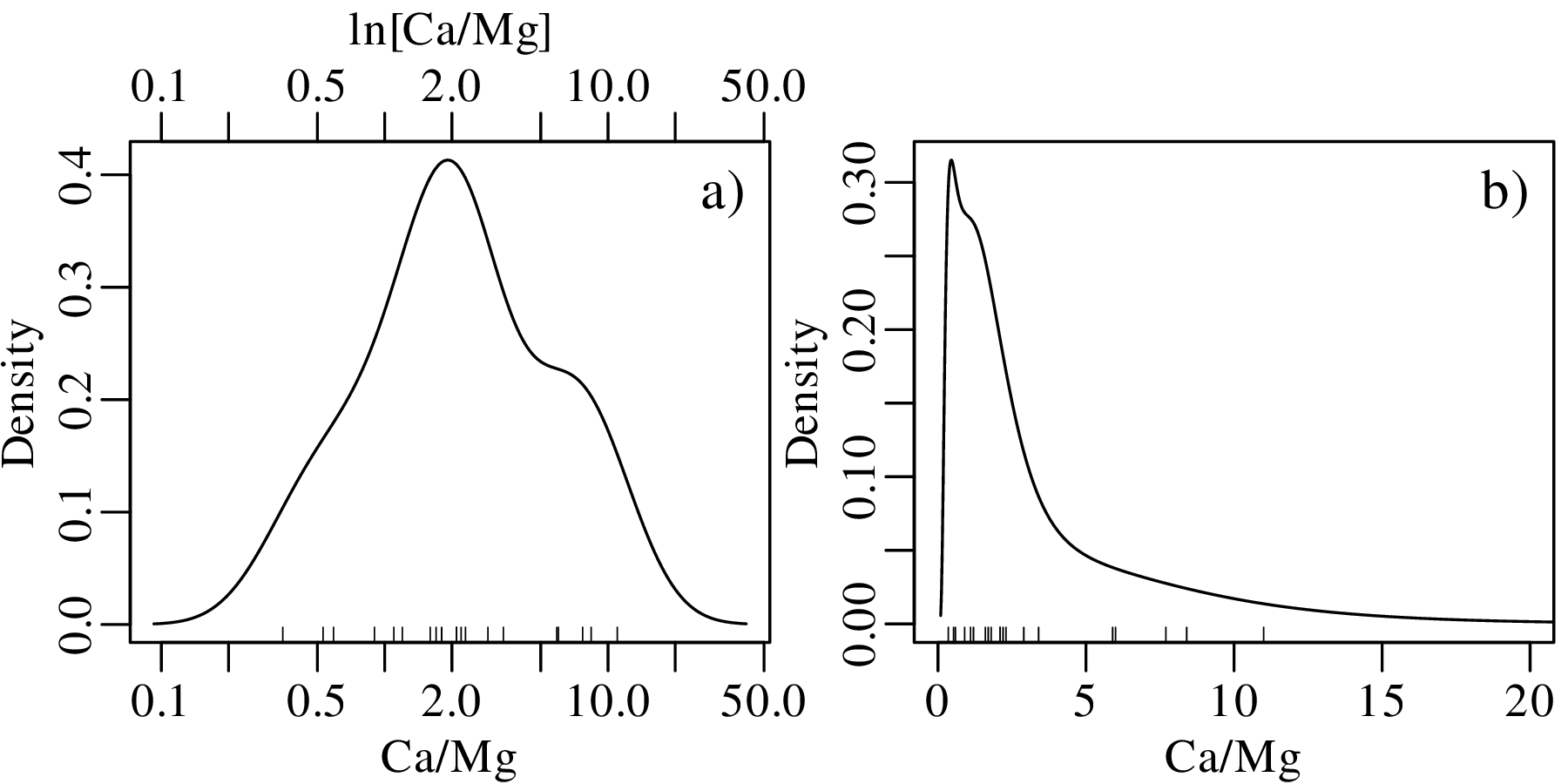

This problem can be solved by transforming the Jeffreys quantities to the entire infinite space of numbers by applying a logarithmic transformation:

| (2.6) |

After constructing the KDE, the results can then be mapped3 back to linear space using an exponential transformation:

| (2.7) |

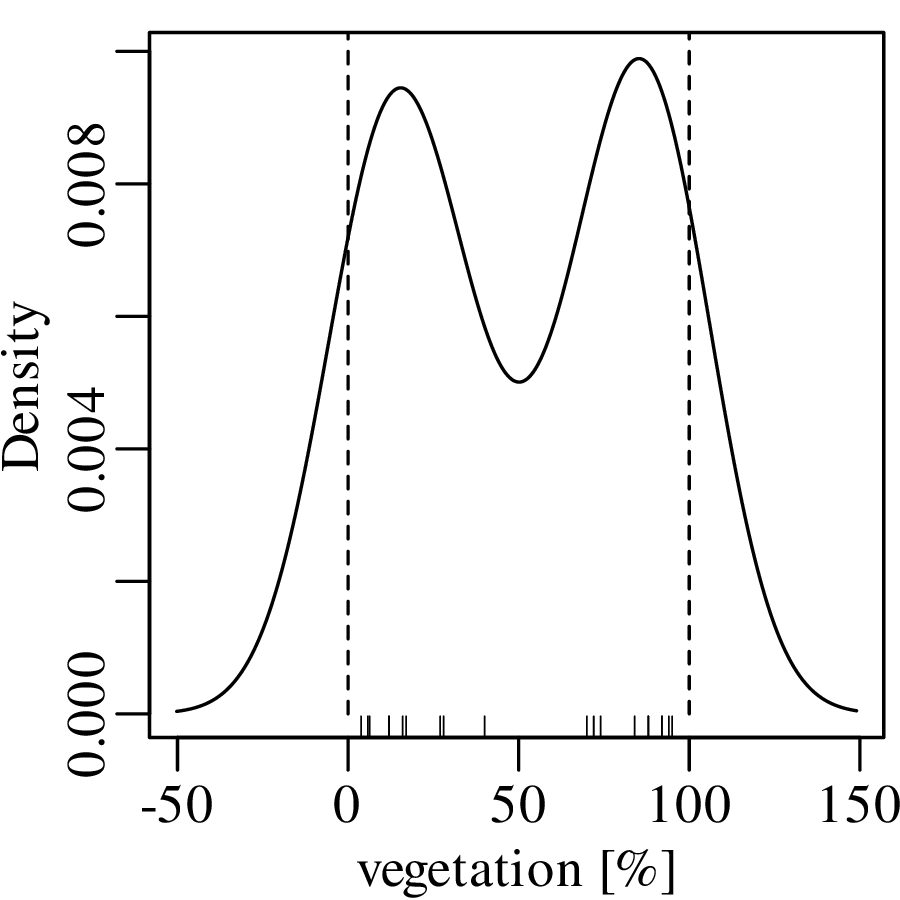

Jeffreys quantities are just one example of constrained measurements. As another example, consider the vegetation data of Figure 2.3.c. Again, the Gaussian KDE of the data plots into physically impossible values of < 0 and > 100%:

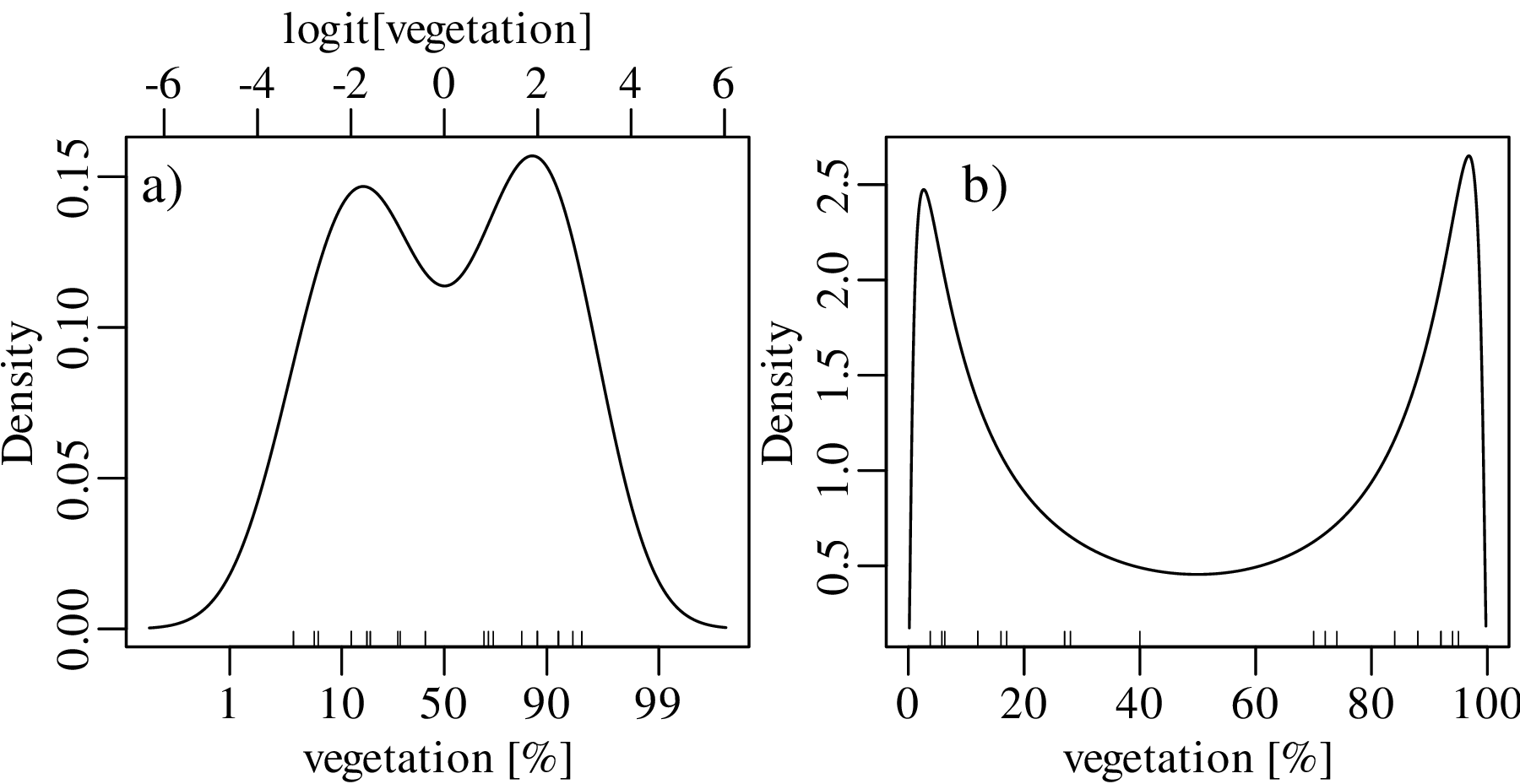

Using a similar approach as before, the dataspace can be opened up from the constraints of the 0 to 1 interval to the entire line of numbers, from −∞ to +∞. For proportions, this is achieved by the logistic transformation:

| (2.8) |

After constructing the density estimate (or carrying out any other numerical manipulation), the results can be mapped back to the 0 to 1 interval with the inverse logit transformation:

| (2.9) |

We will see in Chapter 14 that the logistic transformation is a special case of a general class of logratio transformations that are useful for the analysis of compositional data.

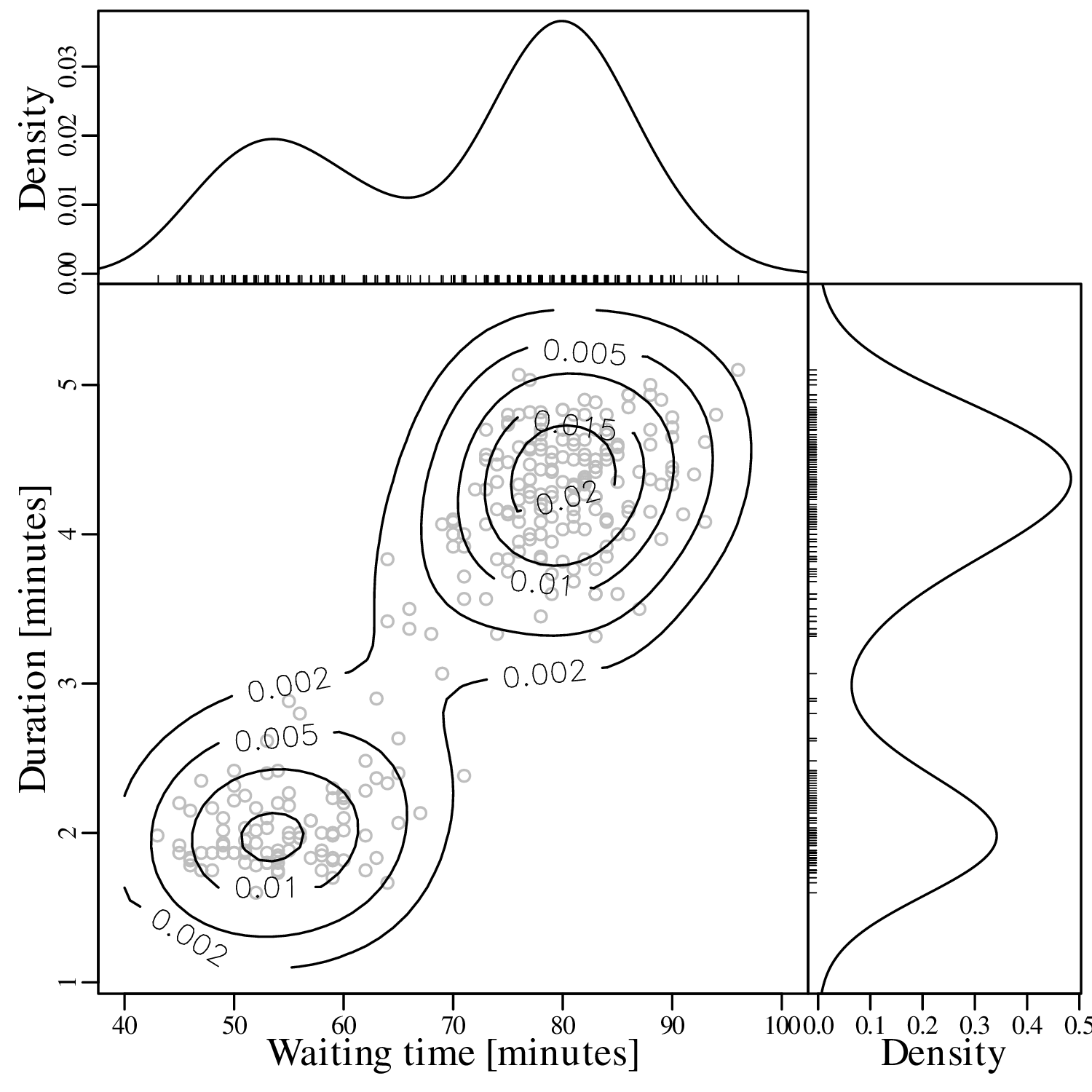

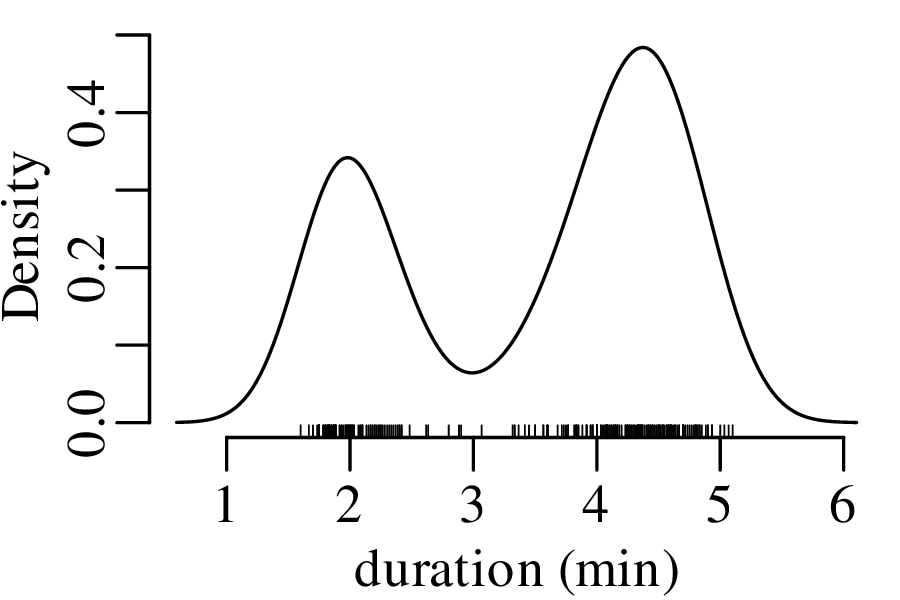

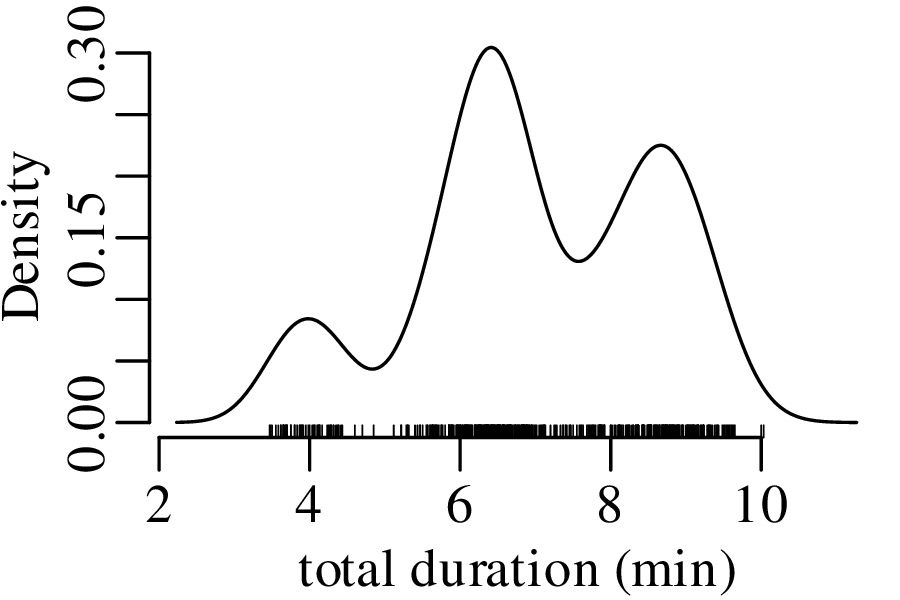

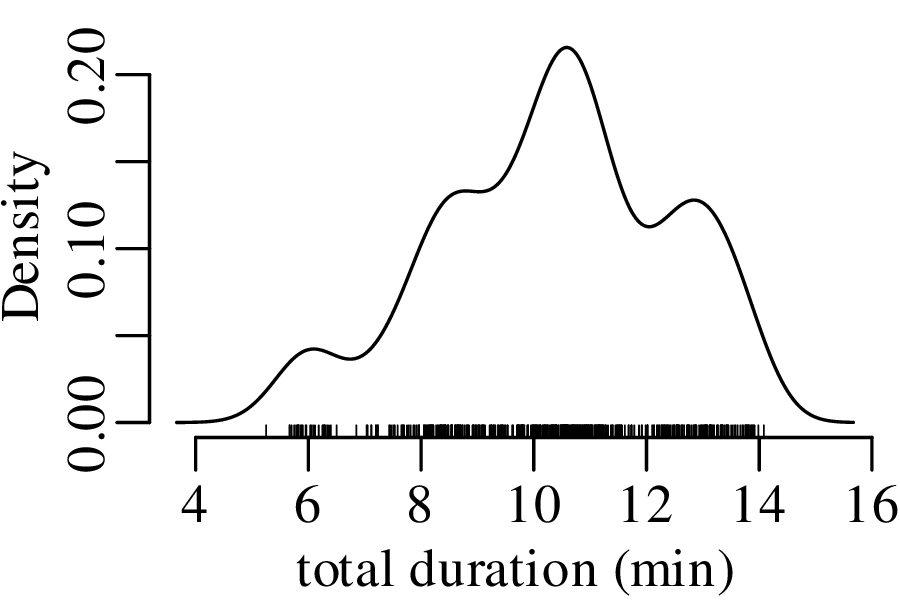

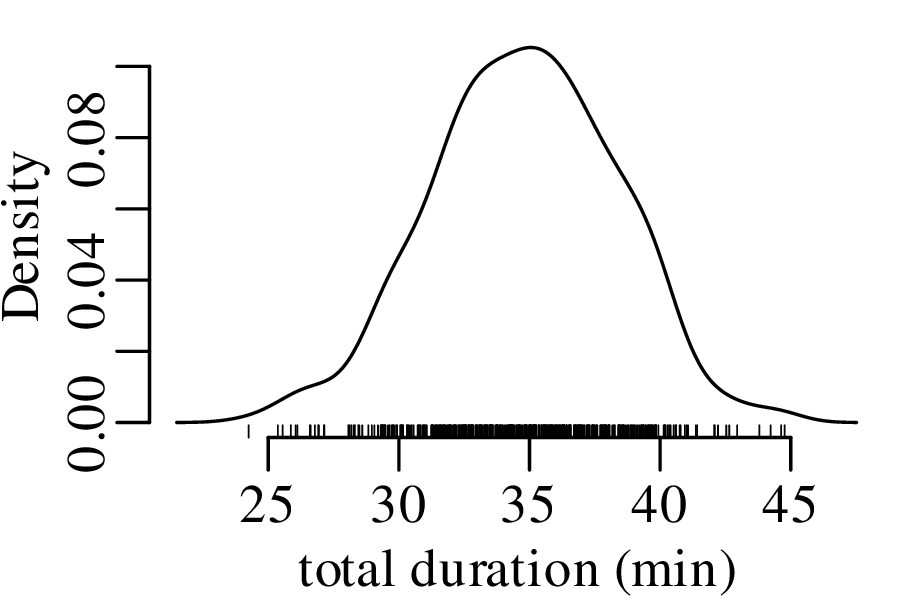

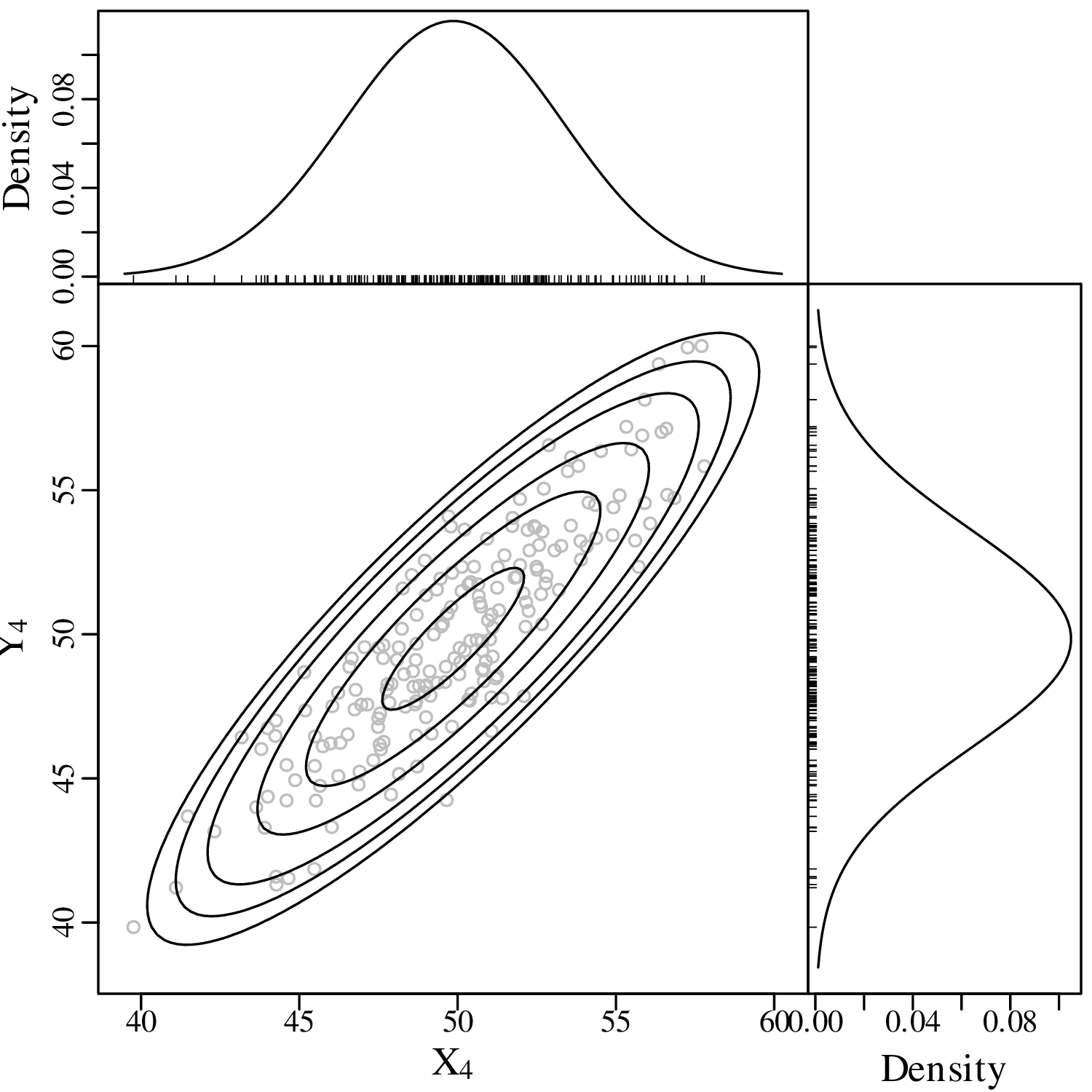

KDEs can be generalised from one to two dimensions. For example, consider a dataset of eruption timings from the Old Faithful geyser in Yellowstone national park (Wyoming, USA):

It is generally not possible to visualise datasets of more than two dimensions in a single graphic. In this case there are two options:

The second strategy is also known as “ordination” and will be discussed in detail in Section 12.1.

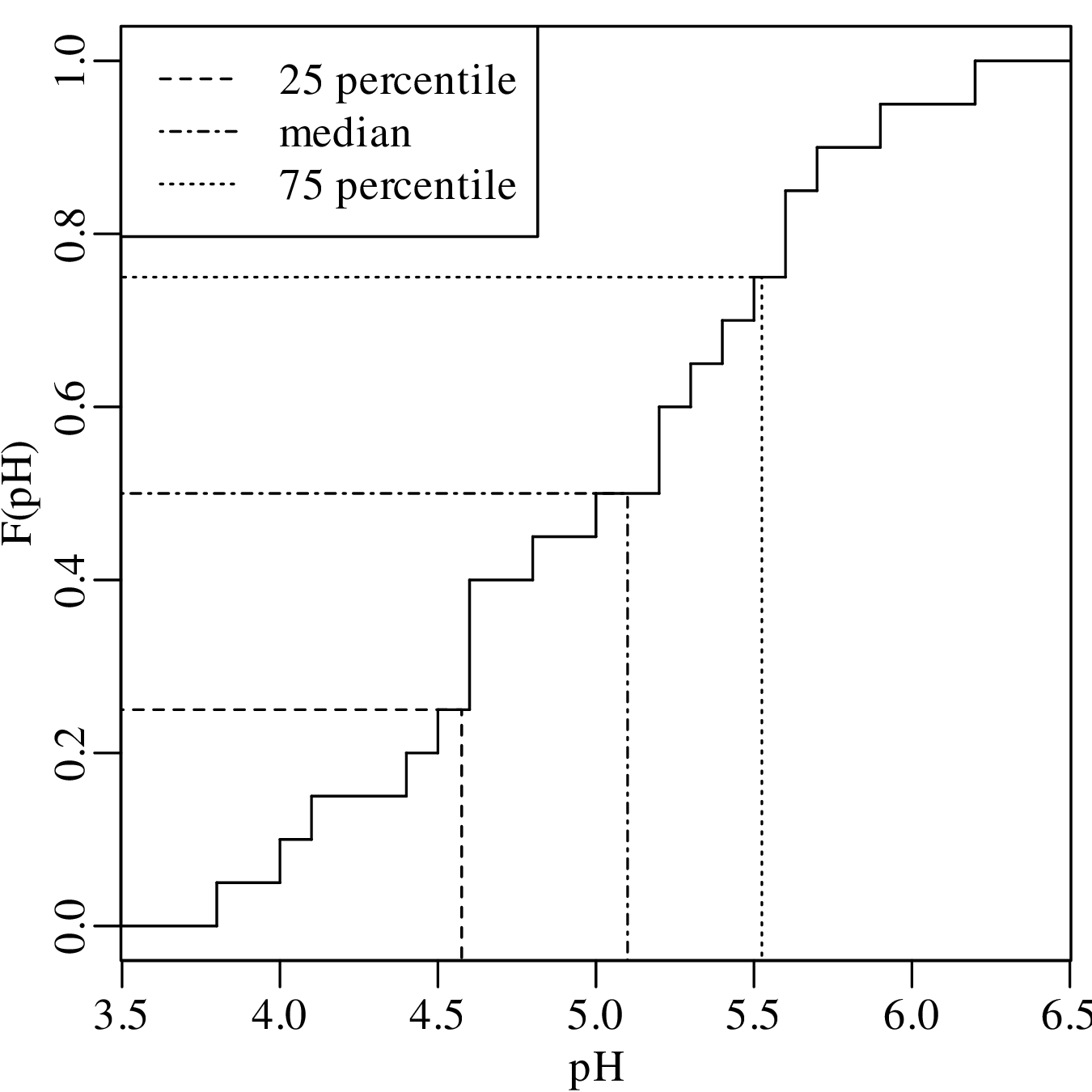

Both histograms and kernel density estimates require the selection of a ‘smoothing parameter’. For the histogram, this is the bin width; for the KDE, it is the bandwidth (h in Equation 2.3). Despite the existence of rules of thumb to automatically choose an appropriate value for the smoothing parameter, there nevertheless is a level of arbitrariness associated with them. The empirical cumulative distribution function (ECDF) is an alternative data visualisation device that does not require smoothing. An ECDF is step function that jumps up by 1∕n at each of n data points. The mathematical formula for this procedure can be written as:

| (2.10) |

where I(∗) = 1 if ∗ is ‘true’ and I(∗) = 0 if ∗ is ‘false’. The y-coordinates of the ECDF are values from 0 to 1 that mark the fraction of the measurements that are less than a particular value. Plotting the pH, Ca/Mg, vegetation and geyser data as ECDFs:

The visual interpretation of ECDFs is different from that of histograms or KDEs. Whereas different clusters of values stand out as ‘peaks’ in a histogram or KDE, they are marked by steep segments of the ECDF. For example, the two peaks in the KDE of the geyser data (Figure 2.15) correspond to two steps in the ECDF (Figure 2.16.d).

After a purely qualitative inspection of the data, we can now move on to a more quantitative description. This chapter will introduce a number of summary statistics to summarise larger datasets using just a few numerical values, including:

Before proceeding with this topic, it is useful to bear in mind that these summary statistics have limitations. The Anscombe quartet of Table 2.1 and Figure 2.1 showed that very different looking datasets can have identical summary statistics. But with this caveat in mind, summary statistics are an essential component of data analysis provided that they are preceded by a visual inspection of the data.

There are many ways to define the ‘average’ value of a multi-value dataset. In this chapter, we will introduce three of these but later chapters will introduce a few more.

| (3.1) |

| (3.2) |

If n is an even number, then the median is the average of the two numbers on either side of the middle. Graphically, the median can be identified as the value that corresponds to the halfway point of the ECDF.

Applying these three concepts to the pH data, the mean is given by:

|

|

The median is obtained by ranking the values in increasing order, and marking the two middle values in bold:

3.8, 4.0, 4.1, 4.4, 4.5, 4.6, 4.6, 4.6, 4.8, 5.0, 5.2, 5.2, 5.3, 5.4, 5.5, 5.6, 5.6, 5.7, 5.9, 6.2

Then the median is the average of these two values (median[x] = 5.1). The most frequently occurring pH value in the dataset is 4.6, but this is a rounded value from a continuous distribution. If instead we use a KDE to identify the mode, then this yields a value of 5.4:

Calculating the same three summary statistics for the Ca/Mg data:

Finally, repeating the exercise one more time for the vegetation and geyser eruption data:

It is rare for all the observations in a dataset to be exactly the same. In most cases they are spread out over a range of values. The amount of spread can be defined in a number of ways, the most common of which are:

| (3.3) |

where is the arithmetic mean (Equation 3.1). The square of the standard deviation (i.e. s[x]2) is also known as the variance.

| (3.4) |

Calculating the standard deviation of the pH data (whose mean is = 5.0):

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 |

| x i | 6.2 | 4.4 | 5.6 | 5.2 | 4.5 | 5.4 | 4.8 | 5.9 | 3.8 | 4.0 | 5.0 | 4.1 | 5.2 | 5.5 | 5.3 | 4.6 | 5.7 | 4.6 | 4.6 | 5.6 |

| (xi −) | 1.2 | -.6 | .6 | .2 | -.5 | .4 | -.2 | .9 | -1.2 | -1.0 | .0 | -.9 | .2 | .5 | .3 | -.4 | .7 | -.4 | -.4 | .6 |

| (xi −)2 | 1.44 | .36 | .36 | .04 | .25 | .16 | .04 | .81 | 1.44 | 1.0 | .0 | .81 | .04 | .25 | .09 | .16 | .49 | .16 | .16 | .36 |

Taking the sum of the last row:

from which we get:

Sorting the pH values in increasing order and recalling that med(x) = 5.1:

| i | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 |

| x i | 3.8 | 4.0 | 4.1 | 4.4 | 4.5 | 4.6 | 4.6 | 4.6 | 4.8 | 5.0 | 5.2 | 5.2 | 5.3 | 5.4 | 5.5 | 5.6 | 5.6 | 5.7 | 5.9 | 6.2 |

| xi − med(x) | -1.3 | -1.1 | -1.0 | -0.7 | -0.6 | -0.5 | -0.5 | -0.5 | -0.3 | -0.1 | 0.1 | 0.1 | 0.2 | 0.3 | 0.4 | 0.5 | 0.5 | 0.6 | 0.8 | 1.1 |

| |xi − med(x)| | 1.3 | 1.1 | 1.0 | 0.7 | 0.6 | 0.5 | 0.5 | 0.5 | 0.3 | 0.1 | 0.1 | 0.1 | 0.2 | 0.3 | 0.4 | 0.5 | 0.5 | 0.6 | 0.8 | 1.1 |

| sorted | 0.1 | 0.1 | 0.1 | 0.2 | 0.3 | 0.3 | 0.4 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.6 | 0.6 | 0.7 | 0.8 | 1.0 | 1.1 | 1.1 | 1.3 |

The MAD is given by the mean of the bold values on the final row of this table, yielding a value of MAD = 0.5.

The 25 and 75 percentiles are obtained by averaging the two pairs of bold faced numbers on the second row of the table. They are 4.55 and 5.55, respectively. Therefore, IQR = 5.55 - 4.55 = 1.00. Showing the same calculation on an ECDF:

The skewness of a distribution is defined as:

| (3.5) |

To assess the meaning of this new summary statistic, let us plot the pH and Ca/Mg datasets alongside the distribution of Covid-19 death rates (in deaths per 100,000 people) in the UK:

A box-and-wisker plot is a compact way to jointly visualise the most important summary statistics in a dataset:

Probability is a numerical description of how likely it is for an event to occur or how likely it is for a proposition to be true. In the context of a random experiment, in which all outcomes are equally likely, the probability of an outcome A can be defined as:

| (4.1) |

For example, the probability of tossing an unbiased coin and observing a head (H) is

The probability of tossing the same coin three times and obtaining two heads and one tail (T) is:

| (4.2) |

Similarly, the probability of throwing two fair dice and obtaining a two (⚁ ) and a six (⚅ ) is:

| (4.3) |

The multiplicative rule of probability states that the probability of two combined independent1 experiments is given by the product of their respective probabilities. Thus, if one were to carry out a coin tossing and a dice throwing experiment, then the probability of obtaining two heads and one tail for the first experiment and throwing a two and a six in the second experiment is:

where ∩ stands for ‘and’ in the sense of “the intersection of two sets of outcomes”. Similarly, the likelihood of throwing an unbiased coin twice and obtaining two heads can be calculated as:

The additive rule of probability states that the probability of observing either of two outcomes A and B is given by:

| (4.4) |

For example the probability of observing two heads for the first experiment or throwing a two and a six in the second experiment is:

For two mutually exclusive experiments, the third term in Equation 4.4 disappears. For example, the probability of obtaining two heads and one tail or throwing three heads is:

A permutation is an ordered arrangement of objects. These objects can be selected in one of two ways:

| (4.5) |

| (4.6) |

possible sequences, where ‘!’ is the factorial operator.

Let us apply these two formulas to a classic statistical problem: “what is the probability that at least two students in a classroom of k celebrate their birthdays on the same day?”. The solution is as follows.

If k = 23, then P(> 1 overlapping birthdays) = 0.507. In other words, there is a greater than 50% chance that at least two students will share the same birthday in a classroom of 23.

Having considered coins, dice and lottery balls, we will now (literally!) deal with a fourth archetypal source of statistical experiments, namely playing cards. Equation 4.6 showed that there are 52!∕49! = 132600 unique possible ways to select three cards from a deck. Suppose that we have drawn the following cards:

then there are 3! = 6 ways to order these cards:

Suppose that we don’t care in which order the objects (cards) appear. How many different unordered samples (hands) are possible?

(# ordered samples) = (# unordered samples) × (# ways to order the samples)

There are n!∕(n−k)! ways to select k objects from a collection of n, and there are k! ways to order these k objects. Therefore

The formula on the right hand side of this equation gives the number of combinations of k elements

among a collection of n. This formula is also known as the binomial coefficient and is often written

as (pronounce “n choose k”):

| (4.7) |

Applying this formula to the card dealing example, the number of ways to draw three cards from a deck of 52 is

Revisiting the two examples at the start of this chapter, the number of ways to arrange two heads among three coins is

which is the numerator of Equation 4.2; and the number of combinations of one ⚀ and one ⚅ is

which is the numerator of Equation 4.3.

So far we have assumed that all experiments (coin tosses, throws of a dice) were done independently, so that the outcome of one experiment did not affect that of the other. However this is not always the case in geology. Sometimes one event depends on another one. We can capture this phenomenon with the following definition:

| (4.8) |

Let P(A) be the probability that a sedimentary deposit contains ammonite fossils. And let P(B)

be the proportion of our field area that is covered by sedimentary rocks of Bajocian age (170.3 –

168.3 Ma). Then P(A|B) is the probability that a given Bajocian deposit contains ammonite

fossils. Conversely, P(B|A) is the probability that an ammonite fossil came from a Bajocian

deposit.

The multiplication law states that:

| (4.9) |

Suppose that 70% of our field area is covered by Bajocian deposits (P(B) = 0.7), and that 20% of

those Bajocian deposits contain ammonite fossils (P(A|B) = 0.2). Then there is a 14% (= 0.7 × 0.2)

chance that the field area contains Bajocian ammonites.

The law of total probability states that, given n mutually exclusive scenarios Bi (for 1 ≤ i ≤ n):

| (4.10) |

Consider a river whose catchment contains 70% Bajocian deposits (P(B1) = 0.7) and 30% Bathonian2 deposits (P(B2) = 0.3). Recall that the Bajocian is 20% likely to contain ammonite fossils (P(A|B1) = 0.2), and suppose that the Bathonian is 50% likely to contain such fossils (P(A|B2) = 0.5). How likely is it that the river catchment contains ammonites? Using Equation 4.10:

Equation 4.9 can be rearranged to form Bayes’ Rule:

| (4.11) |

or, combining Equation 4.11 with Equation 4.10:

| (4.12) |

Suppose that we have found an ammonite fossil in the river bed. What is its likely age? Using Equation 4.12, the probability that the unknown fossil is Bajocian (B1) is given by:

Thus there is a 48% chance that the fossil is Bajocian, and a 52% chance that it is Bathonian.

A Bernoulli variable takes on only two values: 0 or 1. For example:

Consider five gold diggers during the 1849 California gold rush, who have each purchased a claim in the Sierra Nevada foothills. Geological evidence suggests that, on average, two thirds of the claims in the area contain gold (1), and the remaining third do not (0). The probability that none of the five prospectors find gold is

The chance that exactly one of the prospectors strikes gold is

where

| P(10000) = | (2∕3)(1∕3)4 = 0.0082 | ||

| P(01000) = | (1∕3)(2∕3)(1∕3)3 = 0.0082 | ||

| P(00100) = | (1∕3)2(2∕3)(1∕3)2 = 0.0082 | ||

| P(00010) = | (1∕3)3(2∕3)(1∕3) = 0.0082 | ||

| P(00001) = | (1∕3)4(2∕3) = 0.0082 |

so that

in which we recognise the binomial coefficient (Equation 4.7). Similarly:

| P(2 × gold) | = | ||

| P(3 × gold) | = | ||

| P(4 × gold) | = | ||

| P(5 × gold) | = |

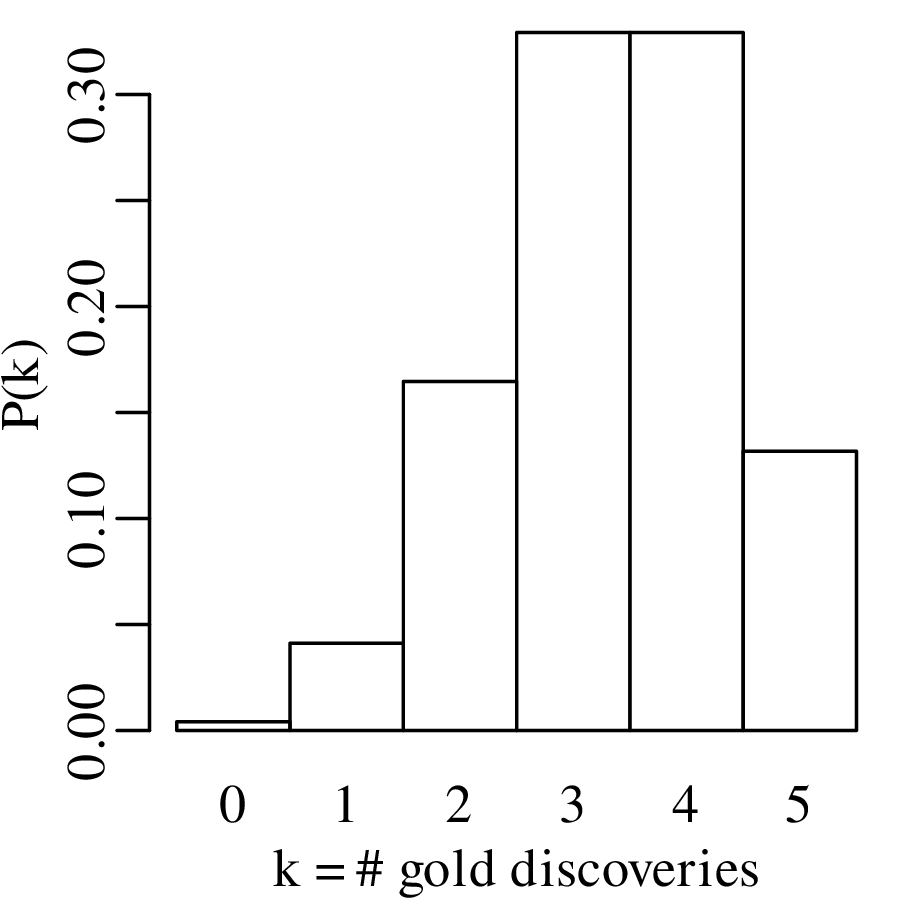

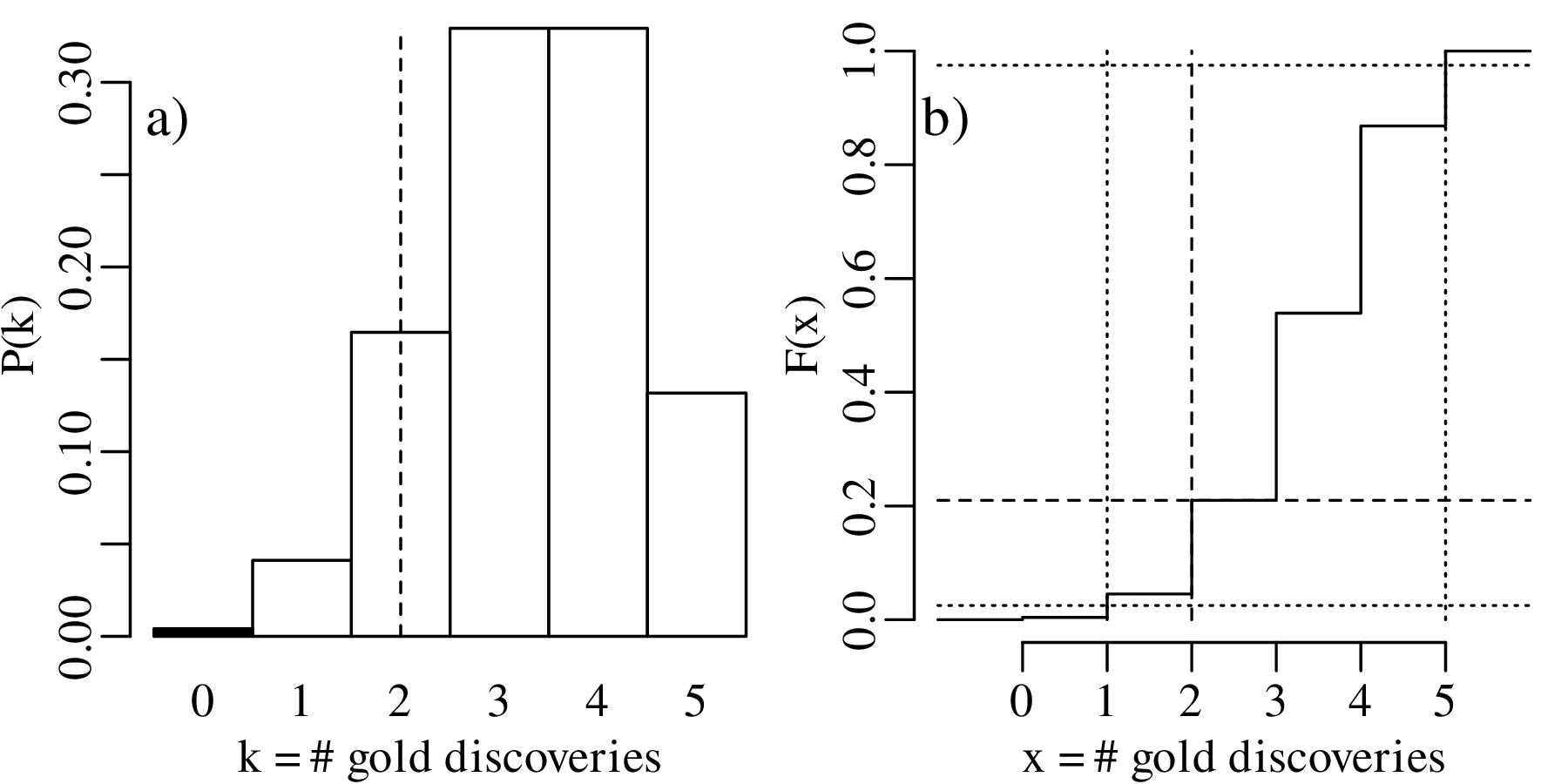

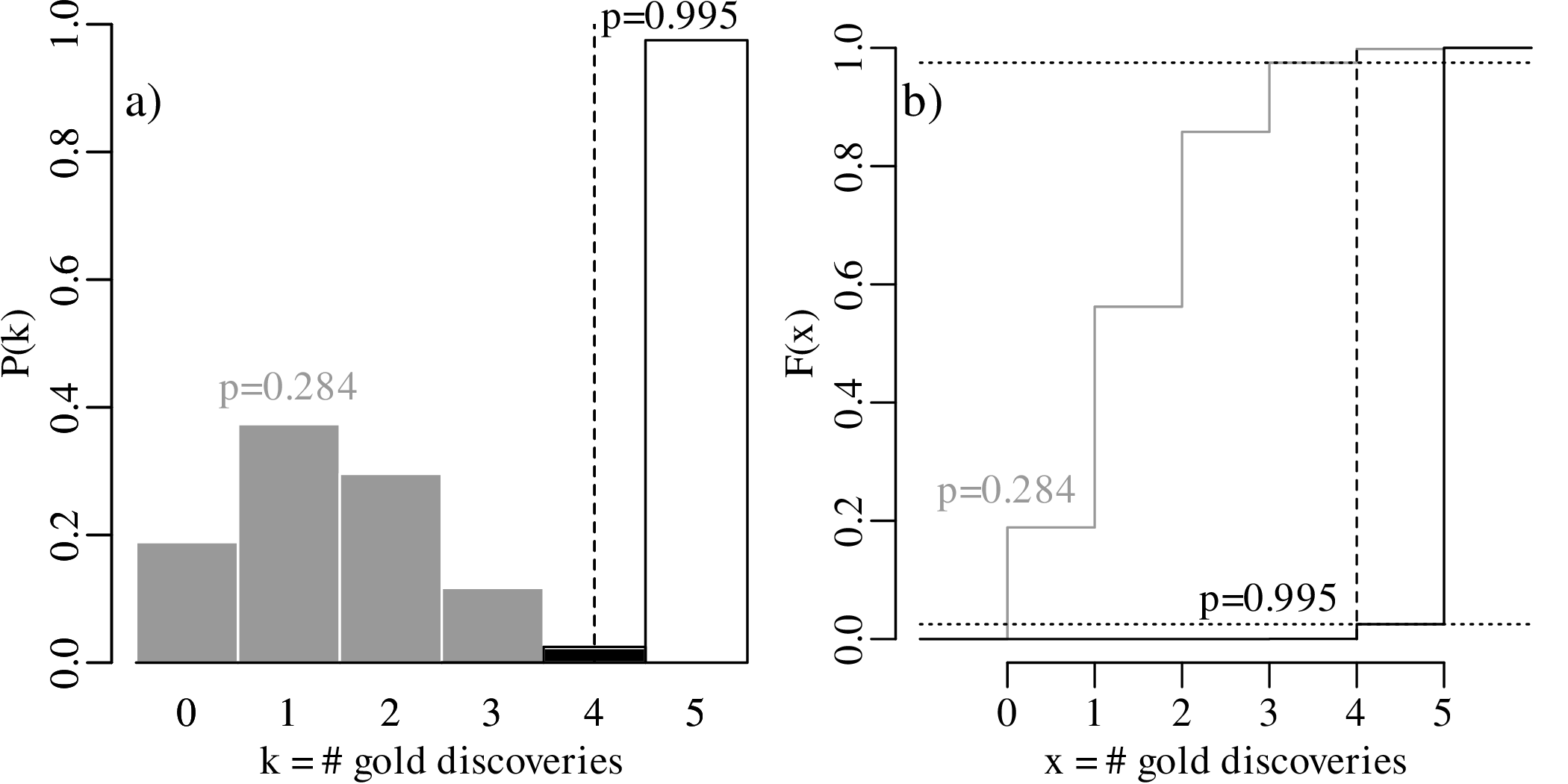

These probabilities form a probability mass function (PMF) and can be visualised as a bar chart:

The generic equation for the PMF of the binomial distribution is

| (5.1) |

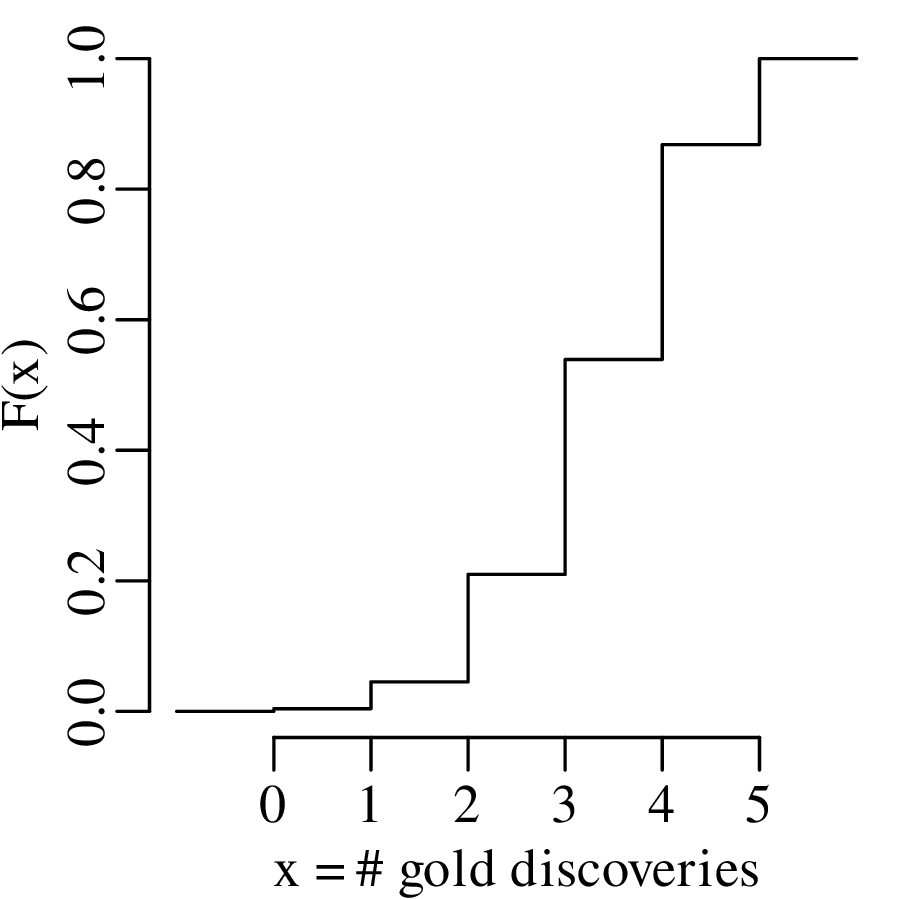

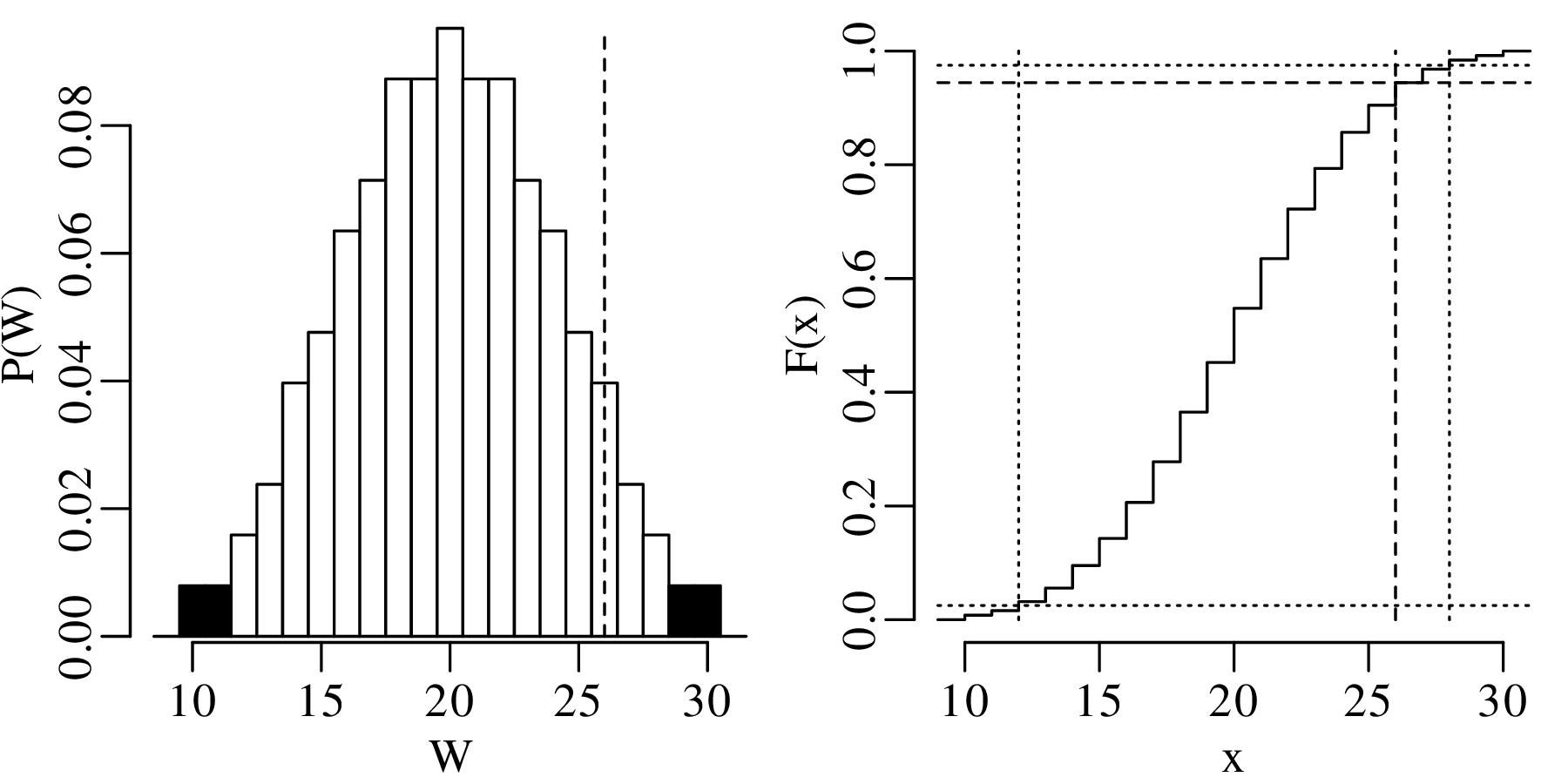

where p is the probability of success and k is the number of successes out of n trials. Equivalently, the results can also be shown as a cumulative distribution function (CDF):

| (5.2) |

The previous section assumed that the probability of success (p in Equation 5.1) is known. In the real world, this is rarely the case. In fact, p is usually the parameter whose value we want to determine based on some data. Consider the general case of k successes among n trials. Then we can estimate p by reformulating Equation 5.1 in terms of p instead of k:

| (5.3) |

This is called the likelihood function. The only difference between the probability mass function (Equation 5.1) and the likelihood function (Equation 5.3) is that former calculates the probability of an outcome k given the parameters n and p, whereas the latter is used to estimate the parameter p given the data n and k. The most likely value of p given n and k can be found by maximising ℒ(p|n,k). This can be achieved by taking the derivative of Equation 5.3 with respect to p and setting it to zero:

which gives

The symbol indicates that

is an estimate for the true parameter value p, which is unknown.

Dividing by

and rearranging:

Dividing both sides by k(1 −

)n−k:

which can be solved for :

| (5.4) |

Let us apply Equation 5.4 to our gold prospecting example. Suppose that only two of the five claims produce gold. Then our best estimate for p given this result is

So based on this very small dataset, our best estimate for the abundance of gold in the Sierra Nevada foothills is 40%. This may be a trivial result, but it is nevertheless a useful one. The derivation of Equation 5.4 from Equation 5.3 follows a recipe that underpins much of mathematical statistics. It is called the method of maximum likelihood. Of course, the derivation of parameter estimates is not always as easy as it is for the binomial case.

Let us continue with our gold prospecting example. Given that only two of the five prospectors found

gold, our best estimate for the abundance of gold-bearing claims in the prospecting area is = 2∕5

(40%). However the introductory paragraph to this chapter mentioned that geological evidence

suggests that 2/3 (67%) of the claims should contain gold. Can the discrepancy between the

predicted and the observed number of successes by attributed to bad luck, or does it mean that

the geological estimates were wrong? To answer this question, we follow a sequence of six

steps:

HA (alternative hypothesis):

p < 2∕3

| k | 0 | 1 | 2 | 3 | 4 | 5 |

| P(T = k) | 0.0041 | 0.0411 | 0.1646 | 0.3292 | 0.3292 | 0.1317 |

| P(T ≤ k) | 0.0041 | 0.0453 | 0.2099 | 0.5391 | 0.8683 | 1.0000 |

The p-value1 is the probability of obtaining test results at least as extreme as the result actually observed, under the assumption that the null hypothesis is correct. For our one-sided test, the p-value is 0.2099, which corresponds to the probability of observing k ≤ 2 successful claims if p = 2∕3.

| k | 0 | 1 | 2 | 3 | 4 | 5 |

| P(T = k) | 0.0041 | 0.0411 | 0.1646 | 0.3292 | 0.3292 | 0.1317 |

| P(T ≤ k) | 0.0041 | 0.0453 | 0.2099 | 0.5391 | 0.8683 | 1.0000 |

k = 0 and k = 1 are incompatible with H0 because the probability of finding gold in k ≤ 1 claims is only 0.0453, which is less than α. Therefore our rejection region contains two values:

which means that we cannot reject H0. Alternatively, and equivalently, we can reach the same conclusion by observing that the p-value is greater than α (i.e., 0.2099 > 0.05). Note that failure to reject the null hypothesis does not mean that said hypothesis has been accepted!

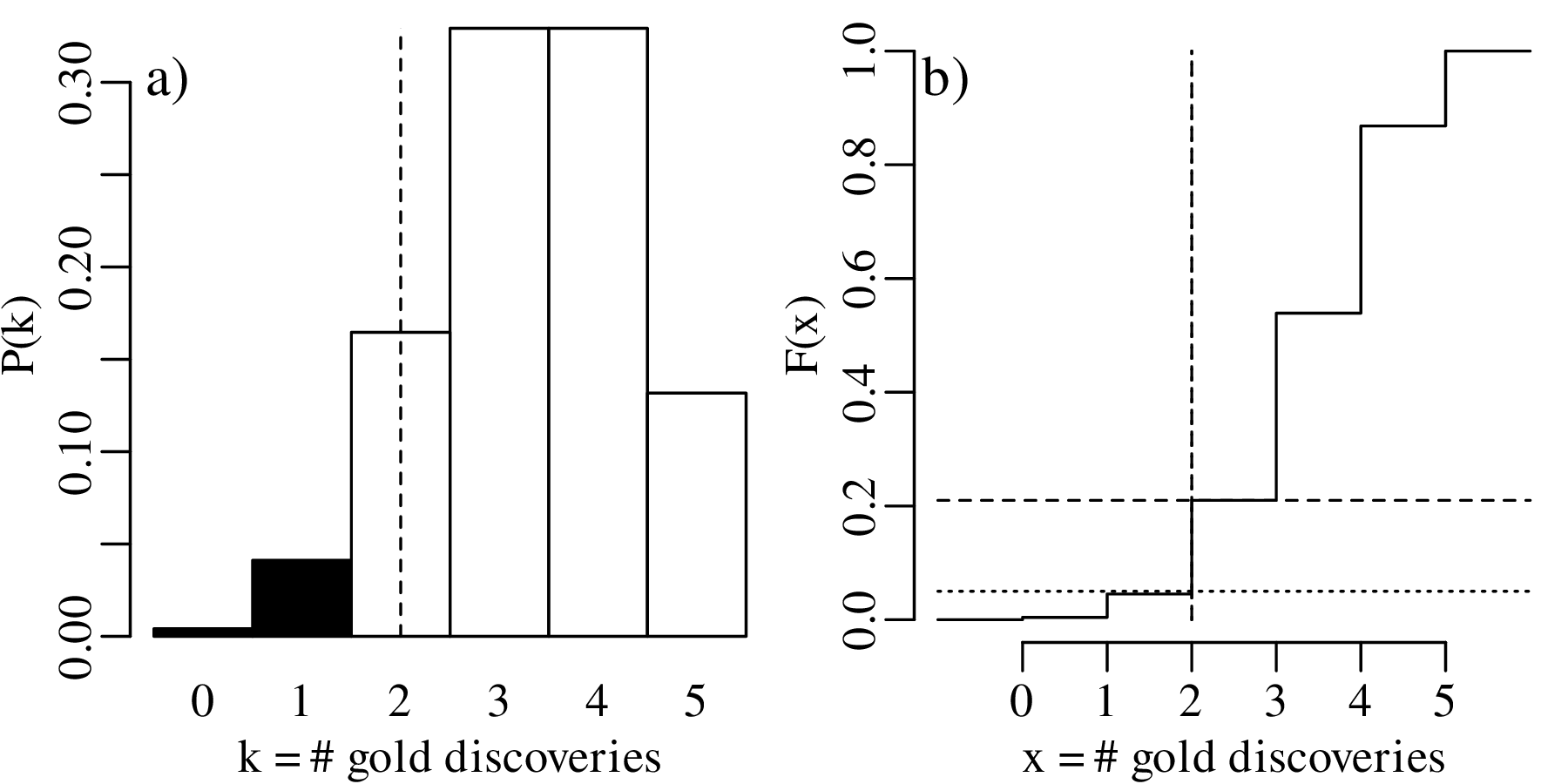

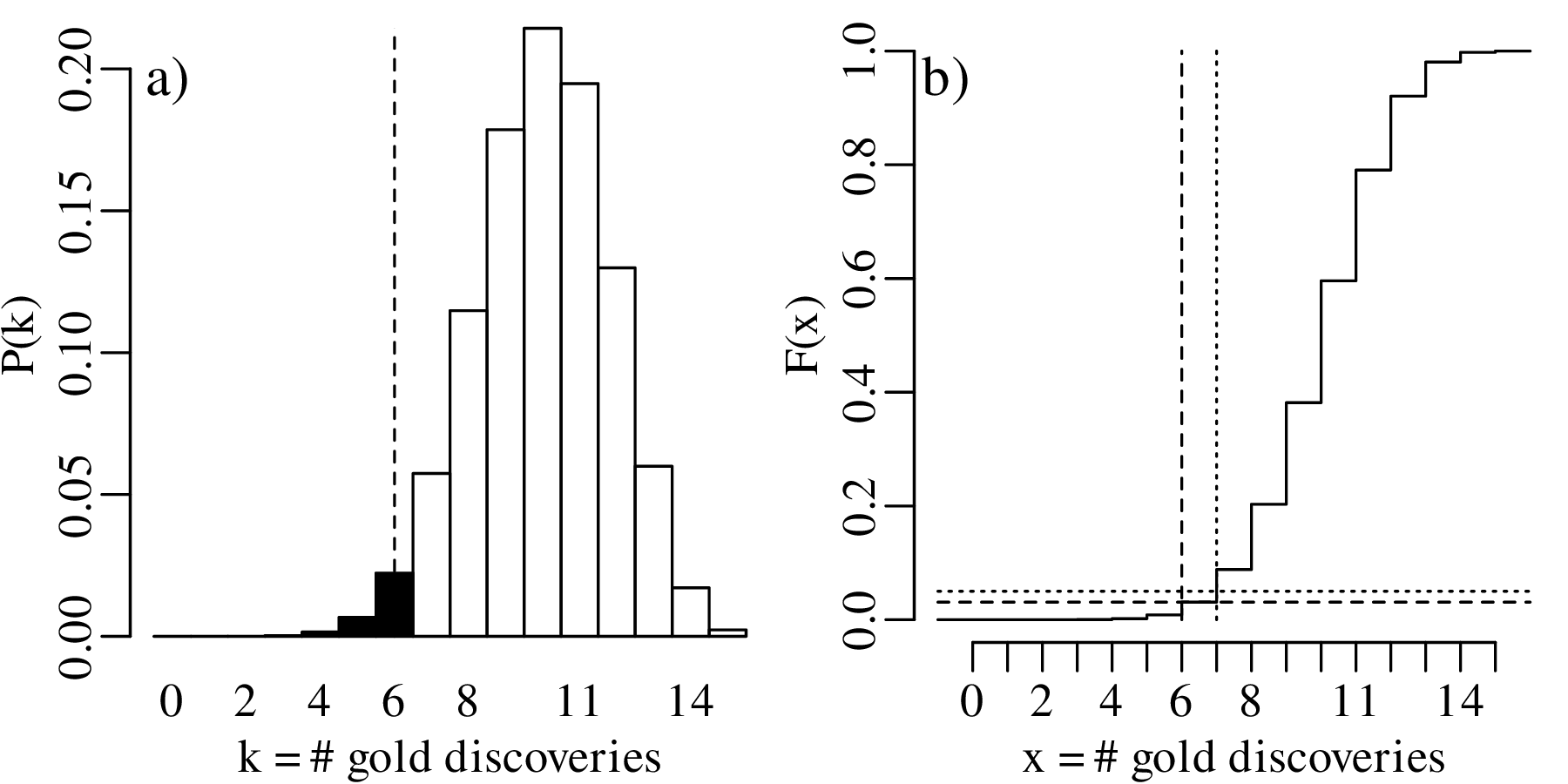

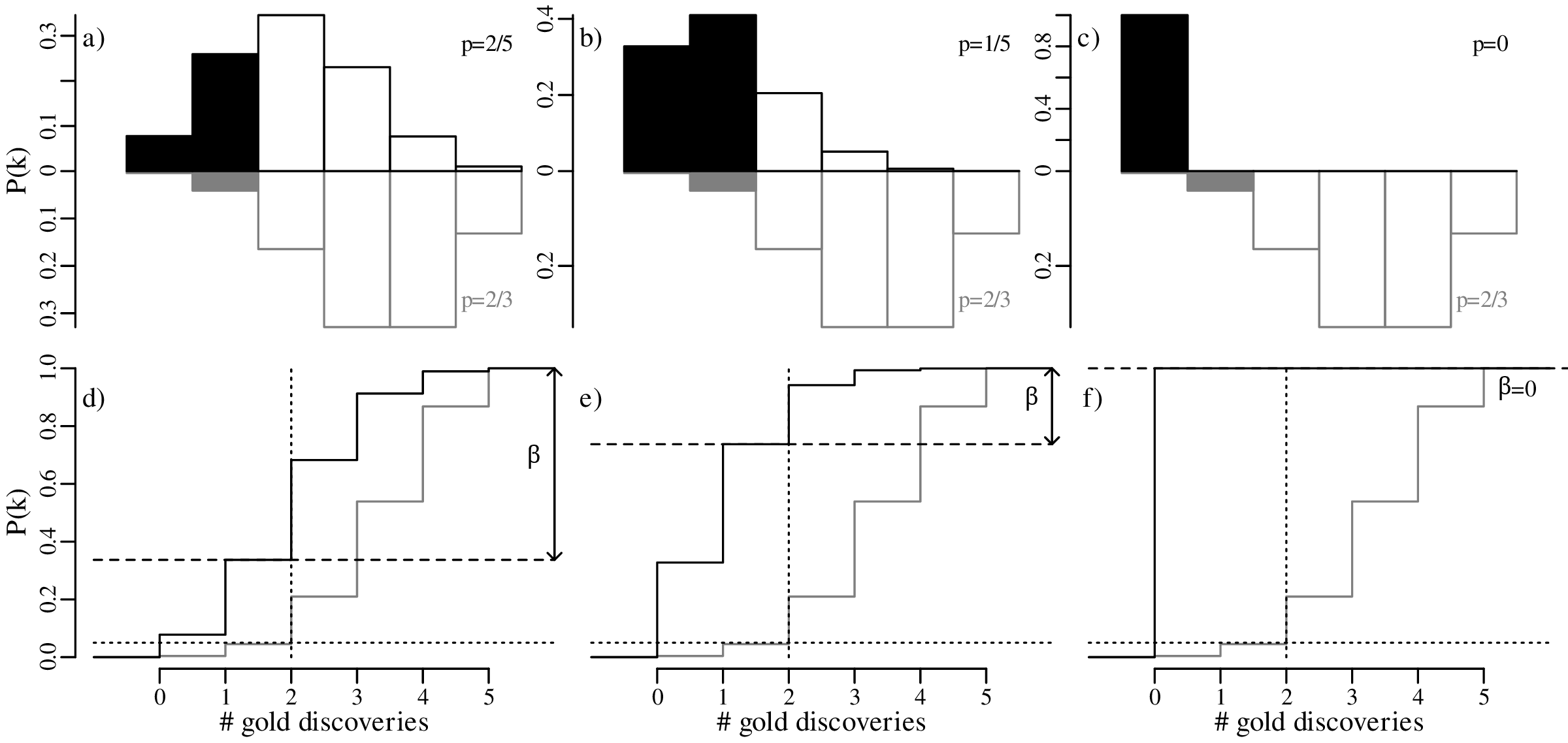

Displaying the rejection region graphically:

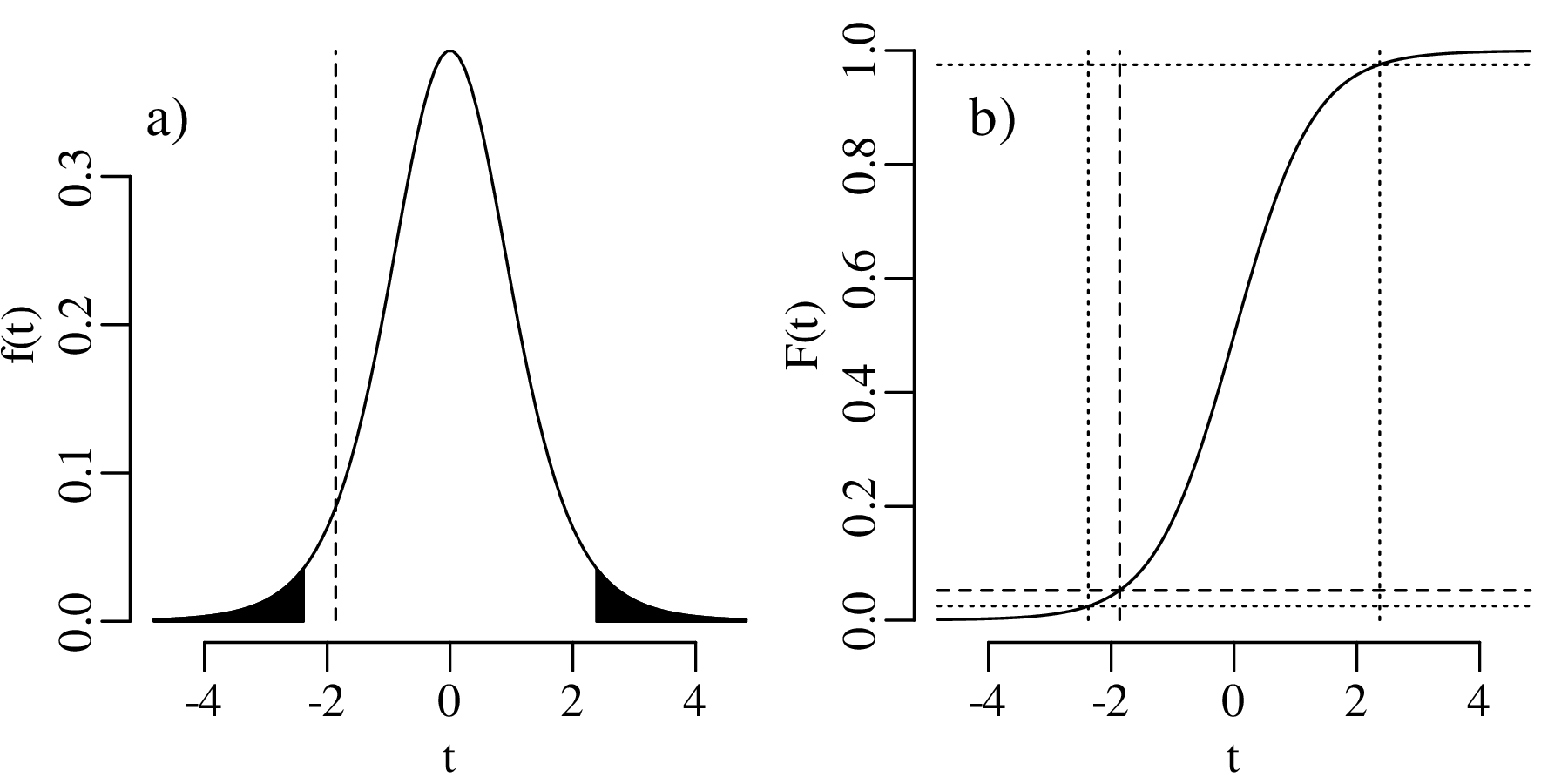

The above hypothesis test is called a one-sided hypothesis test, which refers to the fact that HA specifies a direction (‘<’ or ‘>’). Alternatively, we can also formulate a two-sided hypothesis test:

HA (alternative hypothesis):

p≠2∕3

| k | 0 | 1 | 2 | 3 | 4 | 5 |

| P(T = k) | 0.0041 | 0.0411 | 0.1646 | 0.3292 | 0.3292 | 0.1317 |

| P(T ≤ k) | 0.0041 | 0.0453 | 0.2099 | 0.5391 | 0.8683 | 1.0000 |

| P(T ≥ k) | 1.000 | 0.9959 | 0.9547 | 0.7901 | 0.4609 | 0.1317 |

| k | 0 | 1 | 2 | 3 | 4 | 5 |

| P(T = k) | 0.0041 | 0.0411 | 0.1646 | 0.3292 | 0.3292 | 0.1317 |

| P(T ≤ k) | 0.0041 | 0.0453 | 0.2099 | 0.5391 | 0.8683 | 1.0000 |

| P(T ≥ k) | 1.000 | 0.9959 | 0.9547 | 0.7901 | 0.4609 | 0.1317 |

which yields a smaller rejection region than before, because P(T ≤ 1) = 0.0453, which is greater than α∕2 = 0.025. The same is true for P(T ≥ k) for any k. Therefore:

Displaying the two-sided hypothesis test graphically:

Again, H0 cannot be rejected at an α = 0.05 significance level. So in this case the one-sided and two-sided hypothesis tests produce exactly the same result, as the observed value k = 2 is not in the rejection region for either test. However this is not always the case, as will be shown next.

Suppose that not five but fifteen gold prospectors had purchased a claim in the same area as before. Further suppose that six of these prospectors had struck gold. Then the maximum likelihood estimate for p is:

which is the same as before. The one-sided hypothesis test (H0 : p = 2∕3 vs. HA : p < 2∕3) proceeds as before, but leads to a different table of probabilities:

| k | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |

| P(T = k) | 7.0×10−8 | 2.1×10−6 | 2.9×10−5 | 2.5×10−4 | 0.0015 | 0.0067 | 0.0223 | 0.0574 |

| P(T ≤ k) | 7.0×10−8 | 2.2×10−6 | 3.1×10−5 | 2.8×10−4 | 0.0018 | 0.0085 | 0.0308 | 0.0882 |

| k | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 |

| P(T = k) | 0.1148 | 0.1786 | 0.2143 | 0.1948 | 0.1299 | 0.0599 | 0.0171 | 0.0023 |

| P(T ≤ k) | 0.2030 | 0.3816 | 0.5959 | 0.7908 | 0.9206 | 0.9806 | 0.9977 | 1.0000 |

The rejection region is shown in bold and consists of

| (5.5) |

which includes k = 6. Therefore H0 is rejected.

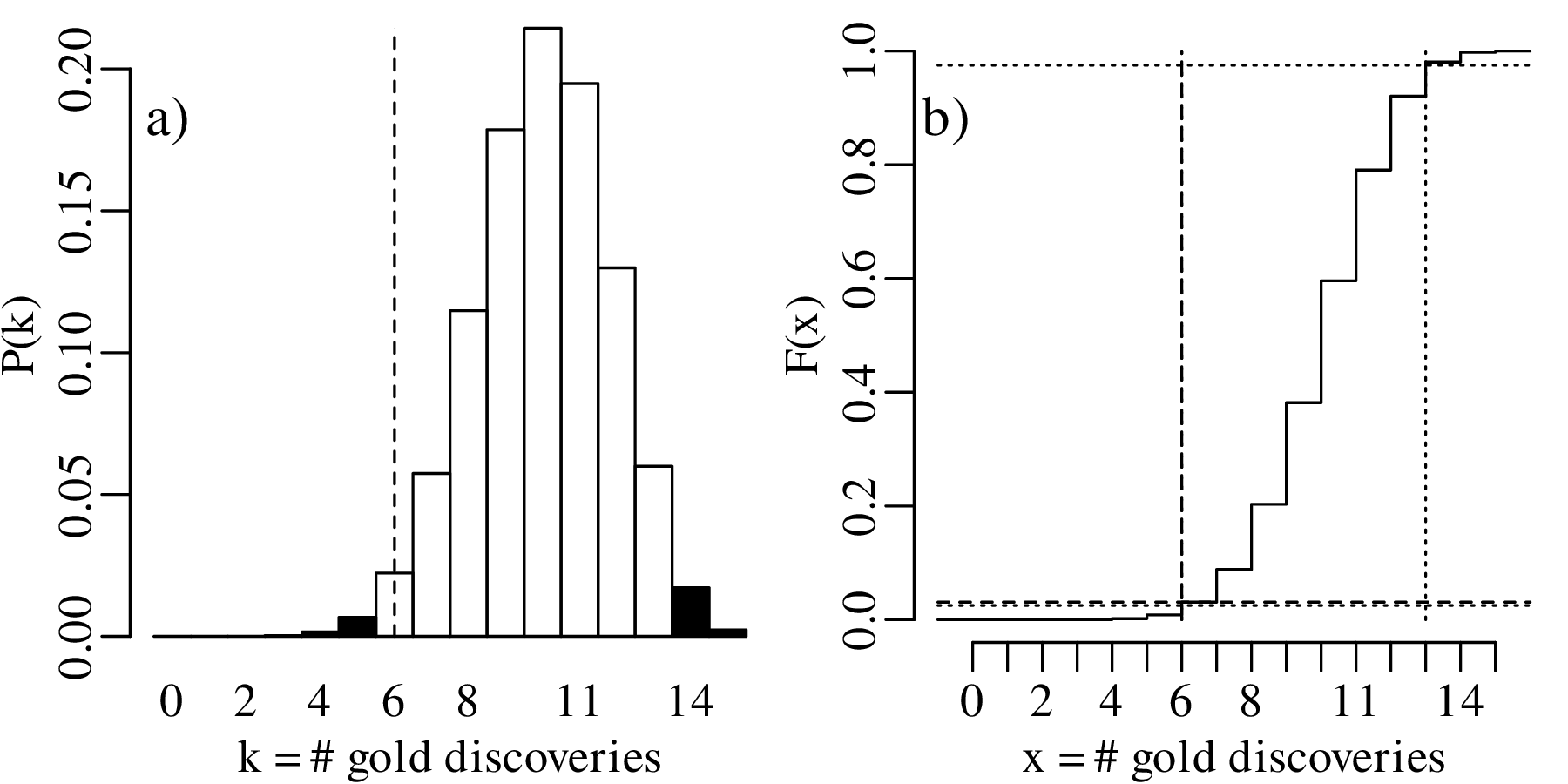

For the two-sided hypothesis test (H0 : p = 2∕3 vs. HA : p≠2∕3):

| k | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |

| P(T = k) | 7.0×10−8 | 2.1×10−6 | 2.9×10−5 | 2.5×10−4 | 0.0015 | 0.0067 | 0.0223 | 0.0574 |

| P(T ≤ k) | 7.0×10−8 | 2.2×10−6 | 3.1×10−5 | 2.8×10−4 | 0.0018 | 0.0085 | 0.0308 | 0.0882 |

| P(T ≥ k) | 1.0000 | 1-7.0×10−8 | 1-2.2×10−6 | 1-3.1×10−5 | 1-2.8×10−4 | 0.9982 | 0.9915 | 0.9692 |

| k | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 |

| P(T = k) | 0.1148 | 0.1786 | 0.2143 | 0.1948 | 0.1299 | 0.0599 | 0.0171 | 0.0023 |

| P(T ≤ k) | 0.2030 | 0.3816 | 0.5959 | 0.7908 | 0.9206 | 0.9806 | 0.9977 | 1.0000 |

| P(T ≥ k) | 0.9118 | 0.7970 | 0.6184 | 0.4041 | 0.2092 | 0.0794 | 0.0194 | 0.0023 |

The rejection region (which includes both tails of the distribution) is:

| (5.6) |

This region does not include k = 6. Therefore we cannot reject the two-sided null hypothesis that p = 2∕3 at α = 0.05.

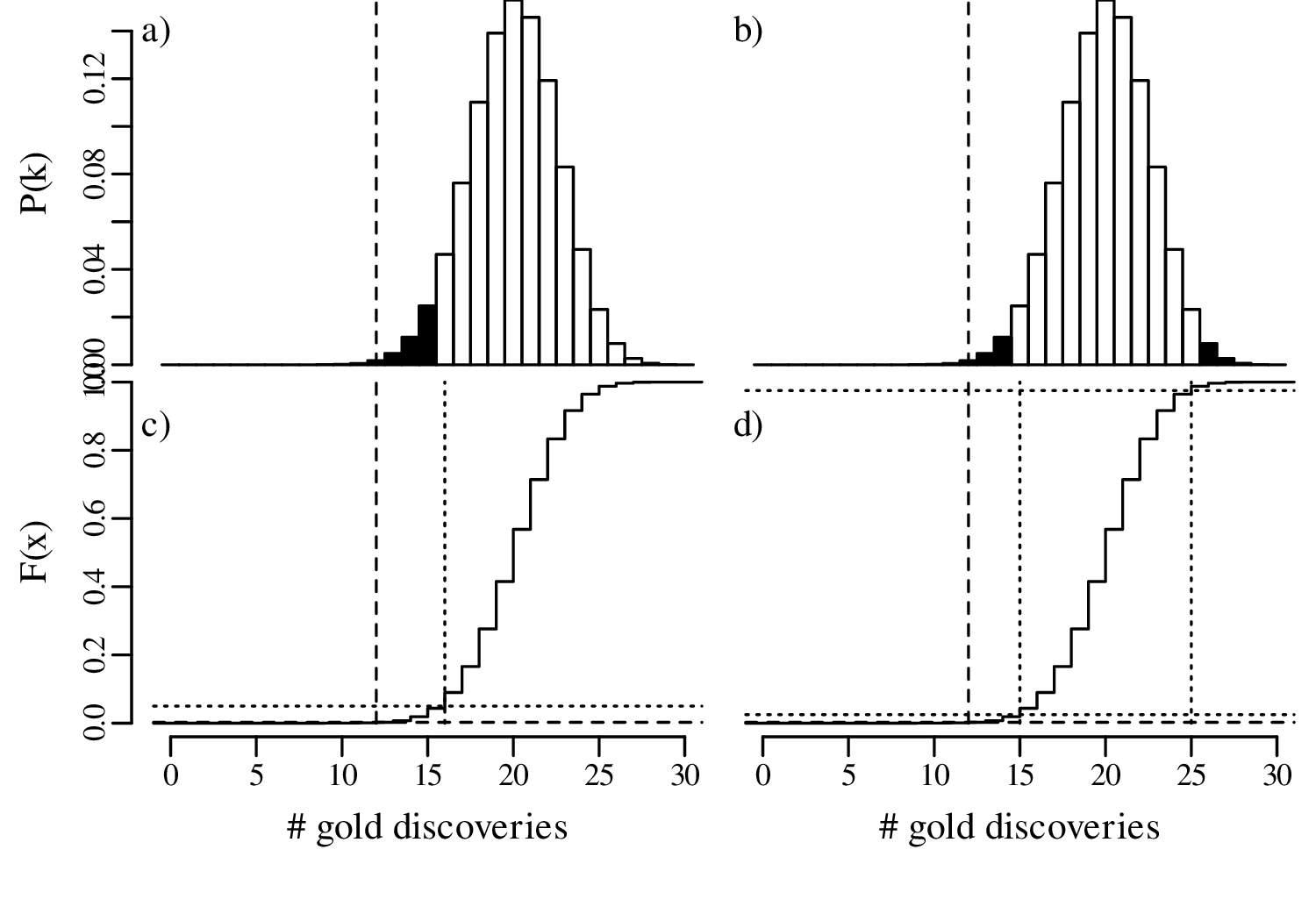

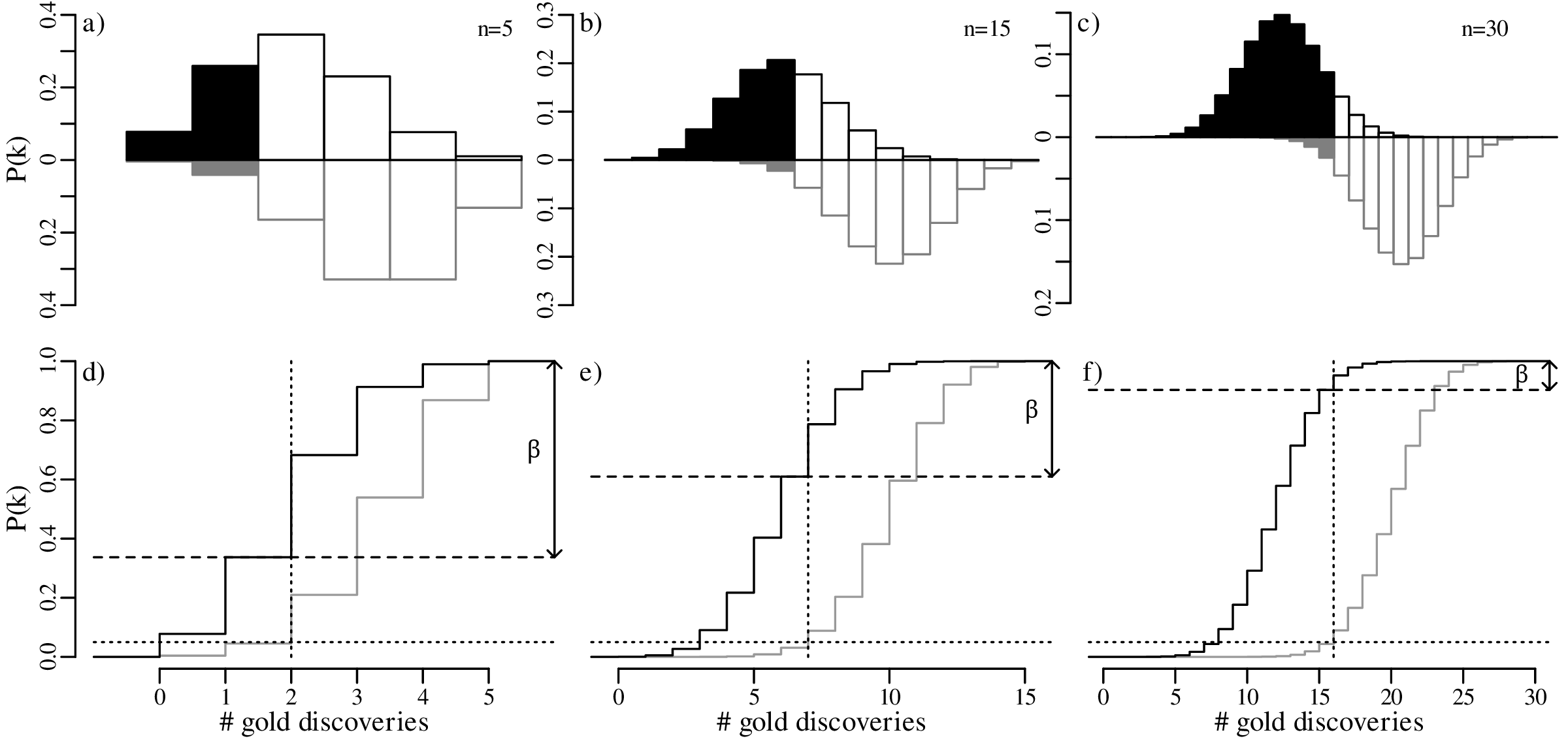

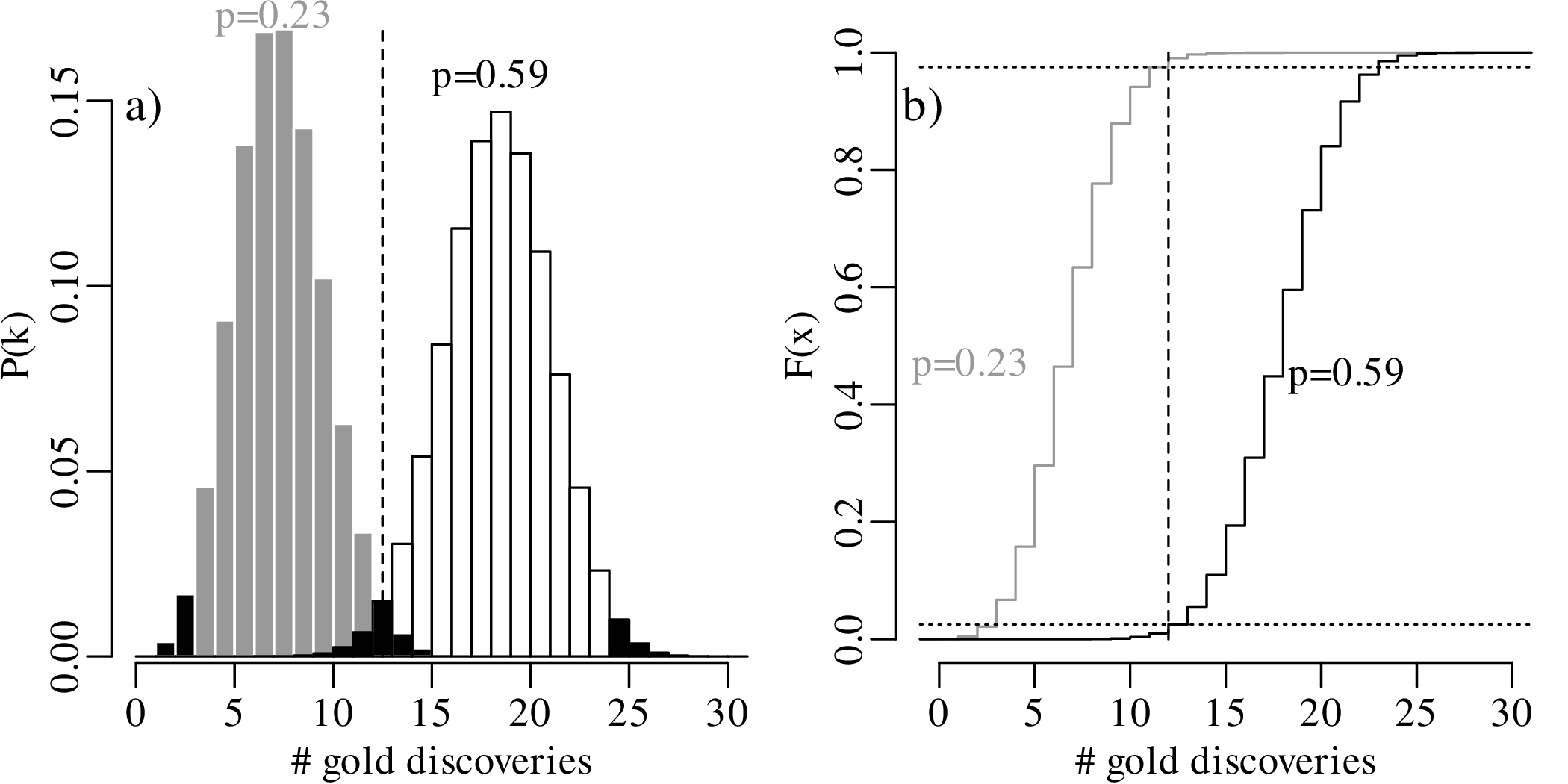

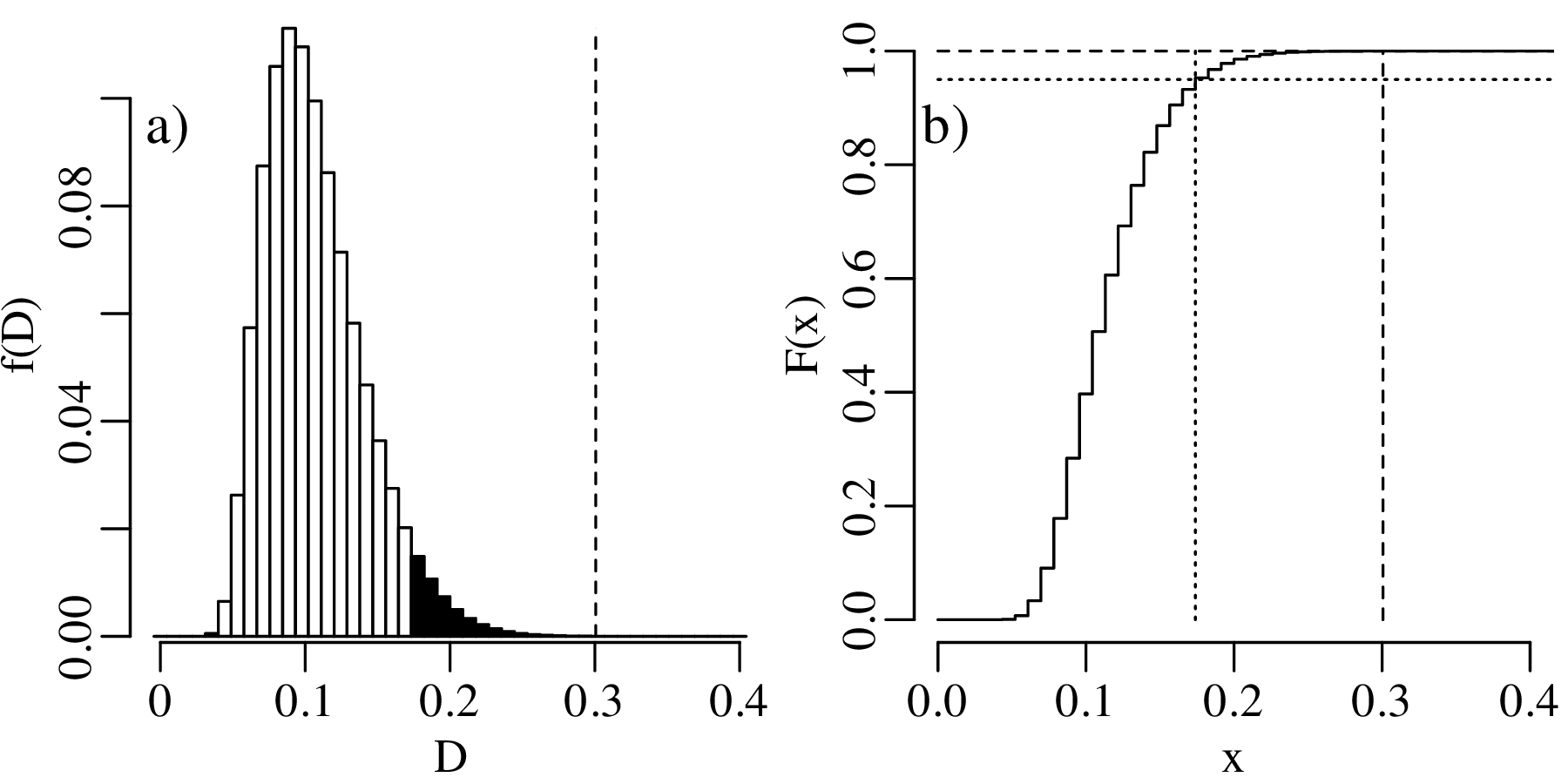

Let us increase our ‘sample size’ (number of prospectors) even more, from 15 to 30, and suppose once again that only 40% of these found gold even though the geological evidence suggested that this should be 67%. The lookup table of probabilities would be quite large, so we will just show the distributions graphically:

In summary, we have compared the same outcome of 40% successes with the null hypothesis p = 2∕3, using three different sample sizes (n):

In statistical terms, the increase in sample size has increased the ‘power’ of the test to reject the hypothesis test. A formal mathemathical definition of this concept will be given in Section 5.4.

There are four possible outcomes for a hypothesis test, which can be organised in a 2 × 2 table:

| H0 is ... | false | true |

| rejected | correct decision | Type-I error |

| not rejected | Type-II error | correct decision |

To appreciate the difference between the two types of errors in this table, it may be useful to compare statistical hypothesis testing with a legal analogue. The jury in a court of justice are in a situation that is similar to that of a statistical hypothesis test. They are faced with a suspect who has either committed a crime or not, and they must decide whether to sentence this person or acquit them. In this case our ‘null hypothesis’ is that the accused is innocent. The jury then needs decide whether there is enough evidence to reject this hypothesis in favour of the alternative hypothesis, which is that the accused is guilty. Casting this process in a second 2 × 2 table:

| the accused is ... | guilty | innocent |

| convicted | correct decision | Type-I error |

| acquitted | Type-II error | correct decision |

A type-I error is committed when a true null hypothesis test is erroneously rejected. This is akin to putting an innocent person in prison. For our gold prospecting example, this means that we reject the expert opinion of the geologist (whose assessment indicated a 2/3 chance of finding gold) when this geologist is in fact correct.

A type-II error is committed when we fail to reject a false null hypothesis. This is akin to letting a guilty person get away with a crime for lack of evidence. In the geological example, this means that we conclude that the geological assessment is right despite it being wrong.

The probability of committing a type-I error is controlled by one parameter:

Using the customary value of α = 0.05, there is a 5% chance of committing a type-I error. So even if the null hypothesis is correct, then we would still expect to reject it once every 20 times. This may be acceptable in geological studies, but probably not in the legal system! The principle that guilt must be proven “beyond any reasonable doubt” is akin to choosing a very small significance level (α ≪ 0.05). However it is never possible to enforce α = 0 unless all suspects are aquitted, so it is inevitable that some innocent people are convicted.

The probability of committing a type-II error (β) depends on two things:

In our geological example, 40% of the prospectors found gold in their claim, so there clearly was some gold present in the area. Suppose that the actual abundance of gold in the prospecting area was indeed 40% (p = 2∕5) instead of p = 2∕3. Then the expected distribution of outcomes would follow a binomial distribution with p = 2∕5. As shown in Section 5.2, the rejection region for the one-sided hypothesis test of H0 : p = 2∕3 vs. HA : p < 2∕3 is R = {0,1} for n = 5. If the actual value for p is 2/5, then the probability of observing a value for k that falls in this rejection region is P(k < 2|n = 5,p = 2∕5) = 0.34. This is known as the power of the statistical test. The probability of committing a type-II error is given by:

| (5.7) |

Next, suppose that the true probability of finding gold is even lower, at p = 1∕5. Under this alternative distribution, the probability of finding gold in k ≤ 1 out of n = 5 claims (i.e., the power) increases to 74%. Therefore, the probability of committing a type-II error has dropped to only 26%.

Finally, consider an end member situation in which the prospecting area does not contain any gold at all (p = 0). Then the probability of finding gold is obviously zero, because F(x = 2) = 0. Under this trivial scenario, the power of the test is 100%, and the probability of committing a type-II error is zero.

Plotting these results graphically:

The effect of sample size was already discussed in Section 5.3. Comparing the predicted outcomes for the null hypothesis H0 : p = 2∕3 to those of the alternative hypothesis HA : p = 2∕5 for sample sizes of n = 5, 15 and 30:

All hypotheses are wrong ... in some decimal place – John Tukey (paraphrased)

All models are wrong, but some are useful – George Box

Statistical tests provide a rigorous mathematical framework to assess the validity of a hypothesis. It is not difficult to see the appeal of this approach to scientists, including geologists. The scientific method is based on three simple steps:

It is rarely possible to prove scientific hypotheses. We can only disprove them. New knowledge is gained when the results of an experiment do not match the expectations. For example:

From this experiment we still don’t know what the lower mantle is made of. But at least we know that it is not olivine. Let us contrast this outcome with a second type of hypothesis:

What have we learned from this experiment? Not much. We certainly did not prove that Earth’s lower mantle consists of perovskite. There are lots of other minerals that are stable at lower mantle pressures. The only thing that we can say is that the null hypothesis has survived to live another day. The scientific method is strikingly similar to the way in which a statistical hypothesis test is carried out. A null hypothesis, like a scientific hypothesis, cannot be proven. It can only be disproved. Rejection of a null hypothesis is the best outcome, because it is the only outcome that teaches us something new. Our understanding of the natural world continues to improve by falsifying current hypotheses using scientific experiments, which leads to revised hypotheses that are closer to the truth.

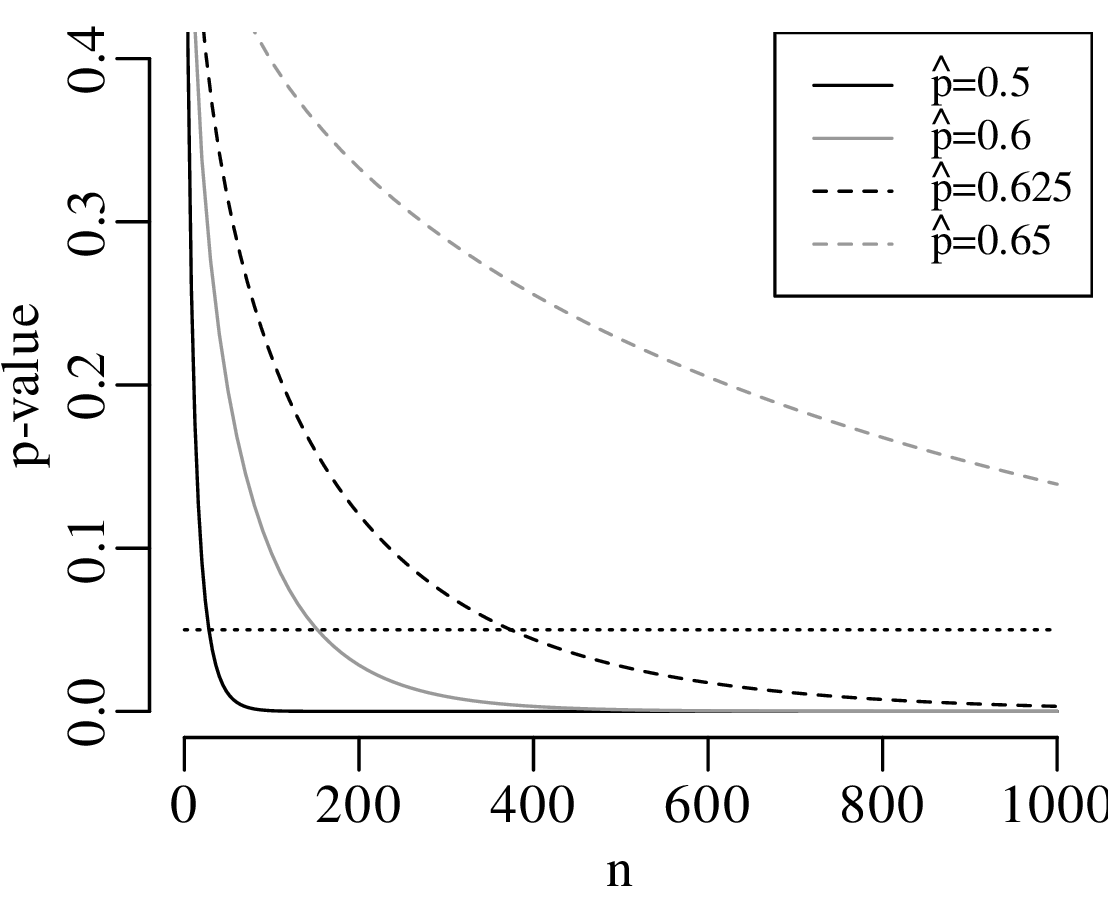

It may seem natural to use the statistical approach to test scientific hypotheses. However doing so is not without dangers. To explain these dangers, let us go back to the power analysis of Section 5.3. The power of our hypothesis test to reject H0 : p = 2∕3 increases with sample size. A small sample may be sufficient to detect large deviations from the null hypothesis. Smaller deviations require larger sample sizes. But no matter how small the violation of the null hypothesis is, there always exists a sample size that is large enough to detect it.

Statistical tests are an effective way to evaluate mathematical hypotheses. They are less useful for scientific hypotheses. There is a profound difference between mathematical and scientific hypotheses. Whereas a mathematical hypothesis is either ‘right’ or ‘wrong’, scientific hypotheses are always ‘somewhat wrong’. Considering our gold prospecting example, it would be unreasonable to expect that p is exactly equal to 2/3, down to the 100th significant digit. Estimating the proportion of gold in the area to be within 10% of the truth would already be a remarkable achievement. Yet given enough data, there will always come a point where the geological prediction is disproved. Given a large enough dataset, even a 1% deviation from the predicted value would yield an unacceptably small p-value:

As another example, suppose that we have analysed the mineralogical composition of two samples of sand that were collected 10 cm apart on the same beach. Our null hypothesis is that the composition of the two samples is the same. Plausible though this hypothesis may seem, it will always be possible to reject it, provided that enough grains are analysed. We may need to classify a hundred, a thousand or even a million grains from each sample, but at some point a ‘statistically significant’ difference will be found. Given a large enough sample, even the tiniest hydraulic sorting effect becomes detectable.

In conclusion, formalised hypothesis tests are of limited use in science. There are just two exceptions in which they do serve a useful purpose:

The previous section showed that simple binary hypothesis tests are of limited use in geology. The question that is relevant to scientists is not so much whether a hypothesis is wrong, but rather how wrong it is. In the context of our gold prospecting example, there is little use in testing whether p is exactly equal to 2∕3. It is far more useful to actually estimate p and to quantify the statistical uncertainty associated with it.

Equation 5.4 showed that, given k successful claims among n total claims, the most likely estimate

for p is k∕n. For example, if we observe k = 2 successful claims among n = 5 trials, then our best

estimate for the abundance of gold is = 2∕5. However this does not rule out other values. Let us now

explore all possible values for p that are compatible with the observed k = 2 successful

claims:

which is greater than α∕2. Consequently, the observation k = 2 falls outside the rejection region of the two-sided hypothesis test, and the proposed parameter value p = 0.1 is deemed to be compatible with the observation.

which is less than the α∕2 cutoff, and so p = 0.9 is not compatible with the observation.

The set of all values of p that are compatible with the observed outcome k = 2 forms a confidence interval. Using an iterative process, it can be shown that the lower and upper limits of this interval are given by:

Repeating this procedure for a different result, for example k = 4, yields a different confidence interval, namely:

What happens if we increase the sample size from n = 5 to n = 30, and the number of successful

claims from k = 2 to k = 12? Then the maximum likelihood estimate remains = 2∕5 as in our first

example, but the 95% confidence interval narrows down to

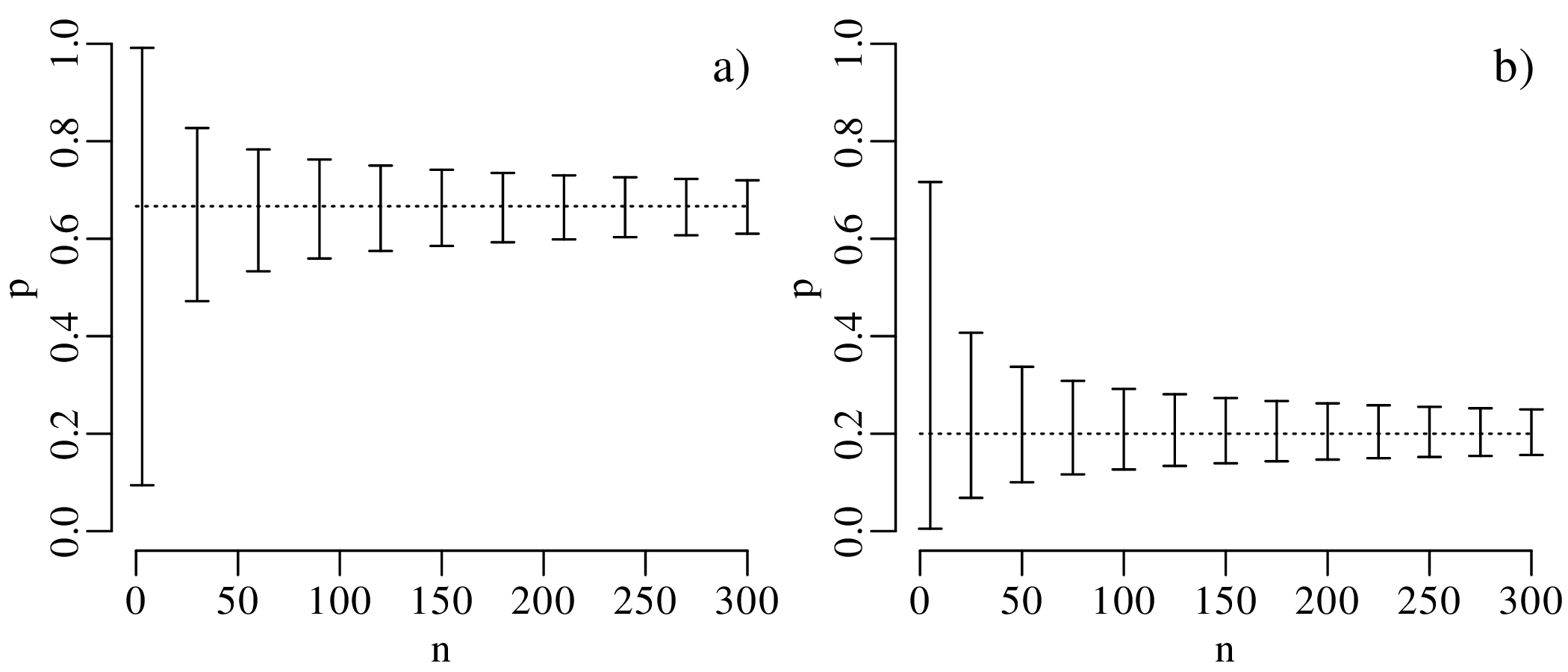

To further explore the trend of decreasing confidence interval width with increasing sample size, let

us evaluate the 95% confidence intervals for = k∕n estimates of 2/3 and 1/5, respectively, over a

range of sample sizes between n = 3 and n = 300:

The confidence intervals become progressively narrower with increasing sample size. This reflects a steady improvement of the precision of our estimate for p with increasing sample size. In other words, large datasets are ‘rewarded’ with better precision.

The confidence intervals of Figure 5.14 are asymmetric but become more symmetric around the estimate with increasing sample size. In fact, for large n (> 20, say) and p not near to 0 or 1, the 95% confidence interval can be approximated as

| (5.8) |

where

| (5.9) |

A derivation of and justification for this approximation will be provided in Section 8.4.

Example 1

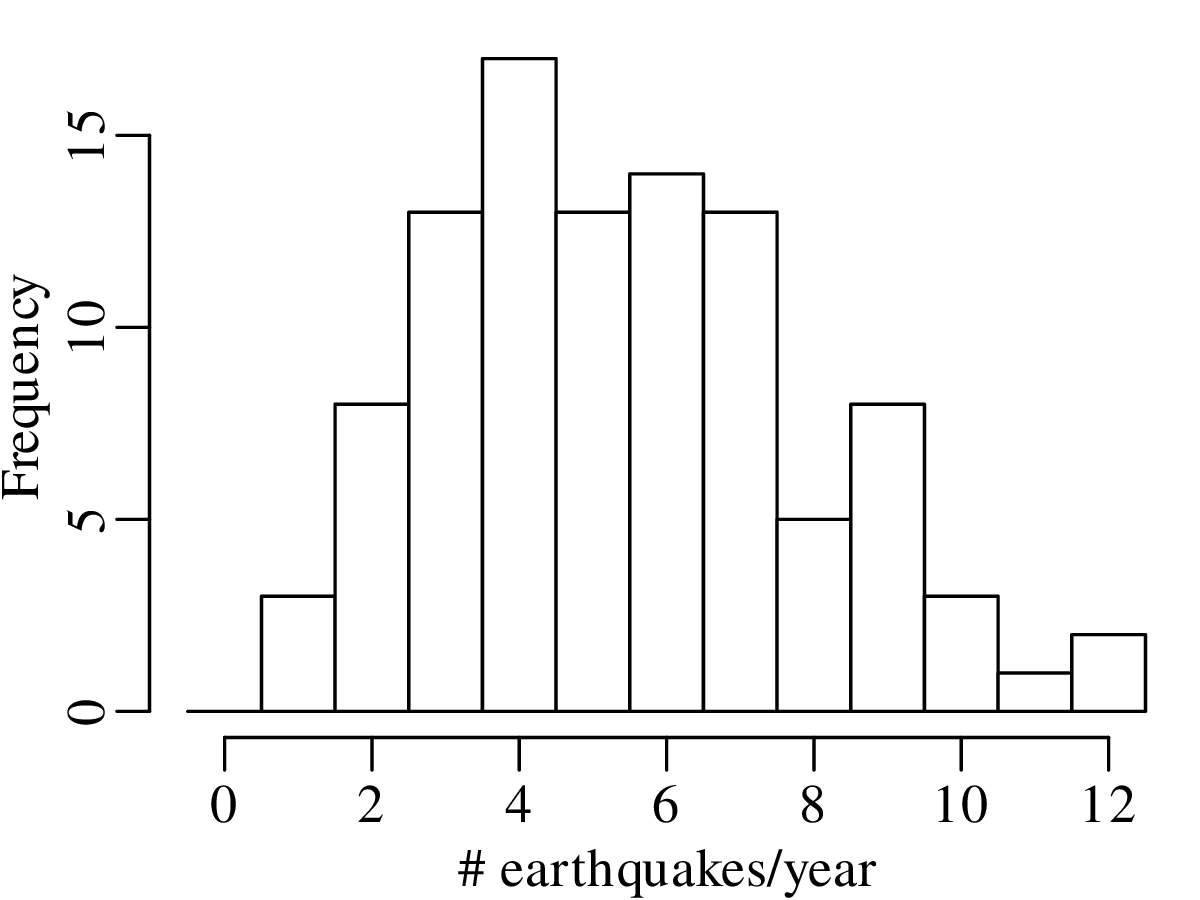

A declustered earthquake catalog1 of the western United States contains 543 events of magnitude 5.0 and greater that occurred between 1917 and 2016:

Note how the mean and the variance of this dataset are similar.

Example 2

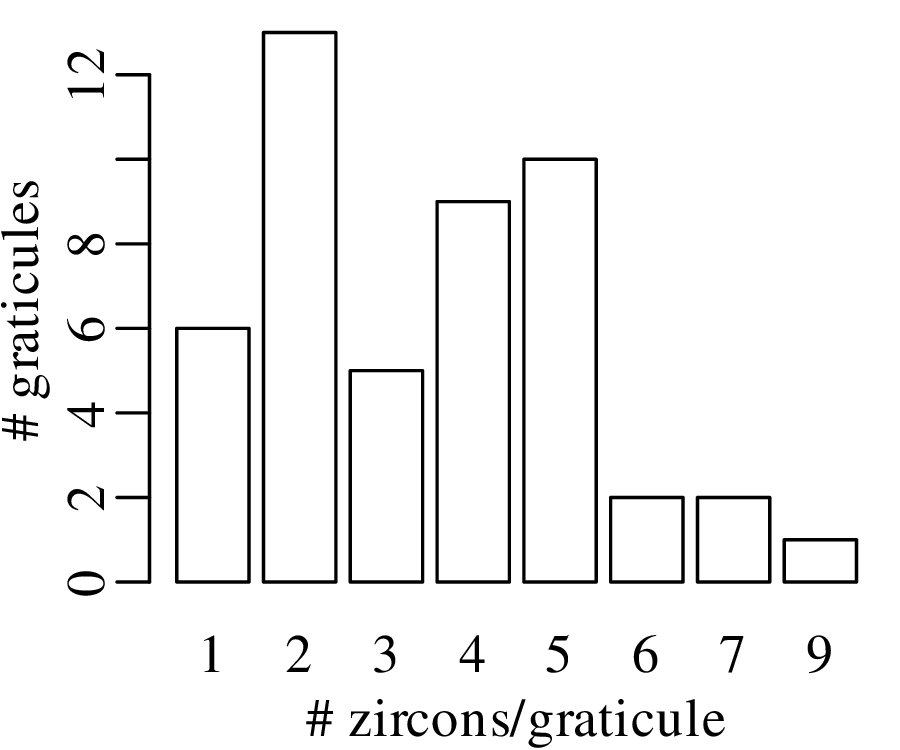

5000 grains of sand have been mounted in an uncovered thin section and imaged with a scanning electron microscope (SEM). The SEM has identified the locations of zircon (ZrSiO4) crystals that are suitable for geochronological dating:

Like the earthquake example, also this zircon example is characterised by similar values for the mean and the variance. This turns out to be a characteristic property of the Poisson distribution.

The Poisson distribution expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a constant mean rate and are independent of the time since the last event. Examples of Poisson variables include the number of

The number of earthquakes including aftershocks and the number of floods per year are not Poisson variables, because they are clustered in time. The Poisson distribution predicts the probability of observing the number of ‘successes’ k given the long term average of successes λ:

| (6.1) |

Thus the Poisson distribution is characterised by a single parameter, λ. Exploring the distribution for different values of this parameter:

| n | 10 | 20 | 50 | 100 | 200 | 500 | 1,000 | 10,000 |

| p | 0.5 | 0.25 | 0.1 | 0.05 | 0.025 | 0.01 | 0.005 | 0.0005 |

| P(k = 2) | 0.0439 | 0.0669 | 0.0779 | 0.0812 | 0.0827 | 0.0836 | 0.0839 | 0.0842 |

The Poisson distribution has one unknown parameter, λ. This parameter can be estimated using the method of maximum likelihood, just like the parameter p of the binomial distribution (Section 5.1). As before, the likelihood function is obtained by swapping the parameter (λ) and the data (k) in the PMF function (Equation 6.1):

| (6.2) |

And as before, we can estimate λ by maximising the likelihood function. Thus, we take the derivative of ℒ with respect to λ and set it to zero:

| (6.3) |

Alternatively, we can also maximise the log-likelihood:

| (6.4) |

and set its derivative w.r.t. λ to zero:

| (6.5) |

Both approaches give exactly the same result because any parameter value that maximises ℒ also

maximises ℒℒ. Thus:

| (6.6) |

which leads to

| (6.7) |

and, hence

| (6.8) |

In other words, the measurement itself equals the ‘most likely’ estimate for the parameter. However, this does not mean that all other values of λ are unlikely. In fact, other values of λ may also be compatible with k, and vice versa. The next section explores which values of λ are reconcilable with a given value of k.

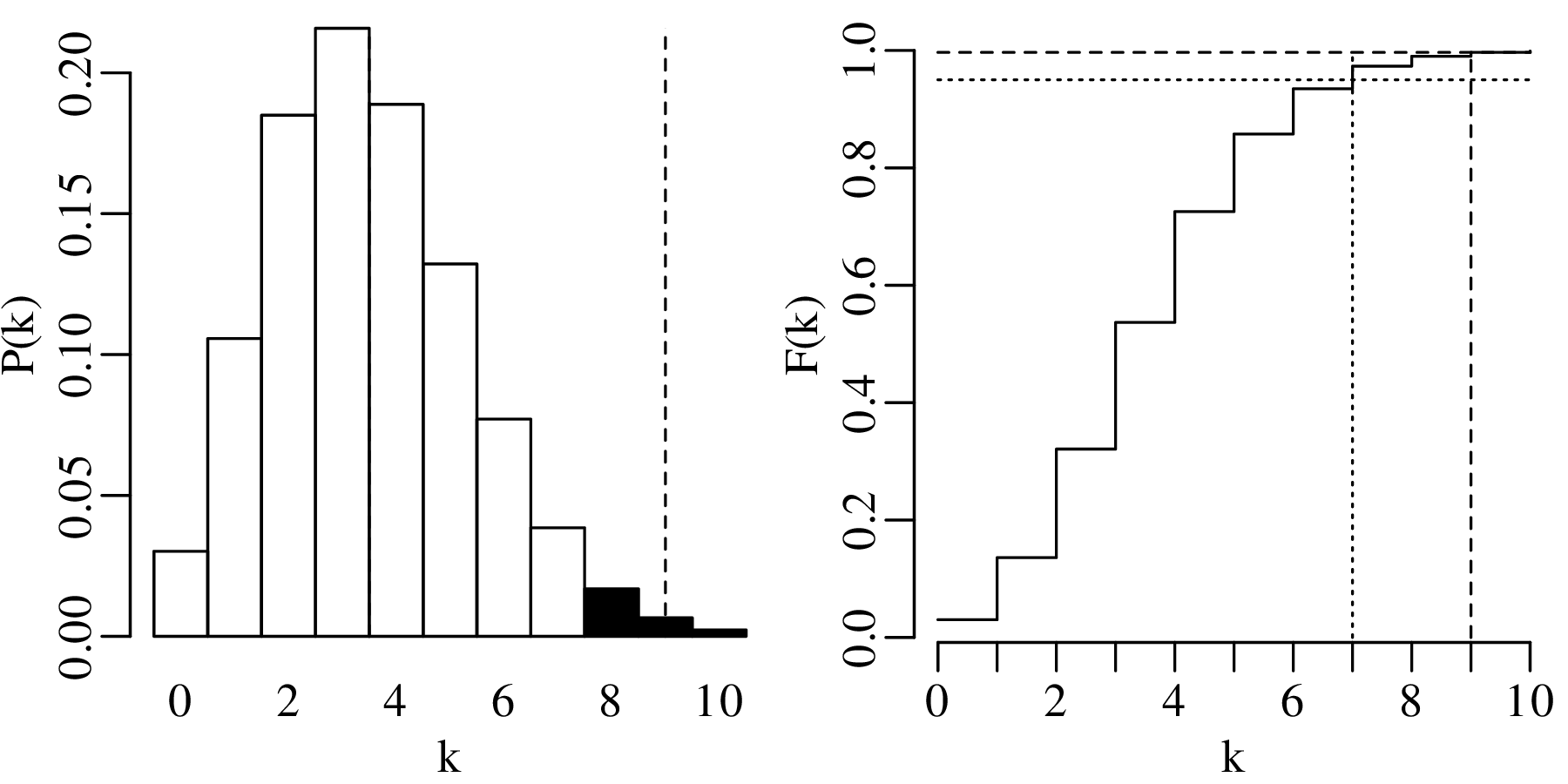

Hypothesis testing for Poisson variables proceeds in exactly the same way as for binomial variables (Section 5.2). For example:

HA (alternative hypothesis):

λ > 3.5

| k | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |

| P(T = k) | 0.030 | 0.106 | 0.185 | 0.216 | 0.189 | 0.132 | 0.077 | 0.038 | 0.017 | 0.007 | 0.002 |

| P(T ≥ k) | 1.000 | 0.970 | 0.864 | 0.679 | 0.463 | 0.275 | 0.142 | 0.065 | 0.027 | 0.010 | 0.003 |

| k | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |

| P(T = k) | 0.030 | 0.106 | 0.185 | 0.216 | 0.189 | 0.132 | 0.077 | 0.038 | 0.017 | 0.007 | 0.002 |

| P(T ≥ k) | 1.000 | 0.970 | 0.864 | 0.679 | 0.463 | 0.275 | 0.142 | 0.065 | 0.027 | 0.010 | 0.003 |

Displaying the rejection region graphically:

The observant reader may have noticed that the hypothesis test of Section 6.3 referred to the zircon counting example of Figures 6.3 – 6.4. The average number of observations per bin in this example was 3.5. Therefore, according to Section 6.2, the maximum likelihood estimate for λ is 3.5 as well. According to our hypothesis test, a value of k = 9 is incompatible with a parameter value of λ = 3.5. Yet the observant reader may also have noticed that a value of k = 9 appears in the dataset (Figure 6.4)!

Does this mean that our data do not follow a Poisson distribution?

The answer is no. The apparent contradiction between the point-counting data and the hypothesis test is a result of multiple hypothesis testing. To understand this problem, we need to go back to the multiplicative rule of page 32. The probability of incurring a type-I error is α. Therefore, the probability of not making a type-I error 1 − α = 0.95. But this is only true for one test. If we perform two tests, then the probability of twice avoiding a type-I error is (1 − α)2 = 0.9025. If we do N tests, then the probability of not making a type-I error reduces to (1 − α)N. Hence, the probability of making a type-I error increases to 1 − (1 − α)N. Figure 6.4 contains 4 × 12 = 48 squares. Therefore, the likelihood of a type-I error is not α but 1 − (1 − α)48 = 0.915.

In other words, there is a 91.5% chance of committing a type-I error when performing 48 simultaneous tests. One way to address this issue is to reduce the confidence level of the hypothesis test from α to α∕N, where N equals the number of tests. This is called a Bonferroni correction. In the case of our zircon example, the confidence level would be reduced from α = 0.05 to α = 0.05∕48 = 0.00104 (1 − α = 0.99896). It turns out that the 99.896 percentile of a Poisson distribution with parameter λ = 3.5 is 10. So the observed outcome of k = 9 zircons in the 48 square graticule is in fact not in contradiction with the null hypothesis, but falls within the expected range of values.

Multiple testing is a common problem in science, and a frequent source of spurious scientific ‘discoveries’. For example, consider a dataset of 50 chemical elements measured in 100 samples. Suppose that you test the degree of correlation between each of these elements and the gold content of the samples. Then it is inevitable that one of the elements will yield a ‘statistically significant’ result. Without a multi-comparison correction, this result will likely be spurious. In that case, repetition of the same experiment on 100 new samples would not show the same correlation. Poorly conducted experiments of this kind are called statistical fishing expeditions, data dredging or p-hacking. Sadly they are quite common in the geological literature, and it is good to keep a sceptical eye out for them.

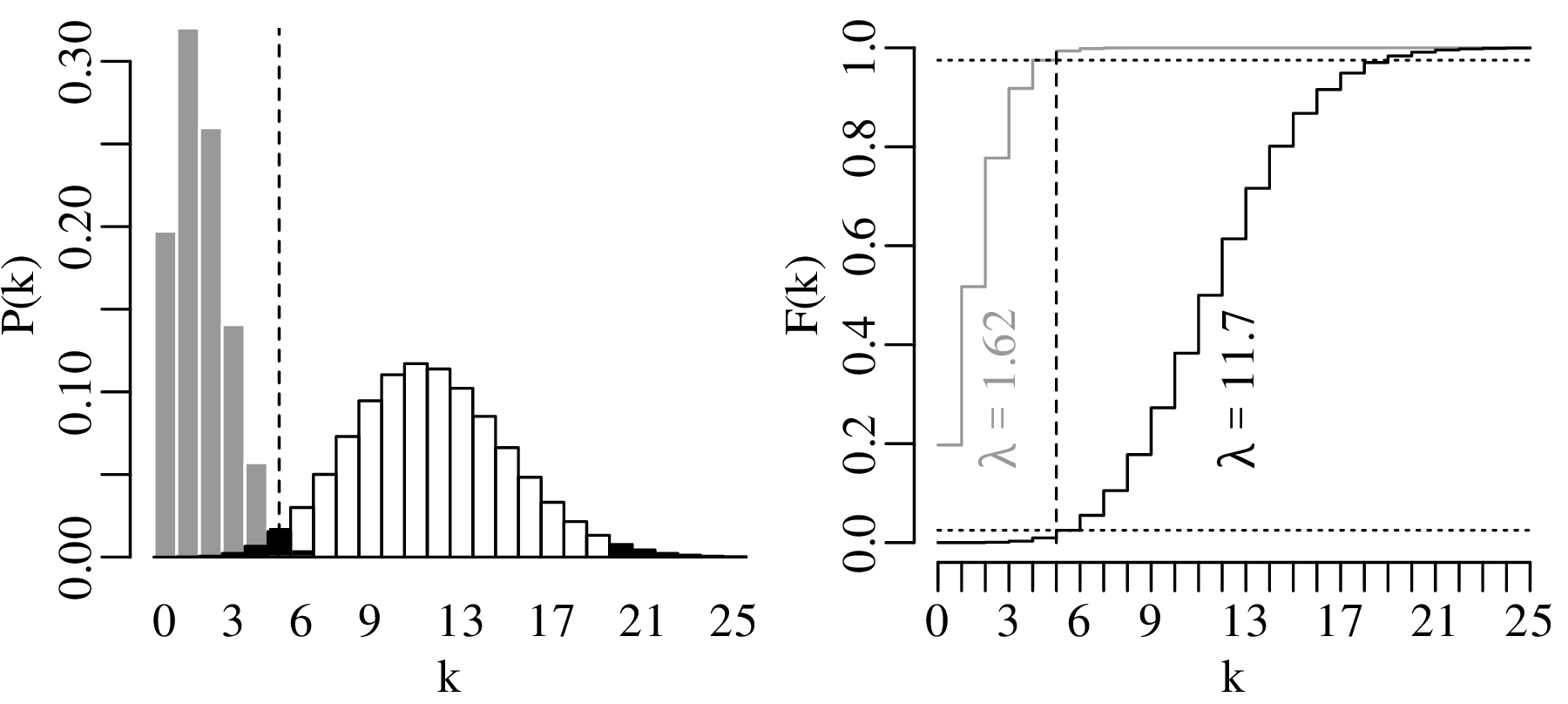

The construction of confidence intervals for the Poisson parameter λ proceeds in pretty much the same way as it did for the binomial parameter p. Let us construct a 95% confidence interval for λ given the observation that k = 5 magnitude 5.0 or greater earthquakes occurred in the US in 2016.

The lower limit of a 95% confidence interval for the number of earthquakes per year is marked by the value of λ that is more than 2.5% likely to produce an observation of k = 5 or greater. This turns out to be λ = 1.62. The upper limit of the confidence interval is marked by the value of λ that is more than 97.5% likely to produce an observation of k = 5 or smaller. This value is λ = 11.7. Hence, the 95% confidence interval is [1.62,11.7]. Note that this interval includes the average of all the 100 preceding years.

Repeating the exercise for all observations in Figure 6.1 yields the following set of 100 confidence intervals for λ:

With a confidence level of α = 0.05, there should be a 5% chance of committing a type-I error. Therefore, we would expect 5% of the samples to be rejected, and 5% of the error bars to exclude the true parameter value. The observed number of rejected samples (3/100) is in line with those expectations.

The binomial (Chapter 5) and Poisson (Chapter 6) distributions are just two of countless possible distributions. Here are a few examples of other distributions that are relevant to Earth scientists:

The negative binomial distribution models the number of successes (or failures) in a sequence of Bernoulli trials before a specified number of failures (or successes) occurs. For example, it describes the number of dry holes x that are drilled before r petroleum discoveries are made given a probability of discovery p:

| (7.1) |

The multinomial distribution is an extension of the binomial distribution where more than two outcomes are possible. For example, it describes the point counts of multiple minerals in a thin section. Let p1,p2,…,pm be the relative proportions of m minerals (where ∑ i=1mpi = 1), and let k1,k2,…,km be their respective counts in the thin section (where ∑ i=1mki = n). Then:

| (7.2) |

The binomial and Poisson distributions are univariate distributions that aim to describe one-dimensional datasets. However the multinomial distribution is an example of a multivariate probability distribution, which describes multi-dimensional datasets.

The uniform distribution is the simplest example of a continuous distribution. For any number x between the minimum a and maximum b:

| (7.3) |

x does not have to be an integer but is free to take any decimal value. Therefore, f(x|a,b) is not referred to as a probability mass function (PMF) but as a probability density function (PDF). Whereas PMFs are represented by the letter P, we use the letter f to represent PDFs. This is because the probability of observing any particular value x is actually zero. For continuous variables, calculating probabilities requires integration between two values. For example:

| (7.4) |

The cumulative density function (CDF) of a continuous variable is also obtained by integration rather than summation. For the uniform distribution:

| (7.5) |

Earthquakes follow a uniform distribution across the day, because they are equally likely to occur at 3:27:05 in the morning as they are at 17:02:58 in the afternoon, say.

We will not discuss these, or most other distributions, in any detail. Instead, we will focus our attention on one distribution, the Gaussian distribution, which is so common that it is also known as the normal distribution, implying that all other distributions are ‘abnormal’.

Let us revisit the Old Faithful dataset of Figure 2.15.

The next three figures derive three new distributions by taking the sum of n randomly selected values from the geyser eruption durations:

Figure 7.5 has the characteristic bell shape of a Gaussian distribution, which is described by the following PDF:

| (7.6) |

where μ is the mean and σ is the standard deviation. It can be mathematically proven that the sum of n randomly selected values converges to a Gaussian distribution, provided that n is large enough. This convergence is guaranteed regardless of the distribution of the original data. This mathematical law is called the Central Limit Theorem.

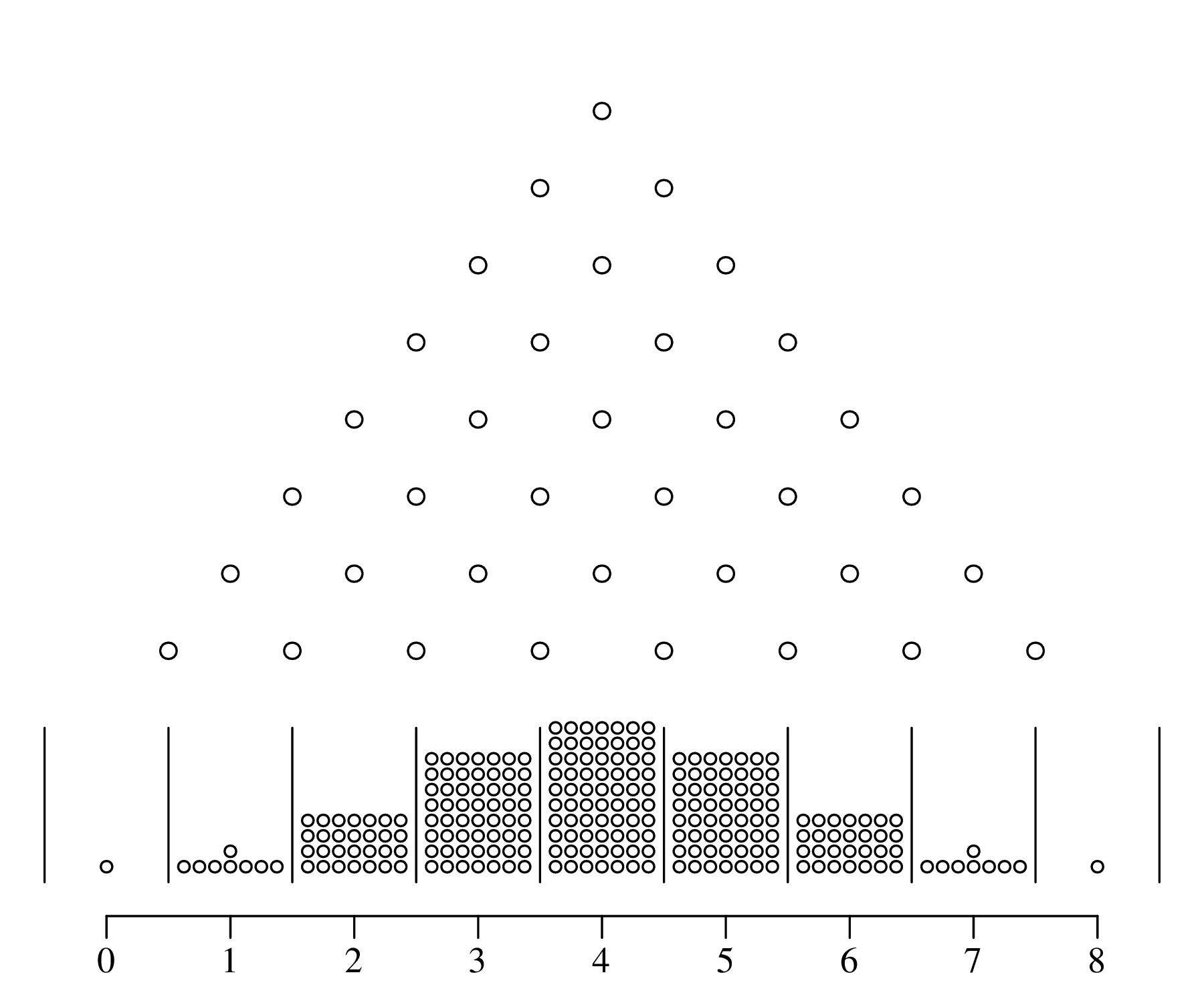

The Gaussian distribution is known as the normal distribution because it naturally arises from additive processes, which are very common in nature. It is easy to create normally distributed values in a laboratory environment. There even exists a machine that generates normally distributed numbers:

Additive processes are very common in physics. For example, when a drop of ink disperses in a volume of water, the ink molecules spread by colliding with the water molecules. This Brownian motion creates a Gaussian distribution, in which most ink molecules remain near the original location (μ), with wide tails in other directions.

The binomial, Poisson, negative binomial, multinomial, uniform and univariate normal distributions are but a small selection from an infinite space of probability distributions. These particular distributions were given a specific name because they commonly occur in nature. However the majority of probability distributions do not fall into a specific parametric category. For example, the bivariate distribution of Old Faithful eruption gaps and durations (Figure 2.15) is not really captured by any of the aforementioned distributions. In fact, it is quite easy to invent one’s own distributions. Here are four examples of such creations in two-dimensional data space:

| (7.7) |

This matrix expression is completely described by five parameters: the means μx and μy, the standard deviations σx and σy, and the covariance σx,y. One-dimensional projections of the data on the X- and Y-axis yield two univariate Gaussian distributions.

The univariate normal distribution is completely controlled by two parameters:

μ and σ are unknown but can be estimated from the data. Just like the binomial parameter p (Section 5.1) and the Poisson parameter λ (Section 6.2), this can be done using the method of maximum likelihood. Given n data points {x1,x2,…,xn}, and using the multiplication rule, we can formulate the normal likelihood function as

| (7.8) |

μ and σ can be estimated by maximising the likelihood or, equivalently, the log-likelihood:

| (7.9) |

Taking the derivative of ℒℒ with respect to μ and setting it to zero:

| (7.10) |

which is the same as Equation 3.1. Using the same strategy to estimate σ:

| (7.11) |

which is almost the same as the formula for the standard deviation that we saw in Section 3.2 (Equation 3.3):

| (7.12) |

There are just two differences between Equations 3.3/7.12 and Equation 7.11:

The two differences are related to each other. The subtraction of 1 from n is called the Bessel correction and accounts for the fact that by using an estimate of the mean (), rather than the true value of the mean (μ), we introduce an additional source of uncertainty in the estimate of the standard deviation. This additional uncertainty is accounted for by subtracting one degree of freedom from the model fit.

Finally, for multivariate normal datasets, we can show that (proof omitted):

| (7.13) |

or, if μx and μy are unknown and must be estimated from the data as well:

| (7.14) |

Suppose that the extinction of the dinosaurs has been dated at 65 Ma in one field location, and a meteorite impact has been dated at 64 Ma elsewhere. These two numbers are effectively meaningless in the absence of an estimate of precision. Taken at face value, the dates imply that the meteorite impact took place 1 million years after the mass extinction, which rules out a causal relationship between the two events. However, if the analytical uncertainty of the age estimates is significantly greater than 1 Myr, then such a causal relationship remains plausible. There are two aspects of analytical uncertainty that need to be considered:

accuracy is the closeness of a statistical estimate to its true (but unknown) value.

precision is the closeness of multiple measurements to each other.

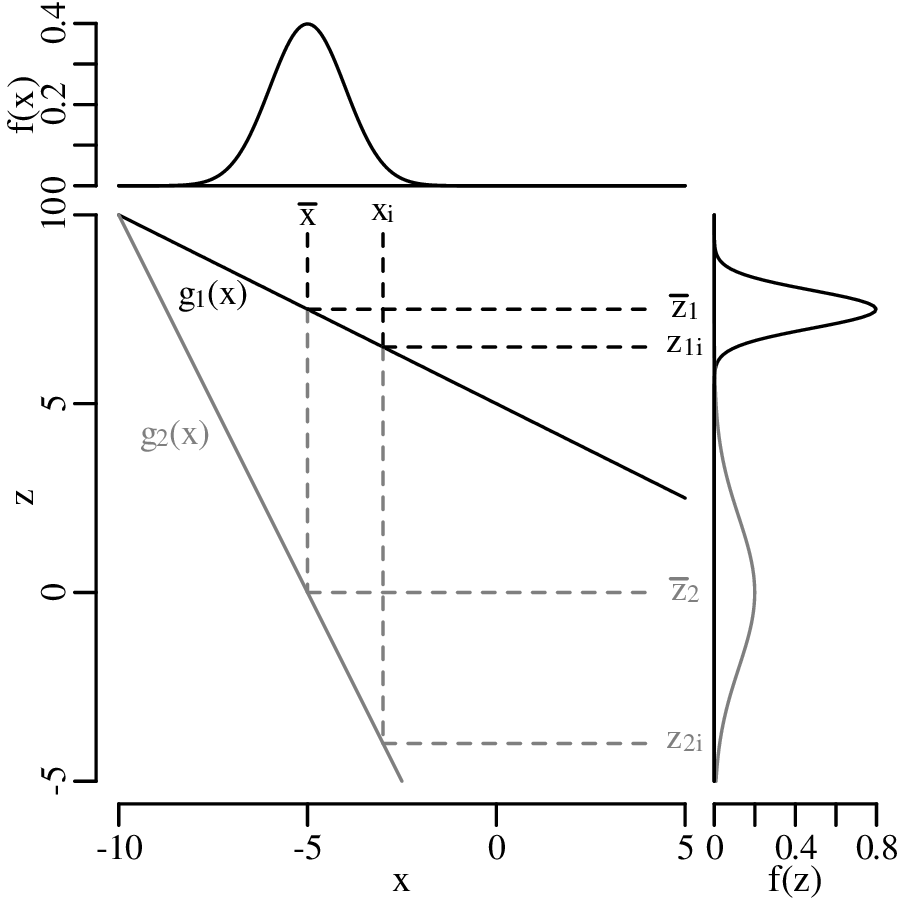

Suppose that a physical quantity (z) is calculated as a function (g) of some measurements (x):

| (8.1) |

and suppose that replicate measurements of x (xi, for i = 1...n) follow a normal distribution with mean and standard deviation s[x]. Then these values can be used to estimate s[z], the standard deviation of the calculated value z. If the function g is (approximately) linear in the vicinity of , then small deviations ( − xi) of the measured parameter xi from the mean value are proportional to small deviations ( − zi) of the estimated quantity z from the mean value = g(x).

Recall the definition of the sample standard deviation and variance (Equation 7.12):

| (8.2) |

Let ∂z∕∂x be the slope of the function g with respect to the measurements x (evaluated at )1 then:

| (8.3) |

Plugging Equation 8.3 into 8.2, we obtain:

| (8.4) |

s[z] is the standard error of z, i.e. the estimated standard deviation of the inferred quantity z.

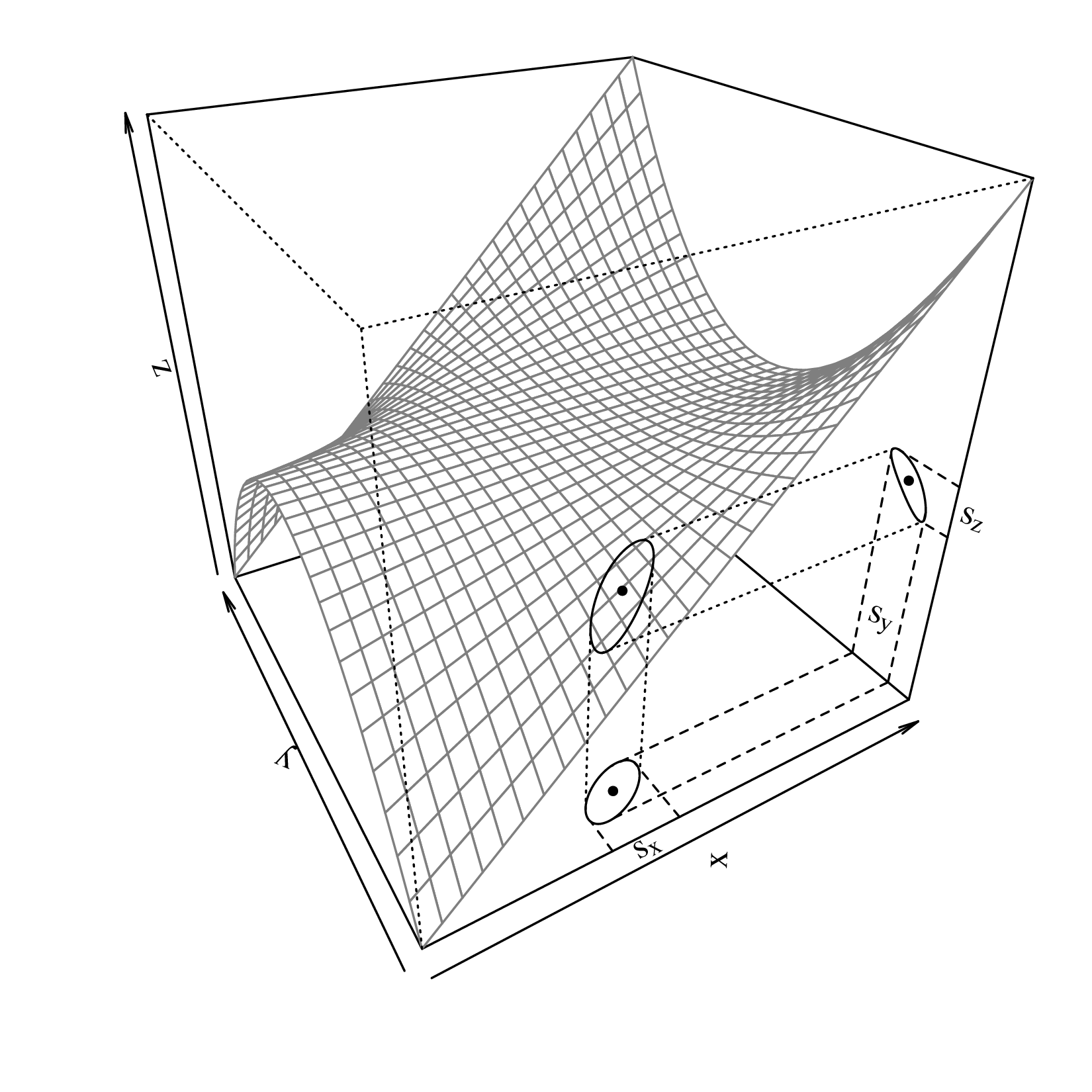

Equation 8.4 is the general equation for the propagation of uncertainty with one variable. Next, let us move on to multivariate problems. Suppose that the our estimated quantity (z) is calculated as a function (g) of two measurements (x and y):

| (8.5) |

and further suppose that x and y follow a bivariate normal distribution (Equation 7.7), then μx, μy, σx, σy and σx,y can all be estimated (as ,ȳ,s[x],s[y] and s[x,y]) from the input data ({xi,yi}, for i = 1...n). These values can then be used to infer s[z], the standard error of z, in exactly the same way as for the one dimensional case.

Differentiating g with respect to x and y:

| (8.6) |

Plugging Equation 8.6 into Equation 8.2:

| (8.7) |

After some rearranging (similar the derivation of Equation 8.4), this leads to:

| (8.8) |

This is the general equation for the propagation of uncertainty with two variables, which can also be written in a matrix form:

| (8.9) |

where the innermost matrix is known as the variance-covariance matrix and the outermost matrix (and its transpose) as the Jacobian matrix. The advantage of the matrix formulation is that it can easily be scaled up to three or more dimensions.

Equation 8.8/8.9 is the generic error propagation formula. This section will apply this formula to some common mathematical functions. It will use the following notation:

a,b,c,… are constants, i.e. values that are known without uncertainty (s[a] = s[b] = s[c] = … = 0);

x and y are measurements whose uncertainties (s[x] and s[y]) were estimated from replicates;

z = g(x,y) is the estimated quantity.

For example, z = x∕2 + y3 can be written as z = ax + yb, where a = 1∕2 and b = 3.

Taking the derivatives of z with respect to x and y:

Plugging these into Equation 8.8:

| (8.10) |

If x and y are uncorrelated (i.e., s[x,y] = 0), then the variance of the sum equals the sum of the variances.

The partial derivatives of z with respect to x and y are

Plugging these into Equation 8.8:

| (8.11) |

Note that, if x and y are uncorrelated, then Equations 8.10 and 8.11 are identical.

The partial derivatives are

Plugging these into Equation 8.8:

Dividing both sides of this equation by z2 = (axy)2:

which simplifies to:

| (8.12) |

(s[x]∕x) and (s[y]∕y) represent the relative standard deviations of x and y. These are also known as the coefficients of variation (CoV). If x and y are uncorrelated, then the squared CoVs of a product equals the sum of the squared CoVs.

The partial derivatives are

Plugging these into Equation 8.8:

Dividing both sides of this equation by z2 = 2:

which simplifies to:

| (8.13) |

If x and y are uncorrelated, then the uncertainty of the quotient (Equation 8.13) equals the uncertainty of the product (Equation 8.12).

The partial derivative of z w.r.t. x is

Plugging this into Equation 8.8:

Dividing both sides by z2 = 2:

which simplifies to

| (8.14) |

The partial derivative of z w.r.t. x is

Plugging this into Equation 8.8:

| (8.15) |

The partial derivative of z w.r.t. x is

Plugging this into Equation 8.8:

Dividing both sides by z2 = 2:

which simplifies to

| (8.16) |

Error propagation for more complicated functions can either be derived from Equation 8.8 directly, or can be done with Equations 8.10–8.16 using the chain rule. For example, consider the following equation:

| (8.17) |

which describes the distance d travelled by an object as a function of time t, where d0 is the position at t = 0, v0 is the velocity at t = 0, and g is the acceleration. Although Equation 8.17 does not directly fit into any of the formulations that we have derived thus far, it is easy to define two new functions that do. Let

| (8.18) |

and

| (8.19) |

then Equation 8.18 matches with the formula for addition (Equation 8.10):

where a ≡ d0, b ≡ v0, x ≡ t, c ≡ 0 and y is undefined. Then uncertainty propagation of Equation 8.18 using Equation 8.10 gives:

| (8.20) |

Similarly, Equation 8.19 matches with the formula for powering (Equation 8.16):

where a ≡ g, b ≡ 2 and x ≡ t. Applying Equation 8.16 to Equation 8.19 yields:

| (8.21) |

Combining Equations 8.18 and 8.19 turns Equation 8.17 into a simple sum:

whose uncertainty can be propagated with Equation 8.10:

Substituting Equation 8.20 for s[x]2 and Equation 8.21 for s[y]2:

which leads to the following expression for the uncertainty of d:

| (8.22) |

To illustrate the use of this formula, suppose that d0 = 0 m, v0 = 10 m/s and g = 9.81 m/s2. Further suppose that we measure the time t with a watch that has a 1/10th of a second precision2 (s[t] = 0.1 s). Then we can predict how far the object will have travelled after 5 seconds:

| (8.23) |

Using Equation 8.22, the uncertainty3 of d is given by:

| (8.24) |

Thus the predicted displacement after 5 seconds can be reported as 295.250 ± 9.861 m, or as 295.2 ± 9.9 m if we round the estimate to two significant digits. Note how Equations 8.23 and 8.24 specifies the units of all the variables. Checking that these units are balanced is good practice that avoids arithmetic errors.

As defined in Section 8.1, the standard error is the estimated standard deviation of some derived quantity obtained by error propagation. The mean of set of numbers is an example of such a derived quantity, and its estimated uncertainty is called the standard error of the mean. Let {x1,x2,…xn} be n measurements of some quantity x, and let be its mean (Equation 3.1):

Applying the error propagation formula for a sum (Equation 8.10):

If all the xis were drawn from the same normal distribution with standard deviation s[x], then

which simplifies to

| (8.25) |

The standard error of the mean monotonically decreases with the square root of sample size. In

other words, we can arbitrarily increase the precision of our analytical data by acquiring more data.

However, it is important to note that the same is generally not the case for the accuracy

of those data (Figure 8.1). To illustrate the effect of the square root rule, consider the

statistics of human height as an example. The distribution of the heights of adult people is

approximately normal with a mean of 165 cm and a standard deviation of 10 cm. There about

5 billion adult humans on the planet. Averaging their heights should produce a value of

165 cm with a standard error of 10∕ = 1.4 × 10−4 cm. So even though there is a lot

of dispersion among the heights of humans, the standard error of the mean is only 1.5

microns.

We used the method of maximum likelihood to estimate the parameters of the binomial (Section 5.1), Poisson (Section 6.2) and normal (Section 7.4) distributions. An extension of the same method can be used to estimate the standard errors of the parameters without any other information. Let ẑ be the maximum likelihood estimate of some parameter z. We can approximate the log-likelihood with a second order Taylor series in the vicinity of ẑ:

By definition, ∂ℒℒ∕∂z = 0 at ẑ. Therefore, the likelihood (ℒ(z) = exp[ℒℒ(z)]) is proportional to:

which can also be written as:

This equation fits the functional form of the normal distribution (Equation 7.6):

which leads to

| (8.26) |

−ẑ is known as the Fisher Information. Equation 8.26 can be generalised to multiple

dimensions:

| (8.27) |

where is the estimated covariance matrix and (ℋ)−1 is the inverse of the (‘Hessian’) matrix of second

derivatives of the log-likelihood function with respect to the parameters.

To illustrate the usefulness of Equation 8.26, let us apply it to the Poisson distribution. Recalling

the log-likelihood function (Equation 6.4) and denoting the maximum likelihood estimate of the

parameter by :

Taking the second derivative of ℒℒ with respect to λ:

| (8.28) |

Plugging Equation 8.28 into 8.26:

Recalling that = k (Equation 6.8), we get:

| (8.29) |

Thus we have proved that the variance of a Poisson variable equals its mean, which was already shown empirically in Chapter 6.

Using the same rationale to estimate the standard error of a binomial variable, we take the logarithm of Equation 5.3:

the second derivative of which is:

At the maximum likelihood estimate = n∕k (Equation 5.4), this becomes:

so that the variance of p is:

which proves Equation 5.9.

The previous chapters have introduced a plethora of parametric distributions that allow us to test hypotheses and assess the precision of experimental results. However, these inferences are only as strong as the assumptions on which they are based. For example, Chapter 5 used a binomial distribution to assess the occurrence of gold in a prospecting area, assuming that the gold was randomly distributed across all the claims; and Chapter 6 used a Poisson distribution to model earthquakes, assuming that the earthquake catalog was free of clusters and that all aftershocks had been removed from it. This chapter will introduce some strategies to test these assumptions, both graphically and numerically.

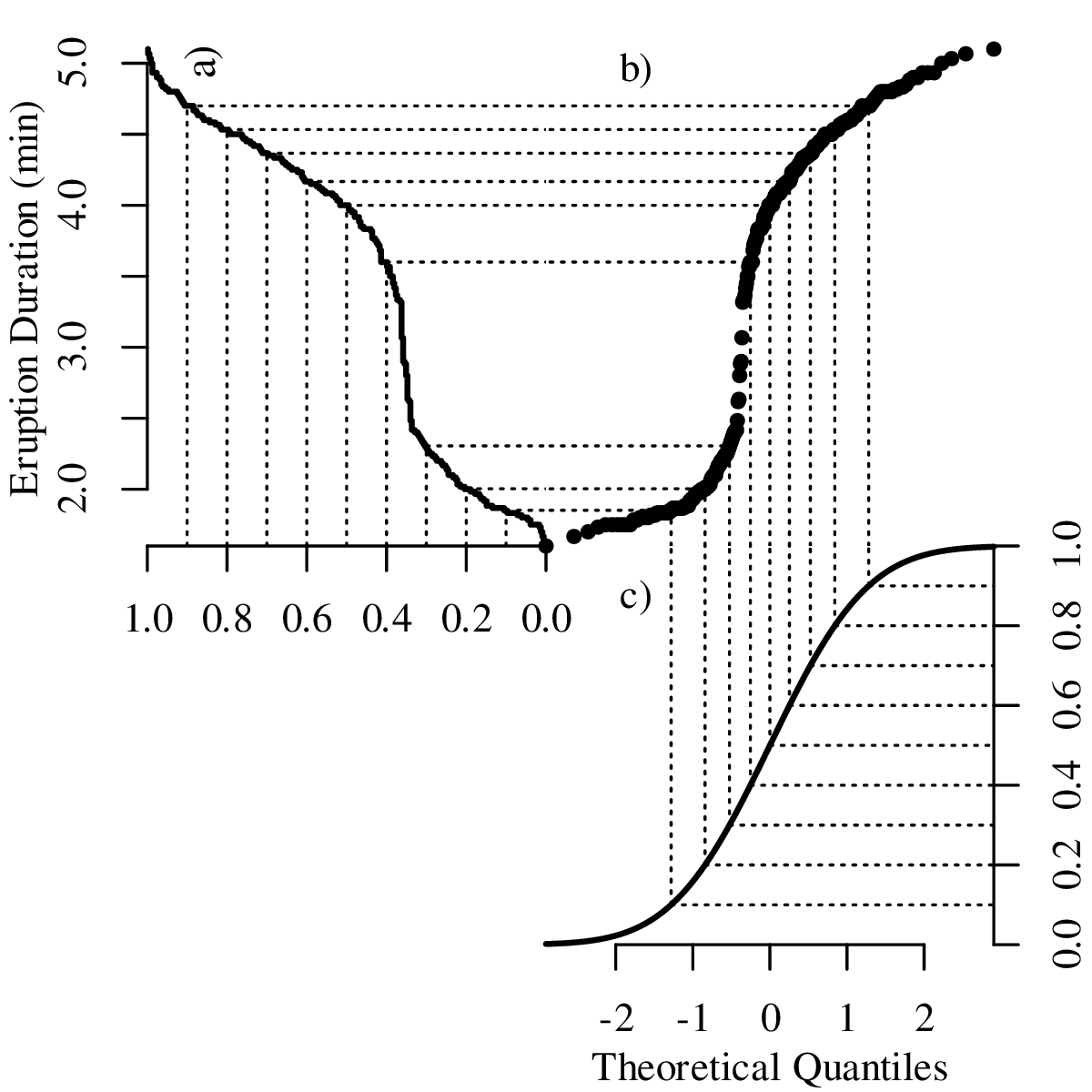

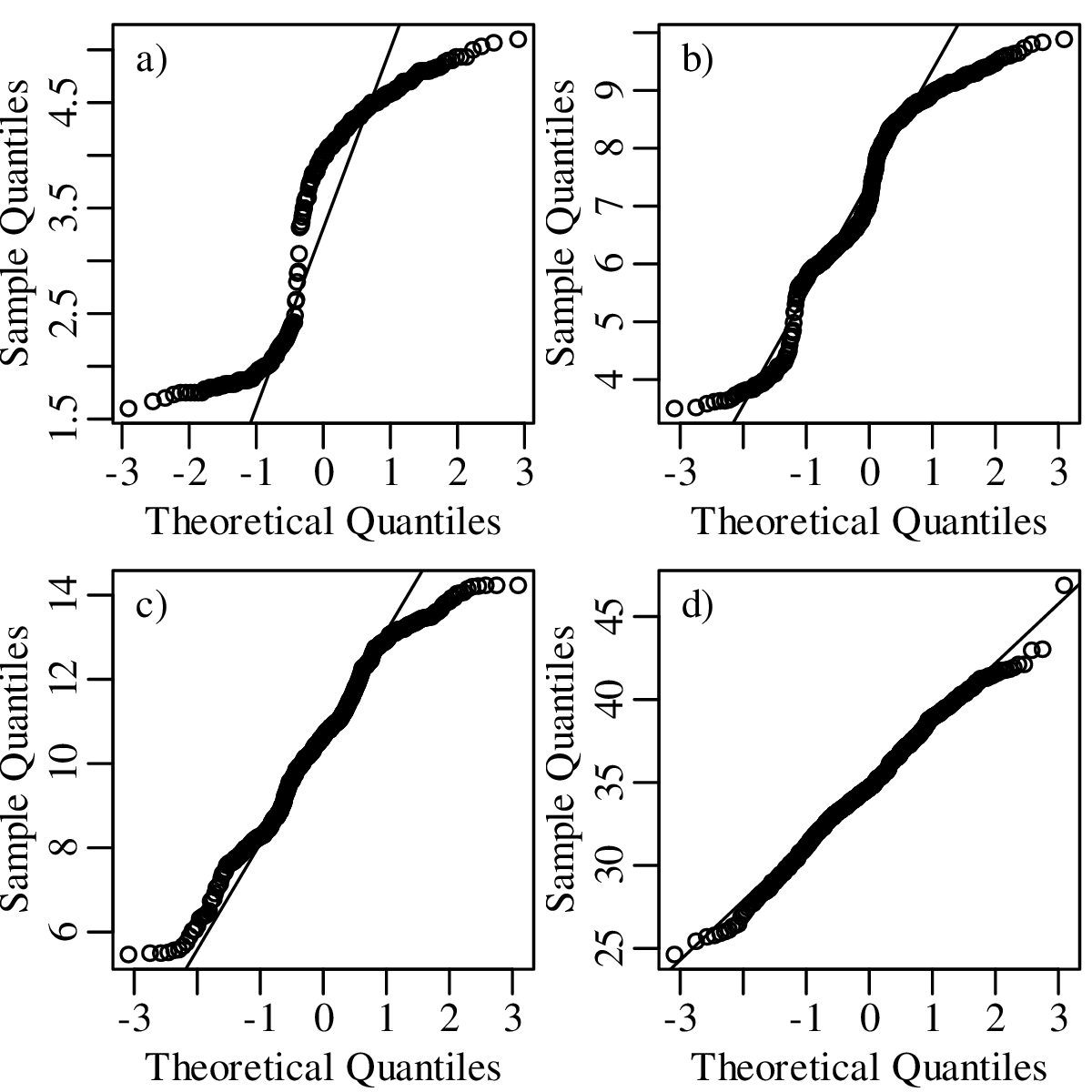

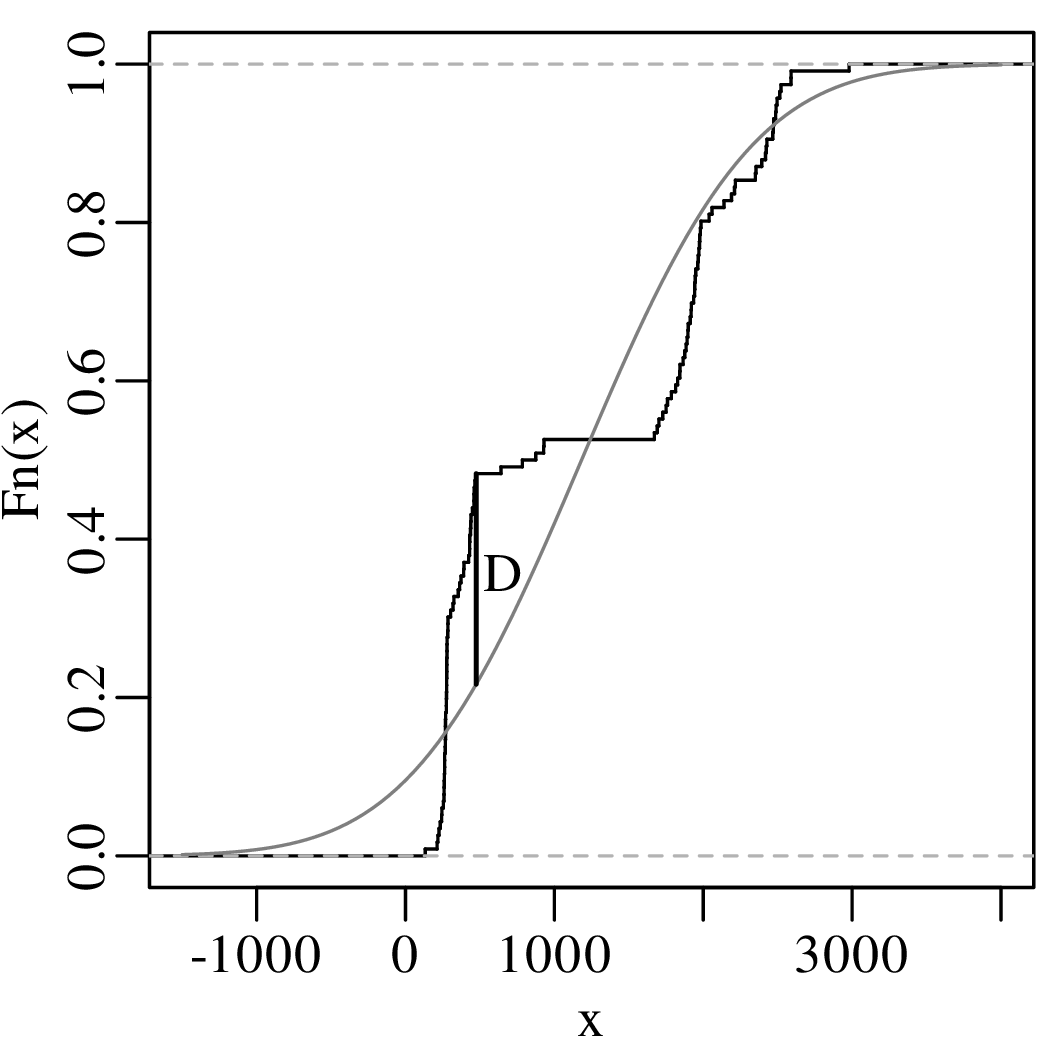

As the name suggests, a quantile-quantile or Q-Q plot is a graphical method for comparing two probability distributions by plotting their quantiles against each other. Q-Q plots set out the quantiles of a sample against those of a theoretical distribution, or against the quantiles of another sample. For example, comparing the Old Faithful eruption duration dataset to a normal distribution:

We can use the Q-Q plot to illustrate the effect of the Central Limit Theorem (Figure 7.2):

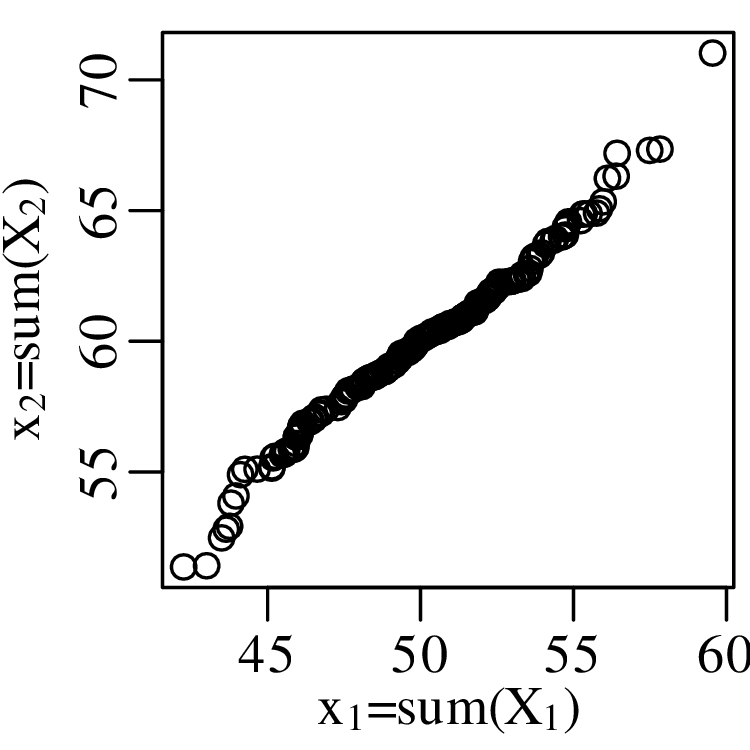

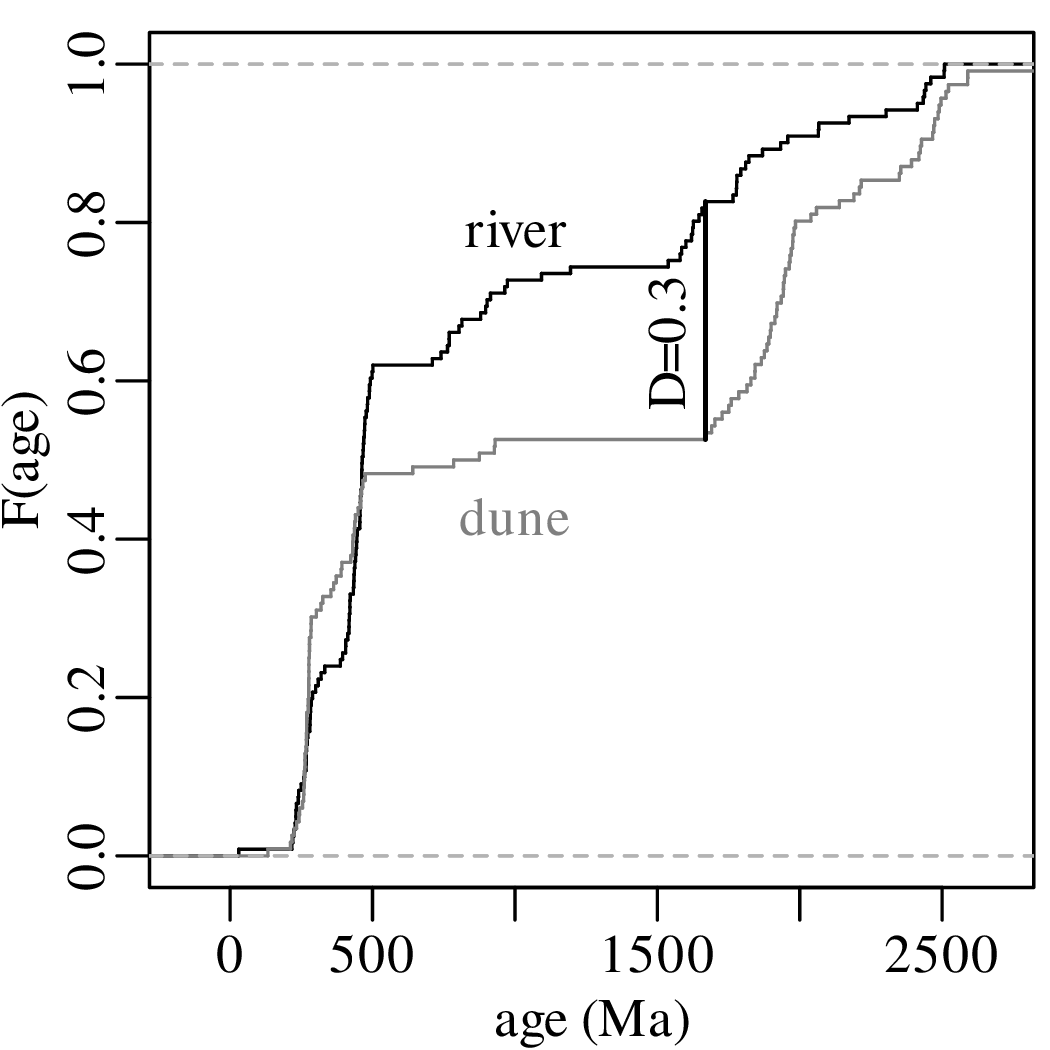

Q-Q plots cannot only be used to compare sample distributions with theoretically predicted parametric distributions, but also to compare one sample with another. For example:

The Q-Q plot in Figure 9.3 compared two samples that were normally distributed with different means. However, it may not be clear if the difference between the means is statistically significant or not. Before we address this problem, let us first look at a related but slightly simpler problem. Consider the density of 5 gold coins as an example:

| coin # | 1 | 2 | 3 | 4 | 5 |

| density (g/cm3) | 19.09 | 19.17 | 19.31 | 19.07 | 19.18 |

The density of pure gold is 19.30 g/cm3. We might ask ourselves the question if the five coins are

made of pure gold, or if they consist of an alloy that mixes gold with a less dense metal? To answer

this question, we assume that the sample mean follows a normal distribution with mean μ

and standard deviation σ∕ (following the same derivation as Equation 8.25). Thus, the

parameter

| (9.1) |

follows a standard normal distribution whose mean is zero and whose standard deviation is one. As we have seen in Section 7.4, σ is unknown but can be estimated by the sample standard deviation s[x]. We can then replace Equation 9.1 with a new parameter

| (9.2) |

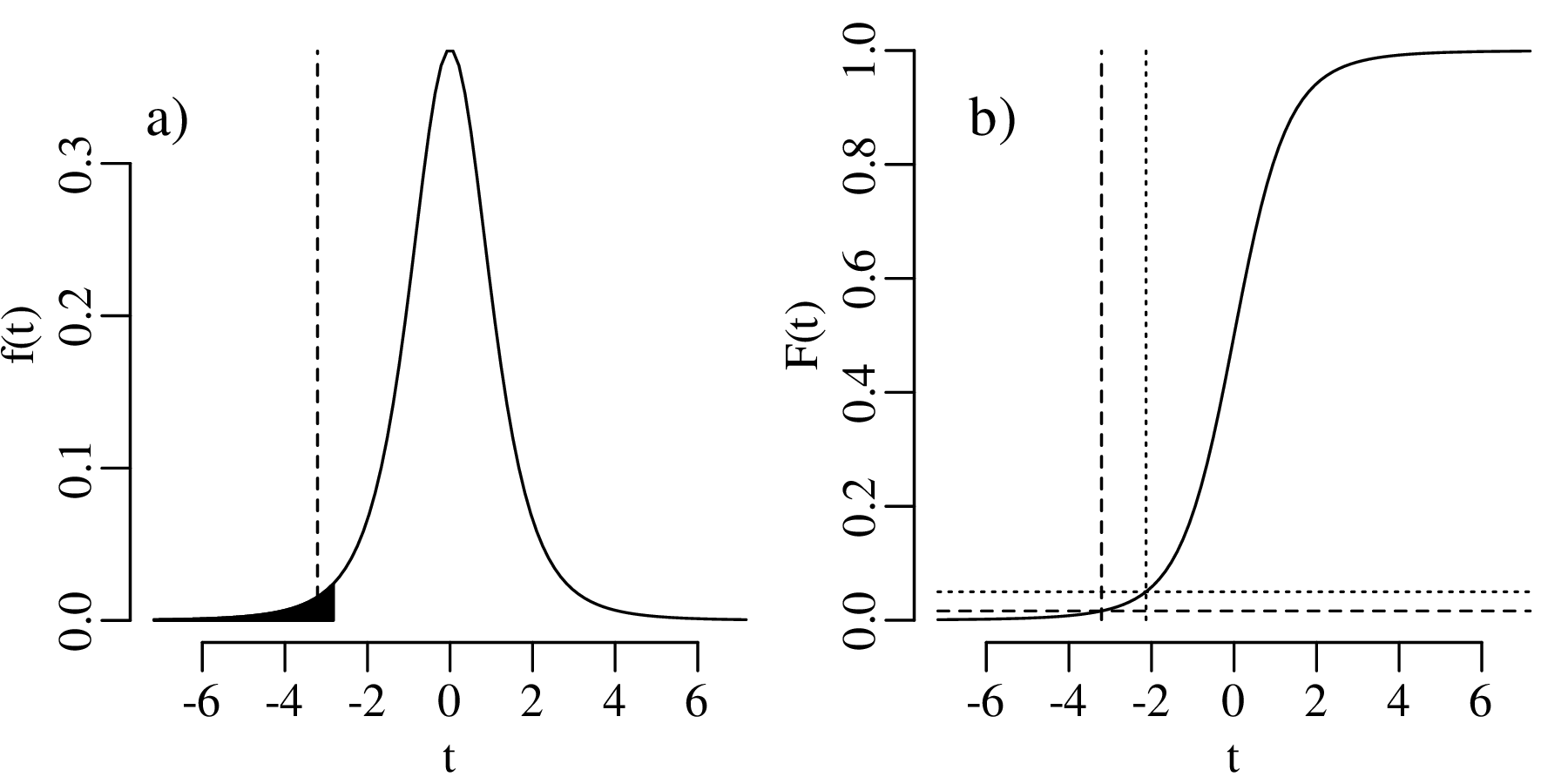

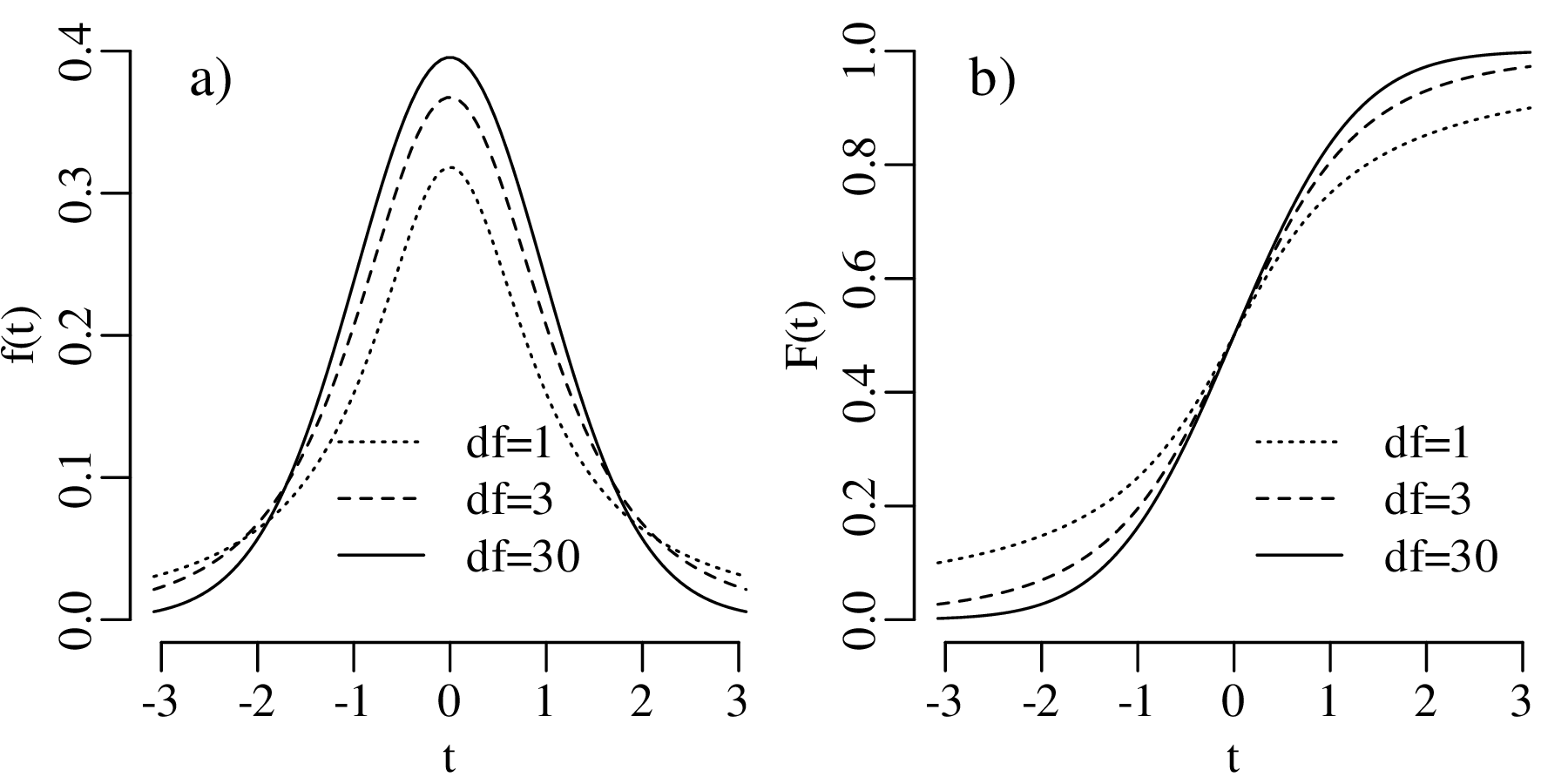

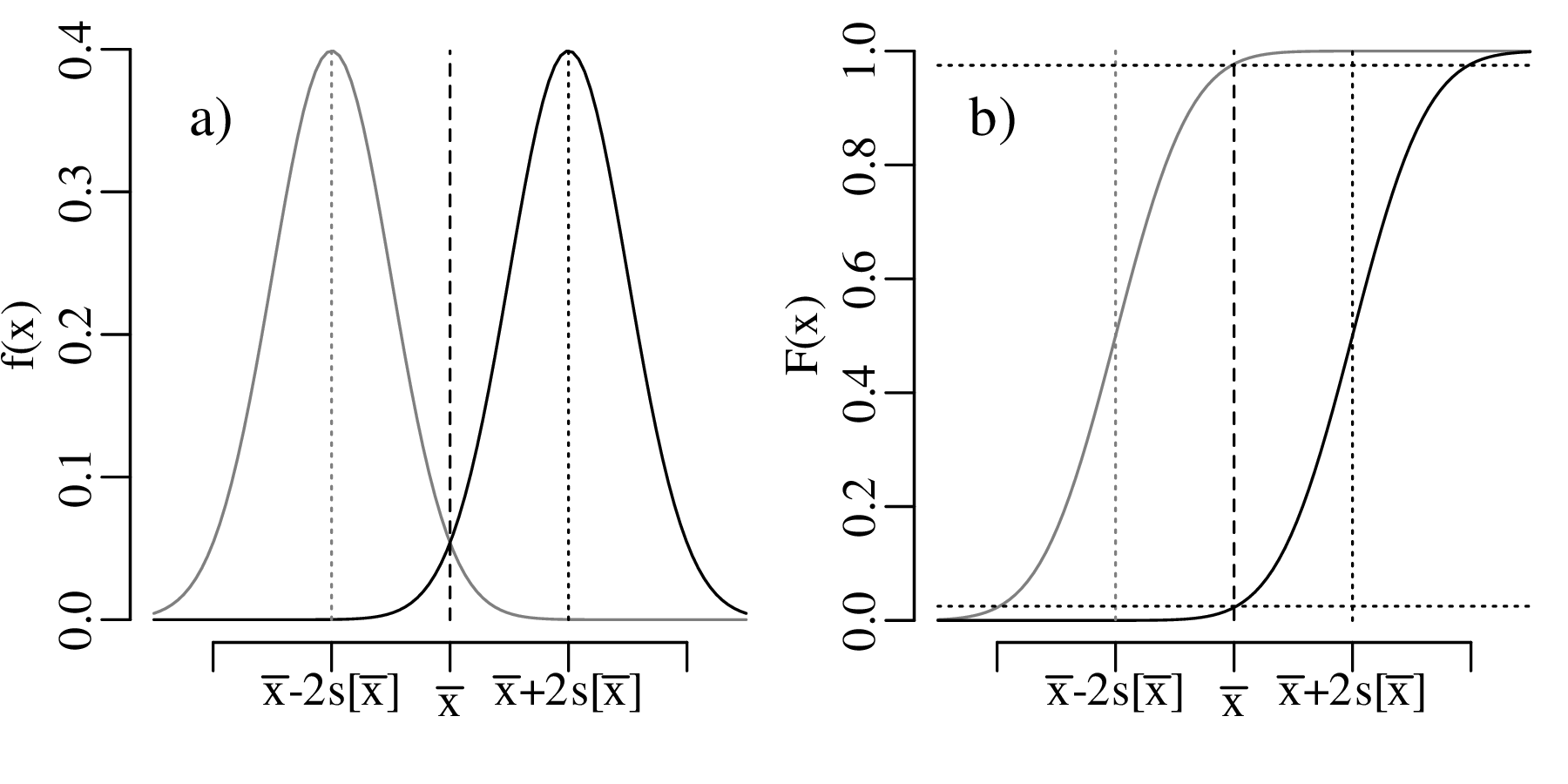

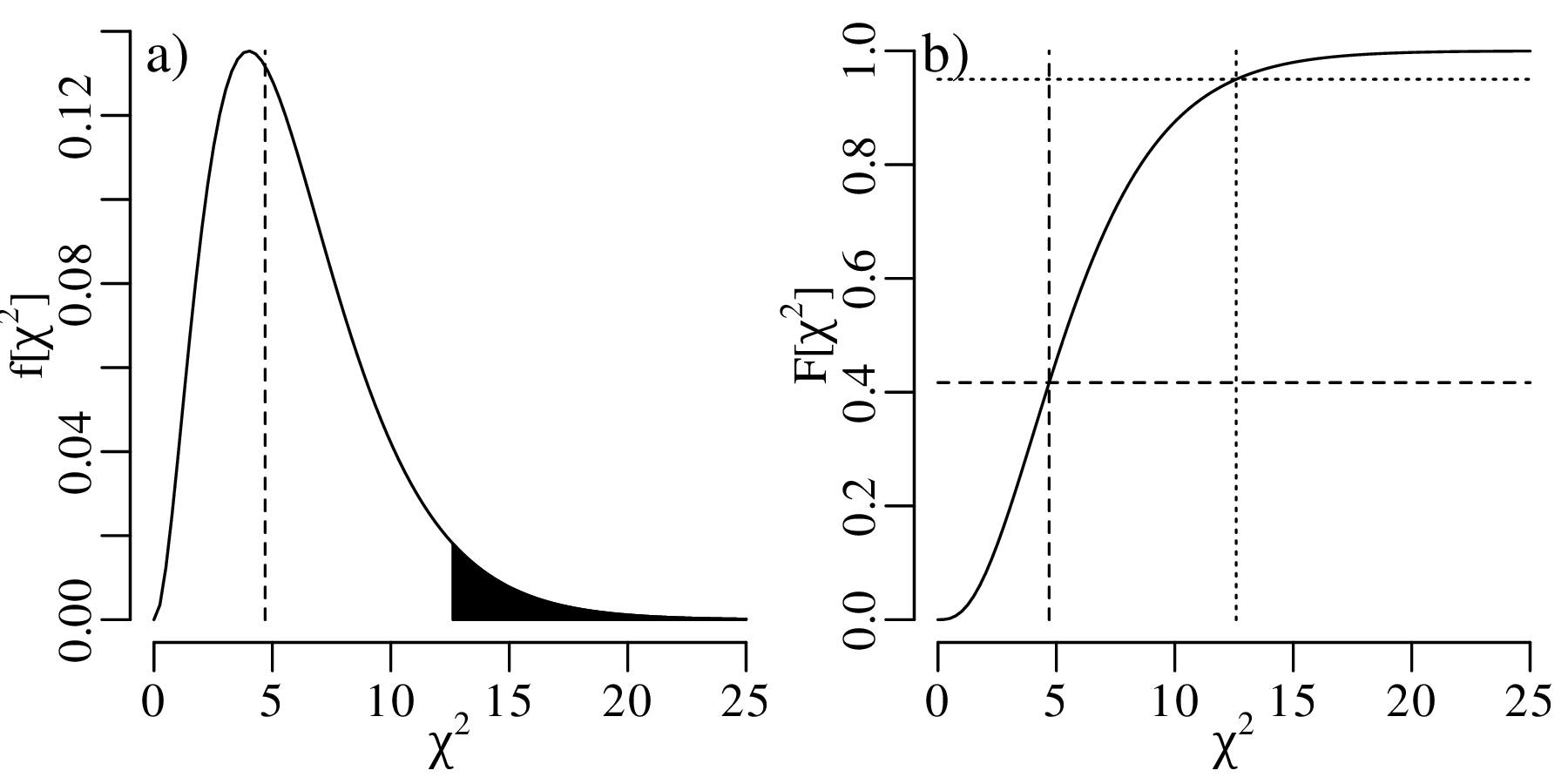

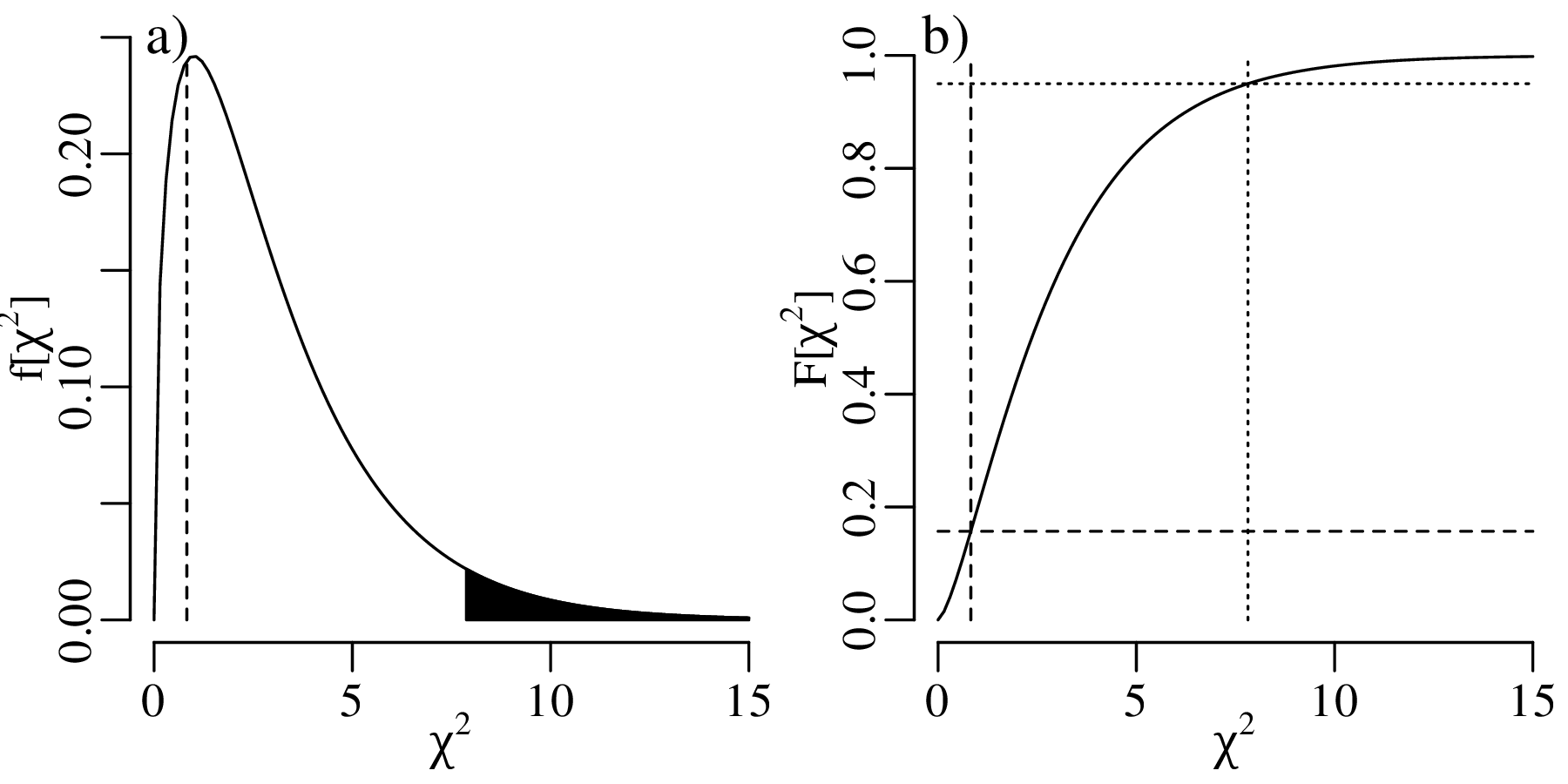

However, t does not follow a normal distribution but a Student t-distribution with (n − 1) degrees of freedom where the (n− 1) plays a similar role as the Bessel correction of Section 7.4. It accounts for the ‘double use’ of the data to estimate both the mean and the standard deviation of the data. The t-distribution forms the basis of a statistical test that follows the same sequence of steps as used in Sections 5.2 and 6.3.

HA (alternative hypothesis):

μ < 19.30

| (9.3) |

where = 19.164, μ∘ = 19.30, s[x] = 0.0948, and n = 5 so that t = −3.2091.

| t | -3.75 | -3.2091 | -2.78 | -2.13 | -1.53 | -0.74 | 0 | 0.74 | 1.53 | 2.13 | 2.78 | 3.75 |

| P(T ≤ t) | 0.01 | 0.0163 | 0.025 | 0.05 | 0.1 | 0.25 | 0.5 | 0.75 | 0.9 | 0.95 | 0.975 | 0.99 |

where the observed value is marked in italics.

| t | -3.75 | -3.2091 | -2.78 | -2.13 | -1.53 | -0.74 | 0 | 0.74 | 1.53 | 2.13 | 2.78 | 3.75 |

| P(T ≤ t) | 0.01 | 0.0163 | 0.025 | 0.05 | 0.1 | 0.25 | 0.5 | 0.75 | 0.9 | 0.95 | 0.975 | 0.99 |

The one-sided rejection region consists of all t < −2.10.

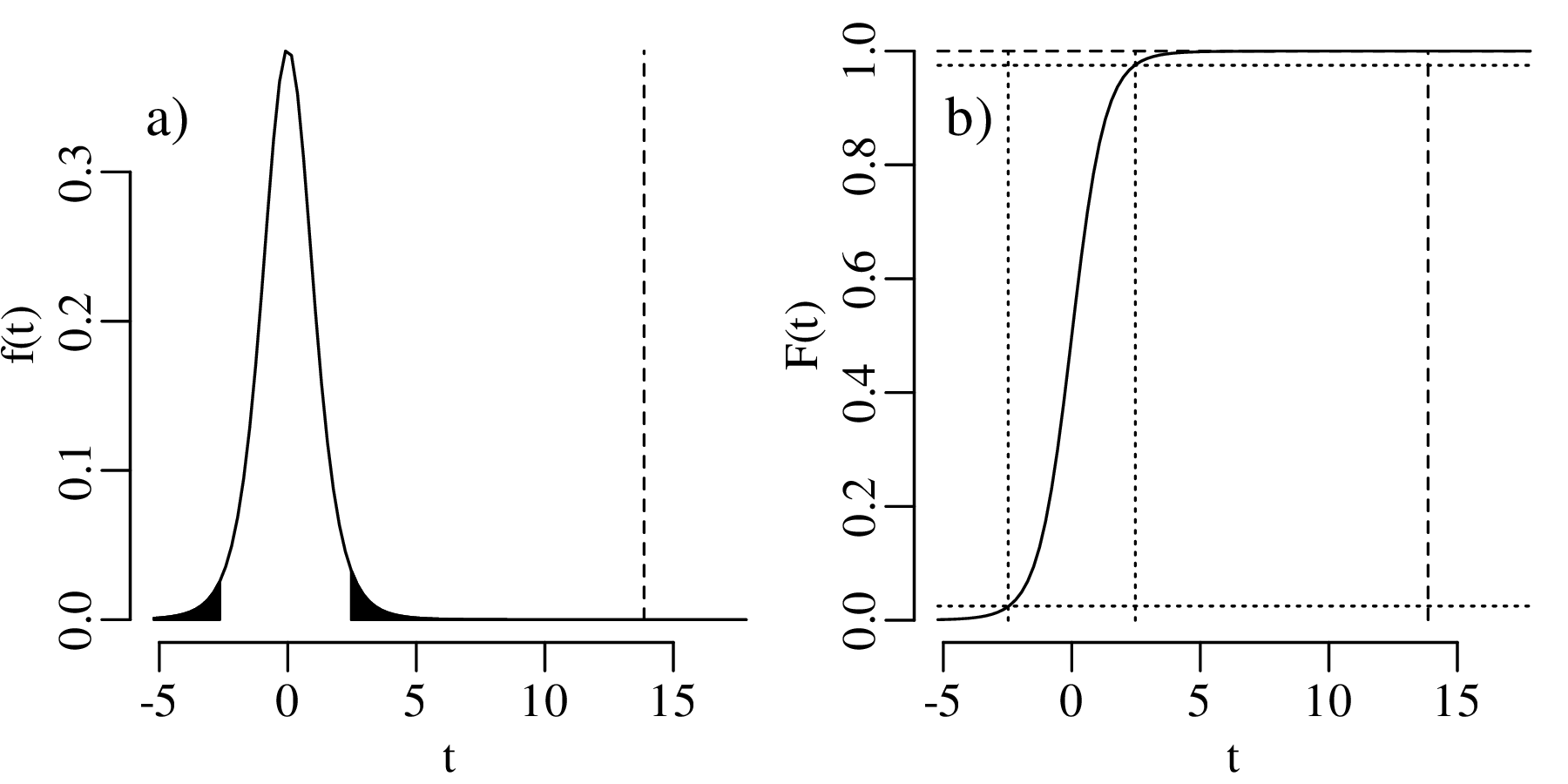

We therefore conclude that the coins are not made of pure gold. Here is a graphical representation of this test:

The comparison between the mean of a sample (of coin densities) and a particular value (19.30 g/cm3) is called a one sample t-test. If we want to compare the mean densities of two samples, then this requires a two sample t-test. For example, consider the following two collections of coins:

| coin # | 1 | 2 | 3 | 4 | 5 |

| density (1st collection) | 19.09 | 19.17 | 19.31 | 19.07 | 19.18 |

| density (2nd collection) | 19.30 | 19.33 | 19.15 | 19.32 |

The average densities of collection 1 and 2 are 19.164 g/cm3 and 19.275 g/cm3, respectively. If we assume that the two collections have the same variance, then we can test whether the difference between the two means is significant or not.

HA (alternative hypothesis):

μ1≠μ2

| (9.4) |

where 1 = 19.164, n1 = 5, 2 = 19.275, n2 = 4, and sp is the pooled standard deviation:

| (9.5) |

in which s[x1] = 0.095 and s[x2] = 0.084 are the standard deviations of the first and second coin collection, respectively. Plugging the data into equations 9.4 and 9.5 yields t = −1.857.

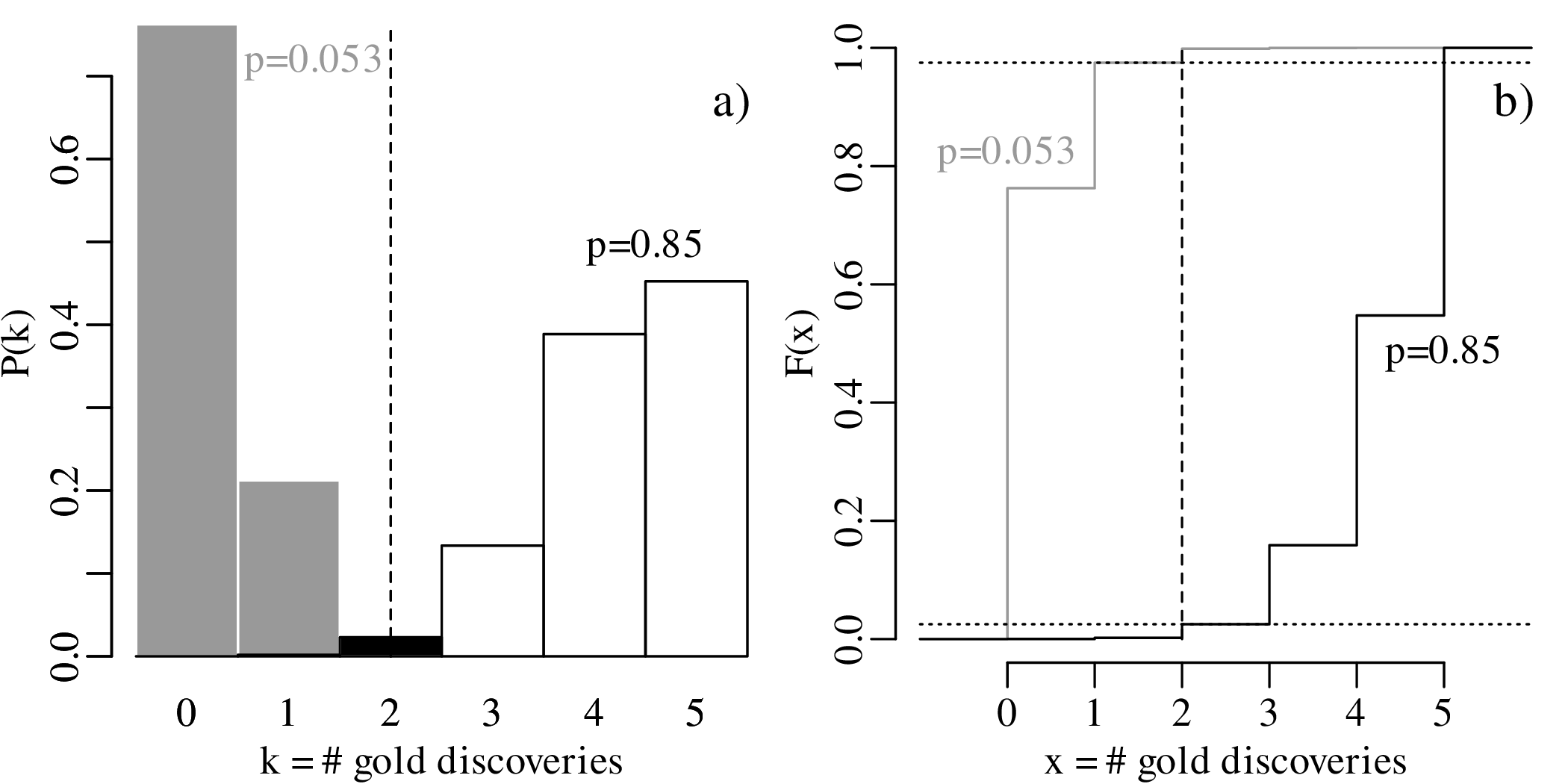

| t | -3.00 | -2.36 | -1.89 | -1.857 | -1.41 | -0.71 | 0.00 | 0.71 | 1.41 | 1.89 | 2.36 | 3.00 |

| P(T ≤ t) | 0.01 | 0.025 | 0.05 | 0.053 | 0.1 | 0.25 | 0.5 | 0.75 | 0.9 | 0.95 | 0.975 | 0.99 |

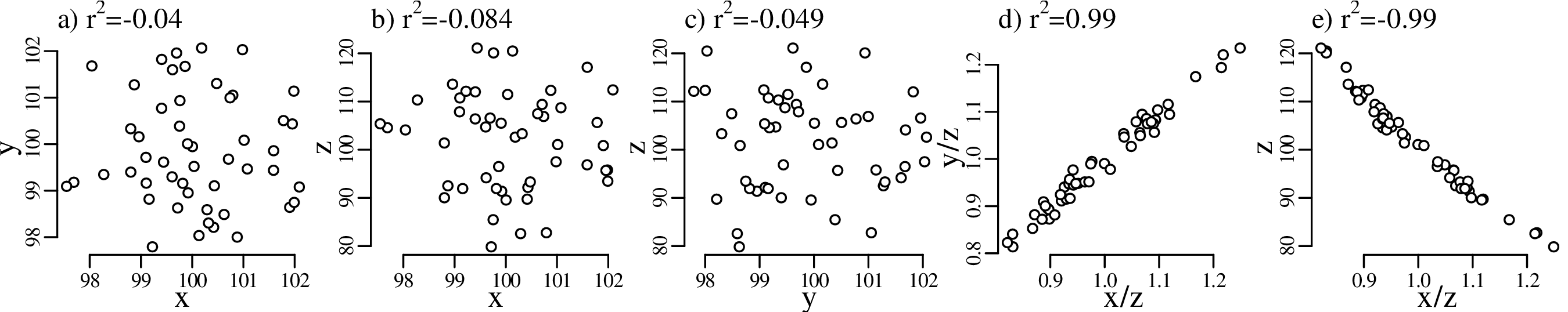

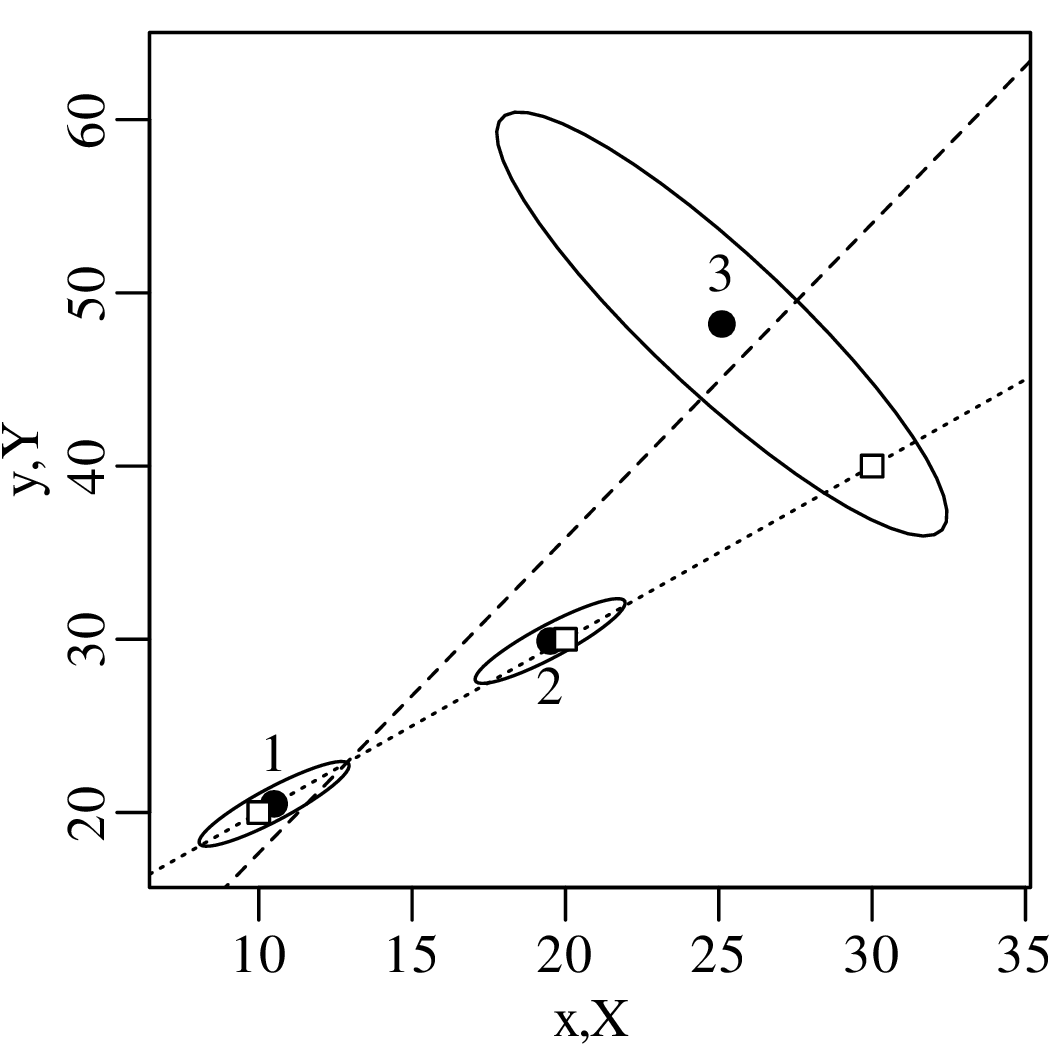

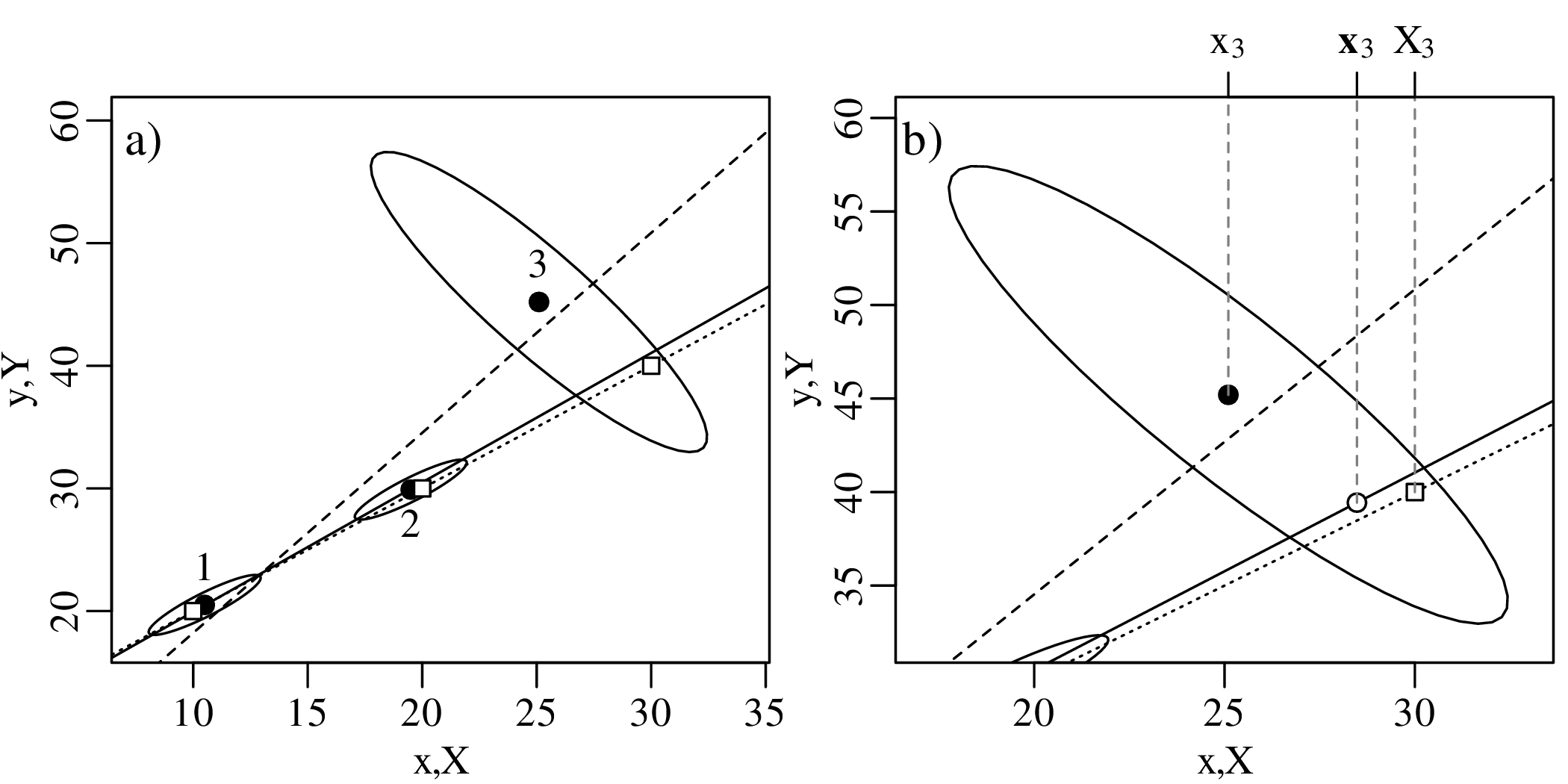

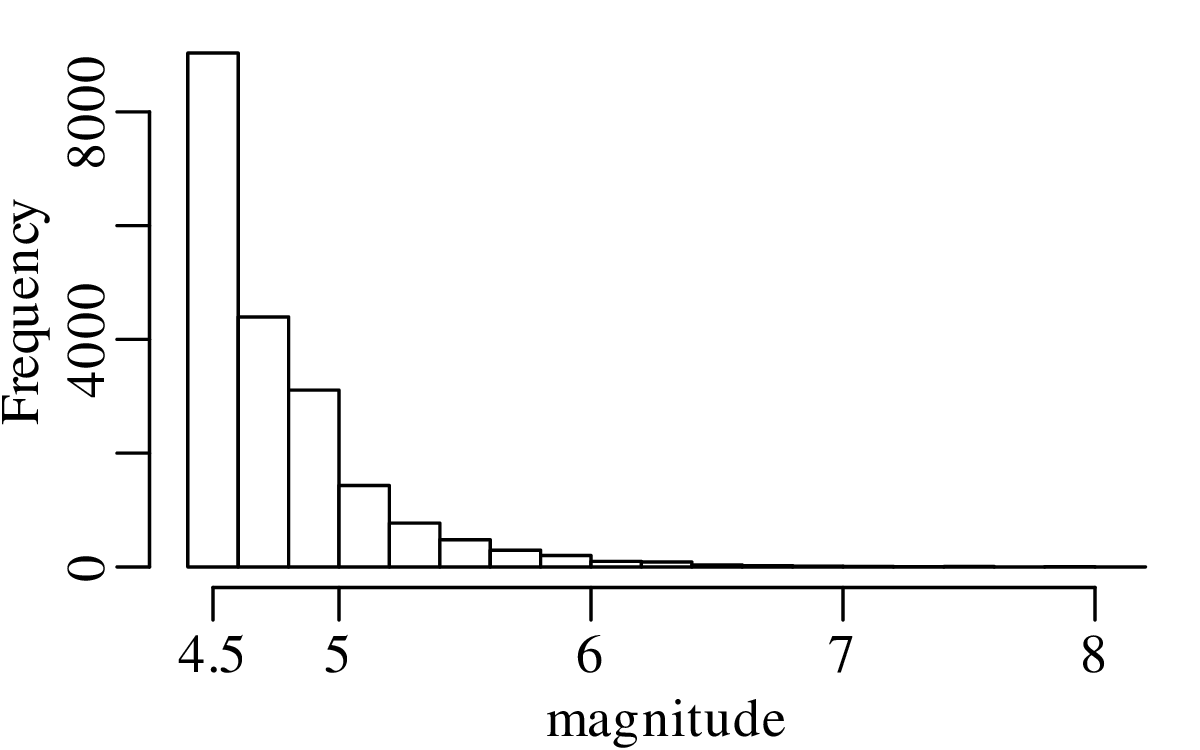

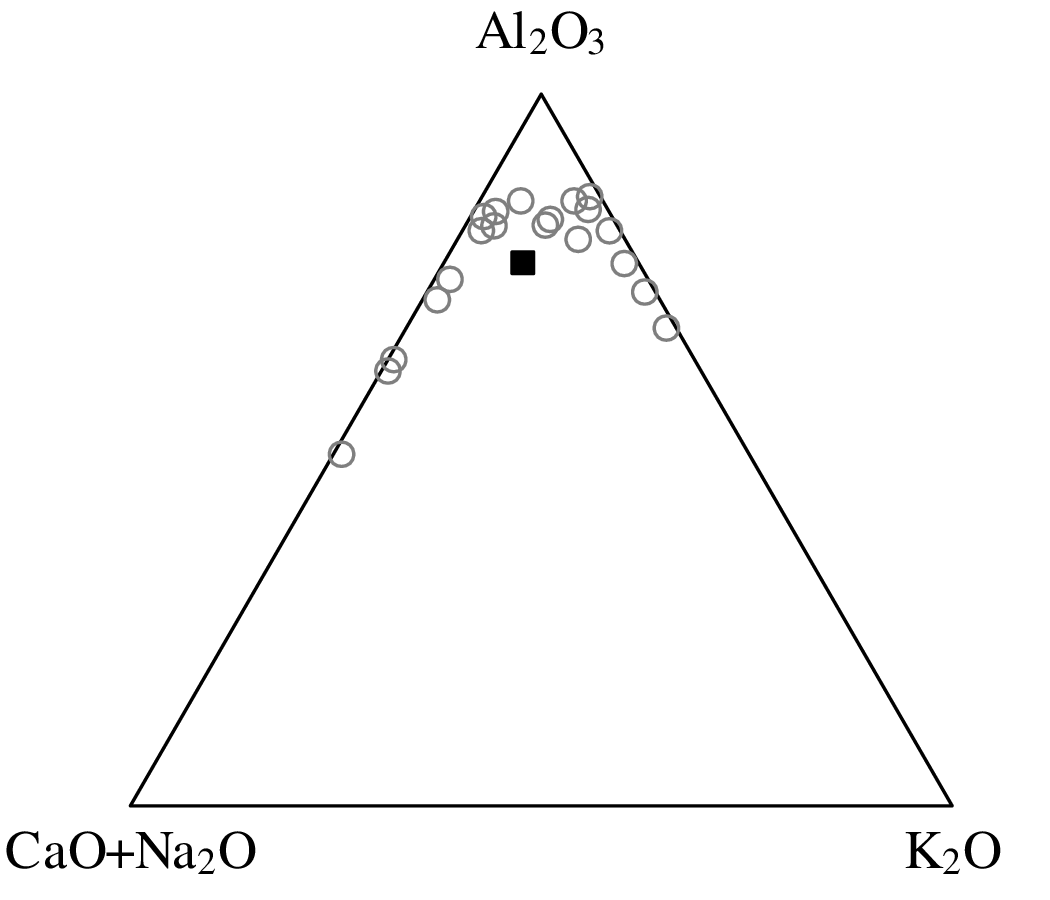

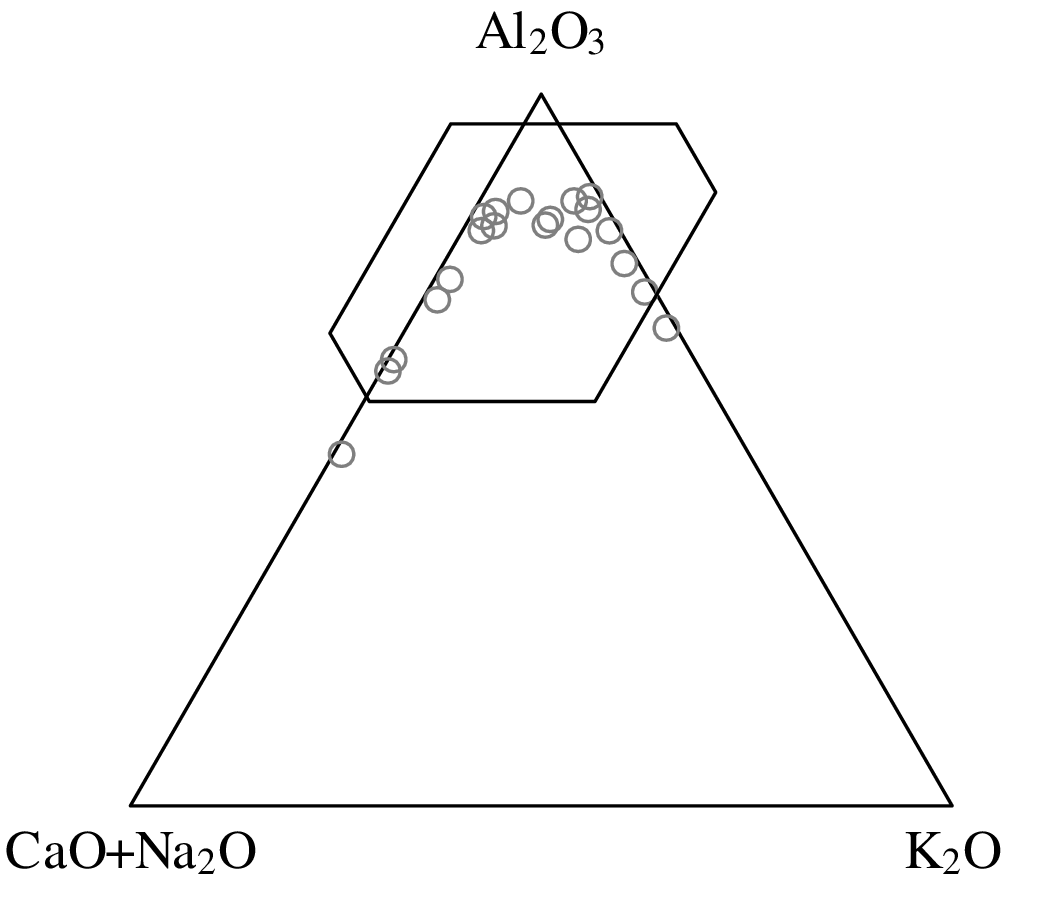

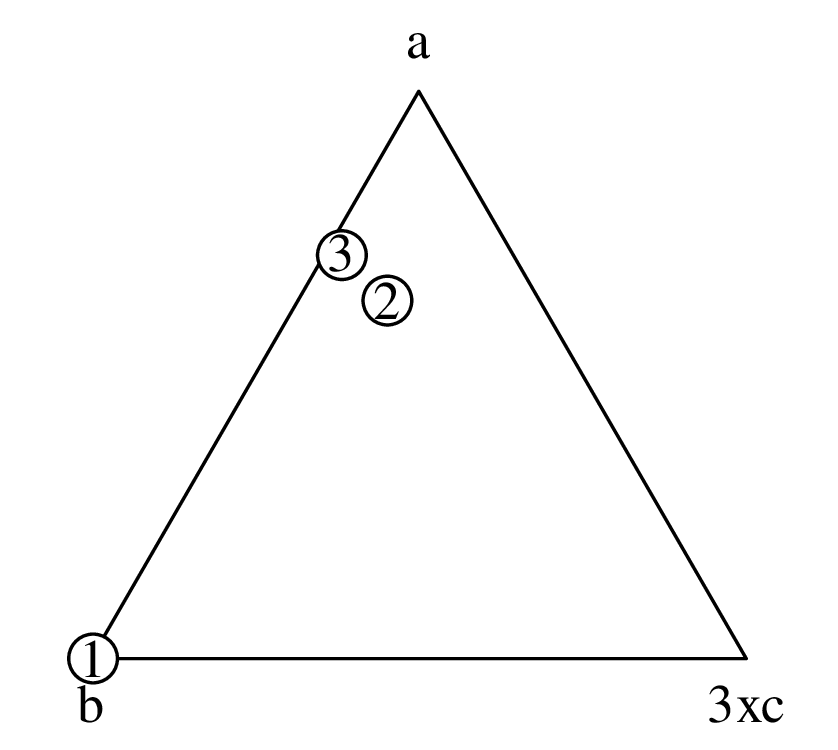

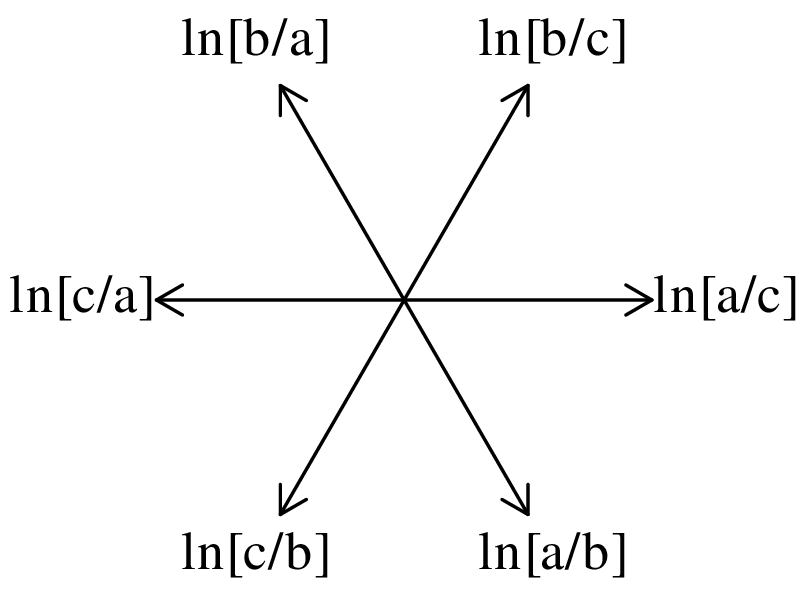

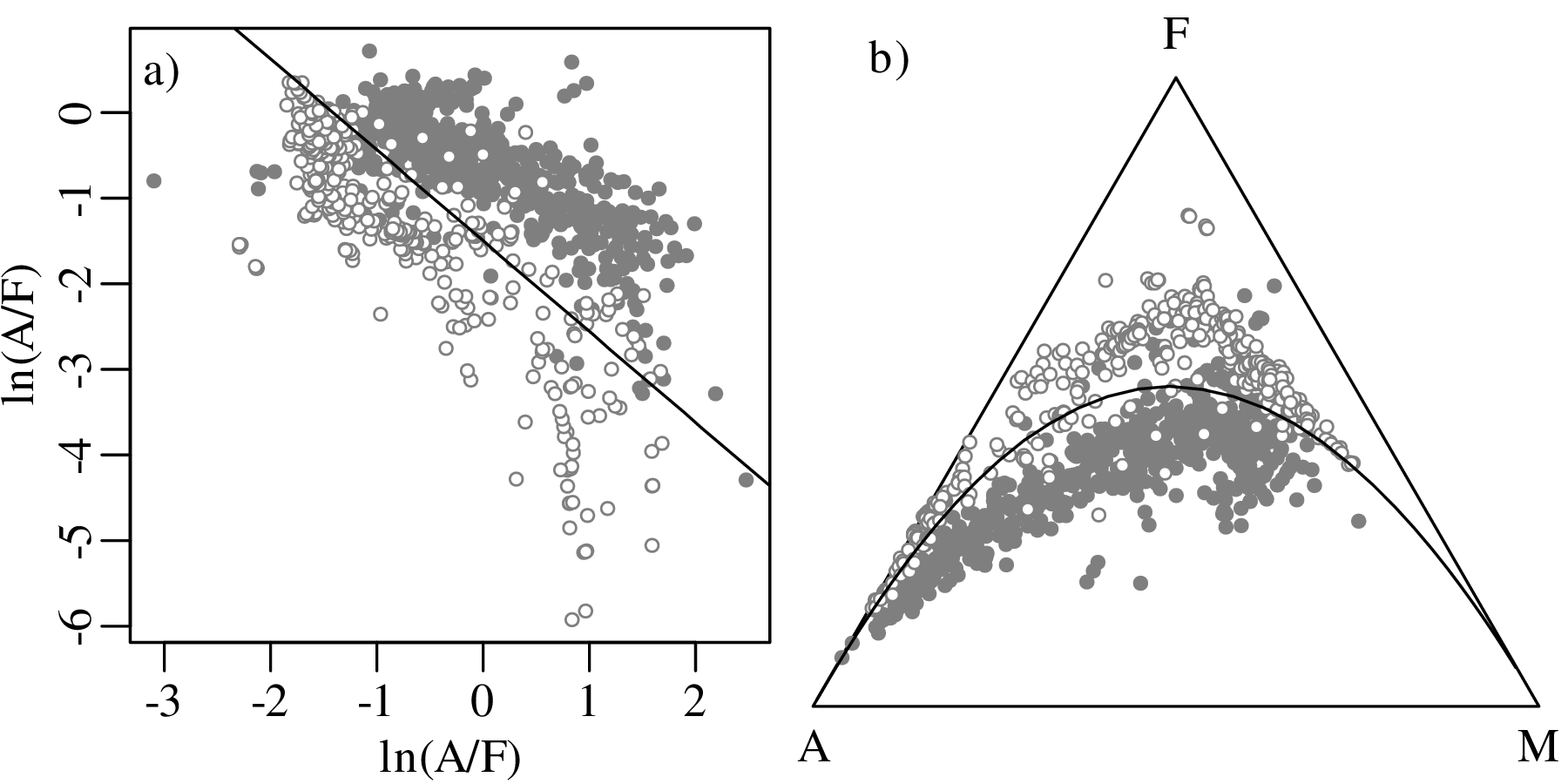

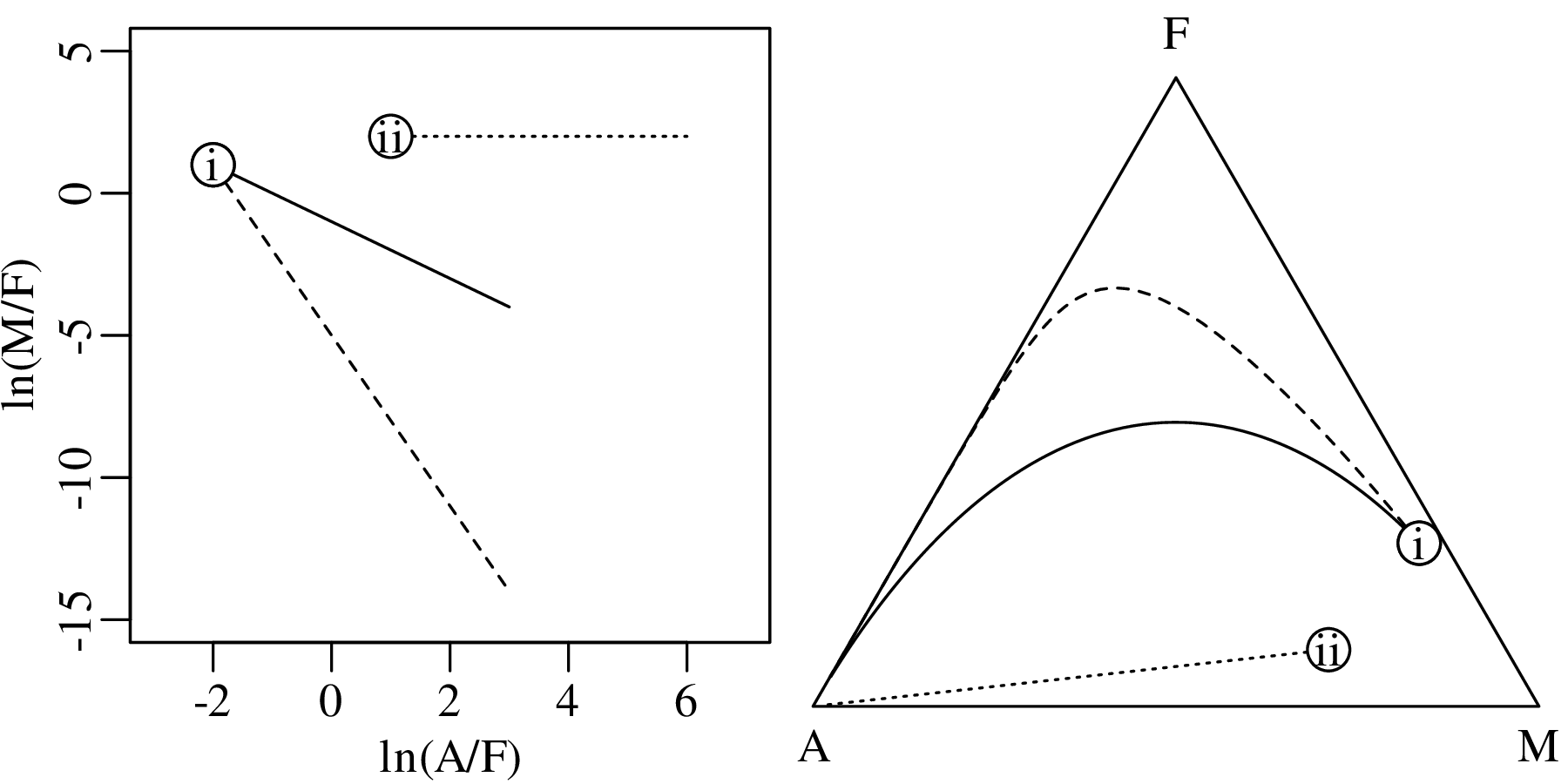

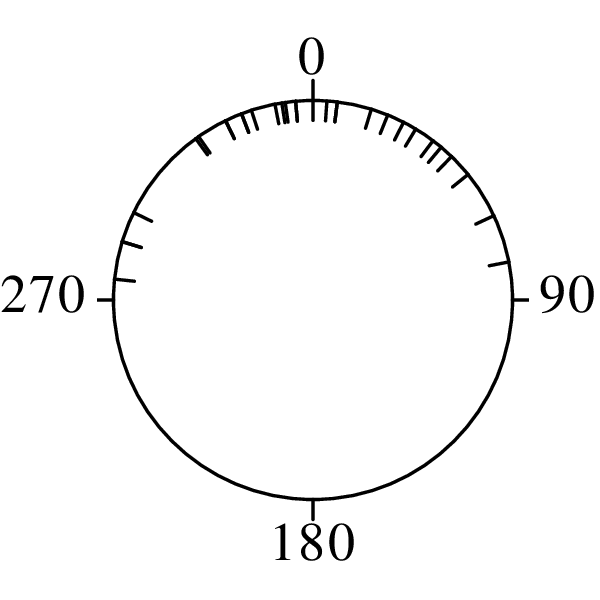

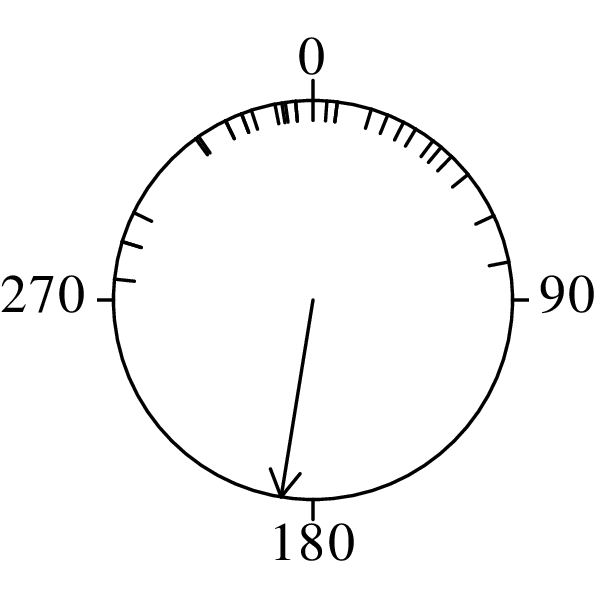

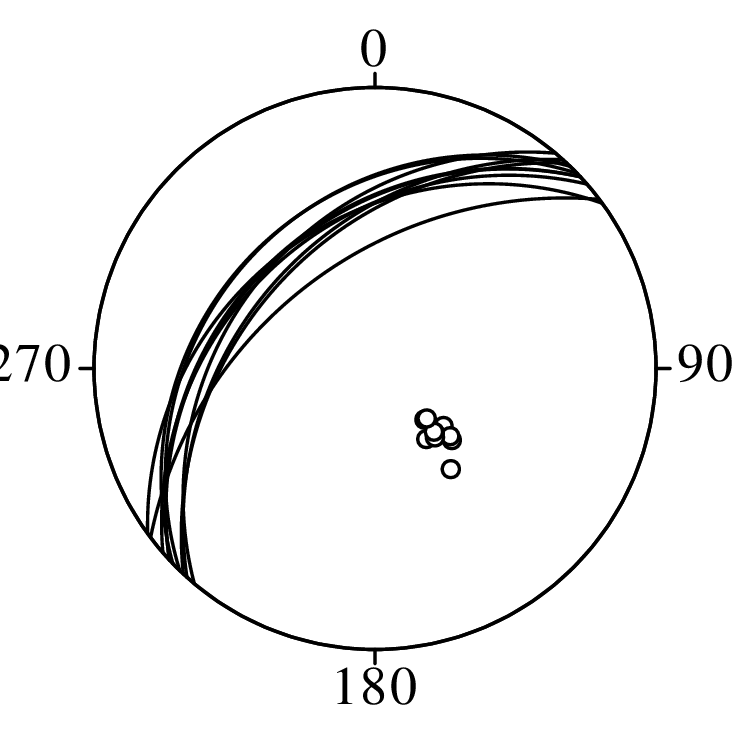

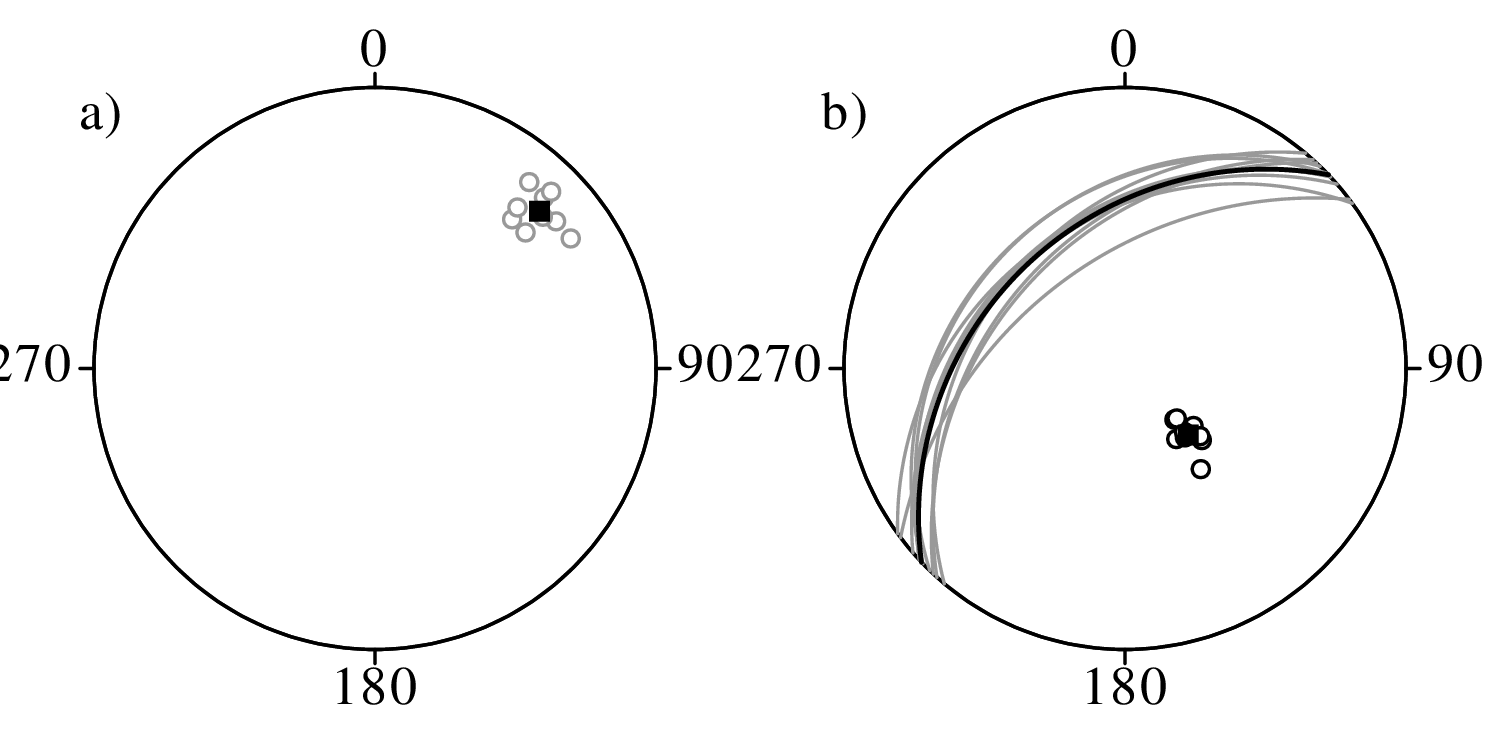

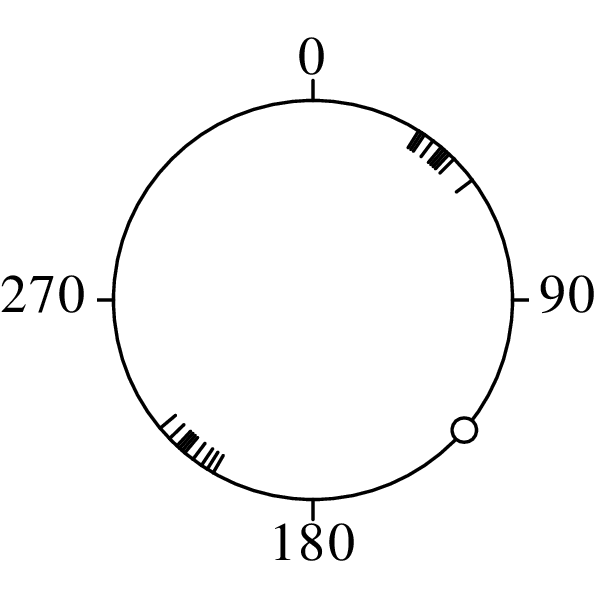

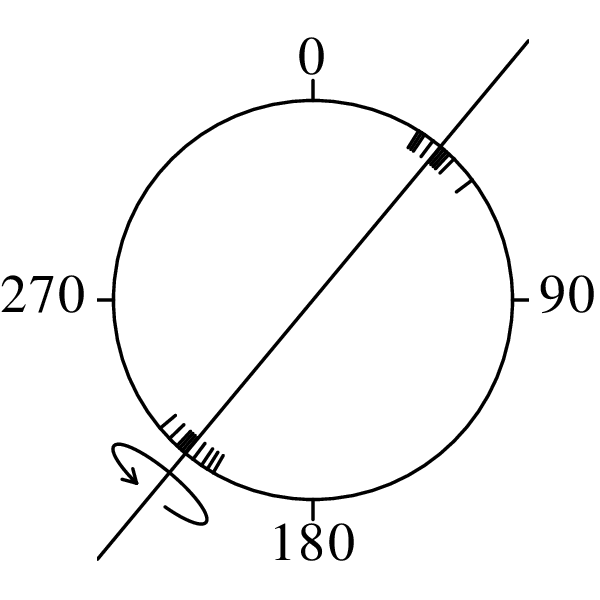

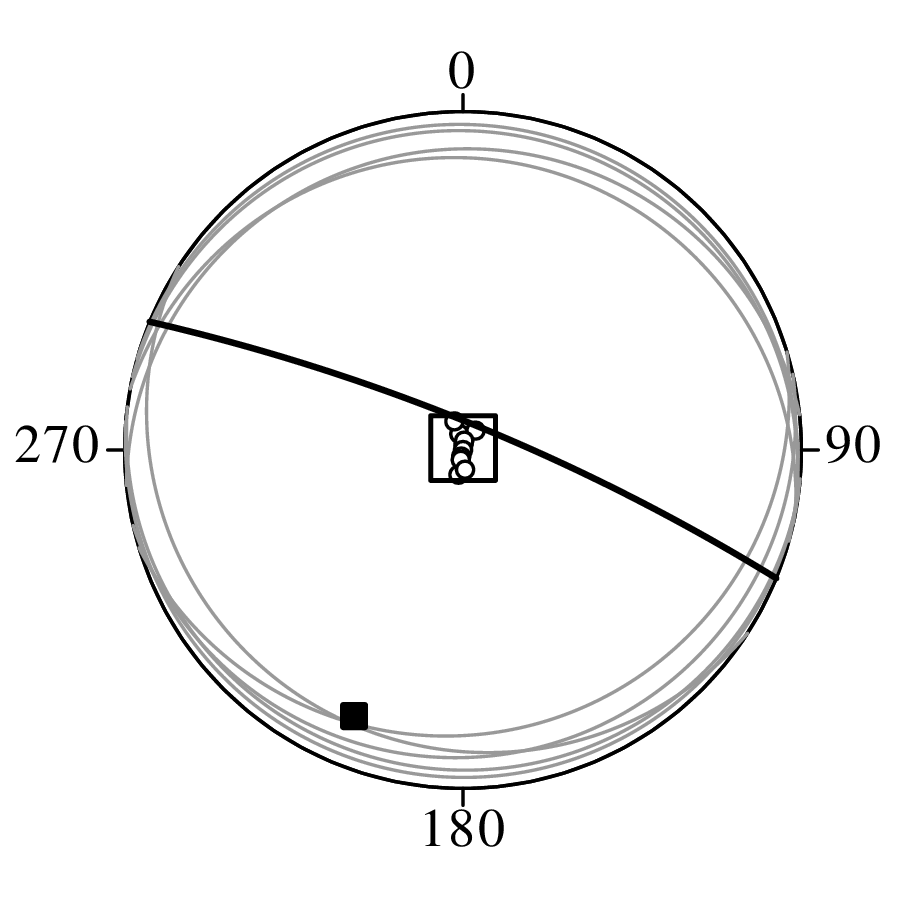

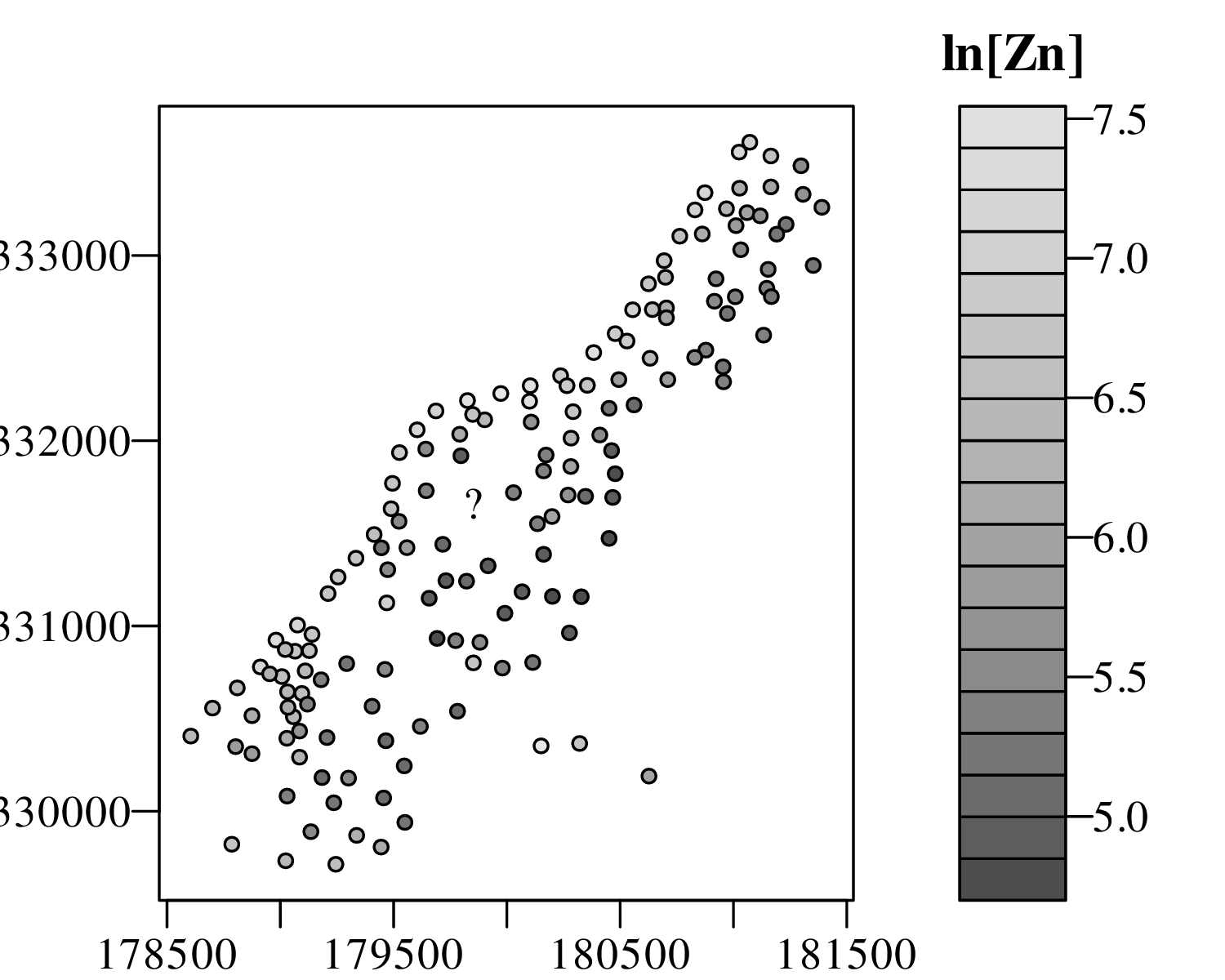

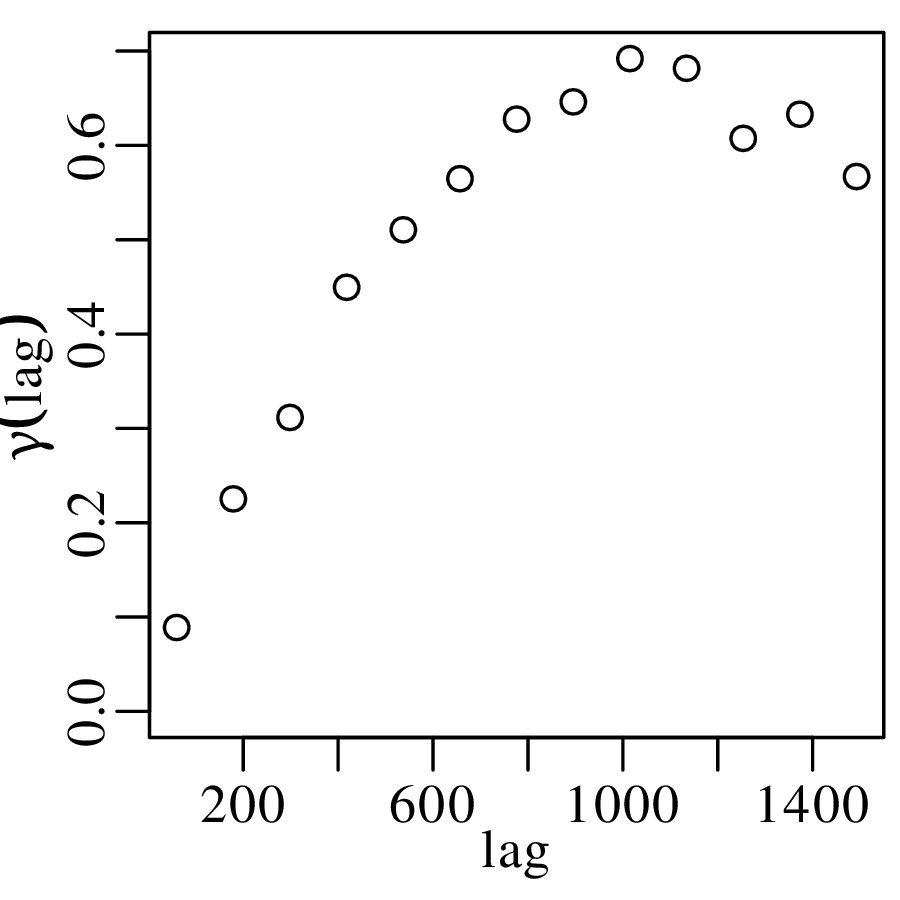

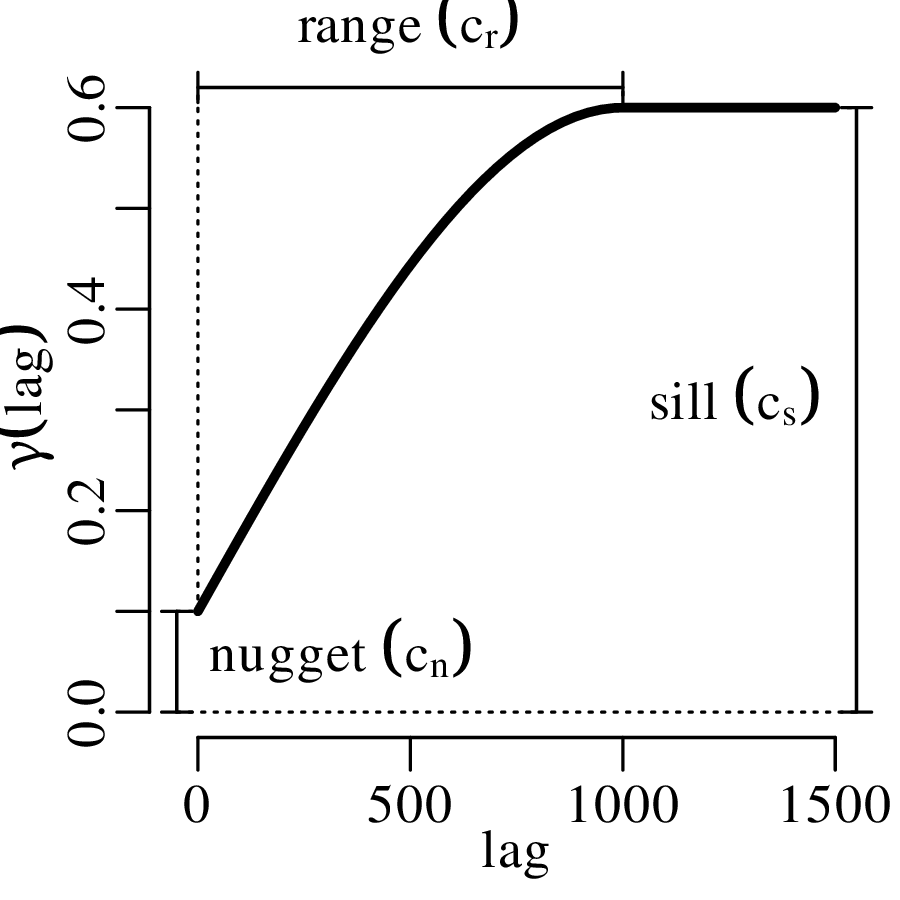

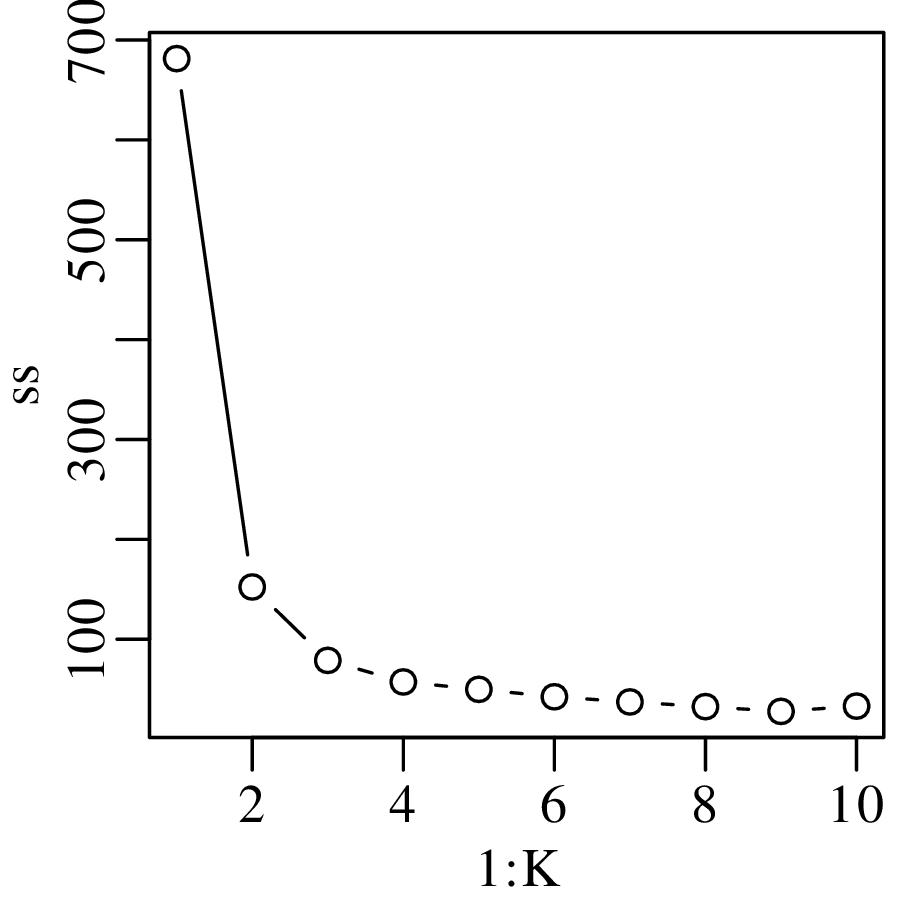

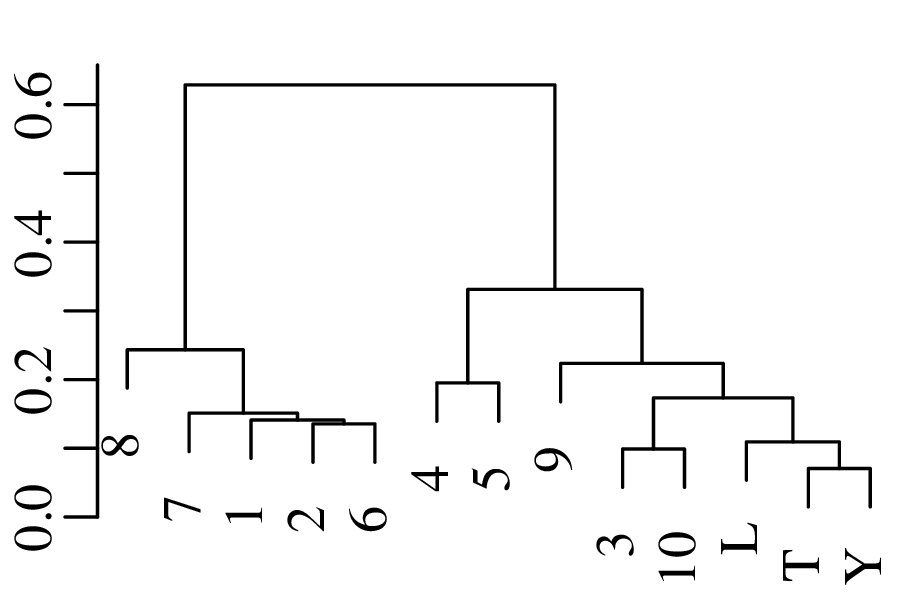

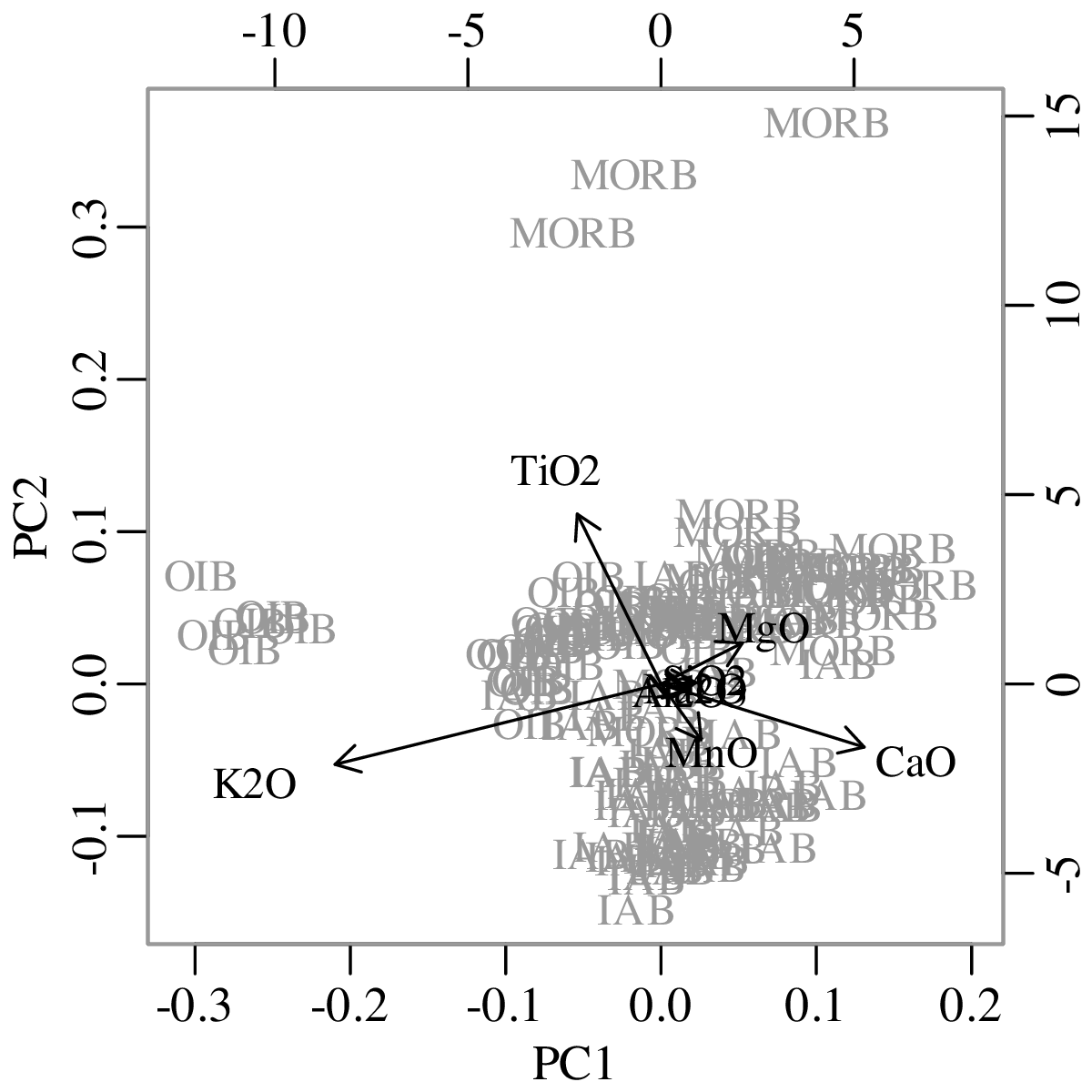

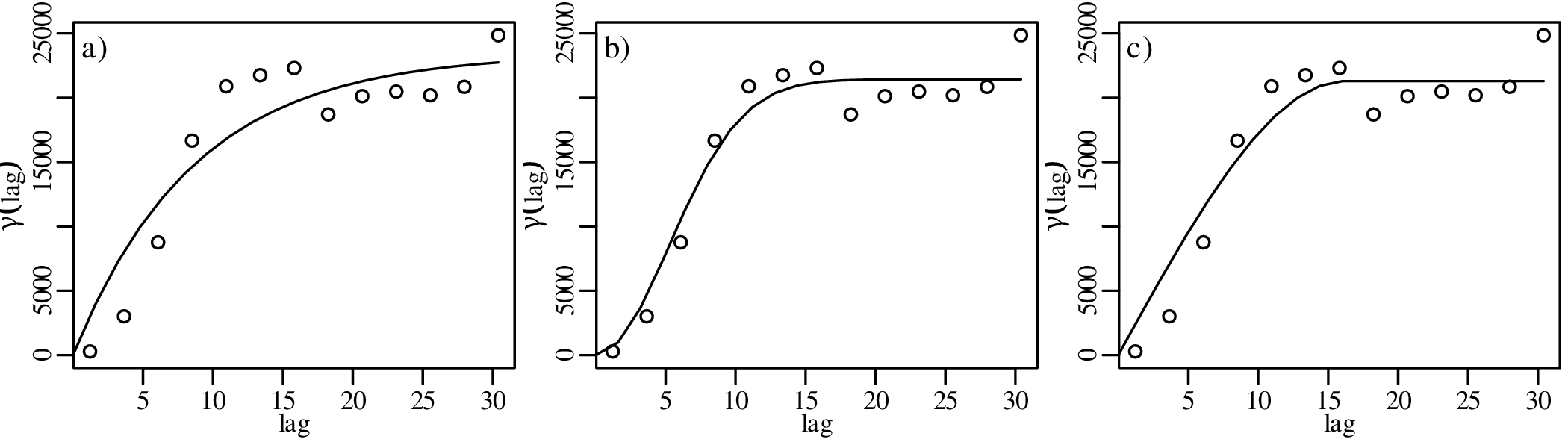

| P(T ≥ t) | 0.99 | 0.975 | 0.95 | 0.947 | 0.9 | 0.75 | 0.5 | 0.25 | 0.1 | 0.05 | 0.025 | 0.01 |